Balancing Act in Research: Strategies to Optimize Data Quality While Minimizing Volunteer Burden in Clinical Studies

This article provides a comprehensive framework for researchers and drug development professionals to navigate the critical trade-off between data integrity and participant effort in volunteer-based studies.

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to navigate the critical trade-off between data integrity and participant effort in volunteer-based studies. It explores the foundational principles defining data quality and volunteer burden, presents methodological approaches for efficient study design, offers troubleshooting strategies for common data collection challenges, and reviews validation techniques to assess optimization success. The synthesis aims to empower the design of more ethical, efficient, and scientifically robust biomedical research.

Understanding the Core Conflict: Defining Data Quality and Volunteer Burden in Clinical Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our high-throughput screening assay is yielding data with high intra-plate variability, compromising accuracy. What are the primary troubleshooting steps?

A: High variability often stems from reagent or instrumentation inconsistency. Follow this protocol:

- Instrument Calibration: Perform a full maintenance cycle on liquid handlers and readers. Log all calibration data.

- Reagent Validation: Thaw a new aliquot of critical reagents (e.g., ATP for kinase assays). Prepare a fresh batch of assay buffer.

- Control Re-test: Run a control plate (maximum, minimum, and mid-range signal controls) using the newly calibrated instrument and fresh reagents.

- Data Analysis: Calculate the Z'-factor for the control plate. A value >0.5 indicates a robust assay. If variability persists, proceed to the environmental check below.

- Environmental Check: Verify incubator temperature and CO₂ levels are stable and logged.
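The Z'-factor check in the data analysis step can be sketched in a few lines. This is a minimal illustration using the standard definition, Z' = 1 - 3(SD_max + SD_min) / |mean_max - mean_min|. The function name `z_prime` and the well readings are hypothetical and not taken from any real assay.

```python
from statistics import mean, stdev


def z_prime(max_signal, min_signal):
    """Z'-factor: 1 - 3 * (sd_max + sd_min) / |mean_max - mean_min|."""
    separation = abs(mean(max_signal) - mean(min_signal))
    if separation == 0:
        raise ValueError("Control means are identical; Z' is undefined.")
    return 1 - 3 * (stdev(max_signal) + stdev(min_signal)) / separation


# Hypothetical control-well readings (arbitrary units), not real assay data.
max_wells = [980, 1010, 1005, 995, 1010]
min_wells = [102, 98, 100, 101, 99]

zp = z_prime(max_wells, min_wells)
verdict = "robust" if zp > 0.5 else "needs further troubleshooting"
print(f"Z' = {zp:.2f} ({verdict})")
```

Only the maximum and minimum controls enter the calculation; the mid-range control serves as a separate sanity check on the dose-response window.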

Q2: We are missing critical time-point data in a longitudinal volunteer-reported outcome study, affecting completeness. How can we mitigate this during the study and handle the gaps afterward?

A: Proactive engagement and robust imputation are key.

- Proactive Mitigation Protocol:

- Implement automated, scheduled reminder messages (email, SMS) with direct links to the reporting tool.

- After a missed time-point, trigger a low-effort "catch-up" survey that asks for the most critical data points only.

- Data Handling Protocol for Gaps:

- Assess the missingness mechanism. Little's test checks whether the data are consistent with Missing Completely at Random (MCAR).

- If the data are MCAR or plausibly Missing at Random (MAR), apply multiple imputation by chained equations (MICE) to create 5-10 complete datasets.

- Perform your analysis on each dataset and pool the results using Rubin's rules.
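The pooling step can be illustrated with a short sketch of Rubin's rules. It assumes the imputation and per-dataset analyses have already been run in an established statistics package. The function name `pool_rubin` and the example estimates are hypothetical.

```python
from statistics import mean, variance


def pool_rubin(estimates, variances):
    """Pool per-imputation estimates and their squared standard errors."""
    m = len(estimates)
    if m < 2 or m != len(variances):
        raise ValueError("Need matching estimates and variances from >= 2 imputations.")
    pooled_estimate = mean(estimates)
    within = mean(variances)        # average within-imputation variance
    between = variance(estimates)   # between-imputation variance (m - 1 denominator)
    total_variance = within + (1 + 1 / m) * between
    return pooled_estimate, total_variance


# Hypothetical treatment-effect estimates from 5 imputed datasets.
estimates = [1.0, 1.2, 0.8, 1.1, 0.9]
variances = [0.04, 0.04, 0.04, 0.04, 0.04]

pooled, total_var = pool_rubin(estimates, variances)
print(f"pooled estimate = {pooled:.2f}, pooled SE = {total_var ** 0.5:.3f}")
```

The between-imputation term is what carries the extra uncertainty due to missing data; analysing a single imputed dataset would omit it and understate the standard error.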

Q3: Delays in sample processing at satellite collection sites are impacting the timeliness of biomarker analysis. What is a validated workflow to stabilize samples?

A: Implement a sample stabilization and logging protocol.

- Immediate Stabilization: Upon collection, immediately mix blood with a commercial cell-stabilizing reagent (e.g., PAXgene for RNA, Streck tubes for cfDNA). Invert 10 times.

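- Pre-processing Log: Record the exact collection time and the expected processing delay in a central log. Hold the tube at the temperature specified by the tube manufacturer (e.g., a 4°C portable cooler where refrigeration is indicated).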

- Batch Shipping: Ship stabilized samples daily (not weekly) to the core lab using a monitored cold chain logistics service.

- Core Lab Receipt: Upon receipt, log the time, perform a visual QC, and immediately process or store at -80°C.

Q4: Inconsistencies in diagnostic criteria between clinical sites are causing major data consistency errors in our multi-center trial. How can we align assessments?

A: Implement a centralized, ongoing quality assurance program.

- Standardized Training: All site raters must complete a mandatory, interactive e-learning module with video vignettes of patient assessments.

- Certification Test: Raters must achieve >90% agreement with a gold-standard rating panel on a certification test.

- Regular Re-calibration: Every 3 months, raters review and score 5 new vignettes. Scores falling below 85% agreement trigger re-training.

- Centralized Monitoring: Use a clinical data management system with embedded logic checks that flag assessments falling outside pre-defined, consistent ranges for central review.
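The agreement checks in the certification and re-calibration steps can be sketched as follows. The example computes raw percent agreement and Cohen's kappa, a chance-corrected alternative, for one rater against gold-standard labels. The labels are hypothetical, and applying the 85% re-training threshold to raw agreement rather than kappa is an assumption.

```python
from collections import Counter


def percent_agreement(rater, gold):
    """Share of vignettes where the rater matches the gold standard."""
    return sum(r == g for r, g in zip(rater, gold)) / len(gold)


def cohen_kappa(rater, gold):
    """Chance-corrected agreement between two sets of categorical ratings."""
    n = len(gold)
    observed = percent_agreement(rater, gold)
    rater_counts, gold_counts = Counter(rater), Counter(gold)
    expected = sum(rater_counts[k] * gold_counts[k] for k in rater_counts) / (n * n)
    if expected == 1:
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical severity ratings for 10 video vignettes.
gold = ["mild", "mild", "moderate", "severe", "moderate",
        "mild", "severe", "moderate", "mild", "severe"]
rater = ["mild", "mild", "mild", "severe", "moderate",
         "mild", "severe", "moderate", "mild", "severe"]

agreement = percent_agreement(rater, gold)
kappa = cohen_kappa(rater, gold)
needs_retraining = agreement < 0.85  # threshold from the re-calibration step
print(f"agreement = {agreement:.0%}, kappa = {kappa:.2f}, retrain = {needs_retraining}")
```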

Table 1: Impact of Data Quality Dimensions on Regulatory & Research Outcomes

| Dimension | Definition | Key Metric | Target for Regulatory Submissions | Common Source of Error in Volunteer Studies |

|---|---|---|---|---|

| Accuracy | Closeness to true value. | % Error, Z'-factor (>0.5), CV (<20%) | Assay validation reports showing precision & accuracy within ±15%. | Uncalibrated sensors, vague survey questions, transcription errors. |

| Completeness | Proportion of expected data captured. | % Missingness, Missed Time-points | <5% missing for primary endpoints; justification required. | High volunteer burden, poor user interface, lack of reminders. |

| Timeliness | Availability & relevance within timeframe. | Processing Lag, Sample Degradation Rate | Sample processing within validated stability window (e.g., 2h post-collection). | Infrequent data sync, batch processing delays, slow adjudication. |

| Consistency | Uniformity across datasets/sources. | Inter-rater Reliability (Kappa >0.8), Database Rule Violations | Concordance between source data and CRFs; audit trails. | Differing site protocols, uncontrolled terminology, software updates. |

Experimental Protocols

Protocol 1: Assessing the Accuracy & Precision of a Volunteer-Used Digital Health Tool Objective: Validate a consumer-grade activity tracker against a research-grade accelerometer for step count accuracy in a free-living environment. Methodology:

- Participants: Recruit 30 volunteers. Fit each with the validated research-grade device (e.g., ActiGraph GT9X) on the dominant wrist and the consumer-grade device (e.g., Fitbit) on the non-dominant wrist.

- Procedure: Instruct volunteers to proceed with normal activities for 7 days. Devices are worn continuously except during water activities.

- Data Collection: Download step count data from both devices, aligned by timestamp.

- Analysis: Calculate mean absolute percentage error (MAPE) and Bland-Altman limits of agreement between the two devices for daily step counts. Perform an intraclass correlation coefficient (ICC) analysis for consistency.
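The MAPE and Bland-Altman calculations in the analysis step can be sketched as below. The daily step counts are hypothetical, and the research-grade device is treated as the reference. The limits of agreement use the conventional bias ± 1.96 SD of the paired differences.

```python
from statistics import mean, stdev


def mape(reference, test):
    """Mean absolute percentage error of the test device vs. the reference."""
    return 100 * mean(abs(t - r) / r for r, t in zip(reference, test))


def bland_altman(reference, test):
    """Return (bias, lower limit, upper limit) for the paired differences."""
    diffs = [t - r for r, t in zip(reference, test)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Hypothetical daily step counts for one participant over four days.
research_grade = [8000, 10000, 12000, 5000]
consumer_grade = [8400, 9500, 12600, 5250]

error_pct = mape(research_grade, consumer_grade)
bias, lower, upper = bland_altman(research_grade, consumer_grade)
print(f"MAPE = {error_pct:.1f}%, bias = {bias:.1f} steps, "
      f"limits of agreement = [{lower:.0f}, {upper:.0f}]")
```

MAPE summarizes the size of the error, while the Bland-Altman bias shows its direction, so the two are best reported together.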

Protocol 2: Optimizing Survey Completeness vs. Length Trade-off Objective: Determine the maximum survey length (number of items) that maintains >85% completion rate without sacrificing data richness. Methodology:

- Design: Create a core set of 15 essential questions (Core Set). Develop three supplementary modules of 5, 10, and 15 questions each (Module A, B, C).

- Randomization: Randomly assign 400 volunteer participants into four arms: Arm 1 (Core only), Arm 2 (Core + A), Arm 3 (Core + B), Arm 4 (Core + C).

- Procedure: Administer the assigned survey via a mobile app. Log time to completion and dropout points.

- Analysis: Compare completion rates across arms using Chi-square. Use survival analysis (Kaplan-Meier) to model dropout risk as a function of survey length and item complexity.

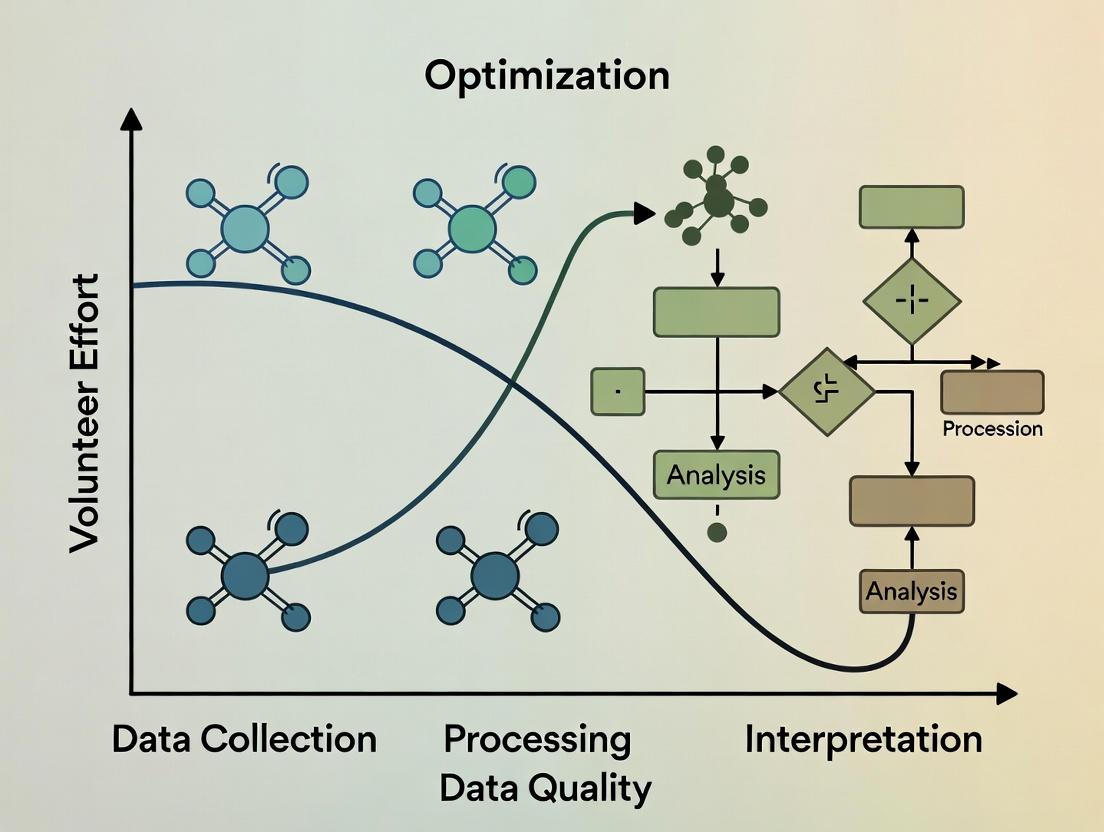

Mandatory Visualizations

Research Data Quality Optimization Workflow

Pathway from Data Capture to Regulatory Acceptance

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Biomarker Sample Quality Assurance

| Item | Function | Example Product (Brand) | Key for Data Quality Dimension |

|---|---|---|---|

| Cell-Stabilizing Blood Collection Tubes | Preserves cellular RNA/DNA profile at room temperature for days/weeks, enabling timely processing from remote sites. | PAXgene Blood RNA Tube, Streck Cell-Free DNA BCT | Timeliness, Accuracy |

| Protease & Phosphatase Inhibitor Cocktails | Added immediately to tissue or cell lysates to prevent protein degradation and loss of post-translational modification signals. | Halt Protease & Phosphatase Inhibitor Cocktail (Thermo) | Accuracy, Consistency |

| Quantitative PCR (qPCR) Master Mix with ROX Dye | Provides a passive reference signal to normalize for well-to-well volumetric variations in real-time PCR instruments, improving accuracy. | PowerUp SYBR Green Master Mix (Applied Biosystems) | Accuracy |

| Digital Calibration Standards | Precisely characterized particles or molecules used to calibrate flow cytometers and imaging systems across multiple sites and time points. | Rainbow Calibration Particles (Spherotech), URMC3 Microscope Calibration Slide | Consistency, Accuracy |

| Automated Nucleic Acid Quantitation Assay | Fluorometric assay (e.g., dsDNA HS) for precise, consistent concentration measurement of low-input samples prior to sequencing/library prep. | Qubit dsDNA HS Assay Kit (Thermo) | Completeness, Accuracy |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our study participants are reporting high frustration with the daily eDiary, leading to missed entries. How can we reduce cognitive load without compromising data granularity? A: Implement intelligent survey branching. Use initial screening questions to route participants to shorter, more relevant question sets. For example, if a participant reports "no symptom change" from baseline, skip detailed symptom severity grids. This can reduce time burden by ~40%. Validate by comparing data completeness and variance between branched and full protocols in a pilot.

Q2: Volunteer drop-out rates are spiking in our long-term observational study. How do we quantify and address the emotional cost of continued participation? A: Integrate brief, validated emotional burden scales (e.g., a single-item Perceived Burden Scale) at scheduled intervals. Correlate scores with compliance metrics. Protocol: Administer the scale monthly. If a participant's burden score increases by >30% from their baseline, trigger a support protocol: reduce contact frequency, offer a "pause" period, or provide additional context on how their data is used. This proactive approach has shown a 25% reduction in attrition in similar cohorts.
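The trigger rule described in Q2 can be sketched as a simple check on the monthly scores. The function names, the 1-10 score range, and the example values are hypothetical; only the >30% threshold comes from the protocol above.

```python
def burden_increase(baseline, current):
    """Relative change in burden score from the participant's baseline."""
    if baseline <= 0:
        raise ValueError("Baseline burden score must be positive.")
    return (current - baseline) / baseline


def needs_support(baseline, current, threshold=0.30):
    """True when the score has risen by more than the threshold."""
    return burden_increase(baseline, current) > threshold


# Hypothetical monthly scores on a 1-10 scale for one participant.
baseline_score = 4
monthly_scores = [4, 5, 6]

flags = [needs_support(baseline_score, score) for score in monthly_scores]
print(flags)
```

Because the rule is relative to each participant's own baseline, participants who start with low scores reach the trigger after small absolute changes, which may be worth reviewing in a pilot.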

Q3: We need high-frequency physiological data but are concerned about physical intrusion (wearable devices). What's the optimal trade-off between data density and volunteer comfort? A: Conduct a crossover feasibility sub-study. Protocol: Randomize participants to wear a research-grade continuous wearable (e.g., chest strap) for 7 days, followed by a consumer-grade device (e.g., smartwatch) for 7 days, or vice versa. Compare data yield (sampling rate, completeness), technical error rates, and participant comfort scores (via daily survey). Data often shows consumer devices offer >80% data yield with significantly higher comfort and adherence.

Q4: How can we accurately measure the total time commitment for a complex, multi-visit trial? A: Implement a micro-time tracking methodology. Provide participants with a simple log (digital or paper) to record not just travel and site visit time, but also pre-visit preparation (fasting, medication pauses), at-home tasks, and communication time. Average this data across your cohort to calculate the true "hidden" time cost, which is typically 1.8x the estimated core visit time.

Q5: Our lab-based cognitive tests are yielding high-quality data but low compliance. How can we adapt them for remote, unsupervised use without introducing noise? A: Redesign tests using "gamified" elements with embedded data quality checks. Protocol: Convert a standard n-back test into a short, engaging game with adaptive difficulty. Include periodic "catch trials" where a known stimulus is presented to measure attention drift. Use the device's front-facing camera (with consent) to record ambient light and gross movement as proxy measures for testing environment quality. Pilot data shows a compliance increase of 60% with a <15% increase in data variability.

Table 1: Impact of Burden Mitigation Strategies on Data Quality & Compliance

| Burden Dimension | Mitigation Strategy | Typical Reduction in Burden Metric | Impact on Data Completeness | Impact on Data Variance |

|---|---|---|---|---|

| Cognitive Load | Survey Branching | Time: -40% | +12% | No significant change |

| Time Commitment | Visit Consolidation | Total Hours: -25% | -5% | Slight increase in diurnal noise |

| Physical Intrusion | Device Downgrade | Comfort Score: +35% | -15% Data Yield | Increased +/- 5% |

| Emotional Cost | Proactive Support | Attrition: -25% | +18% for remaining participants | Not applicable |

Table 2: Measured Volunteer Burden by Study Type

| Study Type | Avg. Cognitive Load (Survey Length mins/day) | Avg. Time Commitment (Hrs/Month) | Avg. Physical Intrusion Score (1-10) | Avg. Emotional Cost Score (1-10) |

|---|---|---|---|---|

| Phase III RCT (On-site) | 15 | 20 (incl. travel) | 7.5 | 6.2 |

| Remote Observational (Digital) | 25 | 8 (at-home tasks) | 3.1 | 4.8 |

| Bio-sampling Intensive | 8 | 15 | 8.9 | 7.1 |

| Long-Term Cohort | 10 | 5 | 2.5 | 5.5 (cumulative) |

Experimental Protocol: Crossover Feasibility for Device Intrusion

Title: Protocol for Evaluating Wearable Device Burden vs. Data Yield.

Objective: To determine the optimal trade-off between physical intrusion and data quality for continuous physiological monitoring.

Design: Randomized, open-label, two-period crossover.

Participants: N=50 healthy volunteers from target demographic.

Interventions:

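- Device A (7 days): Research-grade chest strap, continuous ECG.

- Device B (7 days): Consumer-grade wrist-worn optical sensor.

- Sequence and washout: Participants are randomized to wear Device A then Device B, or Device B then Device A, with a 2-day washout between periods.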

Outcome Measures:

- Primary Burden: Mean physical intrusion score from daily 5-point Likert scale.

- Primary Data Yield: Percentage of valid, artifact-free heart rate intervals per 24-hour period.

- Secondary: Adherence (hours worn/day), comfort questionnaire, signal-to-noise ratio.

Analysis: Paired t-tests to compare burden and yield between devices. Linear mixed model to assess period and carryover effects.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Digital Burden Assessment Studies

| Item | Function & Relevance to Burden Research |

|---|---|

| Consumer-Grade Wearables (e.g., Fitbit, Apple Watch) | Enable low-intrusion, continuous passive data collection in ecological settings; critical for assessing real-world time commitment and activity. |

| Experience Sampling (ESM) Apps (e.g., mEMA, Ethica) | Platform to deliver micro-surveys at random or fixed intervals; primary tool for quantifying in-the-moment cognitive and emotional burden. |

| Perceived Burden Scale (PBS) | Validated short-form questionnaire to quantitatively assess the multidimensional burden (emotional, time, physical) from the participant's perspective. |

| Time-Use Diaries (Digital) | Frameworks for participants to log activities in real-time; essential for capturing the "hidden" time costs of study participation beyond scheduled visits. |

| Data Quality Suites (e.g., BrainBaseline, Cambridge Cognition) | Provide remote, gamified cognitive tests with built-in integrity checks (e.g., background noise detection); reduce cognitive burden while ensuring data validity. |

| Secure Video Conferencing & eConsent Platforms | Reduce time/travel burden for visits and enable complex consent processes to be broken into digestible modules, lowering initial cognitive load. |

Visualizations

Study Design: Burden vs Data Quality Trade-off

Pathway: How High Burden Threatens Study Validity

Technical Support Center

Welcome to the Data Quality-Participant Effort Technical Support Hub. This center provides targeted guidance for researchers facing the critical trade-off between data richness and participant burden, framed within the thesis of optimizing this balance for sustainable, high-quality data.

Troubleshooting Guides

Issue: High Attrition Rates in Longitudinal Studies

- Symptoms: Participant drop-off exceeds 30% after the third study visit. Follow-up compliance for daily diaries falls below 50%.

- Diagnosis: Excessive participant burden leading to disengagement.

- Solution:

- Implement Burden Scoring: Use a tool like the "Participant Burden Questionnaire" (see Table 1) to quantify effort before study launch.

- Apply Adaptive Protocols: Design studies where data collection intensity (e.g., weekly surveys vs. daily) adapts based on individual participant compliance or self-reported fatigue signals.

- Micro-randomized Trials (MRTs): For digital interventions, use MRTs to test engagement strategies (e.g., timing of prompts) with minimal added burden, optimizing adherence.

Issue: Poor Quality or Rushed Self-Reported Data

- Symptoms: Straight-lining in surveys, implausibly fast completion times, increased missing entries in ecological momentary assessment (EMA).

- Diagnosis: Participant fatigue leading to satisficing behavior.

- Solution:

- Embedded Data Quality Checks: Integrate attention-check items and response time monitoring to flag low-quality submissions in real-time.

- Dynamic Questionnaire Branching: Use logic to skip irrelevant questions, shortening task length.

- Gamification & Feedback: Introduce subtle progress bars or provide aggregate feedback (e.g., "Your responses are helping identify X pattern") to enhance intrinsic motivation without coercion.

Issue: Sensor/Device Non-Adherence in Digital Phenotyping

- Symptoms: Poor compliance with wearable device wear-time (<10 hours/day), frequent missed passive data streams.

- Diagnosis: Device burden, privacy concerns, or unclear participant value proposition.

- Solution:

- Co-Design Device Protocols: Involve patient advocates in setting realistic wear-time expectations (e.g., 12 hrs/day vs. 24).

- Transparent Data Pathways: Clearly communicate to participants what data is collected and how it is processed (see Diagram 1: Data Flow & Participant Awareness).

- Simplify Charging Logistics: Provide portable chargers or design protocols with explicit charging windows to reduce hassle.

Diagram 1: Data Flow & Participant Awareness

Frequently Asked Questions (FAQs)

Q1: How can we quantitatively estimate participant burden before starting a trial? A: Use a pre-study burden assessment framework. Score different components (time, frequency, emotional load, physical effort) and sum them for a total burden score. Correlate this with predicted adherence from pilot studies.

Table 1: Pre-Study Participant Burden Assessment Matrix

| Data Collection Modality | Time Burden (per instance) | Cognitive/Emotional Load | Physical/Logistical Effort | Burden Score (1-10) |

|---|---|---|---|---|

| 60-min Clinical Visit | 90 mins (incl. travel) | High (medical procedures) | High | 9 |

| 10-item Daily EMA | 2-3 mins | Low-Medium | Low | 3 |

| Continuous Wearable | 1 min (to don/charge) | Very Low | Medium | 2 |

| Weekly Biospecimen (Saliva) | 5 mins | Low | Medium (must remember) | 4 |
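The summation described in Q1 can be sketched using the burden scores from the matrix above. The simple sum follows the text. The frequency-weighted variant and the 4-week schedule are assumptions added for illustration, since a daily task and a one-off visit contribute very differently over time.

```python
# Burden scores (1-10) copied from the assessment matrix above.
BURDEN_SCORES = {
    "clinic_visit_60min": 9,
    "daily_ema_10_items": 3,
    "continuous_wearable": 2,
    "weekly_saliva": 4,
}

# Hypothetical schedule: instances of each modality in a 4-week period.
# Counting each day of wearable use as one instance is an assumption.
INSTANCES_PER_4_WEEKS = {
    "clinic_visit_60min": 1,
    "daily_ema_10_items": 28,
    "continuous_wearable": 28,
    "weekly_saliva": 4,
}


def total_burden(scores):
    """Unweighted sum of per-modality burden scores."""
    return sum(scores.values())


def frequency_weighted_burden(scores, instances):
    """Sum of score x number of instances for each modality."""
    return sum(scores[name] * instances[name] for name in scores)


simple_total = total_burden(BURDEN_SCORES)
weighted_total = frequency_weighted_burden(BURDEN_SCORES, INSTANCES_PER_4_WEEKS)
print(f"simple total = {simple_total}, 4-week weighted total = {weighted_total}")
```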

Q2: What experimental protocols can dynamically balance data density and participant fatigue? A: Adaptive Trial Designs and Just-in-Time Adaptive Interventions (JITAIs) are key methodologies.

- JITAI Protocol Overview: The goal is to deliver the right intervention component at the right time, based on a participant's state, while minimizing unnecessary interactions.

- Streaming Data Input: Continuous or frequent data (e.g., GPS, step count, self-reported mood) serve as proximal outcomes.

- Decision Points: Pre-specified moments (e.g., 8 PM daily) where an algorithm assesses if a participant is in a "vulnerable state" (e.g., sedentary, low mood).

- Intervention Randomization: If the "vulnerable state" threshold is met, the system randomly assigns (or selects) an intervention (e.g., a motivational message) vs. no message. This tests the intervention's efficacy in context.

- Burden Optimization: The algorithm's sensitivity can be tuned to avoid over-solicitation, directly trading off intervention dose/data points for participant fatigue.
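The decision-point logic outlined above can be sketched as a single function. The thresholds, the weekly prompt cap, and the 50% randomization probability are all hypothetical tuning parameters, not values from a validated JITAI.

```python
import random


def is_vulnerable(steps_today, mood, step_floor=3000, mood_floor=4):
    """Hypothetical rule: sedentary day or low self-reported mood (1-10)."""
    return steps_today < step_floor or mood < mood_floor


def decide(steps_today, mood, prompts_this_week, rng, p_treat=0.5, weekly_cap=5):
    """Return the action taken at one decision point."""
    if not is_vulnerable(steps_today, mood):
        return "no_action"
    if prompts_this_week >= weekly_cap:
        # Burden optimization: stop prompting once the weekly cap is reached.
        return "suppressed_for_burden"
    # Randomize between message and no message to test efficacy in context.
    return "send_message" if rng.random() < p_treat else "no_message"


rng = random.Random(2024)  # seeded so the allocation can be audited
print(decide(steps_today=9500, mood=7, prompts_this_week=0, rng=rng))
print(decide(steps_today=1200, mood=3, prompts_this_week=5, rng=rng))
print(decide(steps_today=1200, mood=3, prompts_this_week=1, rng=rng))
```

Lowering `weekly_cap` or raising the vulnerability thresholds reduces the number of prompts a participant receives, at the cost of fewer randomized decision points for analysis.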

Q3: Our data shows a clear decline in response accuracy after week 4. How do we statistically adjust for this fatigue effect?

A: Incorporate Time-on-Study as a covariate in your longitudinal mixed-effects models. For example:

Response_Accuracy_ij = β0 + β1*(Condition_ij) + β2*(Week_Number_ij) + u_i + e_ij

Where i indexes participants and j indexes assessments, β1 is the condition effect, β2 estimates the linear effect of time on study (fatigue), u_i is the random intercept for each participant, and e_ij is the residual error. Adjusting for the overall decline in this way lets you estimate the condition effect net of fatigue.
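As a quick check on the size of the fatigue slope β2 before fitting the full model, a within-participant estimator can be computed by hand. This is not the mixed-effects model itself: it is a simpler fixed-effects (within) estimator that removes each participant's own mean, and it assumes condition does not change within a participant. The data are simulated, and a dedicated mixed-model package should be used for the actual analysis.

```python
from collections import defaultdict
from statistics import mean


def within_slope(rows):
    """Slope of accuracy on week after centering within each participant.

    rows: iterable of (participant_id, week_number, accuracy).
    """
    by_participant = defaultdict(list)
    for participant, week, accuracy in rows:
        by_participant[participant].append((week, accuracy))

    numerator = denominator = 0.0
    for observations in by_participant.values():
        week_mean = mean(w for w, _ in observations)
        acc_mean = mean(a for _, a in observations)
        for w, a in observations:
            numerator += (w - week_mean) * (a - acc_mean)
            denominator += (w - week_mean) ** 2
    if denominator == 0:
        raise ValueError("Week number does not vary within participants.")
    return numerator / denominator


# Simulated data: two participants with different baselines and the same
# decline of 0.02 accuracy units per week (hypothetical values).
rows = [
    (pid, week, base - 0.02 * week)
    for pid, base in (("P01", 0.90), ("P02", 0.80))
    for week in range(1, 7)
]

slope = within_slope(rows)
print(f"estimated weekly change in accuracy = {slope:.3f}")
```

Centering within participant plays the role that the random intercept u_i plays in the model above: differences in baseline accuracy between participants do not contaminate the time slope.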

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Optimizing the Data-Burden Trade-off

| Tool / Reagent | Function in Research | Role in Managing Trade-off |

|---|---|---|

| Digital Phenotyping Platforms (e.g., Beiwe, RADAR-base) | Open-source frameworks for passive (GPS, accelerometer) and active (EMA) data collection via smartphones. | Enable low-burden, continuous data collection in naturalistic settings, reducing need for clinic visits. |

| Consent & Transparency Tools (e.g., "Dynamic Consent" portals) | Digital platforms allowing participants to view, manage, and adjust their data sharing preferences over time. | Builds trust, reduces perceived burden of data misuse anxiety, potentially improving retention. |

| Burden Quantification Surveys (e.g., PBQ, Perceived Burden Scale) | Validated questionnaires administered during trials to measure subjective burden. | Provides real-time metrics to identify breaking points and trigger protocol adaptations. |

| Adaptive Randomization Software (e.g., R AdaptiveDesign package) | Algorithms that adjust allocation probabilities or intervention densities based on accumulating data or participant state. | Core engine for JITAIs and adaptive protocols that minimize unnecessary participant effort. |

| Data Quality Suites (e.g., dataquieR in R) | Software pipelines that perform automated quality checks (missingness, variability, paradoxical responding). | Identifies fatigue-related data degradation early, allowing for corrective contact or statistical control. |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: Our sensor-based adherence data shows high variance, potentially compromising trial validity. How can we improve data quality without overburdening participants? A: High variance often stems from inconsistent device use. Implement a tiered engagement protocol:

- Passive Optimization: First, deploy software algorithms (e.g., anomaly detection) to filter non-physiological artifacts from sensor data without participant contact.

- Low-Intensity Nudge: If artifacts persist, trigger an automated, friendly app notification reminding of proper device placement.

- Personalized Support: Only for persistent issues, initiate a brief, supportive call from the study coordinator. This minimizes effort while safeguarding data integrity.

Q2: Participant dropout rates are increasing in our long-term observational study, threatening data continuity. What are effective, ethical retention strategies? A: Retention is a key trade-off between longitudinal data quality and participant burden. Evidence-based strategies include:

Table 1: Participant Retention Strategies & Impact on Burden

| Strategy | Implementation | Expected Impact on Retention | Participant Burden Level |

|---|---|---|---|

| Micro-incentives | Small, periodic thank-you gifts or compensation milestones. | +10-15% | Low |

| Feedback Loops | Share aggregated, anonymized study findings with participants. | +5-10% | Very Low |

| Flexible Scheduling | Allow mobile app-based data entry within a wide time window. | +8-12% | Low |

| Reduced Contact Frequency | Switch from weekly to bi-weekly check-ins for stable cohorts. | May stabilize rates | Significantly Reduced |

Q3: How do we validate self-reported questionnaire data against objective biometrics without breaching trust? A: Use a transparent, consent-driven methodology. During enrollment, explicitly request permission to compare data types for validation. The analysis protocol should:

- Correlate self-reported mood scores (e.g., PHQ-9) with objective sleep patterns from wearable devices.

- Key Reagent Solution: Utilize de-identification hashing algorithms to link datasets while protecting identity, ensuring analysis occurs on pseudonymized data only.

- Present this validation plan in the informed consent form to uphold ethical transparency.
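The linkage step can be sketched with a keyed hash from the Python standard library. HMAC-SHA256 is used here as one reasonable choice of hashing scheme, not a method the protocol specifies. The participant IDs, scores, and secret key are hypothetical, and in practice the key would be held by a data custodian rather than written into code.

```python
import hashlib
import hmac


def pseudonymize(participant_id: str, secret_key: bytes) -> str:
    """Keyed hash of the participant ID (HMAC-SHA256, hex encoded)."""
    return hmac.new(secret_key, participant_id.encode("utf-8"),
                    hashlib.sha256).hexdigest()


# Hypothetical key and source datasets keyed by raw participant ID.
SECRET_KEY = b"replace-with-a-key-from-secure-storage"
phq9_scores = {"SITE01-0001": 12, "SITE01-0002": 5}
sleep_hours = {"SITE01-0001": 5.4, "SITE01-0002": 7.8}

# Build the analysis dataset on pseudonyms only.
linked = {
    pseudonymize(pid, SECRET_KEY): (phq9_scores[pid], sleep_hours[pid])
    for pid in phq9_scores.keys() & sleep_hours.keys()
}
print(len(linked), "linked records")
```

A keyed hash is preferred over a plain hash because short, structured IDs can otherwise be recovered by hashing every possible ID and comparing the results.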

Experimental Protocol: Validating Subjective vs. Objective Data

- Objective: Assess correlation between self-reported fatigue and actigraphy data.

- Method:

- Cohort: 100 participants from chronic condition study.

- Tools: Daily 1-question fatigue VAS (Visual Analog Scale) via app; wrist-worn actigraph.

- Duration: 30 days.

- Analysis: Compute correlation coefficient (Pearson's r) between daily VAS score and actigraph-derived total sleep time & resting heart rate. Statistical significance set at p < 0.05.

- Ethical Guard: Participants can opt-out of this specific analysis while remaining in the main study.
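The correlation in the analysis step can be sketched with a hand-written Pearson's r. The fatigue and sleep values are hypothetical and represent a few days for a single participant. Computing the p-value is left to a statistics package.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("Need two sequences of equal length >= 2.")
    mean_x, mean_y = mean(x), mean(y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("Correlation is undefined for a constant sequence.")
    return covariance / (spread_x * spread_y)


# Hypothetical daily values: fatigue VAS (0-100) and total sleep time (hours).
fatigue_vas = [20, 40, 60, 80, 50]
sleep_hours = [8.0, 7.0, 6.5, 5.0, 6.5]

r = pearson_r(fatigue_vas, sleep_hours)
print(f"r = {r:.2f}")
```

Daily observations from the same participant are not independent, so pooling all participant-days into one correlation overstates precision; computing r per participant or using a repeated-measures approach avoids this.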

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Digital Phenotyping & Engagement

| Item / Solution | Function | Example in Practice |

|---|---|---|

| FHIR (Fast Healthcare Interoperability Resources) Standards | Enables secure, standardized data exchange between apps, devices, and EHRs. | Harmonizing patient-reported outcomes from an app with clinical lab data. |

| Federated Learning Algorithms | Trains machine learning models across decentralized devices without sharing raw data. | Developing a global predictive model for adherence while keeping individual data on participants' phones. |

| eConsent Platforms with Multimedia | Enhances participant understanding through interactive, video-based consent forms. | Ensuring true informed consent for complex data sharing and validation protocols. |

| Behavioral "Nudge" Engines | Delivers automated, personalized prompts based on participant behavior patterns. | Sending a reminder to complete a survey only when app usage indicates low-burden timing. |

Technical Support Center: Troubleshooting PROs in DCTs

Thesis Context: This support center addresses common technical and operational challenges in the context of research focused on optimizing the trade-offs between data completeness/quality and volunteer (patient) effort in decentralized clinical trials utilizing PRO measures.

FAQs & Troubleshooting Guides

Q1: In our DCT, PRO completion rates dropped by over 40% after the first month. What are the primary technical and human-factor causes? A: Common causes include:

- Application Fatigue: Overly frequent notifications or complex PRO interfaces.

- Technical Glitches: PRO surveys not loading or failing to submit on certain mobile OS versions.

- Lack of Integration: PRO data not syncing with wearables data, making the effort seem disjointed.

- Battery Drain: The trial app is perceived to excessively drain device battery.

- Troubleshooting Protocol:

- Analyze backend compliance data by device type and OS.

- Deploy a short, anonymous in-app survey to the non-compliant cohort asking about the primary barrier.

- Check server logs for failed submission APIs around the time of scheduled reminders.

- Pilot a simplified PRO version with a subset of participants.

Q2: How do we validate that a PRO collected via a personal smartphone in a DCT is equivalent to data collected on a provisioned device or in-clinic? A: Execute a controlled validation sub-study.

- Protocol: Recruit a small cohort (n=50-100) from your trial population. Have each participant complete the same PRO assessment three ways in a randomized order within a 24-hour window: 1) On their personal device via the DCT app, 2) On a standardized, provisioned tablet, and 3) Via a paper form (the traditional gold standard). Collect metadata (device type, OS, completion time).

- Analysis: Use Intraclass Correlation Coefficient (ICC) for agreement and multivariate analysis to identify if device type is a significant variable influencing score variance.

Q3: Our DCT platform collects PROs, wearable data, and eCOA. How can we technically triage missing data: is it a patient compliance issue or a system integration failure? A: Implement a diagnostic workflow.

Diagram: Triage Workflow for Missing PRO Data in DCT

Q4: What are the key technical specifications for ensuring PRO instrument adherence to FDA guidelines when delivered via a DCT app? A: The system must ensure:

- Data Integrity: End-to-end encryption with audit trails. No local caching of unsubmitted responses.

- Precision in Timing: Timestamps for question presentation, modification, and final submission.

- Content Fidelity: The PRO must be rendered exactly as validated, with no alteration of question text, order, or response scale layout across devices.

- Accessibility: Compliance with WCAG 2.1 AA standards for participants with disabilities.

Table 1: Common PRO Compliance Issues in DCTs & Mitigation Impact

| Issue | Typical Incidence Rate in DCTs | Mitigation Strategy | Observed Improvement in Compliance |

|---|---|---|---|

| Notification Overload | 25-40% of participants mute alerts | Personalized reminder scheduling based on user activity | +15-25% |

| Long PRO Burden | 50%+ drop-off for forms >10 min | Micro-randomization to test shorter, adaptive forms | +30% completion rate |

| Technical Friction | 5-15% of submissions fail | Pre-submission local validation & auto-save drafts | +12% submission success |

| Low Digital Literacy | Cohort-dependent (up to 20%) | In-app video tutorials & one-tap helpline | +18% in affected cohort |

Table 2: Data Quality Indicators: DCT vs. Traditional Site-Based PRO Collection

| Data Quality Metric | Traditional Site-Based (Paper) | DCT (Digital PRO) | Notes |

|---|---|---|---|

| Missing Item Level Data | 5-10% | <2% | Digital forms can enforce completeness. |

| Transcription Errors | Potential (manual entry) | Near Zero | Direct digital capture. |

| Ecological Validity | Lower (clinic environment) | Higher (home environment) | Context influences responses. |

| Score Variance | Often Lower | Can be Higher | Reflects real-world fluctuation. |

Experimental Protocol: Measuring the Effort-Quality Trade-off

Title: Protocol for a Micro-Randomized Trial (MRT) to Optimize PRO Reminder Strategies.

Objective: To determine the effect of different reminder message framings ("for your health" vs. "for the study") and delivery times on PRO compliance and data quality (measured by response variance and correlation with wearable activity data).

Methodology:

- Participants: 300 participants enrolled in a DCT for chronic condition X.

- Intervention: Over a 4-week period, participants are micro-randomized daily to receive one of four PRO reminder push notifications:

- Arm A: Altruistic Frame, Morning

- Arm B: Altruistic Frame, Evening

- Arm C: Personal Health Frame, Morning

- Arm D: Personal Health Frame, Evening

- Primary Outcome: PRO completion rate within 2 hours of reminder.

- Secondary Outcome: Within-person standard deviation of PRO scores, adjusted for wearable-measured activity.

- Analysis: Use generalized estimating equations (GEE) to model the proximal effect of reminder type on daily completion, accounting for participant-level factors and temporal trends.
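The daily micro-randomization across the four arms can be sketched as below. Equal allocation probability and the seeded generator are assumptions, and the participant IDs are hypothetical. A production system would also log each assignment with a timestamp for the audit trail.

```python
import random
from itertools import product

# Four arms: message framing crossed with delivery time.
ARMS = list(product(("altruistic", "personal_health"), ("morning", "evening")))


def randomize_schedule(participant_ids, n_days, seed):
    """Assign each participant-day to one arm with equal probability."""
    rng = random.Random(seed)  # seeded so the schedule is reproducible
    return {
        (pid, day): rng.choice(ARMS)
        for pid in participant_ids
        for day in range(1, n_days + 1)
    }


# Hypothetical participants over the 4-week (28-day) intervention period.
schedule = randomize_schedule(["P001", "P002", "P003"], n_days=28, seed=7)
print(schedule[("P001", 1)])
```

Because every participant is re-randomized each day, each person contributes observations to all four arms, which is what allows the GEE analysis to estimate proximal effects of framing and timing.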

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a PRO-DCT Research Stack

| Item | Function in PRO/DCT Research | Example/Note |

|---|---|---|

| eCOA/PRO Platform | Validated system for delivering questionnaires electronically. | Must be 21 CFR Part 11 compliant and support linguistic validation. |

| Wearable Data Aggregator | Device-agnostic API platform to collect actigraphy, heart rate, etc. | Enables correlation of PRO scores with objective physiological measures. |

| Micro-Randomization Engine | Software for assigning time-varying interventions at the individual level. | Core tool for optimizing engagement strategies in real-time. |

| Digital Phenotyping SDK | Passive data collection from smartphones (anonymized usage, location patterns). | Provides context for PRO responses (e.g., social activity level). |

| Participant Feedback Module | Integrated tool for in-app surveys and experience sampling. | Critical for understanding the volunteer effort perspective. |

| Data Lake with Audit Trail | Centralized repository for all trial data (PRO, wearable, device metadata). | Allows for complex, integrated analysis of quality-effort trade-offs. |

Diagram Title: PRO Data Flow & Optimization Loop in a DCT System

Practical Frameworks for Study Design: Methodologies to Maximize Data Yield Per Participant Effort

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our early-phase proof-of-concept study, our pharmacokinetic (PK) data shows high variability, making it difficult to draw clear conclusions. Are we collecting too many blood samples, potentially increasing stress and variability?

A: This is a common issue. A 'Fit-for-Purpose' approach suggests aligning sampling intensity with the phase's primary objective. For early-phase studies (Phase I/IIa), the goal is often to confirm exposure and assess safety signals, not to define the precise PK profile.

- Protocol Suggestion: Implement a sparse sampling strategy. Instead of 15-20 samples per subject, use a population PK approach with 2-4 strategically timed samples per subject across a larger cohort. This reduces burden per volunteer while still enabling model-based population parameter estimation.

- Solution: Review your primary endpoint. If it is "Proof of sufficient exposure to test the mechanism," a sparse design is valid. Use the following optimized protocol.

Experimental Protocol: Sparse PK Sampling for Early-Phase Studies

- Cohort Design: Enroll 24 subjects into 4 dose groups (n=6 each).

- Dosing: Administer single ascending oral doses.

- Sparse Sampling Schedule: For each subject, collect 4 blood samples at pre-determined windows (e.g., pre-dose, 0.5-2h post, 2-6h post, and 6-24h post-dose). Assign specific times randomly within these windows across the population to cover the profile.

- Bioanalysis: Use a validated LC-MS/MS method for compound quantification.

- Analysis: Perform population pharmacokinetic (PopPK) modeling (e.g., using NONMEM or Monolix) to estimate key parameters (CL/F, Vd/F, ka) and their variability.
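
The sparse schedule above can be sketched as follows. This is a minimal example that assumes uniform random draws within each window and codes the pre-dose sample as 0 h; the function name `sparse_schedule` and the seed are hypothetical, and a real design would normally choose times using optimal-design or simulation tools.

```python
import random

# Post-dose sampling windows in hours, taken from the schedule above.
WINDOWS_H = [(0.5, 2.0), (2.0, 6.0), (6.0, 24.0)]


def sparse_schedule(subject_ids, seed=7):
    """Map each subject to 4 sample times: pre-dose (0 h) plus one per window."""
    rng = random.Random(seed)
    schedule = {}
    for sid in subject_ids:
        # Uniform sampling within a window is an assumption of this sketch.
        post_dose = [round(rng.uniform(lo, hi), 2) for lo, hi in WINDOWS_H]
        schedule[sid] = [0.0] + post_dose
    return schedule


plan = sparse_schedule(range(1, 25))  # 24 subjects, as in the cohort design
```

Because each subject receives different times, the pooled samples cover the full concentration-time profile even though no individual contributes more than four draws.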

Q2: We need to demonstrate target engagement for our novel kinase inhibitor in a Phase II trial. What is the most volunteer-friendly way to collect robust pharmacodynamic (PD) data without overly invasive serial biopsies?

A: The principle is to use the least invasive method sufficient to reliably measure the PD biomarker correlated with your clinical endpoint.

- Protocol Suggestion: Employ a surrogate tissue approach combined with imaging. If the target is expressed in peripheral blood mononuclear cells (PBMCs), use serial blood draws instead of tissue biopsies. Couple this with a functional imaging endpoint (e.g., FDG-PET for metabolic response) at baseline and key time points.

- Solution: A tiered biomarker strategy reduces patient burden while collecting multi-faceted evidence.

Experimental Protocol: Tiered PD Assessment for a Kinase Inhibitor

- Primary PD (Blood-based): Collect blood samples at pre-dose, 2h, 24h, and Day 15. Isolate PBMCs, lyse, and measure phospho-protein target inhibition via Western Blot or phospho-flow cytometry.

- Secondary/Exploratory PD (Imaging): Perform FDG-PET scans at screening and on Day 15 of treatment to assess metabolic response in the target tissue.

- Correlative Analysis: Link the degree and duration of target inhibition in PBMCs with changes in imaging metrics and early efficacy signals.

Q3: For our large Phase IIIb outcomes study, how do we balance the need for long-term safety data with minimizing the burden on thousands of participants who may be on drug for years?

A: In late-phase studies, the 'Fit-for-Purpose' philosophy shifts towards efficiency at scale and collecting data directly relevant to the benefit-risk profile in a real-world setting.

- Protocol Suggestion: Implement risk-based monitoring and electronic patient-reported outcomes (ePRO). Schedule clinic visits at longer intervals (e.g., every 6 months) for core safety labs and efficacy assessment, while using ePRO diaries on secure tablets/smartphones for frequent symptom and quality-of-life tracking between visits.

- Solution: Streamline in-clinic data collection and decentralize routine reporting.

Experimental Protocol: Hybrid Data Collection for Phase IIIb/IV Study

- In-Clinic Visits (Quarterly/Biannual): Conduct physical exams, comprehensive metabolic panel (CMP), complete blood count (CBC), adverse event (AE) assessment, and primary efficacy endpoint measurement.

- Remote ePRO Collection (Weekly): Patients complete validated symptom questionnaires and global assessment scales via a compliant ePRO app.

- Passive Data (Optional): Integrate data from wearable devices (e.g., step count, heart rate) with patient consent.

- Statistical Analysis: Use mixed models for repeated measures (MMRM) to analyze longitudinal ePRO data, correlating trends with clinic-based outcomes.

Table 1: Recommended Data Collection Intensity by Clinical Trial Phase

| Study Phase | Primary Goal | Recommended Sampling/Data Intensity | Key Trade-off Optimized |

|---|---|---|---|

| Phase I | Safety, Tolerability, PK | Intensive PK (full profile in limited subjects) → Sparse PK (across population) | Volunteer burden vs. Model-informed PK |

| Phase IIa | Proof of Concept, PD | Invasive serial biopsies → Surrogate tissue + imaging | Invasiveness vs. Evidence of target modulation |

| Phase IIb/III | Efficacy, Dose-response | Frequent clinic visits → Hybrid (clinic + ePRO) | Data richness vs. Participant retention & real-world relevance |

| Phase IIIb/IV | Long-term Outcomes, Safety | Traditional CRF-heavy monitoring → Risk-based + remote monitoring | Data volume vs. Operational cost & ecological validity |

Table 2: Comparison of Biomarker Collection Methods

| Method | Data Richness | Volunteer Burden/Cost | Best Fit Phase | Key Consideration |

|---|---|---|---|---|

| Serial Tumor Biopsy | Very High (direct tissue) | Very High (invasive, risky) | Phase I/II (PoC) | Ethical limits, sample feasibility |

| Sparse Blood PK | Moderate (population estimates) | Low (few blood draws) | Phase I/II | Requires robust PopPK modeling |

| PBMC PD Analysis | Moderate-High (surrogate) | Low-Moderate (blood draw) | Phase I/II | Must validate correlation to tissue |

| Imaging (PET/MRI) | High (anatomic/functional) | Moderate (cost, time) | Phase II/III | Excellent for longitudinal, non-invasive assessment |

| ePRO/Wearables | Moderate (subjective/continuous) | Very Low (remote) | Phase III/IV | Validation, patient compliance critical |

Diagrams

Diagram 1: Fit-for-Purpose Decision Pathway

Diagram 2: Tiered Biomarker Strategy Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for 'Fit-for-Purpose' Biomarker Studies

| Item | Function & Application | 'Fit-for-Purpose' Consideration |

|---|---|---|

| EDTA or Heparin Blood Collection Tubes | Stabilizes blood for plasma/PBMC isolation. Foundation for PK and surrogate PD assays. | Enables lower-volume, multi-analyte draws from a single sample, minimizing burden. |

| Lymphoprep or equivalent | Density gradient medium for isolating viable PBMCs from whole blood. | Critical for high-quality cellular PD biomarkers from a routine blood draw. |

| Phospho-Specific Flow Cytometry Antibodies | Multiplexed detection of phosphorylated signaling proteins in single cells. | Maximizes information from limited PBMC samples; more efficient than Western for sparse sampling. |

| Validated LC-MS/MS Assay Kits | Quantitative bioanalysis of drug concentrations in plasma. | High sensitivity allows for smaller sample volumes and reliable sparse PK data. |

| ePRO Platform (e.g., Medidata Rave, Castor EDC) | Secure, compliant electronic data capture for patient-reported outcomes. | Reduces clinic visit frequency, improves data quality and compliance in late-phase studies. |

| Population PK/PD Modeling Software (NONMEM, Monolix) | Analyzes sparse, unevenly sampled data to estimate population parameters. | The essential analytical tool for making robust inferences from reduced-intensity sampling designs. |

Leveraging Adaptive and Dynamic Study Designs to Reduce Unnecessary Data Points

Troubleshooting Guides & FAQs

Q1: Our interim analysis for an adaptive trial suggests futility, but the conditional power is borderline. Should we stop early or continue?

A: This is a classic trade-off between volunteer effort and data quality. Follow this protocol:

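- Calculate Conditional Power: Compute conditional power from the interim test statistic and information fraction, under either the originally assumed effect or the current trend. (In a Bayesian design, the analogous quantity is predictive power, which averages over the current posterior distribution.) Use a threshold defined in the charter (e.g., < 20% for futility).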

- Check Pre-Planned Rules: Consult the trial's Statistical Analysis Plan (SAP). Deviating from pre-specified rules can introduce bias.

- Assess Operational Burden: Evaluate the remaining volunteer visits, procedures, and resource allocation against the marginal gain in information.

- Recommendation: If conditional power is below the pre-defined threshold, recommending stoppage for futility is statistically sound and ethically aligns with reducing unnecessary volunteer burden.
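
For orientation, conditional power can be sketched with the B-value formulation. The example below is a simplified calculation that assumes a single final test at a one-sided alpha of 0.025 and ignores any interim efficacy boundaries; the function name and defaults are illustrative, and the trial's SAP remains the authority on the exact method.

```python
from math import sqrt
from statistics import NormalDist


def conditional_power(z_interim, info_frac, alpha_one_sided=0.025, drift=None):
    """Conditional power via the B-value formulation.

    z_interim : interim Z statistic
    info_frac : information fraction t, strictly between 0 and 1
    drift     : assumed expected final Z; defaults to the current-trend estimate
    """
    if not 0.0 < info_frac < 1.0:
        raise ValueError("info_frac must be strictly between 0 and 1")
    nd = NormalDist()
    b_value = z_interim * sqrt(info_frac)  # B(t) = Z(t) * sqrt(t)
    if drift is None:
        drift = b_value / info_frac  # current-trend assumption
    z_crit = nd.inv_cdf(1.0 - alpha_one_sided)
    expected_final = b_value + drift * (1.0 - info_frac)
    return 1.0 - nd.cdf((z_crit - expected_final) / sqrt(1.0 - info_frac))


cp_current_trend = conditional_power(z_interim=0.5, info_frac=0.5)
```

Passing `drift` explicitly gives conditional power under the design effect instead of the current trend; reporting both is a common way to show how sensitive a borderline result is to that assumption.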

Q2: During a Bayesian adaptive design, how do we dynamically adjust randomization probabilities without compromising blinding?

A: Use a centralized, automated randomization system (IRT). The protocol is:

- System Setup: The IRT interfaces with the trial's data capture system, receiving endpoint data.

- Independent Analysis: A separate, unblinded statistical team runs a pre-specified Bayesian model on the accumulating data.

- Probability Calculation: The model calculates new allocation probabilities (e.g., favoring the better-performing arm).

- System Update: The unblinded team updates only the randomization algorithm within the IRT.

- Blinded Execution: Investigators and patients remain blinded. The IRT assigns the next patient using the updated probabilities, maintaining trial integrity.
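
The probability calculation step might look like the sketch below for a binary endpoint. It assumes independent Beta(1, 1) priors, estimates each arm's probability of being best by Monte Carlo, and mixes in a minimum allocation so no arm is starved. The function name, the 10% floor, and the example counts are all hypothetical and not taken from any specific IRT product.

```python
import random


def allocation_probabilities(successes, failures, n_draws=20000, seed=11, floor=0.1):
    """Allocation probabilities from Beta posteriors with a guaranteed minimum.

    P(arm is best) is estimated by sampling Beta(1 + s, 1 + f) for each arm.
    Each arm then receives floor + (1 - k * floor) * P(best), which sums to 1.
    """
    k = len(successes)
    if k * floor > 1.0:
        raise ValueError("floor is too large for the number of arms")
    rng = random.Random(seed)
    wins = [0] * k
    for _ in range(n_draws):
        draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
        wins[draws.index(max(draws))] += 1
    p_best = [w / n_draws for w in wins]
    return [floor + (1.0 - k * floor) * p for p in p_best]


# Example: control 12/30 responders, experimental 18/30 responders.
probs = allocation_probabilities(successes=[12, 18], failures=[18, 12])
```

Only the resulting probabilities need to reach the IRT; the arm-level counts stay behind the unblinded firewall.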

Q3: Our platform trial's shared control arm data is becoming heterogeneous due to different experimental arms. How do we maintain data quality?

A: Implement robust dynamic borrowing models. Methodology:

- Choose Borrowing Method: Select a model like Hierarchical Modeling (HM), Power Prior, or Commensurate Prior.

- Assess Commensurability: Continuously test for similarity between the current control data and historical/shared control data using pre-defined metrics (e.g., baseline covariate balance, outcome trends in a common run-in period).

- Dynamic Adjustment: The model automatically down-weights (borrows less from) the shared control data if heterogeneity is detected, preserving the integrity of the current arm's comparison.

- Benefit: This prevents dilution of treatment effect signals, ensuring data quality while still leveraging shared data to reduce control group size.
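
To make the down-weighting idea concrete, here is a minimal power-prior sketch for a binary control-arm response rate. It uses a fixed discount `a0` between 0 (ignore shared data) and 1 (pool fully); truly dynamic methods estimate that weight from the data, which this example does not attempt. The function names and the Beta(1, 1) initial prior are assumptions.

```python
def power_prior_posterior(y, n, y0, n0, a0, prior_a=1.0, prior_b=1.0):
    """Beta posterior parameters, discounting shared control data by a0.

    y, n   : responders and sample size in the current control data
    y0, n0 : responders and sample size in the shared/historical control data
    a0     : borrowing weight in [0, 1]
    """
    if not 0.0 <= a0 <= 1.0:
        raise ValueError("a0 must lie in [0, 1]")
    post_a = prior_a + y + a0 * y0
    post_b = prior_b + (n - y) + a0 * (n0 - y0)
    return post_a, post_b


def posterior_mean(post_a, post_b):
    """Mean of a Beta(post_a, post_b) distribution."""
    return post_a / (post_a + post_b)


# Current controls: 10/40 responders; shared controls: 30/60 responders.
no_borrowing = posterior_mean(*power_prior_posterior(10, 40, 30, 60, a0=0.0))
half_borrowing = posterior_mean(*power_prior_posterior(10, 40, 30, 60, a0=0.5))
```

Comparing the posterior mean across several values of `a0` is a simple sensitivity check on how much the shared controls drive the conclusion.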

Q4: In a MAMS (Multi-Arm, Multi-Stage) design, how do we efficiently add a new treatment arm mid-trial?

A: This requires a pre-planned, dynamic protocol amendment workflow:

- Pre-Specification: The trial master protocol must outline the criteria and process for adding new arms (e.g., based on external scientific evidence).

- Go/No-Go Decision: A dedicated oversight committee reviews the new evidence against pre-defined scientific validity criteria.

- Statistical Adjustment: The alpha-spending function and power are recalculated for the remaining stages, often using simulation, to control the overall Type I error.

- Operational Integration: The new arm is added with its own randomization allocation. The shared control arm and infrastructure are leveraged, minimizing new setup effort.

Q5: What are common pitfalls in implementing response-adaptive randomization (RAR) that lead to data loss?

A: Key issues and solutions:

- Pitfall 1: High Variability Early On. Early outcome-adaptive shifts can be based on noisy data.

  - Solution: Use a burn-in period with fixed 1:1 randomization until sufficient initial data is collected.

- Pitfall 2: Operational Lag. Delays in data entry and outcome assessment cause randomization based on stale data.

  - Solution: Implement rapid outcome assessment (e.g., central lab, 72-hour readouts) and stringent data entry deadlines.

- Pitfall 3: Complex Logistics. Managing different drug supply for shifting probabilities.

  - Solution: Utilize centralized packaging and distribution via IRT to handle dynamic allocation seamlessly.

Key Quantitative Data on Adaptive Designs

Table 1: Impact of Adaptive Designs on Sample Size & Data Points

| Design Type | Traditional Design Sample Size (Mean) | Adaptive Design Sample Size (Mean) | Average Reduction in Unnecessary Data Points | Key Enabling Factor |

|---|---|---|---|---|

| Group Sequential Design (GSD) | 100% | 85-90% | 10-15% | Early stopping for efficacy/futility |

| Sample Size Re-estimation (SSR) | 100% | 80-110%* | Variable, prevents under/overpowering | Blinded or unblinded reassessment of variance |

| Bayesian Adaptive Randomization | 100% | 75-85% | 15-25% | Dynamically allocating patients to the superior arm |

| MAMS Platform Trial | 100% (per arm) | 60-80% (per arm) | 20-40% (via shared control) | Shared infrastructure & control arms |

*SSR can increase size if initial assumptions are too optimistic.

Table 2: Data Quality Metrics in Adaptive vs. Fixed Trials

| Metric | Fixed Design Benchmark | Adaptive Design Performance | Notes |

|---|---|---|---|

| Type I Error Control | 5% (Alpha) | Maintained at 5% with proper planning | Critical; requires simulation. |

| Operational Bias Risk | Low | Medium-High (if not masked) | Mitigated by Firewalls & IRT. |

| Data Completeness Rate | Typically High | Can be lower without stringent processes | Requires proactive QC. |

| Analysis Complexity | Standard | High | Needs advanced statistical expertise. |

Experimental Protocols

Protocol A: Implementing a Group Sequential Design (GSD) with O'Brien-Fleming Boundaries

- Objective: To allow for early trial termination while controlling overall Type I error.

- Design Phase:

  - Define maximum number of interim analyses (K) (e.g., K=3).

  - Calculate O'Brien-Fleming alpha-spending function using Lan-DeMets method. This allocates very little alpha to early looks, preserving power.

  - Pre-specify efficacy and futility boundaries (Z-score thresholds) for each interim in the SAP.

- Execution Phase:

  - At each pre-planned interim analysis, an independent Data Monitoring Committee (DMC) reviews unblinded data.

  - The test statistic (e.g., Z-score for primary endpoint) is calculated.

  - Decision Rule: If the test statistic crosses the pre-defined efficacy boundary, recommend early stop for success. If it crosses the futility boundary, recommend early stop for futility. Otherwise, continue to next stage.

- Outcome: Reduces volunteer exposure to inferior treatments or shortens time to beneficial treatment availability.
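
The claim that an O'Brien-Fleming-type spending function allocates very little alpha to early looks can be checked numerically. The sketch below evaluates the Lan-DeMets O'Brien-Fleming-type function at three equally spaced looks, which is an assumed schedule. It reports alpha spent, not the Z boundaries themselves; deriving boundaries requires numerical integration and is normally left to dedicated group-sequential software.

```python
from math import sqrt
from statistics import NormalDist


def obf_alpha_spent(info_frac, alpha=0.05):
    """Cumulative two-sided alpha spent at information fraction t.

    Lan-DeMets O'Brien-Fleming-type function:
    alpha(t) = 2 * (1 - Phi(z_{1 - alpha/2} / sqrt(t)))
    """
    if not 0.0 < info_frac <= 1.0:
        raise ValueError("info_frac must be in (0, 1]")
    nd = NormalDist()
    z = nd.inv_cdf(1.0 - alpha / 2.0)
    return 2.0 * (1.0 - nd.cdf(z / sqrt(info_frac)))


looks = [1 / 3, 2 / 3, 1.0]  # assumed equally spaced analyses
cumulative = [obf_alpha_spent(t) for t in looks]
incremental = [cumulative[0]] + [b - a for a, b in zip(cumulative, cumulative[1:])]
```

With these inputs, less than 0.1% of the total alpha is spent at the first look, which is why early stopping for efficacy under this scheme requires a very large observed effect.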

Protocol B: Blinded Sample Size Re-estimation (SSR) Based on Nuisance Parameter

- Objective: To adjust sample size based on a better estimate of a nuisance parameter (e.g., pooled variance, control group event rate) without unblinding treatment effects.

- Design Phase:

  - In the SAP, specify the nuisance parameter to be re-estimated, the timing of the interim (e.g., after 50% of subjects complete), and the method for re-estimation.

- Execution Phase:

  - At the interim point, a blinded statistician receives only the pooled data (treatment codes masked as A/B).

  - The statistician calculates the re-estimated parameter (e.g., pooled variance σ²).

  - Using the original effect size assumption, a new total sample size (N') is calculated using the standard formula.

  - The sample size is adjusted upward or downward, typically with a pre-defined cap (e.g., no more than double the original).

- Outcome: Ensures the trial is adequately powered, protecting the investment and volunteer effort from a failed trial due to incorrect initial assumptions.
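
As a sketch of the recalculation step, the example below assumes a two-arm comparison of means with a two-sided test, for which the usual normal-approximation formula is n per arm = 2σ²(z₁₋α/₂ + z₁₋β)² / δ². The function names, the default alpha and power, and the doubling cap are illustrative choices; the SAP governs the actual rule.

```python
from math import ceil
from statistics import NormalDist


def per_arm_n(sigma, delta, alpha=0.05, power=0.80):
    """Per-arm sample size for a two-sample comparison of means (normal approx.)."""
    nd = NormalDist()
    z_alpha = nd.inv_cdf(1.0 - alpha / 2.0)
    z_beta = nd.inv_cdf(power)
    return ceil(2.0 * sigma ** 2 * (z_alpha + z_beta) ** 2 / delta ** 2)


def reestimate(n_planned, sigma_interim, delta, cap_factor=2.0, **kwargs):
    """Recompute per-arm n from the blinded variance estimate, applying a cap."""
    n_new = per_arm_n(sigma_interim, delta, **kwargs)
    return min(n_new, ceil(cap_factor * n_planned))


n_original = per_arm_n(sigma=1.0, delta=0.5)
n_updated = reestimate(n_original, sigma_interim=1.2, delta=0.5)
```

Here the effect size `delta` stays at its design value; only the variance estimate changes, which is what keeps the procedure blinded.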

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Implementing Adaptive Clinical Trials

| Item / Solution | Function in Adaptive/Dynamic Trials |

|---|---|

| Interactive Response Technology (IRT) | Dynamically manages patient randomization (including RAR), drug supply allocation, and site management in real-time. The operational backbone. |

| Clinical Trial Management System (CTMS) with API | Tracks trial progress and site performance; integrates with IRT and EDC to provide operational data for interim decisions. |

| Electronic Data Capture (EDC) with Real-Time Data | Ensures critical endpoint and safety data are available rapidly for interim analyses and adaptive algorithm updates. |

| Statistical Software (R, SAS, EAST) | Advanced software capable of complex simulations for design, Bayesian analysis, and generating boundary tables for interim analyses. |

| Unblinded Data Analysis "Firewall" | A secured, independent team/process for conducting interim analyses to prevent operational bias and maintain trial integrity. |

| Master Protocol Template | A standardized framework for designing platform or umbrella trials, including governance, statistical, and operational sections. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: In a study comparing passive smartphone sensor data (e.g., GPS) with active Ecological Momentary Assessment (EMA) prompts for assessing mobility, participants in the passive cohort are exhibiting significantly higher dropout rates. What could be the cause and how can we mitigate it?

A: High dropout in passive data collection, despite its low apparent burden, often stems from "consent friction" and background battery drain. Participants may initially consent but revoke permissions later when they receive system warnings about background data usage.

- Mitigation Protocol: Implement a staged consent and education process. During onboarding, use in-app tutorials to explain why continuous background data is needed, how it will be used, and its specific impact on battery life (e.g., "This may reduce battery by ~10% per day"). Pair this with robust battery optimization coding practices, such as batching and throttling data transmission when the device is not charging.

Q2: We are observing high variance in heart rate variability (HRV) data collected passively from consumer-grade wearables. How can we determine if this is biological signal or noise introduced by the device/platform?

A: This requires a controlled validation sub-study.

- Troubleshooting Guide:

  - Control Experiment: Recruit a small sub-cohort (n=10-15) to simultaneously wear the consumer wearable and a research-grade ECG chest strap (e.g., Polar H10) during a standardized protocol (rest, paced breathing, light exercise).

  - Data Synchronization: Precisely time-sync data streams from both devices.

  - Analysis: Calculate HRV metrics (e.g., RMSSD, SDNN) from both sources for identical time windows. Use intra-class correlation coefficients (ICC) and Bland-Altman plots to assess agreement.

  - Action: If ICC is poor (<0.5), develop and apply a device-specific signal processing or calibration filter before main study analysis.
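
The HRV metrics and the Bland-Altman summary in the analysis step can be sketched with the standard library alone. The example assumes clean RR intervals in milliseconds (artifact removal is out of scope here) and omits the ICC, for which a statistics package is the more practical route. Function names and the example values are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def rmssd(rr_ms):
    """Root mean square of successive RR-interval differences (ms)."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return sqrt(mean([d * d for d in diffs]))


def sdnn(rr_ms):
    """Sample standard deviation of RR intervals (ms)."""
    return stdev(rr_ms)


def bland_altman(device, reference):
    """Return (bias, lower limit, upper limit) of agreement for paired values."""
    diffs = [d - r for d, r in zip(device, reference)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Hypothetical per-session RMSSD values: wearable vs. ECG reference.
bias, lower, upper = bland_altman([42.0, 40.0, 45.0], [40.0, 39.0, 41.0])
```

A consistent positive bias with narrow limits of agreement suggests a simple correction may be adequate, whereas wide limits point to noise that a correction factor cannot remove.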

Q3: Active EMA prompts are causing significant user fatigue, leading to rushed or nonsensical responses. How can we adjust the protocol to maintain data quality?

A: This is a classic trade-off between frequency and burden. Implement an adaptive prompting algorithm.

- Solution Protocol:

  - Initial Phase: Begin with a higher frequency schedule (e.g., 5 random prompts/day) for the first week to establish a baseline of compliance patterns.

  - Adaptation Logic: Program the digital health technology (DHT) app to analyze response latency and patterns. If a user consistently ignores prompts during work hours (e.g., 9 AM-5 PM), algorithmically reduce prompts during that window.

  - Re-engagement: Introduce "burst" designs, increasing prompts for 48-hour periods following a detected physiological event (e.g., a period of very low activity from passive data) to capture context.

Q4: When integrating passive data from multiple sources (wearable, smartphone, smart home device), timestamps are misaligned, making merged datasets unusable. What is the standard procedure for temporal alignment?

A: This is a data engineering prerequisite. Follow this synchronization workflow.

Diagram Title: Data Stream Synchronization Workflow

- Protocol:

  - Extract Metadata: For each data stream, extract the local device timestamp and the timezone offset at the moment of recording.

  - Identify Paired Events: Define a shared event captured by multiple devices (e.g., "device charging start" logged by both phone and wearable). Use this to calculate device clock drift offsets.

  - Transform to Common Timeline: Convert all timestamps to Coordinated Universal Time (UTC) using the recorded offset, then apply the drift correction factor.

  - Validation: Post-alignment, check for logical inconsistencies (e.g., a reported "sleep" period from a wearable that overlaps with "app use" data from the phone).
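
The conversion and drift-correction steps above can be sketched as follows. The example assumes naive ISO 8601 local timestamps, a recorded UTC offset in minutes, and a constant clock offset per device estimated from one paired event; a drift that grows over time would need a time-dependent correction. Function and parameter names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def to_utc(local_iso, utc_offset_minutes, drift_seconds=0.0):
    """Convert a naive local device timestamp to UTC and remove clock drift.

    drift_seconds is how far the device clock runs ahead of the reference
    clock (negative if it runs behind).
    """
    naive = datetime.fromisoformat(local_iso)
    local_tz = timezone(timedelta(minutes=utc_offset_minutes))
    aware = naive.replace(tzinfo=local_tz)
    return aware.astimezone(timezone.utc) - timedelta(seconds=drift_seconds)


def estimate_drift(device_event_utc, reference_event_utc):
    """Seconds by which the device clock leads the reference for a paired event."""
    return (device_event_utc - reference_event_utc).total_seconds()


# Paired event: charging start, as logged by the phone (reference) and wearable.
phone = to_utc("2024-03-01T10:00:00", utc_offset_minutes=120)
wearable_raw = to_utc("2024-03-01T10:00:03", utc_offset_minutes=120)
drift = estimate_drift(wearable_raw, phone)
wearable = to_utc("2024-03-01T10:00:03", 120, drift_seconds=drift)
```

Storing the raw local timestamp and offset alongside the corrected UTC value keeps the transformation auditable and reversible.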

Table 1: Passive vs. Active Data Collection Characteristics

| Parameter | Passive Collection (e.g., GPS, Accelerometer) | Active Collection (e.g., EMA, eDiary) |

|---|---|---|

| Participant Burden | Very Low (unobtrusive, background) | Moderate to High (requires attention/interruption) |

| Data Density | Very High (continuous streams) | Low to Medium (discrete timepoints) |

| Context Richness | Low (infers context from sensors) | High (direct subjective input) |

| Primary Bias Risk | Selection Bias (device ownership/use) | Recall & Response Bias (fatigue, social desirability) |

| Typical Compliance* | 75-95% (of enrolled device time) | 50-80% (prompt response rate) |

| Key Technical Hurdle | Data volume, battery drain, signal processing | Smart prompting, UI/UX, engagement |

*Compliance rates are illustrative medians from recent literature (2022-2024) and are highly study-dependent.

Table 2: Validation Study Results for Consumer Wearable HRV vs. Research-Grade ECG

| Metric (5-min Rest) | Consumer Wearable (Mean ± SD) | Research ECG (Mean ± SD) | Intra-class Correlation (ICC) | Recommended Action |

|---|---|---|---|---|

| RMSSD (ms) | 42.3 ± 10.5 | 38.7 ± 9.2 | 0.72 (Moderate) | Apply linear correction factor. |

| SDNN (ms) | 65.8 ± 15.1 | 58.4 ± 12.8 | 0.45 (Poor) | Do not use SDNN from this device; rely on RMSSD. |

| Valid Samples | 92% of sessions | 100% of sessions | N/A | Flag sessions with <80% wearable signal quality. |

Experimental Protocols

Protocol 1: Validating Passive Digital Mobility Metrics Against a Clinical Gold Standard

Objective: To establish the criterion validity of smartphone-derived step count and GPS circular area (a measure of mobility radius) against the Timed Up-and-Go (TUG) test and the 6-Minute Walk Test (6MWT).

- Participant Cohort: N=50 participants with a range of mobility (healthy controls to mild impairment).

- DHT Setup: Install study app on participant smartphones with permissions for continuous accelerometer and GPS data collection for 7 days.

- Clinical Visit: On day 7, participants perform:

  - TUG Test: Time to rise from a chair, walk 3 meters, turn, walk back, and sit down. Performed 3x; average recorded.

  - 6MWT: Total distance walked on a pre-measured flat corridor in 6 minutes.

- Digital Metric Calculation:

  - Steps: Average the daily step counts from the smartphone sensor over the 7-day lead-in.

  - GPS Circular Area: Calculate the 95% confidence ellipse area from all GPS pings per day, then average.

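- Analysis: Perform Pearson correlation between daily average steps and 6MWT distance, and between GPS area and TUG time. Target: |r| > 0.7 as evidence of criterion validity. The correlation between GPS area and TUG time is expected to be negative, since greater mobility should correspond to shorter TUG times.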
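
One way to compute the daily GPS ellipse area is sketched below. It assumes the GPS pings have already been projected to planar coordinates in metres and that they are roughly bivariate normal, in which case the 95% ellipse area is π · χ² · √det(Σ), where Σ is the sample covariance matrix and χ² is the 0.95 quantile of a chi-square distribution with 2 degrees of freedom. The function name is hypothetical.

```python
from math import log, pi, sqrt
from statistics import mean


def ellipse_area(xs, ys, coverage=0.95):
    """Area of the coverage ellipse for planar points (bivariate-normal assumption)."""
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need at least 3 paired coordinates")
    mx, my = mean(xs), mean(ys)
    # Sample variances and covariance.
    sxx = sum((x - mx) ** 2 for x in xs) / (n - 1)
    syy = sum((y - my) ** 2 for y in ys) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)
    det = max(sxx * syy - sxy ** 2, 0.0)  # guard against tiny negative rounding
    # For 2 degrees of freedom the chi-square quantile has a closed form.
    chi2_quantile = -2.0 * log(1.0 - coverage)
    return pi * chi2_quantile * sqrt(det)


area_m2 = ellipse_area([1.0, -1.0, 0.0, 0.0], [0.0, 0.0, 1.0, -1.0])
```

Averaging the daily areas over the 7-day lead-in then gives the per-participant value used in the correlation with TUG time.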

Protocol 2: Adaptive EMA Prompting to Reduce Burden

Objective: To maintain response rate (>80%) and data quality while minimizing prompt fatigue over a 30-day study.

- Algorithm Design: Develop an algorithm with three prompting "zones":

  - Green Zone (High Probability): Times of historically high response rates (>90%). Schedule 70% of prompts here.

  - Yellow Zone (Medium Probability): Times of moderate response rates (50-90%). Schedule 25% of prompts.

  - Red Zone (Low Probability): Times of low response rates (<50%). Schedule 5% of prompts (to check for habit change).

- Study Design: Randomized controlled trial (RCT). Arm 1 (n=50): Static random prompts (8/day). Arm 2 (n=50): Adaptive prompts (starting at 8/day, adjusting after day 7).

- Primary Metrics:

  - Compliance: % of prompts answered.

  - Latency: Time to response.

  - Data Quality: Word count in open-text responses, variance in Likert-scale responses.

- Evaluation: Compare compliance and data quality metrics between arms at day 30 using t-tests. Target: Arm 2 shows non-inferior data quality with significantly higher compliance and lower perceived burden (via post-study survey).
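
The three-zone allocation can be sketched as a weighting over hours of the day. The example assumes one historical response rate per hour for a participant and splits each zone's share evenly across the hours in that zone; the names, the hourly granularity, and the example rates are illustrative and not part of the protocol.

```python
# Share of daily prompts assigned to each zone, per the algorithm design.
ZONE_SHARE = {"green": 0.70, "yellow": 0.25, "red": 0.05}


def classify_zone(response_rate):
    """Map a historical response rate (0-1) to a prompting zone."""
    if response_rate > 0.90:
        return "green"
    if response_rate >= 0.50:
        return "yellow"
    return "red"


def hourly_prompt_weights(rate_by_hour):
    """Return {hour: probability of scheduling a prompt in that hour}."""
    zones = {hour: classify_zone(rate) for hour, rate in rate_by_hour.items()}
    hours_in_zone = {z: sum(1 for v in zones.values() if v == z) for z in ZONE_SHARE}
    weights = {h: ZONE_SHARE[z] / hours_in_zone[z] for h, z in zones.items()}
    total = sum(weights.values())  # renormalize if a zone has no hours
    return {h: w / total for h, w in weights.items()}


weights = hourly_prompt_weights({9: 0.95, 13: 0.60, 18: 0.92, 22: 0.30})
```

These weights could be passed to `random.choices` to draw the day's prompt hours, and recomputed periodically as response patterns shift.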

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in DHT Research | Example Products/Tools |

|---|---|---|

| Research-Grade Validation Device | Provides a gold-standard signal to validate the accuracy and precision of consumer DHTs. | ActiGraph GT9X (activity), Polar H10/Firstbeat (HR/HRV), CamNtech MotionWatch 8 (sleep). |

| High-Fidelity Data Aggregation Platform | Securely collects, time-aligns, and standardizes multimodal data streams from various DHTs via APIs. | Beiwe, RADAR-base, Fitbit/Apple HealthKit connectors via custom pipelines. |

| Open-Source Signal Processing Library | Cleans and processes raw digital signals (e.g., accelerometer, photoplethysmography) to extract clinical features. | Python: heartpy, scikit-digital-health. R: GGIR for accelerometry. |

| Regulatory & Compliance Framework | Ensures the digital data collection meets ethical (informed consent) and regulatory (21 CFR Part 11, GDPR) standards for clinical research. | REDCap for eConsent, MyDataHelps platform with audit trails, AWS/GCP with HIPAA-compliant configurations. |

| Participant-Facing App Framework | Allows for rapid prototyping and deployment of custom study apps for active (EMA) and passive data collection. | Apple ResearchKit, Google Fit Platform, Expidata, Cardiogram. |

Technical Support Center

Troubleshooting Guide: Common Issues in Tool Optimization

Q1: During cognitive interviewing, participants struggle to articulate their thought process, resulting in poor feedback on question wording. How can I improve this?

A: This is a common issue where the "thinking aloud" protocol breaks down. Implement a three-stage prompting system:

- Initial Prompt: "Please say everything that comes to mind as you read this question."

- If silent for >5 seconds, use a neutral probe: "What are you thinking about right now?"

- If still struggling, use a specific probe: "What does the term '[KEYWORD FROM QUESTION]' mean to you here?"

Avoid leading the participant. Practice with pilot volunteers not involved in the main study to refine prompts, balancing the need for rich verbal data with volunteer cognitive effort.

Q2: My usability test shows a high error rate on a specific eCRF (electronic Case Report Form) page, but participants don't report it as difficult in post-task questionnaires. Which metric should I trust?

A: Trust the observed performance data (error rate, time on task) over the subjective rating. This discrepancy highlights the need for triangulation. Follow this protocol:

- Review screen recordings: Identify where clicks or keystrokes deviate from the expected path.

- Conduct a retrospective cognitive interview: Replay the screen recording to the participant and ask, "I noticed you hesitated here. Can you recall what you were trying to do at this point?"

- Prioritize fixing usability issues that objectively degrade data quality (e.g., wrong field entries), even if participants do not report them, because they bear directly on data quality, the outcome under study.

Q3: How many participants are sufficient for cognitive interviewing and usability testing in this pre-study phase?

A: Literature indicates a saturation point for usability issues is typically found with a small sample. The goal is iterative refinement, not statistical generalization.

| Test Phase | Recommended Sample Size | Key Rationale | Trade-off Consideration |

|---|---|---|---|

| Cognitive Interview | 5-8 per major tool revision | Identifies majority of comprehension problems and semantic issues. | Balances resource expenditure against the risk of launching a flawed tool. |

| Usability Test | 5-8 per major interface | Uncovers >80% of major usability problems (Nielsen and Landauer's diminishing-returns model of problem discovery). | Optimizes volunteer (tester) effort in the pre-study phase to prevent greater effort later. |

| Total Iterations | 2-3 cycles | Allows for "Test → Fix → Retest" to verify solutions. | Manages total pre-study timeline while ensuring meaningful optimization. |
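
The diminishing-returns rationale behind these sample sizes can be illustrated with the problem-discovery model commonly attributed to Nielsen and Landauer, in which the share of problems found by n users is 1 − (1 − L)ⁿ. The per-user detection rate L = 0.31 used below is the frequently cited average and should be treated as an assumption; actual rates vary by tool and task.

```python
def share_of_problems_found(n_users, detect_prob=0.31):
    """Expected share of usability problems found by n_users test participants.

    detect_prob is the assumed chance that one user exposes a given problem.
    """
    if n_users < 0 or not 0.0 <= detect_prob <= 1.0:
        raise ValueError("n_users must be >= 0 and detect_prob in [0, 1]")
    return 1.0 - (1.0 - detect_prob) ** n_users


# Expected discovery for the recommended range of 5 to 8 participants.
discovery = {n: round(share_of_problems_found(n), 3) for n in range(5, 9)}
```

The curve flattens quickly beyond five participants, which is the argument for spending additional volunteers on a second iteration with the revised tool instead of a larger first round.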

Q4: Our sensor-based data collection app is failing to upload data in low-network field conditions, risking data loss. How can we test for this?

A: You must simulate adverse conditions. Develop a controlled usability test protocol:

- Protocol: Set up a network simulation tool (e.g., Chrome DevTools Network Throttling, hardware network emulator).

- Task: Ask participants to complete a standard data entry task while connectivity degrades from 4G to 3G to "offline."

- Measure: a) Does the app provide a clear "saved locally" indicator? b) Does it automatically resume upload when connectivity is restored? c) Is the user prompted to try again?

- Observe: Participant anxiety and comprehension of the app's state. The solution must balance data integrity (no loss) with volunteer effort (minimal retyping).

FAQs: Optimizing Tools for Data Quality vs. Volunteer Effort

Q: What's the most efficient order for pre-study testing: cognitive interviews or usability testing first?

A: Conduct cognitive interviews first. Logic flow: You must ensure participants understand the questions (cognitive interview) before you can efficiently test the mechanics of answering them (usability test). Fixing wording issues after usability testing wastes resources.

Q: How do I quantify improvements from pre-study optimization to support my thesis?

A: Define and compare metrics before and after each optimization cycle.

| Metric | Pre-Optimization (Mean) | Post-Optimization (Mean) | Measurement Method | Interpretation for Thesis |

|---|---|---|---|---|

| Task Completion Rate | e.g., 65% | e.g., 95% | Usability test observation | Higher completion improves data comprehensiveness (quality). |

| Average Time on Task | e.g., 120 sec | e.g., 75 sec | Usability test log data | Reduced time decreases volunteer effort and potential for frustration-related errors. |

| Critical Error Rate | e.g., 25% | e.g., 5% | Data validation check against gold standard | Directly correlates with improved data accuracy (quality). |

| User Satisfaction (SUS) | e.g., 55/100 | e.g., 82/100 | System Usability Scale questionnaire | Higher satisfaction may improve long-term volunteer retention, reducing recruitment effort. |
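
For the satisfaction row, the System Usability Scale is scored from ten items rated 1 to 5: odd-numbered items contribute (rating − 1), even-numbered items contribute (5 − rating), and the sum is multiplied by 2.5 to give a 0 to 100 score. A minimal scoring sketch follows; the function name is hypothetical.

```python
def sus_score(responses):
    """Score one completed SUS questionnaire (10 ratings, each 1-5) on 0-100."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs exactly 10 ratings between 1 and 5")
    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1  # odd items are positively worded
        else:
            total += 5 - rating  # even items are negatively worded
    return total * 2.5


neutral = sus_score([3] * 10)
```

Scores are computed per participant and then averaged, so the pre- and post-optimization means in the table come from individual questionnaires scored this way.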

Q: Can I use the same volunteers for both cognitive interviews and usability testing?

A: It is not recommended for the same tool iteration. Exposure in the cognitive interview biases their behavior in the usability test. Use separate, naive cohorts for each test type per iteration to get clean data on both comprehension and interface interaction.

Experimental Protocol: Combined Cognitive Interview & Usability Test

Title: Iterative Protocol for Pre-Study Data Collection Tool Optimization.

Objective: To identify and rectify comprehension (cognitive) and operational (usability) flaws in a data collection tool (e.g., eCRF, survey, app) in a single integrated session, optimizing for future data quality and minimizing volunteer effort.

Methodology:

- Participant Recruitment (n=5-8 per iteration): Recruit from a population analogous to the final study volunteers. Obtain informed consent.

- Setup: Record screen, audio, and participant's face (if possible) with appropriate releases.

- Phase 1 – Pure Cognitive Interview: Present the tool as a static PDF or prototype with no interactivity. Use "think aloud" and verbal probing to assess comprehension, recall, and judgment related to each question/item.

- Phase 2 – Usability Test: Provide the functional, interactive tool (e.g., live website, app). Assign realistic data entry tasks. Observe behavior silently; do not provide help. Record errors, hesitations, and completion time.

- Phase 3 – Retrospective Probe: For any observed usability issue, replay the recording and ask non-leading questions (e.g., "What was your goal here?") to understand the root cause.

- Analysis & Redesign: Thematically analyze findings from all phases. Prioritize issues that threaten data validity. Redesign the tool.

- Iterate: Repeat Steps 1-6 with a new cohort until critical issues are resolved (typically 2-3 iterations).

Visualizations

Title: Iterative Optimization Workflow for Study Tools

Title: Core Trade-off Explored in Pre-Study Optimization

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Pre-Study Optimization |

|---|---|

| Screen Recording Software (e.g., Camtasia, OBS) | Captures all on-screen interactions, mouse movements, and keystrokes during usability testing for detailed retrospective analysis. |

| Prototyping Tool (e.g., Figma, Adobe XD) | Creates high-fidelity, interactive mockups of eCRFs or apps for usability testing without backend development. |

| Network Simulation Tool (e.g., Chrome DevTools, Apple Network Link Conditioner) | Artificially degrades network conditions to test offline functionality and data resilience of mobile data collection tools. |

| System Usability Scale (SUS) | A standardized, reliable 10-item questionnaire providing a quick global view of subjective usability and learnability. |

| Dedicated Interview Room | A quiet, controlled environment free from distractions to conduct cognitive interviews and ensure high-quality audio recording. |

| Qualitative Analysis Software (e.g., NVivo, Dedoose) | Aids in thematically coding and analyzing textual/verbal data from cognitive interview transcripts and open-ended probes. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our branching questionnaire, users are being presented with contradictory follow-up questions. What is the likely cause and how can we fix it? A: This is typically a logic conflict in your skip/display rules. Ensure your conditional logic (e.g., "IF Question A score > 5, THEN skip to Section C") is mutually exclusive and uses a consistent variable state. Debug by creating a test user and tracing the path with a flowchart tool. Within the thesis context, this error directly inflates perceived volunteer effort without improving data quality.
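
As a complement to tracing paths by hand, the rule set can be audited exhaustively when the routing variable has a small range. The following is a minimal sketch, assuming rules can be expressed as (condition, destination) pairs over a single score. The rule contents and the 0-10 range are hypothetical.

```python
def audit_skip_rules(rules, possible_scores):
    """Find scores matched by no rule (gaps) or by more than one rule (conflicts)."""
    gaps = []
    conflicts = {}
    for score in possible_scores:
        destinations = [dest for condition, dest in rules if condition(score)]
        if not destinations:
            gaps.append(score)
        elif len(destinations) > 1:
            conflicts[score] = destinations
    return gaps, conflicts


# Hypothetical rules for "Question A", scored 0-10.
faulty_rules = [
    (lambda s: s > 5, "Section C"),
    (lambda s: s >= 5, "Section B follow-up"),  # overlaps the rule above for s > 5
    (lambda s: s < 4, "Section B"),             # leaves s == 4 unrouted
]
fixed_rules = [
    (lambda s: s > 5, "Section C"),
    (lambda s: s <= 5, "Section B"),
]

gaps, conflicts = audit_skip_rules(faulty_rules, range(0, 11))
print("Unrouted scores:", gaps)
print("Scores with contradictory routes:", sorted(conflicts))
```

A clean rule set returns an empty gap list and an empty conflict dictionary. With several routing variables, the same check would iterate over combinations of values.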

Q2: How do I calibrate new questions into an existing IRT-powered questionnaire without disrupting ongoing data collection? A: Use an online calibration design. Embed new experimental items alongside a fixed set of existing, well-calibrated "anchor" items. Direct only a randomized subset of participants to see the new items. Use the responses to estimate the new items' parameters (difficulty, discrimination) on the same scale as the anchor items, ensuring continuity. This aligns with the thesis goal of iterative optimization without burdening the entire volunteer pool.

Q3: Our data shows a high dropout rate at a specific questionnaire branch. How can we determine if the question is too difficult or irrelevant?

A: Analyze the differential effort. First, check the IRT parameters: a very high difficulty (b > 3.0) suggests the item is too challenging for your population. Second, examine response time logs for slowdowns at that node. Third, implement a prompt asking users who skip the question for a reason (e.g., "Too difficult," "Not applicable to me"). This multi-method troubleshooting isolates whether the trade-off is skewed toward unacceptable effort.
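
To see why a difficulty of b > 3.0 is a warning sign, it helps to evaluate the 2PL item response function directly. The sketch below assumes a dichotomous 2PL item and a trait scale standardized so that the average respondent sits near θ = 0. The parameter values are illustrative, not estimates from any dataset.

```python
import math


def p_endorse_2pl(theta, a, b):
    """2PL item response function: probability of a keyed response at trait level theta.

    a is the discrimination parameter and b is the difficulty (location) parameter.
    """
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


# Illustrative item with discrimination 1.0 and difficulty 3.0.
for theta in (-1.0, 0.0, 1.0, 3.0):
    print(f"theta={theta:+.1f}  P={p_endorse_2pl(theta, a=1.0, b=3.0):.3f}")
```

At θ = b the probability is exactly 0.5, so an item with b = 3.0 only reaches even odds for respondents three standard units above the mean. For an average respondent the probability is below 5%, which is consistent with the item feeling too hard or not applicable.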

Q4: What is the minimum sample size required for a stable IRT calibration in this context? A: Larger samples give more stable estimates, but a common rule of thumb for the 2-Parameter Logistic (2PL) model is N ≥ 500. Polytomous (rating scale) models such as the Graded Response model start from a similar minimum and may need more as the number of response categories per item grows. See the table below for guidelines.

Table 1: Minimum Sample Size Guidelines for IRT Calibration

| IRT Model | Minimum Sample Size (Participants) | Key Consideration |

|---|---|---|

| Rasch (1PL) | 250 - 500 | Robust to smaller samples, but person measures may be less precise. |

| 2PL / 3PL | 500 - 1000 | Essential for accurate discrimination & guessing parameter estimation. |

| Graded Response | 500 - 750 | More categories per item can increase data requirements. |

| Online Calibration | 100 per item form | For embedding new items in operational tests. |

Q5: How can we validate that our modular questionnaire is actually reducing irrelevant questions without sacrificing data granularity? A: Conduct a controlled A/B experiment.

- Protocol: Randomly assign volunteers to two groups.

- Group A (Control): Receives the full, linear questionnaire.

- Group B (Experimental): Navigates the new modular/branching questionnaire.

- Metrics: Compare: 1) Average completion time (effort proxy), 2) Dropout/completion rate, 3) Data Yield: The amount of usable, non-missing data per domain per user, and 4) Scores on a short, common validation scale administered at the end (quality check).

- Thesis Alignment: A successful implementation will show statistically significant reduction in effort (time) for Group B while maintaining equivalent Data Yield and validation scores, optimizing the core trade-off.

Experimental Protocol: Validating Questionnaire Efficiency

Title: A/B Test for Branching Logic Efficiency in Volunteer-Based Research.

Objective: To quantitatively compare the trade-off between data quality and volunteer effort in a linear versus a smart modular questionnaire.

Methodology:

- Participant Recruitment: Recruit a target sample (N≥1000) from the volunteer research platform. Ensure informed consent.

- Randomization: Use a random number generator to assign participants to Group A (Linear) or Group B (Modular).

- Intervention:

- Group A: Presented with a static questionnaire containing all possible items across all domains.

- Group B: Presented with an initial "routing" module. Subsequent modules (e.g., detailed symptom domains) are presented based on pre-defined logic using IRT trait level estimates and substantive rules.

- Data Collection: Log timestamps for start/end of each module, all responses, and dropout events. All participants complete a 5-item "gold standard" validation scale at the end.

- Analysis:

- Primary Effort Metric: Mean completion time difference (Group B vs. A).

- Primary Quality Metric: Mean score difference on the validation scale.

- Secondary Metric: Compare "data density" (non-missing responses per minute of effort).
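
The analysis metrics above can be sketched with the standard library alone. The protocol does not name a specific test, so Welch's t statistic is used here as one reasonable assumption for comparing group means with unequal variances. The completion times are made-up illustration values, and a p-value would still need a t-distribution lookup (for example from a statistics package).

```python
import math
from statistics import mean, variance


def welch_t(group_a, group_b):
    """Welch's t statistic (B minus A) and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(group_a), len(group_b)
    # Squared standard error contributed by each group (sample variance / n).
    sea2 = variance(group_a) / na
    seb2 = variance(group_b) / nb
    t = (mean(group_b) - mean(group_a)) / math.sqrt(sea2 + seb2)
    df = (sea2 + seb2) ** 2 / (sea2 ** 2 / (na - 1) + seb2 ** 2 / (nb - 1))
    return t, df


def data_density(non_missing_responses, minutes_of_effort):
    """Secondary metric: usable responses obtained per minute of volunteer effort."""
    return non_missing_responses / minutes_of_effort


# Hypothetical completion times in minutes (illustration only).
linear_times = [21.0, 19.5, 24.0, 22.5, 20.0]    # Group A
modular_times = [13.0, 12.5, 15.0, 11.5, 14.0]   # Group B

t_stat, dof = welch_t(linear_times, modular_times)
print(f"Mean difference (B - A): {mean(modular_times) - mean(linear_times):.2f} min")
print(f"Welch t = {t_stat:.2f}, df = {dof:.1f}")
```

The same `welch_t` call applies to the validation-scale scores. A negative t for time together with a near-zero t for validation scores is the pattern the protocol is looking for, although showing equivalence formally calls for an equivalence test rather than a non-significant difference.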

Visualizations

Diagram 1: Modular Questionnaire Decision Logic Workflow

Diagram 2: IRT Item Calibration & Routing Relationship

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Implementing Smart Questionnaires

| Tool / Reagent | Function in Research | Example / Note |

|---|---|---|

| IRT Software (R `mirt`) | Statistical engine for calibrating items, estimating person parameters (θ), and simulating adaptive tests. | The `mirt` package is a widely used R package for flexible multidimensional IRT modeling. |

| Survey Platform with API | Hosts the questionnaire, manages user sessions, and allows dynamic logic via external calls. | Platforms like Qualtrics, REDCap, or LimeSurvey offer API access for custom routing. |

| Logging Database | Stores timestamps, response sequences, and decision path flags for detailed effort analysis. | Crucial for post-hoc validation of the branching logic and dropout analysis. |

| A/B Testing Framework | Randomly assigns participants to different questionnaire versions for controlled comparison. | Can be built into the survey platform or managed externally with a dedicated experimentation tool (Google Optimize, formerly a common choice, was discontinued in 2023). |

| Pilot Volunteer Pool | Provides the initial data sample required for stable calibration of item parameters before full launch. | A representative sample of at least 500 participants is a key "reagent" for quality. |

Solving Common Pitfalls: Troubleshooting Strategies for High-Burden, Low-Quality Data Scenarios

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: What constitutes a "high" missing data rate, and what should I do when I encounter it?

Answer: A missing data rate exceeding 5-10% per variable, or 15-20% for a participant's record, is generally considered a red flag warranting investigation. The first step is to diagnose the pattern using Little's MCAR test.

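- If data is Missing Completely At Random (MCAR): Listwise deletion is unbiased, although it reduces statistical power. Alternatively, use appropriate imputation methods (e.g., Multiple Imputation by Chained Equations - MICE).

- If data is Missing At Random (MAR), meaning missingness depends only on observed variables: Avoid listwise deletion, which can bias estimates. Use multiple imputation (e.g., MICE) and include the variables that predict missingness in the imputation model.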

- If data is Missing Not At Random (MNAR): This is a critical bias. You must analyze the mechanism (e.g., sensitive questions causing drop-offs) and consider statistical models like selection models or pattern-mixture models that account for the missingness mechanism.

Experimental Protocol for Diagnosing Missing Data Pattern:

- Data Preparation: Clean your dataset and code missing values appropriately (e.g., `NA`).

- Perform Little's MCAR Test: Use statistical software (e.g., R's `naniar` or `BaylorEdPsych` package, SPSS Missing Value Analysis).

- Interpretation: A non-significant p-value (p > 0.05) suggests data may be MCAR. A significant p-value (p < 0.05) indicates data is likely MNAR or MAR.

- Pattern Examination: Create visualizations (e.g., missingness matrix plot using `VIM::aggr` in R) to identify if missingness clusters in specific variables or participant subgroups.
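
Before running a formal test, the red-flag thresholds from the answer above can be screened with a few lines of code. This is a minimal sketch that assumes records are stored as dictionaries with `None` marking a missing value. It uses the upper ends of the stated ranges (10% per variable, 20% per participant) as cut-offs, and the records are invented for illustration. It does not implement Little's MCAR test.

```python
def missing_rates(records, variables):
    """Return the share of missing values per variable and per participant record."""
    n = len(records)
    per_variable = {
        v: sum(r.get(v) is None for r in records) / n for v in variables
    }
    per_participant = [
        sum(r.get(v) is None for v in variables) / len(variables) for r in records
    ]
    return per_variable, per_participant


# Hypothetical records (illustration only); None marks a missing answer.
variables = ["age", "pain", "sleep"]
records = [
    {"age": 34, "pain": 3, "sleep": 7},
    {"age": 51, "pain": None, "sleep": 6},
    {"age": 29, "pain": None, "sleep": None},
    {"age": 45, "pain": 2, "sleep": 8},
]

per_variable, per_participant = missing_rates(records, variables)
flagged_variables = [v for v, rate in per_variable.items() if rate > 0.10]
flagged_participants = [i for i, rate in enumerate(per_participant) if rate > 0.20]
print("Variables over 10% missing:", flagged_variables)
print("Participant rows over 20% missing:", flagged_participants)
```

Flagged variables and rows are the natural starting points for the pattern examination step, since they show where missingness concentrates.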

FAQ 2: How can I detect and mitigate response bias (e.g., acquiescence, straight-lining) in survey data?

Answer: Response bias threatens internal validity. Detection requires proactive questionnaire design and post-hoc analysis.

Mitigation Protocol:

- Prevention in Design:

- Use reverse-coded items to catch acquiescence.

- Incorporate instructed response items (e.g., "For quality control, please select 'Strongly Disagree' for this statement").

- Distribute attention-check questions throughout the survey.

- Vary response scale anchors to prevent automatic responses.

- Post-Hoc Detection & Cleaning:

- Straight-lining: Flag participants with zero variance in their responses across a battery of items (e.g., all "4" on a 7-point Likert scale for 10 consecutive questions).

- Analysis: Calculate intra-individual response standard deviation. Participants with a standard deviation below a defined threshold (e.g., 0.5) over a set of items should be flagged for review or exclusion.

- Mahalanobis Distance: Calculate to identify multivariate outliers indicative of aberrant response patterns.
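
The intra-individual standard deviation check described above can be sketched as follows. The 0.5 threshold and the 10-item minimum come from the text. The use of the sample (n - 1) standard deviation is an assumption, and the participant IDs and responses are made up for illustration.

```python
from statistics import stdev


def flag_straight_liners(responses_by_participant, threshold=0.5, min_items=10):
    """Return {participant_id: sd} for participants whose response SD is below threshold."""
    flagged = {}
    for participant_id, answers in responses_by_participant.items():
        if len(answers) < min_items:
            continue  # Too few items in the block to judge response variability.
        sd = stdev(answers)  # Sample standard deviation (n - 1 denominator).
        if sd < threshold:
            flagged[participant_id] = sd
    return flagged


# Hypothetical 7-point Likert responses to a 10-item block (illustration only).
block_responses = {
    "P01": [4, 4, 4, 4, 4, 4, 4, 4, 4, 4],  # identical answers, SD of zero
    "P02": [4, 4, 4, 4, 4, 4, 4, 4, 4, 5],  # near-zero variability
    "P03": [1, 7, 3, 5, 2, 6, 4, 4, 3, 5],  # varied answers
}

for participant_id, sd in flag_straight_liners(block_responses).items():
    print(f"{participant_id}: SD = {sd:.2f}, flag for review")
```

Flagging for review rather than excluding automatically leaves room for blocks where uniform answers are plausible, such as a symptom list for a healthy volunteer.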

Data Table: Common Response Biases and Detection Methods

| Bias Type | Description | Quantitative Detection Method | Threshold for Flagging |

|---|---|---|---|

| Acquiescence | Tendency to agree with all items. | High average score + lack of variance on reverse-coded items. | Score > 90th percentile & failed reverse-code check. |

| Straight-Lining | Identical responses to all items in a matrix. | Zero or near-zero standard deviation across a block of items. | Standard Deviation < 0.5 for a 10+ item block. |

| Careless Responding | Random or inattentive answers. | Failed instructed response items; implausibly fast completion time. | >1 failed instructional check; time < 2 sec/item. |

| Social Desirability | Answering in a culturally acceptable manner. | High score on a social desirability scale (e.g., Marlowe-Crowne). | Score > established normative cut-off (e.g., >15 on 33-item scale). |
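
The careless-responding thresholds in the table translate directly into a screening function. The sketch below assumes each criterion is applied independently (either one raises a flag), which the table does not specify. The function name and example values are hypothetical.

```python
def careless_flags(failed_checks, total_seconds, n_items,
                   max_failed=1, min_seconds_per_item=2.0):
    """Return the list of careless-responding criteria a participant meets."""
    reasons = []
    if failed_checks > max_failed:
        # More than one failed instructed-response item.
        reasons.append("failed_checks")
    if total_seconds / n_items < min_seconds_per_item:
        # Implausibly fast: under 2 seconds per item on average.
        reasons.append("too_fast")
    return reasons


# Hypothetical participants on a 50-item survey (illustration only).
print(careless_flags(failed_checks=2, total_seconds=300, n_items=50))
print(careless_flags(failed_checks=0, total_seconds=80, n_items=50))
print(careless_flags(failed_checks=1, total_seconds=200, n_items=50))
```

Returning the reasons rather than a single yes/no keeps the audit trail needed to justify any exclusion.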

FAQ 3: How do I analyze participant dropout (attrition) patterns to assess bias?

Answer: Systematic dropout can invalidate longitudinal results. The key is to compare baseline characteristics of completers vs. dropouts.

Experimental Protocol for Attrition Bias Analysis:

- Define Groups: Segment participants into