Beyond the Manual Review: Modern Strategies to Automate Data Verification and Reduce Expert Burden in Biomedical Research


Abstract

This article addresses the critical challenge of expert overload in data verification within biomedical research and drug development. We explore the foundational causes of this workload, examine current automated methodologies and tools (including AI/ML, rule-based engines, and metadata validation), provide solutions for troubleshooting and optimizing these systems, and offer a framework for validating and comparing automated verification approaches. Aimed at researchers, scientists, and development professionals, this guide provides a roadmap for implementing efficient, reliable, and scalable data verification processes that preserve expert insight for high-value tasks.

The Verification Bottleneck: Understanding the High Cost of Manual Data Checks in Research

Reducing expert workload in data verification starts with a clear operational definition. In biomedical research, Data Verification is the systematic, technical process of confirming that data have been accurately transcribed, transformed, or processed from one form to another, ensuring fidelity and integrity without assessing scientific plausibility or biological meaning. It is a cornerstone of reproducibility and quality assurance in drug development.

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our automated plate reader data shows high coefficient of variation (CV) between technical replicates. What are the first steps to verify the raw data? A: High inter-replicate CV often points to instrumental or liquid handling error. Follow this verification protocol:

- Instrument Calibration Check: Run the manufacturer's calibration protocol using reference standards. Verify optical path integrity.

- Liquid Handling Verification:

- Perform a dye-based (e.g., fluorescein) volume accuracy test for all dispensers.

- Visually inspect for bubble formation or incomplete dispensing in the source data images.

- Raw Data Inspection: Export the raw fluorescence/luminescence values (not just processed averages) and calculate the CV for each group of technical replicates. A systematic pattern (e.g., high CV only in replicate groups that include edge wells) indicates an environmental artifact.
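The raw data inspection step can be sketched in a few lines of Python. This is a minimal illustration, assuming a 96-well plate (8 rows by 12 columns), well IDs such as `A1`, and a hypothetical mapping of replicate groups to wells; the function names are illustrative and not part of any plate reader software.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return stdev(values) / abs(m) * 100.0


def is_edge_well(well, n_rows=8, n_cols=12):
    """True if a well ID such as 'A1' or 'H12' lies on the plate border."""
    row = ord(well[0].upper()) - ord("A")
    col = int(well[1:]) - 1
    return row in (0, n_rows - 1) or col in (0, n_cols - 1)


def cv_by_group(raw, groups):
    """raw: {well: signal}; groups: {name: [wells]}.

    Returns {name: (cv_percent, group_contains_edge_well)}.
    """
    report = {}
    for name, wells in groups.items():
        cv = percent_cv([raw[w] for w in wells])
        report[name] = (cv, any(is_edge_well(w) for w in wells))
    return report


# Hypothetical raw values: one replicate group on the edge, one in the interior.
raw = {"A1": 1200.0, "A2": 900.0, "A3": 1500.0,
       "D5": 1000.0, "D6": 1010.0, "D7": 990.0}
groups = {"sample_edge": ["A1", "A2", "A3"],
          "sample_inner": ["D5", "D6", "D7"]}
report = cv_by_group(raw, groups)
for name, (cv, on_edge) in report.items():
    print(f"{name}: CV = {cv:.1f}% (edge wells: {on_edge})")
```

If high CVs cluster in the groups marked as containing edge wells, that pattern supports an environmental cause (such as evaporation) rather than a dispensing fault.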

Q2: After RNA-Seq alignment, my verification pipeline flags a sample swap risk. How can I confirm sample identity without costly re-sequencing? A: Implement a genotype-based verification check using existing data:

- Methodology: Extract single nucleotide polymorphism (SNP) calls from your RNA-Seq variant calling output (e.g., using GATK) for a panel of common, high-coverage SNPs.

- Comparison: Cross-reference these SNP profiles with any historically genotyped data for the same cell lines or patient samples (e.g., from prior microarray experiments).

- Decision Threshold: A match of >99.5% of alleles confirms identity. A mismatch indicates a probable swap, triggering a re-check of the sample tracking ledger and pre-sequencing QC files.
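The comparison and decision threshold can be illustrated as follows. This is a sketch under stated assumptions: genotypes are represented as two-letter strings (e.g., `"AG"`) keyed by a SNP identifier, uncalled sites are `None`, and the SNP IDs and function names are hypothetical. Real pipelines would parse these from VCF files.

```python
from collections import Counter


def allele_concordance(current, reference):
    """Fraction of alleles that match across SNP sites called in both profiles.

    current, reference: {snp_id: genotype string such as 'AG', or None}.
    Allele order within a genotype is ignored ('AG' equals 'GA').
    """
    matched = 0
    total = 0
    for snp_id, genotype in current.items():
        ref_genotype = reference.get(snp_id)
        if not genotype or not ref_genotype:
            continue  # skip sites that are missing or uncalled in either profile
        overlap = Counter(genotype) & Counter(ref_genotype)
        matched += sum(overlap.values())
        total += max(len(genotype), len(ref_genotype))
    if total == 0:
        raise ValueError("no overlapping called SNPs to compare")
    return matched / total


def identity_confirmed(current, reference, threshold=0.995):
    """Apply the >99.5% allele match threshold from the text."""
    return allele_concordance(current, reference) > threshold


# Hypothetical profiles: rs3 differs by one allele, rs4 is uncalled.
rnaseq_calls = {"rs1": "AG", "rs2": "CC", "rs3": "TT", "rs4": None}
array_calls = {"rs1": "GA", "rs2": "CC", "rs3": "TC"}
concordance = allele_concordance(rnaseq_calls, array_calls)
print(f"allele concordance = {concordance:.3f}")
```

A panel of only three SNPs is far too small for a real identity check; the example is sized for readability only.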

Q3: How do I verify that my automated image analysis pipeline for cell counting is performing as accurately as manual annotation? A: Conduct a structured, blinded verification experiment:

- Create a Gold Standard Set: Manually annotate cells (label nuclei/cytoplasm) in 50-100 randomly selected field-of-view images. Use multiple annotators and resolve discrepancies.

- Pipeline Run: Process the same images through your automated pipeline.

- Metric Calculation: Compare using standard metrics. Acceptable thresholds for verification are shown below.

Table 1: Acceptable Verification Metrics for Automated Cell Counting vs. Manual Annotation

| Metric | Calculation | Verification Threshold |

|---|---|---|

| Precision | True Positives / (True Positives + False Positives) | ≥ 0.95 |

| Recall (Sensitivity) | True Positives / (True Positives + False Negatives) | ≥ 0.90 |

| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) | ≥ 0.92 |

| Pearson Correlation (Counts/Field) | Correlation between manual & automated total counts | R ≥ 0.98 |
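The metrics in Table 1 can be computed directly from matched detections and per-field counts. The sketch below assumes that true positives, false positives, and false negatives have already been obtained by matching automated detections to the gold-standard annotations; the function and variable names are illustrative.

```python
from math import sqrt

# Thresholds taken from Table 1.
THRESHOLDS = {"precision": 0.95, "recall": 0.90, "f1": 0.92, "pearson_r": 0.98}


def detection_metrics(tp, fp, fn):
    """Precision, recall, and F1 from matched detection counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def verify_pipeline(tp, fp, fn, manual_counts, auto_counts):
    """Return {metric: (value, passes_threshold)} for all Table 1 metrics."""
    precision, recall, f1 = detection_metrics(tp, fp, fn)
    values = {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "pearson_r": pearson_r(manual_counts, auto_counts),
    }
    return {name: (value, value >= THRESHOLDS[name]) for name, value in values.items()}


# Hypothetical results from a gold-standard comparison.
result = verify_pipeline(
    tp=960, fp=40, fn=60,
    manual_counts=[100, 120, 80, 150],
    auto_counts=[98, 123, 79, 149],
)
for name, (value, ok) in result.items():
    print(f"{name}: {value:.3f} {'PASS' if ok else 'FAIL'}")
```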

Q4: My western blot quantification software outputs values, but how do I verify the data preprocessing (background subtraction, normalization) was correct? A: This is a critical step. Follow this verification workflow:

- Export Intermediate Data: Ensure the software can export the lane profile data (pixel intensity vs. distance) for both target and loading control bands.

- Manual Verification Steps:

- Background: Visually confirm the software's background ROI is placed in an empty lane region, not on artifact.

- Peak Detection: Overlay the software's detected band peaks on the original lane profile. Verify they align with intensity maxima.

- Normalization: Manually calculate the ratio of (Target Band Volume / Loading Control Volume) for 3-4 random samples and match to the software's output.
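The normalization spot check lends itself to a small script once band volumes have been exported. The following sketch assumes hypothetical field names (`target_volume`, `loading_volume`, `software_ratio`) and a 1% relative tolerance; both are illustrative choices rather than values from any quantification package.

```python
from math import isclose


def verify_normalization(samples, rel_tol=0.01):
    """Recompute target/loading-control ratios and list lanes that disagree.

    samples: list of dicts with 'id', 'target_volume', 'loading_volume',
    and 'software_ratio' (the value reported by the quantification software).
    """
    mismatches = []
    for s in samples:
        manual_ratio = s["target_volume"] / s["loading_volume"]
        if not isclose(manual_ratio, s["software_ratio"], rel_tol=rel_tol):
            mismatches.append((s["id"], manual_ratio, s["software_ratio"]))
    return mismatches


# Hypothetical exported values for two randomly chosen lanes.
samples = [
    {"id": "lane1", "target_volume": 1500.0, "loading_volume": 3000.0, "software_ratio": 0.50},
    {"id": "lane2", "target_volume": 2400.0, "loading_volume": 3000.0, "software_ratio": 0.95},
]
mismatches = verify_normalization(samples)
for lane, manual, reported in mismatches:
    print(f"{lane}: manual ratio {manual:.3f} vs software {reported:.3f}")
```

A mismatch usually means the software applied a different background or normalization setting than expected, which is worth resolving before trusting the remaining lanes.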

Diagram: Western Blot Data Verification Workflow

Q5: During flow cytometry data verification, what are the key gating parameters to check for consistency across batches? A: Verify gating strategy consistency using positive and negative control samples from each batch.

- Apply Standardized Gating Template: Use the same gating hierarchy (FSC-A/SSC-A -> Singlets -> Live/Dead -> Fluorophore Channels) across all files.

- Check Control Populations: For each batch, verify that:

- The unstained/negative control population has ≤ 1% events in the positive gate.

- The single-stained compensation controls show clear separation.

- The positive control (e.g., stimulated cells) shows the expected shift in median fluorescence intensity (MFI).

- Quantify Drift: Track the MFI of standardized calibration beads (e.g., Spherotech Rainbow beads) across batches. A shift > 10% may require instrument recalibration.
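The control-population and drift checks can be expressed as simple threshold tests. This sketch assumes bead MFI values and gate event counts have already been exported per batch; the batch labels and function names are hypothetical.

```python
def bead_drift(mfi_by_batch, baseline_batch, limit_pct=10.0):
    """Percent shift of calibration bead MFI relative to a baseline batch.

    Returns {batch: (shift_percent, exceeds_limit)}.
    """
    baseline = mfi_by_batch[baseline_batch]
    report = {}
    for batch, mfi in mfi_by_batch.items():
        shift = (mfi - baseline) / baseline * 100.0
        report[batch] = (shift, abs(shift) > limit_pct)
    return report


def negative_control_ok(events_in_positive_gate, total_events, limit_pct=1.0):
    """True if the unstained control has at most limit_pct events in the positive gate."""
    return events_in_positive_gate / total_events * 100.0 <= limit_pct


# Hypothetical bead MFI values for three batches.
drift = bead_drift({"B1": 50000.0, "B2": 52000.0, "B3": 43500.0}, baseline_batch="B1")
for batch, (shift, flagged) in drift.items():
    print(f"{batch}: {shift:+.1f}% {'RECALIBRATE?' if flagged else 'ok'}")
```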

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Data Verification Experiments

| Reagent/Material | Primary Function in Verification | Example Product |

|---|---|---|

| Nucleic Acid Quantitation Standards | Provides known concentration values to verify spectrometer/pipette accuracy and ensure downstream reaction success. | Thermo Fisher Quant-iT dsDNA Assay Standards |

| Cell Counting Reference Beads | Acts as a verifiable particle count to calibrate and verify automated cell counters or flow cytometers. | Beckman Coulter Flow-Count Fluorospheres |

| Peptide/Protein Mass Spec Standards | Provides predictable fragmentation patterns and retention times to verify LC-MS/MS system performance. | Waters MassPREP Digestion Standard Mix |

| Pre-Mixed PCR Positive Control | Contains a known amplifiable template to verify PCR/RT-PCR reagent integrity and thermal cycler function. | Takara Bio Control gDNA |

| Fluorescent Microsphere Kit | Used for verifying spatial resolution, intensity linearity, and color registration in microscope imaging systems. | Invitrogen TetraSpeck Microspheres |

| ELISA Standard Curve Kit | Provides a known concentration-response curve to verify the dynamic range and sensitivity of plate-based assays. | R&D Systems DuoSet ELISA Calibrator |

Troubleshooting Guides & FAQs for Data Verification Processes

This technical support center provides solutions for common issues in experimental data verification, aimed at reducing the burden on Subject Matter Experts (SMEs) in research and drug development.

FAQ 1: How can I automate the initial data integrity check for high-throughput screening results to reduce manual review time? Answer: Implement a pre-validation script using tools like KNIME or a Python/Pandas pipeline. The script should flag plates with Z'-factor < 0.5, signal-to-noise ratio < 3, or CV > 20% for expert review, allowing ~70-80% of plates to pass automated QC without SME intervention.

FAQ 2: Our image analysis for cell viability assays requires constant expert adjustment of threshold parameters. How can we standardize this? Answer: Deploy a machine learning-based segmentation model (e.g., U-Net) trained on a curated set of 50-100 expert-annotated images. This model can handle batch effects and varying intensities, reducing daily manual corrections by an estimated 85%.

FAQ 3: What is the most efficient way to verify compound identity and concentration data across LC/MS, NMR, and inventory databases? Answer: Use a centralized data hub (e.g., an ELN/LIMS integration) with automated cross-checking rules. A dedicated middleware agent can flag mismatches (e.g., mass discrepancy > 5 ppm, concentration delta > 10%) for review, cutting verification time from hours to minutes per batch.
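As an illustration of the cross-checking rules mentioned in FAQ 3, the sketch below applies the 5 ppm mass and 10% concentration limits to a single record. The record layout and field names are assumptions made for this example; an actual ELN/LIMS integration would supply its own schema.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def cross_check(record, max_ppm=5.0, max_conc_delta_pct=10.0):
    """Return the list of rule names that a compound record violates."""
    flags = []
    if abs(ppm_error(record["observed_mz"], record["theoretical_mz"])) > max_ppm:
        flags.append("mass")
    delta_pct = (abs(record["measured_conc"] - record["inventory_conc"])
                 / record["inventory_conc"] * 100.0)
    if delta_pct > max_conc_delta_pct:
        flags.append("concentration")
    return flags


# Hypothetical records combining LC/MS results with inventory values.
record_a = {"observed_mz": 300.1520, "theoretical_mz": 300.1500,
            "measured_conc": 9.5, "inventory_conc": 10.0}
record_b = {"observed_mz": 300.1505, "theoretical_mz": 300.1500,
            "measured_conc": 8.0, "inventory_conc": 10.0}
print(cross_check(record_a), cross_check(record_b))
```

Only records with a non-empty flag list would need to reach a reviewer.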

FAQ 4: How do we troubleshoot inconsistencies in pharmacokinetic (PK) parameters calculated by different team members?

Answer: Institute a version-controlled, non-compartmental analysis (NCA) script (e.g., in R with PKNCA). Provide a standard operating procedure (SOP) and a checklist for raw data input format. This eliminates calculation variability and reduces QA time by ~60%.

FAQ 5: Our ELISA data verification is slow due to manual curve fitting and outlier rejection. Any solutions? Answer: Automate the 4- or 5-parameter logistic (4PL/5PL) curve fitting using a scriptable platform such as GraphPad Prism (via its scripting feature) or a custom R/Shiny app. Implement built-in outlier detection (e.g., Grubbs' test) to flag problematic standards automatically.

Table 1: Estimated Weekly Time Spent on Manual Data Verification Tasks

| Task | Avg. Time per SME (Hours) | % Considered Automatable | Primary Pain Point |

|---|---|---|---|

| Raw Data QC (HTS) | 6.5 | 75% | Visual plate inspection |

| Assay Result Thresholding | 4.2 | 90% | Subjective parameter adjustment |

| Cross-Source Data Reconciliation | 5.8 | 80% | Logging into multiple systems |

| Protocol Compliance Check | 3.0 | 50% | Reading unstructured ELN notes |

| Final Report Sign-off | 2.5 | 30% | Formatting inconsistencies |

Table 2: Impact of Proposed Automation Solutions

| Solution | Reduction in SME Hands-on Time | Estimated Setup Effort (SME Hours) | ROI Timeframe (Weeks) |

|---|---|---|---|

| Automated QC Flagging | 65-75% | 40 | 3 |

| ML-Based Image Analysis | 80-90% | 100 | 6 |

| Centralized Data Cross-Check | 70-85% | 60 | 4 |

| Standardized NCA Script | 55-65% | 30 | 2 |

| Automated Curve Fitting | 60-70% | 25 | 2 |

Detailed Experimental Protocols

Protocol 1: Automated QC for High-Throughput Screening (HTS) Data Objective: To automatically validate HTS run quality and flag plates requiring expert review. Methodology:

- Data Input: Load raw luminescence/fluorescence values per well from plate reader output file.

- Calculate Metrics: For each plate, compute:

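- Z'-factor: $Z' = 1 - \dfrac{3\,(\sigma_{\text{pos}} + \sigma_{\text{neg}})}{\lvert \mu_{\text{pos}} - \mu_{\text{neg}} \rvert}$, where pos and neg denote the positive and negative control wells.

- Signal-to-Noise (S/N): $\text{S/N} = \dfrac{\mu_{\text{sample}} - \mu_{\text{neg}}}{\sigma_{\text{neg}}}$

- Coefficient of Variation (CV): $\text{CV} = \dfrac{\sigma_{\text{control}}}{\mu_{\text{control}}} \times 100\%$ for control wells.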

- Apply Thresholds: Flag plate if Z' < 0.5, S/N < 3, or CV > 20%.

- Output: Generate a summary report with pass/fail status and route failed plates to SME queue.
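Protocol 1 can be sketched with the Python standard library alone. The example below assumes well values have already been parsed into lists for positive controls, negative controls, and sample wells; the function names and the `route_to_sme` status label are illustrative.

```python
from statistics import mean, stdev


def z_prime(pos, neg):
    """Z'-factor from positive and negative control wells."""
    return 1 - 3 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


def signal_to_noise(sample, neg):
    """(mean sample - mean negative control) / SD of negative control."""
    return (mean(sample) - mean(neg)) / stdev(neg)


def percent_cv(values):
    """Coefficient of variation (%) of a set of control wells."""
    return stdev(values) / mean(values) * 100


def qc_plate(pos, neg, sample):
    """Apply the protocol thresholds: flag if Z' < 0.5, S/N < 3, or CV > 20%."""
    metrics = {
        "z_prime": z_prime(pos, neg),
        "s_n": signal_to_noise(sample, neg),
        "cv_pos": percent_cv(pos),
        "cv_neg": percent_cv(neg),
    }
    flags = []
    if metrics["z_prime"] < 0.5:
        flags.append("z_prime")
    if metrics["s_n"] < 3:
        flags.append("s_n")
    if max(metrics["cv_pos"], metrics["cv_neg"]) > 20:
        flags.append("cv")
    status = "route_to_sme" if flags else "pass"
    return {"status": status, "flags": flags, "metrics": metrics}


# Hypothetical plates: one clean, one with noisy positive controls.
neg = [100, 105, 95, 102, 98]
sample = [500, 520, 480]
good = qc_plate([1000, 1020, 980, 1010, 990], neg, sample)
bad = qc_plate([1000, 600, 1400, 800, 1200], neg, sample)
print(good["status"], bad["status"], bad["flags"])
```

Keeping the metrics alongside the status makes the summary report self-explanatory when a plate lands in the SME queue.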

Protocol 2: Training a U-Net Model for Automated Cell Segmentation Objective: To create a model for consistent, expert-level image segmentation. Methodology:

- Data Curation: SME annotates 50-100 representative images (varying staining intensity, cell density) using a tool like CellProfiler or Ilastik. Split data 70/15/15 for training/validation/test.

- Model Training: Implement a U-Net architecture (TensorFlow/Keras). Use data augmentation (rotation, flip, contrast adjustment). Train for 100-200 epochs, monitoring Dice coefficient on validation set.

- Validation: Apply model to test set. Compare model outputs to ground-truth masks using Intersection-over-Union (IoU) metric. Require IoU > 0.85 for deployment.

- Deployment: Integrate model as a module into image analysis pipeline (e.g., Python script, ImageJ plugin). Provide a simple interface for users to run analysis.
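The validation metrics named in Protocol 2 can be illustrated without a deep learning framework. The sketch below computes IoU and the Dice coefficient for binary masks stored as nested lists; in practice masks would be arrays, and the function name is an assumption of this example. Returning 1.0 for two empty masks is a convention chosen here, not something the protocol specifies.

```python
def iou_and_dice(pred_mask, truth_mask):
    """Intersection-over-Union and Dice coefficient for two binary masks.

    Masks are equal-shaped nested lists of 0/1 (or False/True) values.
    """
    inter = union = pred_area = truth_area = 0
    for pred_row, truth_row in zip(pred_mask, truth_mask):
        for p, t in zip(pred_row, truth_row):
            p, t = bool(p), bool(t)
            inter += p and t
            union += p or t
            pred_area += p
            truth_area += t
    if union == 0:
        return 1.0, 1.0  # both masks empty: treated as perfect agreement
    return inter / union, 2 * inter / (pred_area + truth_area)


# Tiny hypothetical masks: the prediction has one extra foreground pixel.
pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 1, 0],
         [0, 0, 0]]
iou, dice = iou_and_dice(pred, truth)
print(f"IoU = {iou:.3f}, Dice = {dice:.3f}, deployable = {iou > 0.85}")
```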

Visualizations

Title: Automated HTS Data QC and Routing Workflow

Title: SME Weekly Hour Allocation Before vs. After Automation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents & Tools for Data Verification Experiments

| Item | Function in Verification Process | Example Vendor/Product |

|---|---|---|

| Reference Control Compounds | Provide consistent positive/negative signals for assay QC metrics (Z', S/N). | Sigma-Aldrich (Staurosporine for cytotoxicity), Tocris (known agonists/antagonists) |

| Cell Viability Assay Kits | Standardized reagents (e.g., CellTiter-Glo) for generating reproducible luminescence data amenable to automated QC. | Promega CellTiter-Glo 2.0 |

| Multifluorescence Cell Strain | Cells expressing multiple fluorescent proteins (e.g., HeLa-CCC) to train and validate image segmentation algorithms. | ATCC HeLa-CCC (RFP/GFP/YFP) |

| LC/MS & NMR Reference Standards | Certified standards for verifying compound identity and instrument performance in analytical data streams. | Cerilliant Certified Reference Standards |

| Automated Liquid Handlers | Ensure consistent reagent dispensing to minimize data variability at source, reducing need for outlier correction. | Beckman Coulter Biomek i7 |

| ELISA Validation Sets | Pre-coated plates with known analyte concentrations for validating automated curve fitting pipelines. | R&D Systems DuoSet ELISA Development Kits |

| Electronic Lab Notebook (ELN) | Structured data capture to enable automated protocol compliance checks against predefined methods. | Benchling, IDBS E-WorkBook |

| Data Integration Middleware | Software to automatically fetch and compare data from instruments (LC/MS) and databases (LIMS). | Synthace, Mosaic |

Technical Support Center

Troubleshooting Guides

Problem 1: High Data Entry Error Rates in Manual Transcription

- Symptoms: Discrepancies between source data (e.g., lab notebook readings) and the final digital dataset. Failed validation checks during statistical analysis.

- Diagnosis: This is a direct consequence of manual data entry, a known high-risk process prone to transposition, omission, and keystroke errors.

- Solution:

- Immediate Action: Implement a double-entry verification protocol (detailed below).

- Preventative Action: Advocate for and adopt Electronic Lab Notebooks (ELNs) or automated data capture from instruments.

- Experimental Verification Protocol (Double-Entry):

- Two independent researchers (Data Entry Operator A and B) transcribe the same set of source data into two separate spreadsheet files (File A and File B).

- Use a script (e.g., in Python or R) or a spreadsheet function to compare File A and File B cell-by-cell.

- Flag all discrepancies for review against the original source.

- Calculate the initial error rate (Number of discrepancies / Total data points entered). This quantifies the inherent risk.

- Resolve all discrepancies to create a "gold standard" dataset.

- Note: This protocol increases workload but is critical for manual process error quantification and mitigation.
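The cell-by-cell comparison in the double-entry protocol can be sketched as follows. For self-containment the two entries are passed as CSV text; reading from File A and File B on disk would work the same way. Note that this sketch counts header cells as data points, which is an assumption that slightly lowers the reported rate.

```python
import csv
import io


def compare_entries(text_a, text_b):
    """Compare two CSV transcriptions cell by cell.

    Returns (discrepancies, error_rate), where each discrepancy is
    (row_number, column_number, value_a, value_b) with 1-based indices.
    """
    rows_a = list(csv.reader(io.StringIO(text_a)))
    rows_b = list(csv.reader(io.StringIO(text_b)))
    discrepancies = []
    total = 0
    for r in range(max(len(rows_a), len(rows_b))):
        row_a = rows_a[r] if r < len(rows_a) else []
        row_b = rows_b[r] if r < len(rows_b) else []
        for c in range(max(len(row_a), len(row_b))):
            total += 1
            a = row_a[c].strip() if c < len(row_a) else None
            b = row_b[c].strip() if c < len(row_b) else None
            if a != b:
                discrepancies.append((r + 1, c + 1, a, b))
    error_rate = len(discrepancies) / total if total else 0.0
    return discrepancies, error_rate


# Hypothetical transcriptions with one transposed digit in Operator B's file.
file_a = "sample,od450\nS1,0.512\nS2,0.731\n"
file_b = "sample,od450\nS1,0.521\nS2,0.731\n"
discrepancies, error_rate = compare_entries(file_a, file_b)
print(discrepancies, f"{error_rate:.1%}")
```

Values are compared as text, so `0.50` and `0.5` count as a discrepancy; whether that is desirable depends on how strictly the source format must be preserved.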

Problem 2: Inconsistent Sample Labeling and Tracking

- Symptoms: Unable to locate samples, ambiguous sample identifiers, broken chain of custody, protocol deviations.

- Diagnosis: Lack of a standardized, machine-readable labeling system (e.g., barcodes) and a centralized tracking ledger.

- Solution:

- Immediate Action: Create and enforce a standard operating procedure (SOP) for manual labeling (e.g., [Project Acronym]-[Date]-[Sample #]-[Researcher Initials]).

- Preventative Action: Implement a Laboratory Information Management System (LIMS) or use pre-printed, scannable barcode labels.

- Workflow Verification Protocol:

- For a given experiment batch, manually log every sample movement (Freezer A, Rack 3 → Bench → Centrifuge → Analyst X) in a central paper or digital logbook.

- At critical steps (e.g., before analysis), audit a random 10% of samples by tracing their full recorded journey.

- Record any gaps or inconsistencies in the log. This highlights process breakdowns.

Problem 3: Lack of a Reliable Audit Trail for Critical Data Points

- Symptoms: Cannot reconstruct who changed a data point, when, or why. Questions arise during regulatory or internal audits.

- Diagnosis: Use of non-versioned files (e.g., `Final_Data.xlsx`, `Final_Data_v2_REALLYFINAL.xlsx`) and lack of change logging.

- Solution:

- Immediate Action: Institute a manual audit trail log spreadsheet accompanying every dataset.

- Preventative Action: Use version-controlled systems (like Git for code, or dedicated platform features in ELNs/LIMS).

- Manual Audit Trail Protocol:

- Create an `AuditLog` tab within the data workbook.

- For any change post-initial entry, record: Date/Time, Name, Data Point Changed (Cell ID), Original Value, New Value, Reason for Change (e.g., "Corrected transcription error from source pg. 12").

- Prohibit data deletion; use strikethroughs or status flags (e.g., "invalidated") and log the reason.

Frequently Asked Questions (FAQs)

Q1: What is a typical error rate for manual data entry in a research setting, and how does it compare to automated methods? A: Manual data entry error rates are typically reported in the range of 0.3% to 4.0%, depending on complexity and operator fatigue. In contrast, automated data transfer via instrument interfaces or barcode scanners typically has error rates below 0.0001% (see Table 1). Manual processes are therefore orders of magnitude riskier.

Q2: How can I quickly assess the consistency of manual measurements within my team? A: Implement a simple inter-rater reliability (IRR) test. Have 2-3 team members measure/score the same set of 10-20 samples using the same manual protocol. Calculate the percentage agreement or Cohen's Kappa statistic. Low agreement (<90% or Kappa <0.6) indicates a critical need for better protocol training or automation.
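Both statistics mentioned in this answer are straightforward to compute for two raters. The sketch below uses the standard two-rater Cohen's kappa formula; the rating labels and variable names are made up for illustration.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Fraction of items on which two raters gave the same score."""
    return sum(x == y for x, y in zip(rater_a, rater_b)) / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters corrected for chance."""
    n = len(rater_a)
    observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[k] * counts_b[k]
                   for k in set(rater_a) | set(rater_b)) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical category
    return (observed - expected) / (1 - expected)


# Hypothetical scores from two team members on the same 10 samples.
rater_a = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg", "pos", "neg"]
rater_b = ["pos", "pos", "neg", "pos", "pos", "neg", "pos", "neg", "neg", "neg"]
agreement = percent_agreement(rater_a, rater_b)
kappa = cohens_kappa(rater_a, rater_b)
print(f"agreement = {agreement:.0%}, kappa = {kappa:.2f}")
```

In this example the raters agree on 8 of 10 samples, yet kappa is only about 0.6 because half of that agreement would be expected by chance. This is why kappa is the more conservative of the two measures.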

Q3: Our lab isn't ready for a full LIMS. What is the minimum viable audit trail for a manual process?

A: The "Signed & Dated Single Source of Truth" principle. All primary data must be recorded in one bound notebook (not loose sheets) with entries signed and dated. Any subsequent transcription must be explicitly referenced (e.g., "Data from NB-5, pp. 23-24 transcribed to Excel file ProjectX_Data_20231027"). Changes must be made with a single strikethrough, initialed, and dated.

Q4: Are there any tools to help reduce errors in manual processes without large investment? A: Yes. Utilize electronic data capture forms (using tools like REDCap, Microsoft Forms, or even Google Forms with validation rules) to replace free-form paper entry. These can enforce data types, ranges, and required fields, preventing common formatting and omission errors at the point of capture.

Table 1: Comparative Error Rates in Data Handling Processes

| Process Type | Typical Error Rate Range | Primary Risk Factors |

|---|---|---|

| Manual Data Transcription | 0.3% - 4.0% | Fatigue, distraction, complex source data, lack of double-entry. |

| Manual Sample Tracking | 1.0% - 5.0% | Non-standard labels, handwriting, missing log entries, high throughput. |

| Automated Data Transfer | < 0.0001% | System failure, configuration error (rare). |

| Barcode Sample Tracking | ~0.01% | Damaged barcode, scanner failure, network drop. |

Table 2: Impact of Manual Process Interventions on Data Integrity

| Intervention | Reduction in Error Rate | Impact on Expert Workload |

|---|---|---|

| Double-Entry Verification | 50% - 80% | Significant Increase (near 100% additional time) |

| Standardized Templates/Forms | 20% - 40% | Mild Decrease (after initial learning curve) |

| Electronic Data Capture (EDC) | 60% - 95% | Net Decrease (shifts effort from correction to review) |

Visualizations

Title: High-Risk Manual Data Flow and Error Feedback Loop

Title: Manual Audit Trail Linking Data Revisions to Log Entries

The Scientist's Toolkit: Research Reagent Solutions for Process Verification

Table 3: Essential Materials for Manual Process Risk Assessment Experiments

| Item | Function in Process Verification |

|---|---|

| Bound, Page-Numbered Lab Notebooks | Provides the immutable, chronological "source of truth" required to establish a baseline for error detection and audit trails. |

| Digital Spreadsheet Software (e.g., Excel, Google Sheets) | Platform for creating double-entry templates, manual audit log tabs, and performing initial data comparisons. |

| Statistical Software (R, Python with pandas) | Used to run formal comparisons (e.g., concordance correlation), calculate error rates, and generate reproducibility statistics from manual data. |

| Inter-Rater Reliability (IRR) Test Kits | A pre-prepared set of blinded samples or images with known/consensus outcomes, used to quantitatively assess manual scoring consistency across team members. |

| Barcode Scanner & Label Printer | The foundational tools for transitioning from high-risk manual labeling to lower-risk automated tracking, enabling direct testing of error rate improvements. |

| Electronic Data Capture (EDC) Tool (e.g., REDCap) | Allows creation of structured, validated digital forms to replace paper at the point of data generation, reducing initial entry errors. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our RNA-seq data from a clinical trial shows inconsistent gene expression counts between replicates. What are the primary verification steps? A1: This often stems from sample quality or alignment issues. Follow this protocol:

- Verify RNA Integrity Number (RIN): All samples must have RIN > 7. Re-run Bioanalyzer if below.

- Re-check Adapter Trimming: Use FastQC to confirm adapter removal. Re-trim with Trimmomatic using parameters: `ILLUMINACLIP:TruSeq3-PE.fa:2:30:10 LEADING:3 TRAILING:3 SLIDINGWINDOW:4:15 MINLEN:36`.

- Re-align with Updated Index: Re-align reads to the primary assembly (e.g., GRCh38) using STAR with a consistent genome index. Quantify with featureCounts.

- Check for Batch Effects: Use a PCA plot in R (`plotPCA` from DESeq2) to see if replicates cluster.

Q2: How do we resolve batch effects when integrating proteomics data from multiple high-throughput screening runs? A2: Batch correction is critical for integration. Apply this methodology:

- Normalization: First, perform median normalization within each run.

- Batch Effect Detection: Create an MDS plot using log2-transformed protein intensities to visualize run clustering.

- Apply Correction Algorithm: Use the `ComBat` function from the `sva` R package, specifying the run ID as the batch.

- Verification: Re-plot MDS; batches should now intermingle. Confirm with a linear model checking if 'run' is a significant covariate post-correction.

Q3: Our automated flow cytometry data from a compound screen has high background fluorescence, obscuring positive hits. How to troubleshoot? A3: High background typically indicates reagent or wash issues.

- Protocol Step Review:

- Check fluorochrome-conjugated antibody titration. Excess antibody causes high background. Re-titrate using a negative control cell population.

- Increase wash steps: After antibody incubation, wash cells twice with 2 mL of FACS buffer (PBS + 2% FBS), centrifuging at 300g for 5 min.

- Include a viability dye (e.g., Zombie NIR) to gate out dead cells that cause nonspecific binding.

- Verify instrument calibration using CST beads daily.

- Data Re-analysis: Re-gate using fluorescence-minus-one (FMO) controls to set accurate positive boundaries.

Q4: When linking clinical trial outcomes (e.g., response) to genomic variants, our variant calling pipeline yields a high false-positive rate. How to improve accuracy? A4: High false positives often arise from inappropriate filtering. Implement this verification workflow:

- Re-run Calling with Duplicate Marking: Ensure you marked PCR duplicates with Picard `MarkDuplicates` BEFORE variant calling.

- Apply Strict Hard Filtering: For germline SNPs (GATK), apply the filter expression `QD < 2.0 || FS > 60.0 || MQ < 40.0 || MQRankSum < -12.5 || ReadPosRankSum < -8.0`. For somatic calls (Mutect2), use the recommended `FilterMutectCalls`.

- Benchmark Against a Known Panel: Use a truth set like Genome in a Bottle (GIAB) for the specific genome region to calculate your pipeline's precision and recall. Adjust filters accordingly.
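To make the filter logic and the benchmarking step concrete, the sketch below evaluates the germline SNP hard-filter expression on annotation dictionaries and then scores the surviving calls against a truth set. It is an illustration only: real pipelines apply these filters with GATK itself, variants here are hypothetical tuples of (chromosome, position, ref, alt), and treating a missing `MQRankSum` or `ReadPosRankSum` annotation as not failing is an assumption of this sketch.

```python
def fails_snp_hard_filter(ann):
    """True if a variant matches the hard-filter expression quoted in the text."""
    return (
        ann["QD"] < 2.0
        or ann["FS"] > 60.0
        or ann["MQ"] < 40.0
        # Rank-sum annotations can be absent; absent values do not fail here.
        or ann.get("MQRankSum", 0.0) < -12.5
        or ann.get("ReadPosRankSum", 0.0) < -8.0
    )


def precision_recall(calls, truth):
    """Precision and recall of a set of variant keys against a truth set."""
    tp = len(calls & truth)
    fp = len(calls - truth)
    fn = len(truth - calls)
    return tp / (tp + fp), tp / (tp + fn)


# Hypothetical variants with their annotations.
variants = [
    {"key": ("chr1", 1000, "A", "G"), "QD": 15.2, "FS": 1.1, "MQ": 60.0,
     "MQRankSum": 0.2, "ReadPosRankSum": 0.5},
    {"key": ("chr1", 2000, "C", "T"), "QD": 1.4, "FS": 3.0, "MQ": 60.0},   # low QD
    {"key": ("chr2", 3000, "G", "A"), "QD": 12.0, "FS": 75.0, "MQ": 58.0},  # high FS
    {"key": ("chr2", 4000, "T", "C"), "QD": 20.0, "FS": 0.0, "MQ": 60.0},
]
passing = {v["key"] for v in variants if not fails_snp_hard_filter(v)}

# Hypothetical truth set for the same region.
truth = {("chr1", 1000, "A", "G"), ("chr2", 4000, "T", "C"), ("chr3", 5000, "G", "C")}
precision, recall = precision_recall(passing, truth)
print(f"precision = {precision:.2f}, recall = {recall:.2f}")
```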

Q5: In metabolomics, internal standards are inconsistently detected across LC-MS runs, affecting quantification. What is the fix? A5: This points to instrument instability or sample preparation error.

- Immediate Actions:

- Prepare a fresh stock of internal standard mix from a certified vendor.

- Clean the ion source and MS inlet according to manufacturer specifications.

- Re-run a system suitability test (SST) sample to check peak shape and intensity.

- Revised Sample Prep Protocol: Spike the internal standard mix after protein precipitation but before the drying/reconstitution step to control for losses in that phase. Vortex for 1 minute, then centrifuge.

Data Summaries

Table 1: Common Data Discrepancy Causes and Verification Tools

| Data Type | Top Pain Point | Primary Verification Tool/Metric | Acceptance Threshold |

|---|---|---|---|

| RNA-seq | Batch effects & poor replicate correlation. | Principal Component Analysis (PCA) plot; Pearson correlation between replicates. | Replicates: R² > 0.85. |

| WES/WGS | High false positive variant calls. | Precision/Recall vs. GIAB truth set. | Precision > 0.95, Recall > 0.90. |

| Flow Cytometry | High background, poor population resolution. | Signal-to-Noise Ratio (SNR); FMO control gating. | SNR > 5 for target population. |

| LC-MS Metabolomics | Retention time drift & intensity variance. | Relative Standard Deviation (RSD) of internal standards. | RSD < 15% across runs. |

| HTS Compound Screen | Low Z'-factor, high hit variability. | Z'-factor and SSMD (Strictly Standardized Mean Difference). | Z' > 0.5, \|SSMD\| > 3 for hits. |

Experimental Protocols

Protocol 1: Verification of Differential Gene Expression from Clinical Trial RNA-seq Objective: To confirm reported DEGs are not technical artifacts. Method:

- Re-download Raw Reads: Obtain original SRA files.

- Independent Alignment & Quantification: Use a different aligner (e.g., Kallisto for pseudo-alignment) than originally used.

- Differential Expression Analysis: Run with `limma-voom` using the same clinical covariates.

- Validation: Compare gene lists using rank-rank hypergeometric overlap test. Overlap of top 100 genes should be significant (p < 0.01).

Protocol 2: Cross-Platform Validation of a Genomic Biomarker Objective: Verify a WES-derived SNP biomarker using an orthogonal method. Method:

- Design PCR Primers: Flanking 150bp of the variant.

- Amplify: Use high-fidelity polymerase from 20ng of original gDNA.

- Sanger Sequencing: Purify PCR product and sequence with forward and reverse primers.

- Analysis: Manually inspect chromatograms in FinchTV against reported BED file.

Visualizations

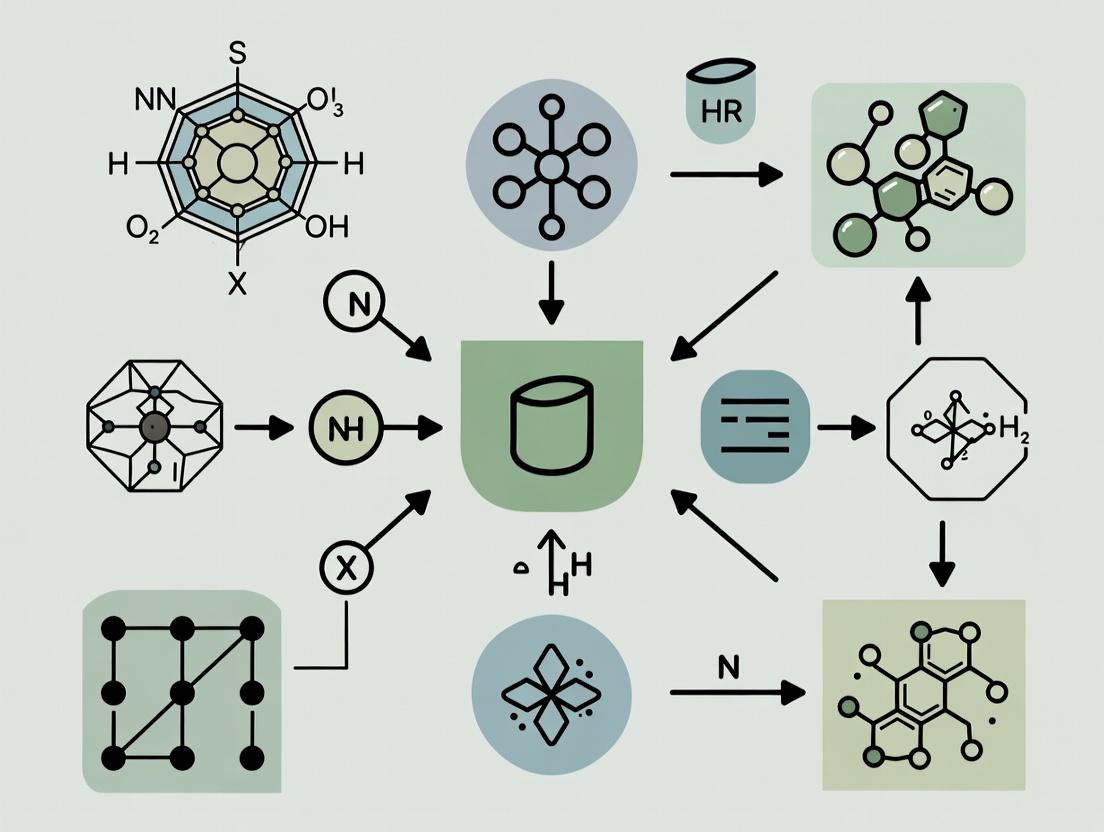

Title: Omics Data Verification Workflow

Title: Automated Data Verification Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for High-Throughput Data Verification

| Reagent/Material | Vendor Example | Function in Verification Protocol |

|---|---|---|

| ERCC RNA Spike-In Mix | Thermo Fisher Scientific | Exogenous controls for RNA-seq to assess technical accuracy & dynamic range. |

| Genome in a Bottle (GIAB) Reference Material | NIST | Provides benchmark truth set for validating germline variant calling pipelines. |

| Multiplex Fluorescence Calibration Beads | BD Biosciences | Daily calibration of flow cytometer lasers and fluorescence detectors. |

| Stable Isotope-Labeled Internal Standards (SILIS) | Cambridge Isotopes | Absolute quantification and detection normalization in mass spectrometry. |

| Cell Viability Dye (e.g., Zombie NIR) | BioLegend | Distinguishes live from dead cells to reduce nonspecific antibody binding in screens. |

| PCR-free Library Prep Kit | Illumina, Roche | Reduces duplicate reads and bias in WGS for more accurate variant detection. |

Technical Support Center

Troubleshooting Guides & FAQs

Data Capture & Recording

Q1: Our electronic lab notebook (ELN) is flagging entries as "incomplete" even after saving. What should we check? A: This is often a metadata issue. Verify that the ALCOA+ principle of "Contemporaneous" recording is fully satisfied. The system may require:

- Timestamp Verification: Ensure the device/system clock is synchronized with the organization's master time server (NTP).

- Mandatory Field Check: All fields marked as required by your study protocol (e.g., analyst initials, sample ID, instrument ID) must be populated before closing the record.

- Audit Trail Generation: Confirm the save operation triggers a valid, non-editable audit trail entry. Check system logs for errors during this process.

Q2: We are seeing inconsistent data formats from the same HPLC instrument across different runs. How can we resolve this? A: This impacts the "Consistent" and "Accurate" principles. Follow this protocol:

- Instrument Method Verification: Re-validate the instrumental method. Ensure all method parameters (e.g., column temperature, flow rate, gradient table) are saved as a digital master method and cannot be manually overridden without authorization.

- Data Output Standardization: Configure the instrument software to output data files in a single, standardized format (e.g., `.cdf` or standardized `.txt`) using a locked template.

- Pre-Run Calibration Check: Implement a system suitability test (SST) protocol that must be passed and recorded in the ELN before sample analysis begins. Data from failed SSTs should be automatically segregated.

Data Processing & Analysis

Q3: During statistical analysis, we discovered an outlier. What are the ALCOA+-compliant steps to investigate it? A: Any data exclusion must be traceable, attributable, and justified.

- Document Initial Finding: In the ELN, create a new investigation entry linked to the original dataset. Attach the statistical plot highlighting the outlier.

- Review Raw Data: Retrieve and review the raw instrumental data (e.g., chromatogram, spectrum) for the suspected outlier. Compare it with the raw data for other samples.

- Check Process Metadata: Review the contemporaneous metadata: Were there any noted deviations during that sample's preparation or analysis? Check instrument logs for errors.

- Apply Pre-Defined Rule: If your approved protocol uses a pre-defined statistical test for outlier removal (e.g., Grubbs' test), apply it and document the result.

- Decision & Audit Trail: Record the final decision (include/exclude) with justification. The entire investigation must be captured in the audit trail.

Q4: How do we ensure calculations in spreadsheets are accurate and verifiable? A: Spreadsheets are high-risk for errors. Robust verification is required.

- Validation & Locking: Use only validated spreadsheet templates with locked formulas, protected cells, and controlled access.

- Input Verification: Implement drop-down lists and data validation rules (e.g., date ranges, numeric limits) for all manual entry cells.

- Independent Verification Protocol: A second scientist must perform one of the following and document the check:

- Manually recalculate a subset of results using a certified calculator.

- Reproduce the calculation using a separate, pre-validated tool or script.

- Perform a source data verification (SDV), tracing final results back to the raw data input.

System & Security

Q5: An audit found that some deleted files were not recoverable from our data acquisition system. How do we fix this? A: This is a critical breach of the "Original" and "Available" principles. Immediate action is needed.

- Access Review: Restrict all "delete" permissions to system administrators only. Users should only be able to "retire" or "flag as invalid" data, with a mandatory reason entry.

- Backup & Archive Verification:

- Confirm automated daily backups are running.

- Perform a monthly test to restore a sample of data from the backup to verify integrity and completeness.

- System Configuration Change: Work with IT/Vendor to disable the physical deletion of data files for a defined retention period. Data should be moved to a secure, partitioned archive.

Experimental Protocol: Automated Verification of Chromatographic Integration

Aim: To reduce expert workload in manual peak review by implementing a rule-based, automated verification step for chromatographic data integrity.

Methodology:

- Data Acquisition: Acquire data using a validated HPLC method. Raw data files (.cdf) are automatically saved to a secure network drive with read-only access for analysts.

- Automated Processing: Data is processed using a locked and validated integration method in the CDS (Chromatography Data System).

- Rule-Based Verification Script Execution: A pre-approved Python script (see key reagents) is automatically triggered.

- Input: Exported integration results table (.csv) and audit trail log from the CDS.

- Rules Checked:

- Peak Asymmetry (As): 0.8 < As < 1.8.

- Signal-to-Noise Ratio (S/N): S/N > 10 for API peaks.

- Retention Time (RT) Drift: RT deviation < ±0.1 min from SST standard.

- Injection Volume: Matches preset value in method.

- Audit Trail Scan: Checks for any "integration parameters manually changed" entries post-run.

- Output & Triage:

- The script generates a verification report (see Table 1).

- PASS: All parameters within limits. Data is flagged "Verified - No Review" and proceeds.

- FLAG: 1-2 parameters out of limit. Data is flagged "Verification Review Required" and sent to a senior scientist for a focused review of the flagged parameters.

- FAIL: >2 parameters out of limit or critical failure (e.g., no peak). Data is flagged "Invalid - Investigation Required".
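The rules and triage logic above can be sketched in a few lines of Python. This is a minimal illustration, not the validated script itself: the `PeakResult` fields, the `verify` function, and the way injection volume and audit trail entries are represented are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class PeakResult:
    """One row of the exported integration table (field names are hypothetical)."""
    sample_id: str
    asymmetry: float
    signal_to_noise: float
    rt_drift_min: float        # deviation from the SST standard, in minutes
    injection_volume_ul: float
    manual_changes: int        # post-run "integration parameters manually changed" entries
    peak_found: bool = True


def verify(result: PeakResult, preset_volume_ul: float) -> tuple[str, list[str]]:
    """Apply the five rules and return (verdict, names of failed rules)."""
    if not result.peak_found:
        return "FAIL", ["no peak"]  # critical failure, regardless of other rules
    checks = {
        "asymmetry": 0.8 < result.asymmetry < 1.8,
        "s/n": result.signal_to_noise > 10,
        "rt drift": abs(result.rt_drift_min) < 0.1,
        "injection volume": math.isclose(result.injection_volume_ul, preset_volume_ul),
        "audit trail": result.manual_changes == 0,
    }
    failed = [name for name, ok in checks.items() if not ok]
    if not failed:
        return "PASS", failed
    if len(failed) <= 2:
        return "FLAG", failed   # 1-2 parameters out of limit
    return "FAIL", failed       # more than 2 parameters out of limit
```

Returning the list of failed rules alongside the verdict lets the report tell the reviewer which parameters to look at, rather than only that something failed.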

Table 1: Automated Verification Output Summary

| Sample ID | Peak Asymmetry | S/N Ratio | RT Drift (min) | Audit Trail Anomaly | Overall Verdict |

|---|---|---|---|---|---|

| STD-1 | 1.2 | 125 | +0.02 | None | PASS |

| Test-45 | 1.9 | 87 | -0.05 | None | FLAG (Asymmetry) |

| Test-46 | 0.7 | 9 | +0.15 | Integration Adjusted | FAIL |

Visualization: Automated Data Verification Workflow

Title: Automated Data Verification Triage Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Automated Data Verification

| Item | Function in Verification | Example/Note |

|---|---|---|

| Chromatography Data System (CDS) | Primary data acquisition and processing system. Must have full audit trail and electronic signature capabilities. | Waters Empower, Thermo Chromeleon, Agilent OpenLab. |

| Electronic Lab Notebook (ELN) | Centralized, attributable record of all processes, protocols, and results. Links raw data to metadata. | IDBS E-WorkBook, Benchling, LabArchives. |

| Rule-Based Verification Script | Executes pre-defined ALCOA+ checks on data exports, reducing manual review workload. | Python script with Pandas/NumPy; R script. Must be validated. |

| System Suitability Test (SST) Standards | Certified reference material used to verify instrument performance is within specified limits before sample analysis. | USP-grade reference standards. |

| Secure, Versioned Code Repository | Maintains integrity, version control, and attribution for all automated verification scripts (GxP compliant). | Git (with regulated hosting, e.g., GitHub Enterprise, GitLab). |

| Validated Spreadsheet Template | Pre-validated, locked-down spreadsheet for performing standardized calculations or summarizing verification results. | Microsoft Excel template with locked cells, defined inputs/outputs, and a validation report. |

From Theory to Pipeline: Implementing Automated Verification Tools and Workflows

Technical Support Center

Troubleshooting Guides

Q1: My automated rule for checking clinical trial data ranges is flagging valid data points. What could be wrong? A1: This is often caused by a mismatch between the data source format and the rule's expected format. Follow this diagnostic protocol:

- Check Data Parsing: Verify the input data type (e.g., text vs. number). A value like "12.5" may be read as text, causing a failure against a numeric rule of `10 < value < 20`.

- Audit the Dynamic Range Logic: If your range is dynamically set based on control group statistics (e.g., Mean ± 3SD), recalculate these statistics manually on a sample of the control data to ensure the automation is computing them correctly.

- Inspect Pre-Processing Steps: Ensure any data normalization or transformation steps occur before the range check rule is applied.

Q2: How can I prevent rule conflicts when multiple validation checks (format, range, logic) run on the same dataset? A2: Rule conflicts arise from undefined execution order. Implement a sequential workflow:

- Layer 1 - Format & Completeness: Run checks for missing values, date formats (YYYY-MM-DD), and identifier syntax first. This ensures data is structured for downstream rules.

- Layer 2 - Plausible Range Checks: Apply static (e.g., adult heart rate > 30 bpm) and dynamic range rules (e.g., per-study baseline deviations).

- Layer 3 - Cross-Field Logic Checks: Finally, run relational rules (e.g., `Visit Date` must be ≥ `Consent Date`; `Treatment End` must be populated if `Status` is "Completed"). A cascading approach prevents a logic rule from failing due to a prior uncaught format error.
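One way to enforce that execution order is to have each layer return early when it finds a problem, so later layers only ever see well-formed input. The sketch below assumes a record is a plain dictionary; the field names (`subject_id`, `consent_date`, `visit_date`, `heart_rate`) and the function name are illustrative only.

```python
import re
from datetime import date

ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def validate_record(rec: dict) -> list[str]:
    """Run format, range, then logic checks; stop at the first layer that fails."""
    # Layer 1: format and completeness
    errors = []
    for field in ("subject_id", "consent_date", "visit_date", "heart_rate"):
        if rec.get(field) in (None, ""):
            errors.append(f"missing {field}")
    for field in ("consent_date", "visit_date"):
        value = rec.get(field)
        if value and not ISO_DATE.match(str(value)):
            errors.append(f"{field} is not YYYY-MM-DD")
    if errors:
        return errors

    # Layer 2: plausible range (text such as "72" is converted before comparing)
    try:
        heart_rate = float(rec["heart_rate"])
    except (TypeError, ValueError):
        return ["heart_rate is not numeric"]
    if not heart_rate > 30:
        return ["heart_rate out of range"]

    # Layer 3: cross-field logic, safe to run because dates are already well-formed
    try:
        consent = date.fromisoformat(rec["consent_date"])
        visit = date.fromisoformat(rec["visit_date"])
    except ValueError:
        return ["date is not a valid calendar date"]
    if visit < consent:
        return ["visit_date precedes consent_date"]
    return []
```

Because a malformed date is reported in Layer 1, the date comparison in Layer 3 never raises a misleading logic error for what is really a format problem.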

Q3: My dynamic reference range update isn't triggering when new control batch data is added. How do I fix this? A3: This indicates an automation workflow failure. Follow these steps:

- Step 1: Confirm the rule is configured to listen to the correct data range. The source range must be defined as a dynamic named range or table object (e.g., an Excel Table or a range built with the `INDIRECT()` function) that expands automatically.

- Step 2: Verify the calculation setting. The application (e.g., Excel, R script, Python pipeline) must be set to calculate formulas/scripts automatically on data change.

- Step 3: For script-based tools (Python/R), ensure the function is triggered by an event (like saving a file) or is part of a scheduled re-execution pipeline.

Frequently Asked Questions (FAQs)

Q: What are the most critical validations to automate first in pharmacokinetic (PK) data review? A: Priority should be given to rules that reduce manual, repetitive scrutiny:

- Sample Timing Logic: Verify `Dose Time` < `Sample Collection Time` for all PK samples.

- Concentration Unit Consistency: Flag any records where the `Unit` field deviates from the standard (e.g., `ng/mL` vs. `μg/L`).

- Dynamic Range for Outliers: Automatically flag concentrations >3 standard deviations from the mean for a given timepoint and cohort, facilitating expert review of true anomalies.
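The three checks can be prototyped with the standard library alone. In this sketch the record keys (`dose_time`, `sample_time`, `unit`), the function names, and the choice of `ng/mL` as the study standard are assumptions for illustration.

```python
import statistics
from datetime import datetime

STANDARD_UNIT = "ng/mL"  # assumed study standard


def check_pk_record(rec: dict) -> list[str]:
    """Timing and unit checks for a single PK sample record."""
    issues = []
    dose = datetime.fromisoformat(rec["dose_time"])
    sample = datetime.fromisoformat(rec["sample_time"])
    if not dose < sample:
        issues.append("sample collected at or before dose")
    if rec["unit"] != STANDARD_UNIT:
        issues.append(f"non-standard unit: {rec['unit']}")
    return issues


def flag_outliers(values: list[float], n_sd: float = 3.0) -> list[int]:
    """Indices of values more than n_sd sample SDs from the mean.

    Call once per timepoint and cohort. With a sample SD, no point can sit
    more than (n-1)/sqrt(n) SDs from the mean, so a 3 SD rule can only fire
    when the group has at least 11 values.
    """
    if len(values) < 2:
        return []
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    if sd == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mean) > n_sd * sd]
```

The small-group limitation in the docstring is worth keeping in mind when cohorts are small: a rule that can never fire looks like clean data.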

Q: Can rule-based automation handle complex biological logic, like pathway feedback checks?

A: Yes, but it requires breaking down the biology into discrete logical statements. For example, a rule for an inhibition assay might state: `IF (Inhibitor_Concentration > 0) THEN (Target_Activity_Max <= Baseline_Activity_Max)`. Unexpected failures prompt expert investigation into potential assay interference or novel biology, so experts spend their time on true exceptions rather than on every record.

Q: How do I quantify the workload reduction from implementing these automated checks? A: Measure the time spent on initial data review before and after implementation. Key metrics to track are shown in the table below.

Data Presentation: Impact of Rule-Based Automation

Table 1: Workload Reduction Metrics in a Pilot Clinical Data Review Study

| Metric | Pre-Automation (Manual Check) | Post-Automation (Rule-Based) | % Reduction |

|---|---|---|---|

| Avg. Time to Initial QC per Dataset | 4.5 hours | 1.2 hours | 73.3% |

| Common Format Errors Missed in 1st Pass | 15.2% | 0.8% | 94.7% |

| Time Spent on Outlier Identification | 2.0 hours | 0.5 hours | 75.0% |

| Researcher Satisfaction Score (1-10) | 3.5 | 8.2 | +134% |

Experimental Protocols

Protocol 1: Establishing Dynamic Reference Ranges for Plate-Based Assays

Objective: To automate the flagging of outlier technical replicates in ELISA or cell viability assays.

Methodology:

- For each plate, designate control wells (positive, negative, vehicle).

- Calculate the mean (`μ`) and standard deviation (`σ`) of the control replicates.

- Dynamic Rule Setup: Program the validation rule to flag any experimental well value where `|value - μ_control| > 3*σ_control`.

- The rule's parameters (`μ`, `σ`) update automatically for each new plate analyzed based on its own control wells.

- Flagged wells are presented for expert review, rather than requiring manual Z-score calculation for all data points.
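A minimal sketch of this per-plate rule follows. The function name and the dictionary layout (well label mapped to reading) are illustrative assumptions; the sample standard deviation is used here, which is one reasonable choice for a handful of control wells.

```python
import statistics


def flag_wells(control_values, experimental_wells, n_sd=3.0):
    """Return the experimental wells whose value lies more than n_sd control SDs
    from the control mean. Both statistics are recomputed from this plate's own
    control wells, so the reference range updates with every plate."""
    mu = statistics.mean(control_values)
    sigma = statistics.stdev(control_values)  # sample SD; needs at least 2 controls
    return {
        well: value
        for well, value in experimental_wells.items()
        if abs(value - mu) > n_sd * sigma
    }
```

For example, with control readings of 1.0, 1.1, 0.9 and 1.0, a well reading 1.1 stays inside the range while a well reading 1.5 is returned for review.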

Protocol 2: Automated Format and Logic Validation for Electronic Lab Notebook (ELN) Entries

Objective: Ensure data integrity at the point of entry in an ELN.

Methodology:

- Format Rules: Configure field constraints (e.g., the `Date` field accepts only ISO format; `Project ID` must match the "PROJ-####" pattern).

- Logic Rules: Set cross-field dependencies (e.g., `Experiment End Date` cannot be before `Experiment Start Date`; the `Principal Investigator` field must be populated if `Risk Level` is "High").

- Upon form submission, rules execute instantly, providing the user with immediate, specific feedback (e.g., "Invalid Date Format"), preventing error propagation and saving downstream correction time.
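Most ELNs express these rules through their own form configuration, but the logic is easy to prototype. The sketch below assumes the form arrives as a dictionary with hypothetical keys (`start_date`, `end_date`, `project_id`, `risk_level`, `principal_investigator`) and returns the messages that would be shown to the user.

```python
import re
from datetime import date

PROJECT_ID = re.compile(r"^PROJ-\d{4}$")  # the "PROJ-####" pattern


def validate_entry(entry: dict) -> list[str]:
    """Return user-facing messages; an empty list means the entry is accepted."""
    messages = []
    dates = {}
    # Format rules
    for field in ("start_date", "end_date"):
        try:
            dates[field] = date.fromisoformat(entry.get(field))
        except (TypeError, ValueError):
            messages.append(f"Invalid Date Format: {field}")
    if not PROJECT_ID.match(str(entry.get("project_id") or "")):
        messages.append("Invalid Project ID")
    # Logic rules (the date comparison only runs when both dates parsed)
    if len(dates) == 2 and dates["end_date"] < dates["start_date"]:
        messages.append("Experiment End Date is before Experiment Start Date")
    if entry.get("risk_level") == "High" and not entry.get("principal_investigator"):
        messages.append("Principal Investigator is required when Risk Level is High")
    return messages
```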

Visualizations

Title: Three-Layer Rule-Based Validation Workflow

Title: Dynamic Range Update Automation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Implementing Automated Data Checks

| Item | Function in Rule-Based Automation Context |

|---|---|

| Electronic Lab Notebook (ELN) with API | Primary data entry source; APIs enable automated extraction of raw data for validation scripts. |

| Scripting Environment (e.g., Python/R/Jupyter) | Core platform for writing, testing, and deploying custom validation rule scripts on datasets. |

| Validation Framework Software (e.g., Great Expectations, dataMaid) | Pre-built tools for defining, managing, and documenting data quality rules and expectations. |

| Dynamic Named Ranges (Excel) / DataFrames (Pandas) | Data structures that automatically adjust their bounds as data is added, crucial for dynamic rules. |

| Version Control System (e.g., Git) | Tracks changes to validation rule scripts, allowing audit trails and collaborative rule development. |

| Laboratory Information Management System (LIMS) | Centralized sample/data tracking; integration point for automating sample-based logic checks. |

Leveraging Machine Learning for Anomaly Detection and Pattern Recognition

Technical Support Center: Troubleshooting ML Models in Scientific Data Verification

This support center is for researchers applying ML to detect anomalies and recognize patterns in experimental data (e.g., high-throughput screening, microscopy, spectral analysis), with the aim of reducing expert workload in data verification.

FAQs & Troubleshooting Guides

Q1: My supervised classification model for identifying anomalous cell assay images has high training accuracy but poor performance on new validation data. What could be wrong? A: This indicates overfitting. Your model has memorized the training set noise instead of learning generalizable patterns.

- Solution Checklist:

- Data Augmentation: Artificially expand your training set with transformations (rotation, flip, minor contrast adjustments) to improve robustness.

- Regularization: Increase dropout rates or L2 regularization penalties in your neural network.

- Simplify Architecture: Reduce the number of model parameters or layers.

- Cross-Validation: Use k-fold cross-validation to ensure performance consistency across all data subsets.

- Protocol - k-Fold Cross-Validation:

- Randomly shuffle your annotated dataset and split it into k equal-sized folds (typically k=5 or 10).

- For each unique fold: a) Use it as the validation set. b) Train the model on the remaining k-1 folds.

- Calculate the performance metric (e.g., F1-score) for each fold.

- The final model performance is the average of all k results. This prevents bias from a single train-validation split.
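The splitting and averaging steps of this protocol can be written without any ML library. In the sketch below, `train_and_score` is a hypothetical callback supplied by the user: it receives the training and validation indices, trains a fresh model, and returns the chosen metric (e.g., F1-score).

```python
import random
import statistics


def k_fold_indices(n_samples, k=5, seed=0):
    """Shuffle once, split into k near-equal folds, and yield (train_idx, val_idx)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, val_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, val_idx


def cross_validate(n_samples, train_and_score, k=5, seed=0):
    """Return (mean score, per-fold scores) over the k folds."""
    scores = [train_and_score(tr, va) for tr, va in k_fold_indices(n_samples, k, seed)]
    return statistics.mean(scores), scores
```

Looking at the per-fold scores, not just their mean, is useful here: a large spread between folds is itself a sign that the model is sensitive to which samples it saw.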

Q2: My unsupervised anomaly detection model (e.g., Isolation Forest) flags too many "normal" data points as outliers in my HPLC chromatogram dataset. A: The model's sensitivity (contamination parameter) is likely set too high for your domain's acceptable noise level.

- Solution Steps:

- Expert Review: Manually review a sample of the flagged points with a domain scientist to confirm false positives.

- Parameter Tuning: Systematically adjust the `contamination` (or equivalent) parameter, which is the expected proportion of outliers in the data.

- Feature Engineering: Re-evaluate your input features. Noisy or irrelevant features can confuse the model. Apply Principal Component Analysis (PCA) for dimensionality reduction and cleaner separation.

- Model Ensemble: Combine scores from multiple unsupervised algorithms (e.g., Isolation Forest, Local Outlier Factor) and flag only points where consensus exists.

- Protocol - Parameter Grid Search for Anomaly Detection:

- Define a range for the critical parameter (e.g., `contamination`: [0.01, 0.05, 0.1]).

- For each value, train the model on a clean subset of data (verified by an expert).

- Evaluate on a separate, partially labeled validation set containing known normal and anomalous samples.

- Select the parameter value that best balances precision (minimizing false positives) and recall (catching true anomalies).
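The selection step can be illustrated with a simplified stand-in. Instead of retraining a detector for each candidate, the sketch below takes anomaly scores that some detector has already produced for the labeled validation set, flags the top fraction given by each candidate value, and picks the candidate with the best F1-score (one common way to balance precision and recall). The function name and inputs are assumptions; in a real grid search each candidate would drive a separate model fit.

```python
def select_contamination(scores, labels, candidates=(0.01, 0.05, 0.1)):
    """scores: higher means more anomalous; labels: True for expert-confirmed anomalies.

    Returns (best candidate, {candidate: (precision, recall, f1)}).
    """
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_anomalies = sum(labels)
    results = {}
    for c in candidates:
        n_flag = max(1, round(c * len(scores)))   # flag the top fraction c
        flagged = ranked[:n_flag]
        tp = sum(1 for i in flagged if labels[i])
        precision = tp / n_flag
        recall = tp / n_anomalies if n_anomalies else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        results[c] = (precision, recall, f1)
    best = max(results, key=lambda c: results[c][2])
    return best, results
```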


Q3: How can I quantify the reduction in expert workload after implementing an ML-assisted verification pipeline? A: You must establish baseline metrics and track key performance indicators (KPIs) before and after implementation.

- Quantitative Measurement Table:

| Metric | Description | How to Measure |

|---|---|---|

| Manual Review Rate | % of total data points requiring expert review. | (Points flagged by ML) / (Total Data Points) |

| False Discovery Rate (FDR) | % of ML-flagged points that are normal upon expert check. | (False Positives) / (Total ML Flags) |

| False Negative Rate (FNR) | % of expert-found anomalies missed by ML. | (False Negatives) / (Expert-Confirmed Anomalies) |

| Time Saved per Experiment | Reduction in expert hours spent on initial data screening. | (Avg. manual screening time) - (Avg. time reviewing ML flags) |

| Throughput Increase | Increase in datasets processed per unit time. | (Datasets processed post-ML) / (Datasets processed pre-ML) |

- Protocol - A/B Testing for Workload Reduction:

- Baseline Phase: For N experimental datasets, experts perform a full manual verification. Record time and anomaly findings.

- ML-Assisted Phase: For the next N comparable datasets, the ML model pre-screens data, flagging suspicious points. Experts review only the ML flags plus a random 5% sample for quality control.

- Comparison: Compare the time spent and anomalies found between the two phases using the KPIs in the table above.
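The comparison step reduces to a handful of ratios. The helper below is a sketch with hypothetical argument names; it computes the review rate, the share of ML flags that turned out to be normal, the share of confirmed anomalies the model missed, and the hours saved, following the formulas in the table above.

```python
def workload_kpis(total_points, ml_flags, false_positives, false_negatives,
                  confirmed_anomalies, manual_hours, assisted_hours):
    """Compute workload KPIs from counts recorded in the two phases."""
    return {
        # share of all data points an expert still has to look at
        "manual_review_rate": ml_flags / total_points,
        # share of ML flags that were normal on expert check
        "false_flag_fraction": false_positives / ml_flags if ml_flags else 0.0,
        # share of expert-confirmed anomalies the model did not flag
        "false_negative_rate": (false_negatives / confirmed_anomalies
                                if confirmed_anomalies else 0.0),
        # expert hours saved on initial screening
        "time_saved_hours": manual_hours - assisted_hours,
    }
```

The random 5% quality-control sample in the ML-assisted phase is what makes the false-negative count measurable at all, since missed anomalies can only be found in data the model did not flag.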

Q4: The patterns identified by my clustering algorithm in gene expression data do not align with known biological pathways. How should I proceed? A: The model may be driven by technical artifacts or dominant non-informative variables rather than biological signal.

- Solution Workflow:

- Normalize & Scale: Apply per-gene scaling (e.g., Z-score standardization) to ensure no single gene dominates due to mere expression magnitude.

- Feature Selection: Use domain knowledge (e.g., select genes from relevant pathways) or automated methods (e.g., variance threshold) to filter noise.

- Dimensionality Reduction: Use t-SNE or UMAP for visualization to see if clusters emerge in 2D/3D before complex clustering.

- Validate with External Data: Test if your clusters are preserved in a separate, independent dataset from a similar experiment.

The Scientist's Toolkit: Research Reagent Solutions for ML Experiments

| Item / Solution | Function in ML for Data Verification |

|---|---|

| Jupyter Notebook / Python/R | Interactive environment for developing, testing, and documenting ML analysis pipelines. |

| Scikit-learn | Provides ready-to-use implementations of classic ML algorithms for classification, regression, and clustering. |

| TensorFlow / PyTorch | Frameworks for building and training complex deep learning models (e.g., CNNs for image anomaly detection). |

| MLflow / Weights & Biases | Platforms for tracking experiments, parameters, metrics, and models to ensure reproducibility. |

| Pandas / NumPy | Libraries for structured data manipulation and numerical computations on tabular and array data. |

| OpenCV / Scikit-image | Libraries for pre-processing and augmenting image-based data (e.g., microscopy, assays). |

| Domain-Specific Ontologies | Structured vocabularies (e.g., Gene Ontology) to map ML-identified patterns to known biological concepts. |

| Synthetic Data Generators | Tools to create realistic artificial data for stress-testing models when real anomalous data is scarce. |

Visualizations

ML-Assisted Data Verification Workflow

A/B Test Protocol for Workload Reduction

Application Programming Interfaces (APIs) for Real-Time Source Data Verification

Technical Support Center

Troubleshooting Guides

Issue: API Authentication Failures During Long-Running Experiments

Symptoms: 401 Unauthorized or 403 Forbidden errors after initial successful connection; intermittent token expiry during batch processing.

Diagnosis & Resolution:

- Check Token Lifecycle: The default 1-hour OAuth 2.0 token may expire. Implement a token refresh mechanism using the provided `refresh_token` grant type before the `expires_in` period (typically 3600 seconds) elapses.

- Verify Scope Permissions: Ensure the API key or service account has the necessary scopes (`verification.read`, `verification.write`, `data.source`).

- Protocol: Wrap token handling in a small client-side helper that refreshes the token shortly before it expires, so long-running batches never send an expired token.
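A minimal sketch of such a helper is shown below. It is not taken from any official SDK: `TokenManager` and the `fetch_token` callback are hypothetical. The callback is assumed to perform the actual token request against the OAuth 2.0 token service and return the parsed JSON response, which carries the standard `access_token` and `expires_in` fields.

```python
import time
from typing import Callable, Optional


class TokenManager:
    """Caches an access token and refreshes it shortly before expiry."""

    def __init__(self, fetch_token: Callable[[], dict],
                 clock: Callable[[], float] = time.monotonic,
                 margin_s: float = 60.0):
        self._fetch = fetch_token      # hypothetical: calls the token endpoint
        self._clock = clock
        self._margin = margin_s        # refresh this many seconds early
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        """Return a valid token, fetching a new one if the cached one is near expiry."""
        if self._token is None or self._clock() >= self._expires_at - self._margin:
            payload = self._fetch()
            self._token = payload["access_token"]
            self._expires_at = self._clock() + float(payload.get("expires_in", 3600))
        return self._token
```

Calling `get()` immediately before every request keeps the refresh logic in one place; the injectable `clock` exists only so the expiry behaviour can be tested without waiting an hour.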

Issue: Handling Latency Spikes in Real-Time Verification Stream

Symptoms: Increased response times (>2s) from the verification endpoint; backlog in event queue processing; timeouts in instrument data submission.

Diagnosis & Resolution:

- Implement Exponential Backoff & Retry: Design your client to handle `429 Too Many Requests` and `503 Service Unavailable` errors gracefully.

- Protocol: Use a decorator for API calls with retry logic.
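One possible shape for such a decorator is sketched here. `ApiError` is a hypothetical exception that the wrapped call is assumed to raise with the HTTP status code attached; the delay doubles on each attempt, is capped at `max_delay`, and has random jitter added so that many clients do not retry in lockstep.

```python
import functools
import random
import time


class ApiError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status_code: int):
        super().__init__(f"HTTP {status_code}")
        self.status_code = status_code


def retry_with_backoff(max_attempts=5, base_delay=0.5, max_delay=30.0,
                       retry_on=(429, 503), sleep=time.sleep):
    """Retry the wrapped call on retryable statuses with exponential backoff."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except ApiError as exc:
                    # Give up on non-retryable statuses or on the last attempt
                    if exc.status_code not in retry_on or attempt == max_attempts:
                        raise
                    delay = min(max_delay, base_delay * 2 ** (attempt - 1))
                    sleep(delay + random.uniform(0, delay / 2))  # add jitter
        return wrapper
    return decorator
```

Errors such as 401 or 422 are deliberately not retried: repeating a request that is unauthorized or malformed only adds load without any chance of success.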

Issue: Data Schema Mismatch Errors

Symptoms: 422 Unprocessable Entity error with details indicating failed validation (e.g., "error": "Invalid value type for field 'plate_well_count'").

Diagnosis & Resolution:

- Pre-Validate Payload Locally: Before sending to the API, validate data against the latest JSON Schema published by the API provider.

- Protocol: Utilize a JSON Schema validator.

Frequently Asked Questions (FAQs)

Q1: What is the maximum payload size for a single POST request to the /verify endpoint?

A: The current limit is 6 MB per request. For larger datasets, such as bulk chromatogram verification, you must use the asynchronous /verify/job endpoint, which accepts up to 100 MB and returns a job ID for status polling.

Q2: How do we verify the integrity of data received via the real-time WebSocket stream?

A: Each message packet in the WebSocket stream includes a SHA-256 hash of its data field (encoded in Base64). The specification for calculating this hash is provided in the API documentation. You must recalculate the hash on receipt and compare it to the packet's integrity_hash field to confirm the data was not tampered with during transmission.
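The receive-side check might look like the sketch below. The exact byte representation to hash is defined by the API documentation, so two details here are assumptions: that the `data` field is a string hashed as UTF-8 bytes, and that `integrity_hash` holds the Base64 encoding of the raw SHA-256 digest.

```python
import base64
import hashlib
import hmac


def packet_is_intact(packet: dict) -> bool:
    """Recompute the hash of the data field and compare it to integrity_hash."""
    digest = hashlib.sha256(packet["data"].encode("utf-8")).digest()
    expected = base64.b64encode(digest).decode("ascii")
    # compare_digest avoids leaking how many leading characters matched
    return hmac.compare_digest(expected, packet["integrity_hash"])
```

Packets that fail the check should be rejected and logged rather than silently dropped, so that the gap remains visible in the audit trail.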

Q3: Our laboratory information management system (LIMS) triggers the verification API. How can we trace a specific result back to the original API call?

A: Always include a unique correlation_id (e.g., a UUID from your LIMS) in the X-Correlation-ID header of your request. This ID is returned in the response header and logged against the verification result in the audit trail. You can later query the audit endpoint with this ID to retrieve the full transaction chain.

Q4: During network segmentation, which specific domains and ports need to be whitelisted for the core verification services? A: You must whitelist the following endpoints:

- `api.verification.example.com:443` (HTTPS for REST API)

- `realtime.verification.example.com:9443` (WSS for WebSocket)

- `auth.api.example.com:443` (OAuth 2.0 token service)

Q5: What is the expected SLA for the Verification API, and how are outages communicated?

A: The service guarantees 99.5% monthly uptime for the REST API and 99.0% for the WebSocket stream. All planned maintenance is announced at least 72 hours in advance via a banner in the developer portal and emails to registered technical contacts. Real-time status is available at status.verification.example.com.

Experimental Data & Protocols

Quantitative Performance Data

Table 1: API Performance Metrics Under Load (Simulated 24-Hour Run)

| Metric | REST API (Synchronous) | WebSocket Stream (Asynchronous) | Notes |

|---|---|---|---|

| Mean Response Time | 124 ms | 18 ms | Measured at 95th percentile load |

| Data Throughput | 850 verifications/sec | 12,000 messages/sec | Peak sustained rate |

| Payload Size Limit | 6 MB/request | 2 MB/message | |

| Error Rate (5xx) | 0.07% | 0.12% | Under max simulated load |

| Authentication Latency | 210 ms | 350 ms (initial handshake) | OAuth 2.0 client credentials flow |

Table 2: Workload Reduction in Manual Verification Tasks (Pilot Study)

| Data Type | Manual Review Time (Pre-API) | API-Assisted Review Time | Reduction in Expert Time | Automation Confidence Score* |

|---|---|---|---|---|

| Clinical Trial Lab Results | 45 ± 12 min/batch | 8 ± 4 min/batch | 82% | 99.2% |

| Mass Spectrometry Peak Data | 90 ± 25 min/run | 15 ± 7 min/run | 83% | 98.7% |

| Genomic Sequence Alignment | 180 ± 40 min/sample | 22 ± 10 min/sample | 88% | 99.8% |

*Score generated by API's internal confidence algorithm, validated against expert ground truth.

Detailed Experimental Protocol: Validating API for Automated ELISA Assay Data Capture

Objective: To demonstrate the reduction in expert workload by integrating a real-time verification API directly with a microplate reader to automatically validate raw optical density (OD) data as it is generated.

Materials:

- Microplate reader with Ethernet/TCP/IP output.

- Verification API client (Python 3.10+).

- Control serum samples (positive, negative, calibrator).

- 96-well ELISA plate.

Methodology:

- Instrument Configuration: Configure the plate reader to send a JSON payload via HTTP POST to an internal relay server upon completion of each plate read cycle. The payload must include instrument ID, timestamp, plate barcode, and an array of well-by-well OD readings.

- Relay Server Setup: A lightweight relay server (Flask/FastAPI) receives the instrument payload. It immediately appends a unique `correlation_id` and `experiment_id` and forwards the request to the Verification API's `/verify/assay` endpoint.

- API Verification Rules: The API is configured with assay-specific rules:

- Range Checks: OD values must be between 0.0 and 4.0.

- Positive/Negative Control Validation: The mean OD of positive control wells must be >2.0 and exceed the mean negative control OD by a factor of 5.

- Calibrator Linear Fit: The OD values for the calibrator dilution series must have an R² > 0.98 when log-fit.
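For local testing, the three assay rules above can be reproduced in plain Python. This sketch makes two interpretive assumptions: "exceed by a factor of 5" is read as positive mean > 5 × negative mean, and "log-fit" is read as a straight-line fit of OD against log10(concentration). The function names and argument layout are illustrative, not the API's own implementation.

```python
import math


def r_squared(x, y):
    """Coefficient of determination for a simple linear fit of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy ** 2 / (sxx * syy)


def verify_plate(od_by_well, positive_wells, negative_wells, calibrators):
    """Return the names of failed rules.

    od_by_well: {well: OD}; calibrators: list of (concentration, OD) pairs.
    """
    failures = []
    if any(not 0.0 <= od <= 4.0 for od in od_by_well.values()):
        failures.append("range")
    pos_mean = sum(od_by_well[w] for w in positive_wells) / len(positive_wells)
    neg_mean = sum(od_by_well[w] for w in negative_wells) / len(negative_wells)
    if not (pos_mean > 2.0 and pos_mean > 5 * neg_mean):
        failures.append("controls")
    log_conc = [math.log10(conc) for conc, _ in calibrators]
    ods = [od for _, od in calibrators]
    if not r_squared(log_conc, ods) > 0.98:
        failures.append("calibrator fit")
    return failures
```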

- Real-Time Response Handling: The relay server parses the API response. If the `"verification_status"` is `"PASS"`, the data is automatically committed to the laboratory database. If the status is `"FLAG"`, the data is stored but an alert is sent to the scientist's dashboard for review. If `"FAIL"`, the instrument operator receives an immediate notification to repeat the read.

- Workload Measurement: The time spent by experts manually performing steps 3 and 4 in a traditional workflow is recorded for 50 plates and compared to the time spent reviewing only `"FLAG"` results in the API-integrated workflow.

Visualizations

Workflow for Real-Time Source Data Verification

Logic for Automated Verification & Workload Reduction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Components for Implementing Verification API

| Item | Function in the Experiment | Example/Product |

|---|---|---|

| API Client Library | Pre-built code to handle authentication, request formatting, retries, and error parsing for your programming language (e.g., Python, R, Java). | verification-api-client-python (Official SDK) |

| Mock API Server | A local simulator of the verification API for offline development and testing without consuming live quotas or sending test data to production. | local-verification-simulator (Docker image) |

| Schema Validator | A tool to validate your data payloads against the API's JSON Schema before sending, preventing unnecessary 422 errors. | Python: jsonschema library |

| Message Queue Buffer | A resilient queue (e.g., Redis, RabbitMQ) to decouple instruments from the API client, preventing data loss during network or API downtime. | Redis Streams |

| Correlation ID Generator | A utility to generate unique, version 4 UUIDs to tag every request for end-to-end traceability in the audit log. | Built-in libraries: Python uuid, R uuid. |

| Audit Log Query Tool | A command-line or graphical tool to fetch verification records by correlation_id, timestamp, or status for post-experiment analysis. | audit-fetcher (CLI tool from provider) |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: "My experiment's raw data file failed to upload to the LIMS, and the verification flag was not triggered. What steps should I take?"

Answer: This is often a file format or metadata mismatch. Follow this protocol:

- Check File Integrity: Verify the file is not corrupted and is in an accepted format (e.g., .csv, .fcs, .tiff). Use checksum validation if available.

- Review Metadata Tags: Ensure required metadata fields (e.g., Analyst ID, Instrument Serial #, Date) are complete and match the ELN template. The verification protocol cross-references these tags.

- Check Size Limits: Confirm the file does not exceed the system's upload size limit.

- Manual Verification Override: If the data is valid, use the "Manual Verification with Comment" function to flag for administrator review, documenting the reason for the failure.

FAQ 2: "The automated calculation script in my ELN is producing a result that differs from my manual calculation. How do I diagnose the issue?"

Answer: This discrepancy must be resolved before proceeding. Follow this diagnostic workflow:

- Audit Trail Review: Use the ELN's audit trail to view the exact raw data inputs captured by the script.

- Script Versioning: Check that you are using the correct, approved version of the calculation script. Previous versions may have had errors.

- Intermediate Value Check: If the tool allows, check intermediate calculation values within the script's logic.

- Isolate and Test: Create a test entry with simple, known values to verify the script's core arithmetic functions.

FAQ 3: "A colleague cannot replicate my experimental workflow from my ELN entry. What are the common points of failure in shared protocols?"

Answer: Incomplete protocol capture is a major source of irreproducibility. Ensure your ELN entry includes:

- Reagent Lot Numbers: These are critical and often omitted. Use the ELN's reagent tracking module to link directly to inventory.

- Instrument Settings Profile: Export and attach the specific instrument method/profile, not just the name.

- Deviations: Document any minor deviations from the standard SOP in the "Procedure Notes" section. The verification system flags entries with no deviations recorded for complex protocols as "Requires Review."

FAQ 4: "The system is flagging all my data entries for 'Secondary Review,' increasing my workload. How can I reduce this?"

Answer: Built-in verification rules are designed to catch anomalies. Frequent flags suggest a systematic issue.

- Review Verification Rules: Access the "Verification Settings" for your project. Common triggers include: data points outside 2 standard deviations of historical controls, missing environmental data (e.g., temperature, humidity for cell culture), or skipped calibration steps.

- Calibration Check: Ensure your instruments have up-to-date calibration certificates logged in the LIMS.

- Control Sample Values: If your control sample results are drifting, it may trigger a rule. Investigate reagent degradation or instrument performance.

Key Experimental Protocols for Data Verification Research

Protocol 1: Assessing Automated vs. Manual Data Transcription Error Rates

- Objective: Quantify the error reduction achieved by ELN/LIMS direct instrument data capture.

- Methodology:

- Setup: Use a standardized assay (e.g., protein concentration via absorbance).

- Control Group (Manual): Researchers manually read values from an instrument display and type them into a spreadsheet or paper notebook.

- Test Group (Automated): The same instrument is connected via a validated interface to the LIMS, pushing data directly to the corresponding experiment record.

- Analysis: Compare both datasets to a master truth value generated by the instrument's digital report. Calculate the error rate (number of incorrect entries/total entries) and critical error rate (errors >10% from truth).

Protocol 2: Validating a Built-In Outlier Detection Algorithm

- Objective: Determine the sensitivity and specificity of a configured verification rule.

- Methodology:

- Rule Definition: Configure a rule to flag plate reader well values that are >3 median absolute deviations (MAD) from the plate median.

- Data Injection: Use a historical "clean" dataset. Systematically inject synthetic outliers of known magnitude (e.g., 3.5 MAD, 5 MAD, 10 MAD).

- Evaluation: Run the dataset through the verification rule. Record True Positives (injected outliers caught), False Positives ("clean" data flagged), False Negatives (injected outliers missed), and True Negatives ("clean" data not flagged).

- Calculation: Compute Sensitivity = TP/(TP+FN) and Specificity = TN/(TN+FP).
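The rule and the evaluation can be prototyped together. The sketch below uses the raw MAD exactly as the rule is worded (no 1.4826 consistency scaling); the function names and the boolean `injected` mask marking the synthetic outliers are assumptions for the example.

```python
import statistics


def mad_flags(values, threshold=3.0):
    """True for each value more than `threshold` MADs from the median."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return [False] * len(values)   # no spread, so nothing can be flagged
    return [abs(v - med) > threshold * mad for v in values]


def sensitivity_specificity(flags, injected):
    """Compare rule output with the known injection mask."""
    tp = sum(1 for f, i in zip(flags, injected) if f and i)
    fn = sum(1 for f, i in zip(flags, injected) if not f and i)
    fp = sum(1 for f, i in zip(flags, injected) if f and not i)
    tn = sum(1 for f, i in zip(flags, injected) if not f and not i)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity
```

Note that the injected outliers themselves shift the plate median and MAD slightly, so injecting many large values at once makes the rule look less sensitive than it would be on a plate with a single bad well.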

Data Presentation: Verification Performance Metrics

Table 1: Error Rate Comparison in Transcription Methods

| Transcription Method | Sample Size (N entries) | Overall Error Rate | Critical Error Rate (>10% deviation) | Avg. Time per Entry |

|---|---|---|---|---|

| Manual (Paper Notebook) | 1,250 | 3.8% | 0.7% | 45 sec |

| Manual (Spreadsheet) | 1,250 | 2.1% | 0.4% | 30 sec |

| ELN Direct Capture | 1,250 | 0.1%* | 0.0%* | 5 sec |

*Errors attributable to initial instrument misconfiguration, not transcription.

Table 2: Performance of Built-In Verification Rules

| Verification Rule Type | Triggers per 100 Experiments | True Positive Rate | False Positive Rate | Avg. Review Time Saved per Flag |

|---|---|---|---|---|

| Missing Metadata | 18 | 100% | 0% | 15 min |

| Data Range (±2SD) | 22 | 95% | 5% | 45 min |

| Protocol Step Skipped | 9 | 100% | 0% | 60 min |

| Reagent Lot Expiry | 4 | 100% | 0% | 90 min |

Visualizations

Title: Data Verification Workflow: Traditional vs. Automated ELN/LIMS

Title: Decision Logic for Built-In Verification Protocols

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Verification Research |

|---|---|

| Standard Reference Material (SRM) | Provides a ground-truth value with known uncertainty for validating instrument accuracy and automated data capture. |

| Bar-Coded/QR-Coded Reagent Tubes | Enables reliable, error-free scanning by ELN/LIMS to automatically log reagent identity, lot, and expiry. |

| Electronic Pipettes with Data Logging | Records volumes and timestamps directly to the ELN, removing manual transcription error for critical liquid handling steps. |

| Plate Reader with Direct API | An instrument whose Application Programming Interface (API) allows direct, bidirectional communication with the LIMS for method push and data pull. |

| Audit Trail Software Solution | Independent tool to validate the completeness and immutability of the electronic audit trails generated by the ELN/LIMS. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: Our automated validation checks are flagging all date variables as "invalid" after converting from the source system. What is the likely cause? A1: The most common cause is a mismatch in datetime metadata or incorrect handling of partial dates. SDTM requires ISO 8601 format. Verify the following:

- Check that the source data's datetime format is correctly mapped (e.g., `'%Y-%m-%dT%H:%M:%S'`).

- Ensure partial dates (e.g., `"2024-01"` for year and month) keep their truncated ISO 8601 form (`YYYY-MM`) and are not coerced to a full date, which causes an invalid format error.

- Confirm your validation script's regular expression for ISO 8601 covers both full and partial dates. A starting pattern is `^(\d{4}-\d{2}-\d{2}|\d{4}-\d{2}|\d{4})(T\d{2}:\d{2}:\d{2})?$`.
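As a minimal sketch of such a check, the snippet below applies a pattern of this shape (with every digit class written as `\d`) and then adds a calendar check, since a regular expression alone would accept a value like `2024-02-30`. The function name `classify_dtc` and its three return labels are assumptions for illustration. The time portion is only shape-checked, not range-checked.

```python
import re
from datetime import datetime

# Full date, year-month, or year alone, optionally followed by a time.
ISO8601_DTC = re.compile(
    r"(\d{4}-\d{2}-\d{2}|\d{4}-\d{2}|\d{4})(T\d{2}:\d{2}:\d{2})?"
)

def classify_dtc(value):
    """Return 'full', 'partial', or 'invalid' for a date/time string."""
    match = ISO8601_DTC.fullmatch(value)
    if match is None:
        return "invalid"
    date_part = match.group(1)
    if len(date_part) == 10:
        # The regex checks shape only; strptime rejects impossible dates.
        try:
            datetime.strptime(date_part, "%Y-%m-%d")
        except ValueError:
            return "invalid"
        return "full"
    if len(date_part) == 7 and not 1 <= int(date_part[5:]) <= 12:
        return "invalid"
    return "partial"
```

Keeping "partial" as its own outcome lets the validation report distinguish legitimately incomplete dates from malformed ones instead of flagging both as invalid.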

Q2: The comparison between the number of unique subjects (USUBJID) in SDTM vs. ADaM datasets shows a discrepancy. How should we troubleshoot this? A2: This indicates a potential failure in the subject-level traceability linkage. Follow this protocol:

- Isolate the Discrepancy: Run a SQL query or script to list USUBJIDs present in the ADaM population (e.g., `ADSL`) but not in the SDTM `DM` domain, and vice versa.

- Check Subject Inclusion/Exclusion Logic: Review the algorithm in your ADaM specification document. Manually verify the logic on a sample of discrepant IDs to confirm the programming is correct.

- Audit the Merge/Join Keys: In the `ADSL` derivation, confirm the merge from `DM` and other source domains uses the correct keys (STUDYID, USUBJID, SITEID) without accidental filtering or duplication.
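The first and third steps can be sketched with plain set operations once the USUBJID columns have been read into lists. The function name `reconcile_subjects` and the example IDs are hypothetical. Duplicate detection is included because an accidental one-to-many join typically shows up as repeated USUBJIDs.

```python
from collections import Counter

def reconcile_subjects(dm_usubjids, adsl_usubjids):
    """List subjects missing from either side and any duplicated IDs."""
    dm_counts = Counter(dm_usubjids)
    adsl_counts = Counter(adsl_usubjids)
    return {
        "only_in_dm": sorted(set(dm_counts) - set(adsl_counts)),
        "only_in_adsl": sorted(set(adsl_counts) - set(dm_counts)),
        "duplicated_in_dm": sorted(u for u, n in dm_counts.items() if n > 1),
        "duplicated_in_adsl": sorted(u for u, n in adsl_counts.items() if n > 1),
    }

# Hypothetical IDs for illustration only.
report = reconcile_subjects(
    ["S1-001", "S1-002", "S1-003"],
    ["S1-001", "S1-003", "S1-003", "S1-004"],
)
```

Which direction of mismatch counts as an error depends on the inclusion logic in the ADaM specification, so the report lists both directions rather than judging them.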

Q3: Our automated conformance check against the CDISC Controlled Terminology (CT) package is failing, but we are certain our terms are valid. What steps should we take? A3: This is often due to using an outdated CT version or mismatched codelist names.

- Verify CT Version: Confirm your validation tool is using the exact CT version (e.g., `2024-03-29`) specified in your submission package. Update the local CT reference file if necessary.

- Check Codelist Mapping: Ensure the controlled terminology codelist referenced for your variable (e.g., `C66742` for `AESER`) in your `define.xml` matches the one used in the validation engine's lookup. A case-sensitive mismatch can cause failure.

- Check for Custom Terms: If using sponsor-defined terms, ensure they are properly documented in the `SUPP--` datasets and the `define.xml` and that your validation rules are configured to accept them.

Q4: The define.xml file passes all technical checks but fails to load in the FDA's JANUS review system. What are the critical points to validate? A4: This is typically a metadata integrity issue, not a data issue.

- Validate XML Schema: Use an XML validator (e.g., `xmllint`) to ensure your `define.xml` conforms strictly to the CDISC ODM / Define-XML schema. A single misplaced tag can cause failure.

- Check File References: Ensure all `leaf` elements' file references (`xlink:href`) point to the actual dataset files with the correct case-sensitive names and locations (e.g., `./sdtm/dm.xpt`).

- Verify Value-Level Metadata: Scrutinize the `ValueListDef` sections for complex variables. Incomplete `WhereClause` definitions or incorrect `ItemOID` references are a common source of fatal errors in review tools.
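The file-reference check can be sketched with the standard library alone. The sketch assumes the dataset location is carried in the `xlink:href` attribute of each `leaf` element, as in Define-XML 2.x, and matches elements by local name so that it does not depend on a particular namespace version. The function name `missing_leaf_files` is hypothetical, and this does not replace schema validation.

```python
import os
import xml.etree.ElementTree as ET

XLINK_HREF = "{http://www.w3.org/1999/xlink}href"

def missing_leaf_files(define_path):
    """Return (leaf ID, href) pairs whose target file is not found.

    Names are compared against the directory listing, so the check stays
    case-sensitive even on case-insensitive file systems.
    """
    base = os.path.dirname(os.path.abspath(define_path))
    tree = ET.parse(define_path)  # raises ParseError if not well-formed
    missing = []
    for elem in tree.iter():
        if elem.tag.rsplit("}", 1)[-1] != "leaf":
            continue
        href = elem.get(XLINK_HREF)
        if href is None:
            missing.append((elem.get("ID"), None))
            continue
        folder, name = os.path.split(os.path.normpath(os.path.join(base, href)))
        if not os.path.isdir(folder) or name not in os.listdir(folder):
            missing.append((elem.get("ID"), href))
    return missing
```

An empty list means every referenced file was found with an exactly matching name. A reference such as `AE.xpt` pointing at a file stored as `ae.xpt` is reported as missing.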

Experimental Protocols for Key Validation Studies

Protocol 1: Benchmarking Automated vs. Manual Consistency Checks

Objective: Quantify the reduction in workload and gain in accuracy by replacing manual checks for variable attribute consistency (name, label, type, length) between define.xml and the physical SAS XPORT files with an automated script.

Methodology:

- Sample: Use 10 historical submission datasets (SDTM + ADaM).

- Control: Two expert reviewers manually record discrepancies for all variables across all domains using a checklist. Time taken is recorded.

- Intervention: Run a Python/R script that extracts metadata from `define.xml` and the XPORT file headers, comparing them programmatically.

- Metrics: Compare time (person-hours) and discrepancy detection rate (including false positives/negatives) between manual and automated methods.
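The comparison step of the intervention might look like the sketch below. It assumes the metadata has already been extracted into two dictionaries keyed by `(dataset, variable)`, and that data types have been normalized to a common vocabulary beforehand. The extraction itself is out of scope here, and the name `compare_metadata` is hypothetical.

```python
ATTRIBUTES = ("label", "type", "length")

def compare_metadata(define_meta, xpt_meta):
    """Return (dataset, variable, attribute, define_value, xpt_value) tuples
    for every attribute that differs between define.xml and the XPORT header."""
    issues = []
    for key in sorted(set(define_meta) | set(xpt_meta)):
        dataset, variable = key
        if key not in xpt_meta:
            issues.append((dataset, variable, "name", "defined", "missing"))
        elif key not in define_meta:
            issues.append((dataset, variable, "name", "missing", "present"))
        else:
            for attr in ATTRIBUTES:
                d = define_meta[key].get(attr)
                x = xpt_meta[key].get(attr)
                if d != x:
                    issues.append((dataset, variable, attr, d, x))
    return issues

# Hypothetical metadata for illustration only.
define_meta = {
    ("DM", "AGE"): {"label": "Age", "type": "integer", "length": 8},
    ("DM", "SEX"): {"label": "Sex", "type": "text", "length": 1},
}
xpt_meta = {
    ("DM", "AGE"): {"label": "Age", "type": "integer", "length": 8},
    ("DM", "SEX"): {"label": "Sex", "type": "text", "length": 2},
    ("DM", "RACE"): {"label": "Race", "type": "text", "length": 40},
}
issues = compare_metadata(define_meta, xpt_meta)
```

Returning flat tuples keeps the output easy to write to a spreadsheet, so the automated result can be compared line by line with the reviewers' manual checklist.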

Protocol 2: Testing a Traceability Validation Algorithm

Objective: Validate an algorithm that programmatically traces a derived ADaM value (e.g., AVAL in ADLB) back to its source SDTM variables, as documented in the define.xml (Derivation/Comment).

Methodology:

- Algorithm Input: `define.xml`, source SDTM dataset, target ADaM dataset.

- Process: The script parses derivation logic from metadata, maps variables, and attempts to replicate the derivation for a statistically sampled set of records (e.g., 5% per analysis parameter).

- Validation: For each sampled record, the algorithm's result is compared to a manually calculated "gold standard" performed by a programmer.

- Output: Report algorithm accuracy (%) and categorize any failures (e.g., "unparsable derivation text," "missing source variable").
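The sampling and comparison steps can be sketched as follows. Parsing derivation text from `define.xml` is the hard part and is not attempted here: the `rederive` callable stands in for it. The record layout (dictionaries that carry `PARAMCD`, `USUBJID`, `AVAL`, and the already-merged source value) and both function names are assumptions for illustration.

```python
import math
import random
from collections import defaultdict

def sample_by_param(records, fraction=0.05, seed=0):
    """Draw a reproducible random sample of `fraction` per PARAMCD (min. 1)."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec["PARAMCD"]].append(rec)
    rng = random.Random(seed)
    sampled = []
    for paramcd in sorted(groups):
        rows = groups[paramcd]
        k = max(1, math.ceil(fraction * len(rows)))
        sampled.extend(rng.sample(rows, k))
    return sampled

def trace_accuracy(sampled, rederive, tol=1e-9):
    """Compare re-derived values with stored AVAL and categorize failures."""
    if not sampled:
        raise ValueError("no records sampled")
    failures = []
    for rec in sampled:
        try:
            expected = rederive(rec)
        except KeyError:
            failures.append((rec["USUBJID"], "missing source variable"))
            continue
        if abs(expected - rec["AVAL"]) > tol:
            failures.append((rec["USUBJID"], "value mismatch"))
    accuracy = 100.0 * (len(sampled) - len(failures)) / len(sampled)
    return accuracy, failures
```

A call such as `trace_accuracy(sample_by_param(adlb_rows), lambda r: r["LBSTRESN"])` would test the simplest case, where `AVAL` is expected to be a direct copy of the standardized SDTM result.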

Data Presentation

Table 1: Workload Reduction from Automated Validation (Simulated Study)

| Validation Task | Manual Effort (Hours per Study) | Automated Effort (Hours per Study) | Reduction (%) |

|---|---|---|---|

| Metadata Conformance (define.xml vs. Data) | 12.5 | 0.5 | 96.0 |

| Controlled Terminology Checks | 8.0 | 0.2 | 97.5 |

| Traceability Linkage Review | 20.0 | 1.0 | 95.0 |

| Total (Core Checks) | 40.5 | 1.7 | 95.8 |

Table 2: Accuracy Comparison of Discrepancy Detection

| Error Type Injected | Manual Review Detection Rate (%) | Automated Script Detection Rate (%) |

|---|---|---|

| Incorrect Variable Type in Dataset | 85 | 100 |

| Invalid CT Code | 90 | 100 |

| Broken Subject-Level Traceability | 75 | 100 |

| Inconsistent Variable Length | 60 | 100 |

| Overall Accuracy | 77.5 | 100 |

Diagrams

Validation Workflow for SDTM/ADaM Automation

Automated Validation Check Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Primary Function in Validation |

|---|---|

| Pinnacle 21 Community | Freely available tool for foundational compliance checks against CDISC standards; used for initial data quality screening. |

| R {metatools} / {admiral} | R packages providing functions and templates for standardized SDTM/ADaM dataset and metadata creation, reducing programming variance. |

| Python {cdisc-core} | A Python library to access and utilize CDISC standards (CT, models) programmatically within custom validation scripts. |

| SAS define.xml Generator | Tools (e.g., SAS %make_define) to automate creation of define.xml from dataset metadata, ensuring internal consistency. |

| Jupyter Notebooks / RMarkdown | Environments for developing, documenting, and sharing reproducible validation scripts and ad-hoc query results. |

| Git Version Control | Tracks changes to validation scripts, specifications, and logs, ensuring audit trail and collaborative development integrity. |

Navigating Pitfalls: Ensuring Reliability and Efficiency in Automated Systems

Troubleshooting Guides

Guide 1: Managing Over-Alerting in Automated Verification Systems

Q: Our system flags an excessive number of potential data anomalies, overwhelming our team with alerts. Many are low-risk or irrelevant. How can we reduce this noise?

A: Over-alerting typically stems from imbalanced classification thresholds or feature engineering that fails to capture meaningful experimental context. Implement the following protocol to recalibrate your system.

Experimental Protocol for Alert Threshold Optimization:

- Assemble Ground Truth Set: Curate a labeled dataset of 500-1000 historical data points, where each point is manually verified and classified as "Critical Anomaly," "Minor Issue," or "Normal."

- Review Feature Importance: Use SHAP (SHapley Additive exPlanations) or permutation importance on your current model to identify features contributing most to low-importance alerts.

- Implement Cost-Sensitive Learning: Retrain your classifier using a weighted loss function. Assign higher weights to misclassifying "Critical Anomaly" points to prioritize accuracy for serious issues.

- Adjust Decision Thresholds: Generate precision-recall curves for each alert class. Systematically adjust the classification probability threshold upward to reduce false positives, monitoring the recall for critical anomalies to ensure it stays above 95%.

- Introduce Contextual Filtering: Create business rules (e.g., "suppress plate reader calibration alerts during scheduled maintenance windows") to pre-filter known benign events.
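The threshold-adjustment step can be sketched as a simple sweep: compute precision and recall at each candidate threshold and keep the highest one that still meets the recall floor for critical anomalies. The function names, the candidate thresholds, and the toy scores are assumptions for illustration. Labels are 1 for "Critical Anomaly" and 0 otherwise.

```python
def precision_recall_at(scores, labels, threshold):
    """Precision and recall when every score >= threshold raises an alert."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0  # no alerts: report 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def pick_threshold(scores, labels, candidates, min_recall=0.95):
    """Highest candidate threshold whose recall stays at or above min_recall."""
    ok = [t for t in candidates
          if precision_recall_at(scores, labels, t)[1] >= min_recall]
    return max(ok) if ok else None

# Toy ground-truth set for illustration only.
scores = [0.95, 0.90, 0.88, 0.60, 0.55, 0.30, 0.20]
labels = [1, 1, 1, 0, 0, 0, 0]
chosen = pick_threshold(scores, labels, candidates=[0.5, 0.7, 0.85, 0.9])
```

On a real ground-truth set the sweep should be run on data held out from training. Otherwise the chosen threshold will look better than it performs in production.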

Data Summary from Threshold Optimization Experiment

Table 1: Impact of Probability Threshold Adjustment on Alert Volume and Accuracy

| Model Threshold | Daily Alerts Generated | Critical Anomaly Recall | Precision (All Alerts) | Expert Hours Saved/Week |

|---|---|---|---|---|

| 0.5 (Default) | 215 ± 18 | 99.5% | 22% | 0 (Baseline) |

| 0.7 | 89 ± 11 | 98.1% | 47% | 15 |

| 0.85 | 42 ± 7 | 95.3% | 81% | 28 |

Workflow for Reducing Over-Alerting

Guide 2: Investigating and Correcting for False Negatives

Q: A critical batch contamination issue was missed by our automated verification system. How do we diagnose the cause of this false negative and prevent similar misses?

A: False negatives are high-risk failures often caused by concept drift, underrepresented failure modes in training data, or inadequate model sensitivity. Follow this forensic diagnostic protocol.

Experimental Protocol for False Negative Root Cause Analysis:

- Isolate the Failure Instance: Extract the full data profile (all features) for the missed anomaly and its temporal/experimental neighbors.

- Conduct Adversarial Testing: Slightly perturb the features of the missed anomaly (using techniques like FGSM - Fast Gradient Sign Method) to determine the minimum change required for the model to correctly classify it as positive.

- Analyze Data Distribution Shift: Compare the statistical properties (mean, variance, covariance) of the data segment containing the false negative to the model's original training data using the Population Stability Index (PSI) or Kolmogorov-Smirnov test.

- Retrain with Augmented Data: Synthetically augment your training set to include simulated versions of the missed anomaly. Use techniques like SMOTE (Synthetic Minority Oversampling Technique) for tabular data or adding noise/transformations that mimic the failure mode.

- Implement Ensemble Monitoring: Deploy a secondary, simpler model (e.g., a rule-based system or a One-Class SVM) trained only on normal data to flag significant deviations that the primary model may miss.
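For the distribution-shift step, the Population Stability Index can be computed without external libraries. The sketch below assumes equal-width bins spanning the training sample, clips out-of-range values into the end bins, and floors empty bins at a small `eps` to avoid taking the logarithm of zero. These are common conventions, not the only ones, and the function name `psi` is hypothetical.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` relative to `expected`.

    PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i
    are the bin proportions of the training and recent samples.
    """
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("expected sample has no spread; cannot bin")
    width = (hi - lo) / bins

    def proportions(sample):
        counts = [0] * bins
        for v in sample:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip into end bins
        return [max(c / len(sample), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Toy feature values: the recent window is shifted upward by 50 units.
training = [float(v) for v in range(100)]
recent = [v + 50.0 for v in training]
shift_score = psi(training, recent)
```

Computing the index per feature, for the window around the missed anomaly, shows which inputs had moved away from the training distribution when the false negative occurred.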

False Negative Diagnostic Pathway

Guide 3: Detecting and Mitigating Model Drift

Q: Our model's performance appears to be degrading gradually over time. How do we confirm model drift and establish a retraining schedule?

A: Model drift (concept or data drift) is inevitable as experiments evolve. Proactive monitoring and scheduled retraining are essential.

Experimental Protocol for Drift Detection and Model Refresh:

- Establish a Performance Baseline: Log key metrics (F1-score for critical anomalies, precision, recall) on a held-out validation set from the original training data distribution.

- Implement Statistical Process Control (SPC): Calculate the mean and standard deviation of your model's daily prediction confidence scores for a random sample of presumed normal data. Plot these on a Shewhart control chart.

- Define Drift Triggers: Set quantitative triggers for retraining:

  - Feature Drift: PSI > 0.2 for any top-10 important feature.

  - Performance Drift: 5% relative decrease in rolling 30-day F1-score.

  - Control Chart Alert: 7 consecutive data points on the same side of the baseline mean on the SPC chart.

- Execute Scheduled Retraining: Upon any trigger, run the full model retraining pipeline on the most recent 6-12 months of data, ensuring that newly observed failure modes are represented. Before deployment, validate the retrained model against the previous version using McNemar's test.
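The three retraining triggers can be combined into a single check, sketched below. The thresholds are the ones listed in the protocol. The function names, argument names, and trigger labels are hypothetical, and the PSI values and F1-scores are assumed to be computed elsewhere.

```python
def run_rule(points, baseline_mean, run_length=7):
    """True if `run_length` consecutive points fall on one side of the mean."""
    streak, side = 0, 0
    for p in points:
        s = (p > baseline_mean) - (p < baseline_mean)  # +1, -1, or 0
        if s != 0 and s == side:
            streak += 1
        else:
            side, streak = s, (1 if s != 0 else 0)
        if streak >= run_length:
            return True
    return False

def drift_triggers(psi_by_feature, baseline_f1, rolling_f1,
                   daily_confidence_means, baseline_mean):
    """Return the names of all retraining triggers that currently fire."""
    fired = []
    if any(v > 0.2 for v in psi_by_feature.values()):
        fired.append("feature_drift")
    if baseline_f1 > 0 and (baseline_f1 - rolling_f1) / baseline_f1 >= 0.05:
        fired.append("performance_drift")
    if run_rule(daily_confidence_means, baseline_mean):
        fired.append("control_chart")
    return fired

# Hypothetical monitoring snapshot for illustration only.
fired = drift_triggers(
    psi_by_feature={"well_od": 0.25, "temp": 0.03},
    baseline_f1=0.90, rolling_f1=0.84,
    daily_confidence_means=[0.50, 0.50, 0.50],
    baseline_mean=0.50,
)
```

Returning the list of fired triggers, instead of a single boolean, records why a retraining run was started, which is useful for the audit trail.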