Data Consensus in Biomedical Research: Validating Models for Drug Discovery and Clinical Insights

Abstract

This article provides a comprehensive guide to community consensus models for data validation, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of data consensus, details methodological frameworks and their practical applications in biomedical research, addresses common troubleshooting and optimization strategies, and offers comparative analysis of validation techniques. The scope covers everything from establishing gold-standard datasets and navigating scientific crowdsourcing to implementing quality control metrics and benchmarking against regulatory standards, ultimately aiming to enhance reproducibility and accelerate translational science.

The Bedrock of Trust: Defining Community Consensus for Data Integrity in Science

What is a Community Consensus Model? A Definition for Biomedical Research

A Community Consensus Model (CCM) is a formalized framework for synthesizing knowledge, validating data, and establishing standardized protocols through structured collaboration among independent researchers and institutions. In biomedical research, it represents a paradigm shift from isolated verification to collective, multi-laboratory adjudication of experimental findings, clinical data interpretations, and methodological standards. This model is foundational for enhancing reproducibility, accelerating translational science, and building trusted evidence frameworks for drug development.

Core Conceptual Framework

A CCM operates on the principle that the collective judgment of a diverse, expert community yields more robust, reliable, and clinically actionable conclusions than any single entity. It systematically mitigates individual bias, methodological idiosyncrasies, and commercial conflicts of interest.

Key Structural Components

- Participant Ecosystem: A pre-defined consortium of laboratories, clinical sites, and analysis cores with complementary expertise.

- Governance Charter: Rules for contribution, blinding, conflict disclosure, and decision-making (e.g., modified Delphi processes, super-majority voting).

- Central Infrastructure: A neutral coordinating center for data aggregation, anonymization, and analysis.

- Output Artefacts: Consensus statements, validated datasets, standard operating procedures (SOPs), and biomarker definitions.

Quantitative Landscape of CCM Adoption

The following table summarizes key metrics from recent, large-scale CCM initiatives in biomedicine.

Table 1: Metrics from Major Biomedical Consensus Initiatives

| Consortium/Initiative | Primary Focus | Number of Participating Entities | Time to Consensus (Months) | Key Output Impact |
|---|---|---|---|---|
| Trans-Omics for Precision Medicine (TOPMed) | Genomic Variant Interpretation | 85+ | 24 | 40% increase in consistent variant classification |
| Critical Path Institute’s Predictive Safety Testing Consortium (PSTC) | Toxicology Biomarker Validation | 31 (Industry, Academia, FDA) | 36 | Regulatory qualification of 7 novel safety biomarkers |
| International Cancer Genome Consortium (ICGC) | Somatic Mutation Calling | 70+ | 18 | Standardized pipeline reduced false-positive calls by ~65% |
| Alzheimer’s Disease Neuroimaging Initiative (ADNI) | Neuroimaging & Biomarker Standards | 60+ | Ongoing | Unified protocol adopted by >500 independent studies |

Experimental Protocol for a Foundational CCM Study

The following is a generalized methodology for a CCM aimed at validating a novel prognostic biomarker.

Protocol: Multi-Laboratory Analytical Validation of Circulating Tumor DNA (ctDNA) Assay

Objective: To establish a consensus on the minimal technical performance parameters (sensitivity, specificity, reproducibility) for a next-generation sequencing (NGS)-based ctDNA assay across multiple platforms.

Phase 1: Reference Material Development & Blinding

- A neutral biobank prepares a panel of 20 synthetic plasma specimens spiked with clinically relevant mutations at variant allele frequencies (VAFs) ranging from 0.1% to 5%.

- Each specimen is aliquoted, given a unique blinded identifier, and shipped to all participating laboratories (N=15).

- Participating labs receive the blinded specimens together with shared DNA extraction and library preparation SOPs; all remaining wet-lab steps (e.g., sequencing) and the bioinformatics analysis follow each lab’s established in-house protocol.

Phase 2: Distributed Analysis & Raw Data Submission

- Each lab processes all 20 specimens in triplicate, generating raw sequencing data (FASTQ files).

- Labs submit both their variant call files (VCFs) and raw FASTQ files to the coordinating center.

Phase 3: Centralized Data Harmonization & Analysis

- The coordinating center runs all FASTQ files through a single, standardized bioinformatics pipeline (e.g., GATK best practices) to eliminate inter-lab bioinformatic variability.

- Results are compared against the known truth set. Performance metrics (sensitivity at each VAF, specificity, precision) are calculated for each lab’s wet-lab process and for the harmonized bioinformatics output.
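The Phase 3 comparison against the truth set can be sketched in a few lines of Python. This is a minimal illustration, not a consortium pipeline: it assumes both truth and calls are keyed by hypothetical `(specimen_id, variant_id)` pairs, and that the number of interrogated wild-type positions (`n_negative_sites`, an assumed input) is known from the reference-material design, which is what makes a specificity estimate possible.

```python
from collections import defaultdict


def performance_vs_truth(truth, calls, n_negative_sites):
    """Score one lab's (or the harmonized pipeline's) calls against the truth set.

    truth: dict mapping (specimen_id, variant_id) -> spiked VAF in percent.
    calls: set of (specimen_id, variant_id) pairs reported as detected.
    n_negative_sites: number of interrogated positions with no spiked variant
        (assumed to be known from the reference-material design).
    """
    detected = defaultdict(int)
    expected = defaultdict(int)
    for key, vaf in truth.items():
        expected[vaf] += 1
        if key in calls:
            detected[vaf] += 1

    tp = sum(detected.values())
    fp = len(calls - set(truth))  # reported variants that were never spiked in

    return {
        # Reported per VAF level because sensitivity drops at low VAF.
        "sensitivity_by_vaf": {vaf: detected[vaf] / expected[vaf] for vaf in sorted(expected)},
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
        "specificity": (n_negative_sites - fp) / n_negative_sites,
    }


# Hypothetical specimen and variant identifiers.
truth = {
    ("S01", "VAR_A"): 0.1,
    ("S01", "VAR_B"): 1.0,
    ("S02", "VAR_A"): 0.1,
    ("S02", "VAR_C"): 1.0,
}
lab_calls = {("S01", "VAR_B"), ("S02", "VAR_C"), ("S02", "VAR_A"), ("S01", "VAR_X")}
print(performance_vs_truth(truth, lab_calls, n_negative_sites=1000))
```

Running the same function once on each lab's own VCF-derived calls and once on the centrally harmonized calls separates wet-lab from bioinformatic contributions to error.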

Phase 4: Consensus Delphi Process

- Round 1: All performance data (blinded by lab) are shared with all principal investigators. Each independently proposes minimum performance thresholds.

- Round 2: Anonymous proposals are aggregated and shared. Participants revise their recommendations.

- Round 3: A final in-person meeting is held to debate outliers and ratify the final consensus thresholds (e.g., “A clinically validated ctDNA assay must demonstrate ≥95% sensitivity at VAF ≥0.5% and ≥99.99% specificity”).
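The anonymized feedback circulated between rounds is typically a summary of central tendency and spread. The sketch below assumes proposals are single numbers (e.g., minimum sensitivity in percent) keyed by hypothetical anonymized codes, and flags outliers for Round 3 using Tukey's 1.5 × IQR fences; that outlier rule is an assumed convention, since the protocol does not specify one.

```python
import statistics


def summarize_round(proposals):
    """Summarize one Delphi round of proposed thresholds for anonymized feedback.

    proposals: dict mapping an anonymized participant code -> proposed value.
    Returns the median, the interquartile range, and the participants whose
    proposals fall outside Tukey's 1.5 x IQR fences (candidates for Round 3 debate).
    """
    values = list(proposals.values())
    q1, _, q3 = statistics.quantiles(values, n=4)  # default 'exclusive' method
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    outliers = sorted(code for code, v in proposals.items() if v < low or v > high)
    return {"median": statistics.median(values), "iqr": (q1, q3), "outliers": outliers}


# Hypothetical Round 1 proposals for minimum sensitivity (%) at VAF >= 0.5%.
round1 = {"P01": 95, "P02": 92, "P03": 95, "P04": 97, "P05": 90, "P06": 96, "P07": 95, "P08": 80}
print(summarize_round(round1))
```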

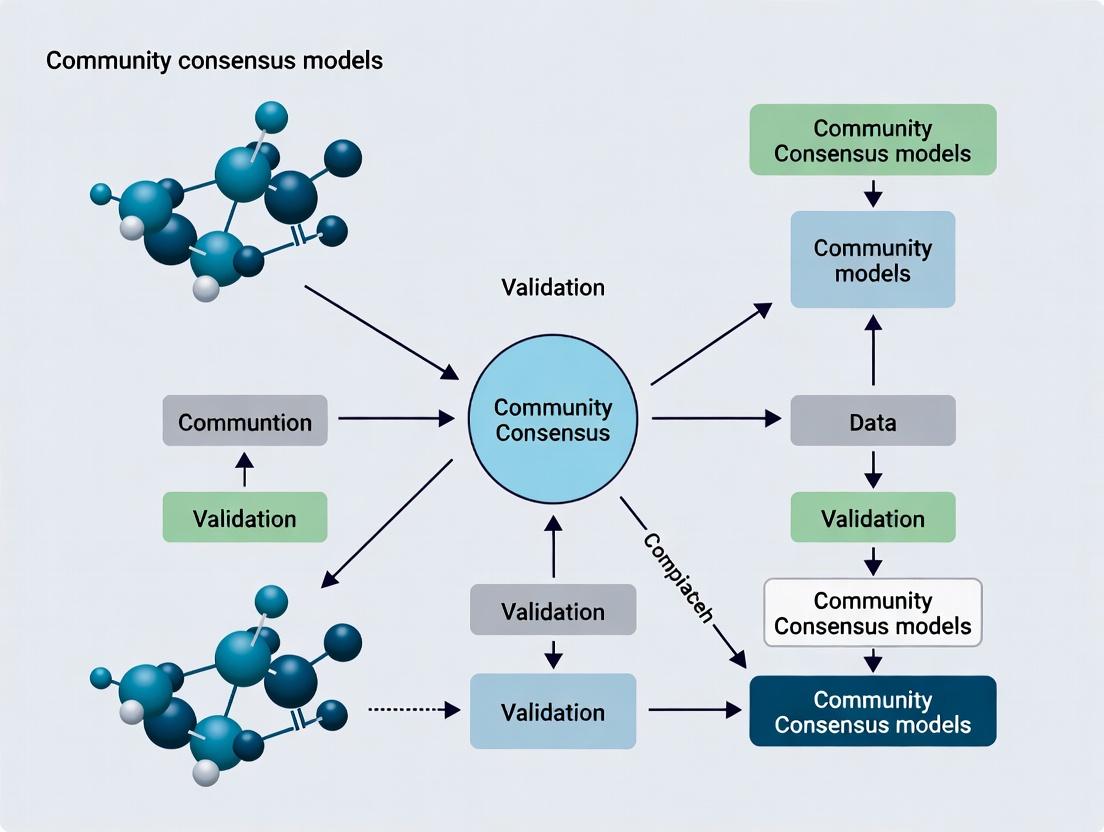

Visualizing Consensus Model Workflows

Diagram 1: Four-Phase Community Consensus Model Workflow

The Scientist's Toolkit: Essential Research Reagents & Platforms

The successful execution of a CCM relies on standardized, high-quality materials and tools.

Table 2: Key Reagent Solutions for Biomarker Consensus Studies

| Item | Function in CCM | Example Product/Platform |
|---|---|---|
| Synthetic Reference Standards | Provides a blinded, ground-truth material for all labs, enabling objective performance comparison. | Seraseq ctDNA Mutation Mix, Horizon Discovery Multiplex I. |
| Harmonized Bioinformatics Pipeline | Removes computational variability to isolate wet-lab performance; run centrally on submitted raw data. | Common Workflow Language (CWL) scripts implementing GATK, or the Nextflow-based nf-core/sarek pipeline. |
| Central Data Repository | Securely accepts, stores, and manages blinded data submissions from all participants. | Synapse (Sage Bionetworks), EGA (European Genome-Phenome Archive). |
| Digital Consensus Platform | Facilitates anonymous voting, survey distribution, and document sharing during Delphi rounds. | DelphiManager, REDCap with survey module, Dedoose. |
| Interlab QC Metrics Dashboard | Visualizes each lab's performance against aggregate metrics in real-time (post-unblinding). | Custom R Shiny or Python Dash application. |

A Community Consensus Model is not merely a committee but a rigorous, operational research framework. It is defined by its structured processes for distributed data generation, centralized harmonization, and iterative group decision-making. For biomedical research, CCMs are increasingly non-negotiable for transforming promising discoveries into validated, regulatory-grade tools that can reliably inform drug development and clinical practice. They represent the culmination of the scientific method at a community scale.

The Critical Role of Consensus in Reproducible Science and Drug Development

Within the broader thesis of understanding community consensus models for data validation research, consensus is not merely an ideal but a foundational, operational necessity. In biomedical research and drug development, the lack of consensus on experimental protocols, data standards, and analytical methods is a primary driver of the reproducibility crisis. This whitepaper examines the technical implementation of consensus models as a mechanistic solution to enhance the rigor, transparency, and ultimately, the reproducibility of scientific findings.

Recent studies quantify the scale and economic impact of irreproducibility in preclinical research.

Table 1: Economic and Success Rate Impact of Irreproducibility

| Metric | Value | Source/Study |
|---|---|---|
| Estimated annual cost of irreproducible preclinical research in the US | $28.2 billion | Freedman et al., PLOS Biology (2015) |
| Estimated prevalence of irreproducible preclinical research | > 50% | Freedman et al., PLOS Biology (2015) |
| Success rate of oncology drug development from Phase I to approval | 3.4% | Wong et al., Biostatistics (2019) |
| Percentage of "landmark" cancer studies found to be irreproducible | ~ 89% | Begley & Ellis, Nature (2012) |
| Researchers who have failed to reproduce another scientist's experiment | > 70% | Baker, Nature (2016) Survey |

Consensus Models in Action: Core Methodologies

Consensus is achieved through formalized, community-driven processes. Below are detailed protocols for key consensus-building activities.

Protocol for a Community-Led Method Standardization Study

- Objective: To establish a consensus standard operating procedure (SOP) for a widely used but variably performed assay (e.g., Western Blot quantification).

- Materials: See Table 2, "Essential Materials for Multi-Center Consensus Studies," in "The Scientist's Toolkit" below.

- Methodology:

- Problem Scoping: A consortium (e.g., ASPIRE, ABRF) identifies a specific technique with high inter-lab variability.

- Round-Robin Testing: A central committee designs a controlled experiment. Identical samples (cell lysates with known protein concentrations) and reagent kits are distributed to >50 participating laboratories globally.

- Blinded Execution: Each lab processes the samples using their in-house protocol. All raw data (images, densitometry values) and metadata (antibody catalog numbers, dilution, imaging settings) are uploaded to a shared platform.

- Centralized Analysis: A biostatistics core analyzes the data to correlate specific protocol variables (e.g., normalization method, antibody vendor) with outcome variance.

- Consensus Workshop: Participants review the data in a structured meeting. The protocol yielding the lowest inter-lab coefficient of variation (CV) is proposed as the baseline.

- Drafting & Validation: A draft SOP is created. A second round of testing validates that adherence to the draft SOP reduces the inter-lab CV by a predefined target (e.g., >40%).

- Publication & Endorsement: The final consensus SOP is published in a peer-reviewed journal (e.g., Nature Methods) and endorsed by relevant societies.
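The validation criterion in the Drafting & Validation step (inter-lab CV reduced by more than a predefined target) reduces to simple arithmetic. This sketch assumes one normalized value per lab for a single lysate; the band-intensity numbers are hypothetical, and the 40% default mirrors the example target above.

```python
import statistics


def inter_lab_cv(values):
    """Inter-lab coefficient of variation (%) for one sample: sample SD / mean x 100."""
    return statistics.stdev(values) / statistics.mean(values) * 100


def meets_cv_target(before, after, target_reduction=0.40):
    """Check whether the draft SOP cut inter-lab CV by more than the predefined fraction."""
    cv_before, cv_after = inter_lab_cv(before), inter_lab_cv(after)
    reduction = (cv_before - cv_after) / cv_before
    return reduction, reduction > target_reduction


# Hypothetical normalized band intensities for one lysate, one value per lab.
in_house = [1.0, 1.6, 0.7, 1.3, 0.9]
draft_sop = [1.0, 1.1, 0.95, 1.05, 0.9]
reduction, passed = meets_cv_target(in_house, draft_sop)
print(f"CV reduction: {reduction:.0%}, target met: {passed}")
```

With many samples, a study would compute a CV per sample and pre-specify how to summarize them (e.g., the median CV) before comparing rounds.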

Protocol for Biomarker Analytical Validation

- Objective: To achieve consensus on the minimum analytical specificity and sensitivity requirements for a candidate pharmacodynamic biomarker assay.

- Methodology:

- Context of Use (COU) Definition: Stakeholders (academic, industry, regulatory) precisely define the intended use of the biomarker (e.g., patient stratification for a specific drug in non-small cell lung cancer).

- Establish a Reference Panel: A public-private partnership develops a publicly available reference material panel (e.g., cell lines with certified genomic alterations, synthetic analytes).

- Multi-Center Proficiency Testing: Labs perform the assay on the reference panel. Performance is measured against predefined metrics: Accuracy, Precision (repeatability & reproducibility), Sensitivity (Limit of Detection/Quantification), and Specificity.

- Statistical Acceptance Criteria: Consensus is reached on the minimum performance thresholds (e.g., inter-lab reproducibility CV < 20% and a limit of detection at or below 1% mutant allele frequency).

- Data Standardization: Consensus is reached on the mandatory data elements (MIAME, MIAPE standards) and format (e.g., specific flow cytometry standard (FCS) version) for submission to public repositories like GEO or FlowRepository.
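One simple way to turn pooled proficiency-testing results into a limit of detection is the hit-rate approach: the lowest level detected in at least 95% of replicates. The sketch below assumes that convention (probit regression is a common alternative) and additionally requires every higher level to pass; the replicate counts are hypothetical.

```python
def limit_of_detection(hits, min_hit_rate=0.95):
    """Hit-rate estimate of LoD: the lowest level at which this level and every
    higher level are detected in at least `min_hit_rate` of replicates.

    hits: dict mapping analyte level (e.g., mutant allele frequency in %) ->
        (replicates detected, replicates tested).
    Returns None if no level qualifies.
    """
    levels = sorted(hits)
    for i, level in enumerate(levels):
        if all(hits[lv][0] / hits[lv][1] >= min_hit_rate for lv in levels[i:]):
            return level
    return None


# Hypothetical pooled proficiency-testing results across labs.
results = {0.1: (12, 20), 0.5: (18, 20), 1.0: (20, 20), 2.0: (20, 20), 5.0: (20, 20)}
lod = limit_of_detection(results)
print(lod, lod is not None and lod <= 1.0)  # compare with the 1% consensus threshold
```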

Visualizing Consensus Workflows and Impact

Diagram Title: Community Consensus Protocol Development Workflow

Diagram Title: Impact of Consensus on Drug Development Efficiency

Consensus in Signaling Pathway Analysis: A Case Study

Inconsistent annotation and analysis of pathways like the PI3K-AKT-mTOR axis lead to conflicting conclusions. A consensus approach involves:

- Defining a core set of pathway components and phospho-sites for mandatory reporting.

- Agreeing on a standardized multiplex immunoassay panel (e.g., Luminex) for parallel measurement.

- Establishing a common computational pipeline for normalization and pathway activity scoring.
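A shared scoring step could be as simple as log-transforming each phospho-site, z-scoring it across samples, and averaging the core sites per sample. The site names below are a hypothetical panel, and mean-z scoring is an assumed method chosen for illustration; the consortium would agree on its own panel and scoring rule.

```python
import math
import statistics

# Hypothetical core phospho-site panel; the real list is what the consortium agrees on.
CORE_SITES = ("AKT1_S473", "AKT1_T308", "MTOR_S2448", "RPS6KB1_T389")


def pathway_activity(intensities, core_sites=CORE_SITES):
    """Score pathway activity per sample as the mean z-score of log2 intensities.

    intensities: dict mapping site -> list of raw intensities, one per sample,
        with samples in the same order for every site.
    """
    missing = [s for s in core_sites if s not in intensities]
    if missing:
        raise ValueError(f"mandatory sites not reported: {missing}")

    z_by_site = []
    for site in core_sites:
        logged = [math.log2(x) for x in intensities[site]]
        mu, sd = statistics.mean(logged), statistics.stdev(logged)
        if sd == 0:
            raise ValueError(f"no variation across samples for {site}")
        z_by_site.append([(v - mu) / sd for v in logged])

    # Average across sites for each sample (i.e., over columns of z_by_site).
    return [statistics.mean(col) for col in zip(*z_by_site)]


# Hypothetical multiplex readings for three samples.
data = {
    "AKT1_S473": [1200, 2500, 5100],
    "AKT1_T308": [800, 1500, 3300],
    "MTOR_S2448": [400, 650, 1300],
    "RPS6KB1_T389": [300, 700, 1250],
}
print(pathway_activity(data))
```

Because z-scores are relative to the samples in one batch, cross-lab comparison would also need shared reference samples on every plate.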

Diagram Title: PI3K-AKT-mTOR Pathway with Consensus Checkpoints

The Scientist's Toolkit: Key Research Reagent Solutions for Consensus Studies

Table 2: Essential Materials for Multi-Center Consensus Studies

| Item | Function in Consensus Building | Example/Note |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide an absolute standard for assay calibration and cross-lab comparison. Essential for analytical validation. | NIST genomic DNA standards, WHO International Standards for cytokines. |
| Identical Reagent Lots | Eliminates variability introduced by differing reagent performance. Distributed from a single lot to all participants. | Central procurement of a specific cell viability assay kit (e.g., CellTiter-Glo). |
| Stable, Barcoded Sample Sets | Ensures sample integrity and blind testing. Allows tracking of each sample through all lab processes. | Lyophilized protein aliquots or freeze-dried cell pellets in 96-well format. |
| Standardized Data Capture Forms (EDC) | Ensures consistent collection of critical metadata (protocol deviations, instrument models, software versions). | REDCap electronic data capture system with enforced field entries. |
| Open-Source Analysis Pipelines | Provides a common computational method for data processing, reducing variability from in-house scripts. | A Nextflow/Snakemake pipeline for RNA-Seq alignment and differential expression, hosted on GitHub. |
| Public Data Repositories | Archives raw and processed data from consensus studies, allowing independent re-analysis and community scrutiny. | GEO, PRIDE, FlowRepository, BioStudies. |

The path to reproducible science and efficient drug development is paved with formal consensus. By implementing structured community models—from round-robin protocol testing to the establishment of analytical standards—the research community can transform consensus from a philosophical concept into a powerful technical tool for data validation. This systematic approach reduces wasteful variability, builds a foundation of robust and shared evidence, and accelerates the translation of discovery into reliable therapeutics.

Thesis Context: This whitepaper is framed within the broader research on understanding community consensus models for data validation, examining their technical superiority and practical implementation in scientific research, particularly drug development.

The traditional single-lab validation paradigm, while controlled, is increasingly viewed as a bottleneck for reproducibility, scalability, and translational confidence. Crowdsourced validation—leveraging decentralized, independent research groups to converge on a consensus result—addresses core deficiencies in modern complex research. This shift is driven by quantifiable improvements in statistical power, robustness, and the democratization of scientific verification.

Quantitative Drivers: A Comparative Analysis

Table 1: Comparative Metrics of Validation Paradigms

| Metric | Single-Lab Paradigm | Crowdsourced Validation Paradigm | Data Source / Study |
|---|---|---|---|
| Median Effect Size Replication | 78% of original (IQR: 39%-112%) | 99% of original (IQR: 88%-107%) | Multi-lab replication in cancer biology (RP:CB, 2021) |
| Statistical Power (Typical Range) | 18% - 35% | 75% - 92% | Meta-analysis of preclinical studies |
| Mean Coefficient of Variation (CV) | High (Often >50%) | Reduced by 30-60% | Reproducibility Project: Psychology (2015) |
| Time to Consensus/Validation | 3-7 years (via literature) | 12-24 months (structured design) | Various Registered Report initiatives |
| Cost per Validated Finding | High (singular burden) | Distributed; 20-40% lower aggregate | DARPA SCORE program estimates |
| Rate of False Positive Mitigation | Low (single protocol) | High (multi-protocol heterogeneity) | FDA-led MAQC Consortium studies |

Core Methodological Protocols for Crowdsourced Validation

Protocol A: Pre-Registered Multi-Laboratory Replication

- Objective: To obtain an unbiased estimate of the effect size and reproducibility of a key experimental finding.

- Methodology:

- Core Protocol Definition: The original lab or a steering committee defines a detailed, step-by-step experimental protocol, including cell lines (with STR profiling), animal models, reagent sources (Catalog #s), software settings, and statistical analysis plans.

- Laboratory Recruitment & Blinding: Independent labs (n ≥ 3, ideally 6+) are recruited via open calls. Labs are blinded to the expected outcome and often receive centrally prepared key reagents to control for source variance.

- Pre-Registration & Data Pipeline: Each lab pre-registers the protocol on a platform such as OSF or AsPredicted. Raw data are uploaded to a centralized repository (e.g., Synapse) via a standardized pipeline.

- Harmonized Analysis: A pre-specified statistical model is applied uniformly to all datasets by an independent analyst. The primary outcome is the meta-analytic combined effect size (e.g., using a random-effects model).
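The harmonized analysis names a random-effects model without specifying an estimator. The sketch below uses the DerSimonian-Laird moment estimator of between-lab variance (tau²), one common choice; REML is a frequent alternative. The per-lab effects and variances are hypothetical.

```python
import math


def random_effects_dl(effects, variances, z=1.96):
    """DerSimonian-Laird random-effects pooling of per-lab effect sizes.

    effects: per-lab effect estimates (e.g., standardized mean differences).
    variances: their within-lab sampling variances.
    Returns the pooled effect, its 95% CI, tau^2 and I^2.
    """
    k = len(effects)
    w = [1 / v for v in variances]
    sw = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sw

    # Cochran's Q and the DL moment estimate of between-lab variance tau^2.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Re-weight each lab by 1 / (within-lab variance + tau^2).
    w_re = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0
    return {"pooled": pooled, "ci": (pooled - z * se, pooled + z * se), "tau2": tau2, "i2": i2}


# Hypothetical effects from six participating labs.
labs_d = [0.42, 0.31, 0.55, 0.10, 0.38, 0.47]
labs_var = [0.02, 0.03, 0.025, 0.04, 0.02, 0.03]
print(random_effects_dl(labs_d, labs_var))
```

When tau² is zero the result collapses to the fixed-effect estimate; a large I² signals that labs disagree more than sampling error alone explains.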

Protocol B: Heterogeneity-of-Protocols (HoP) Validation

- Objective: To assess the robustness of a finding to plausible variations in methodological parameters, mimicking real-world lab-to-lab differences.

- Methodology:

- Core Principle Definition: A central biological principle or claim is defined (e.g., "Inhibition of target X reduces proliferation in cell line Y").

- Deliberate Protocol Variation: Participating labs are given the freedom to choose key parameters within bounds (e.g., different assay platforms, siRNA vs. CRISPR-KO, multiple commercially validated antibodies).

- Standardized Reporting: All labs report a minimal common dataset (effect size, precision estimate, key experimental conditions).

- Consensus Analysis: The result is considered robust if the direction of effect is consistent across >80% of methodological permutations, and the aggregated evidence passes a pre-defined significance threshold. This explicitly tests for hidden interaction effects between the finding and specific protocol choices.
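The two HoP criteria can be checked mechanically. In this sketch the "aggregated evidence" test is an unweighted Stouffer's Z over per-permutation z-statistics, which is an assumed choice (it treats permutations as independent); the effect sizes and standard errors are hypothetical.

```python
import math
from statistics import NormalDist


def hop_robust(results, direction_threshold=0.80, alpha=0.05):
    """Apply the two HoP criteria to per-permutation results.

    results: list of (effect, standard_error), one per methodological permutation,
        assumed independent.
    Criterion 1: the majority direction must hold in more than `direction_threshold`
        of permutations.
    Criterion 2: an unweighted Stouffer's combined Z must be significant at `alpha`
        (two-sided) and point in the majority direction.
    """
    n = len(results)
    positive = sum(1 for eff, _ in results if eff > 0)
    negative = sum(1 for eff, _ in results if eff < 0)
    consistency = max(positive, negative) / n

    z_combined = sum(eff / se for eff, se in results) / math.sqrt(n)
    p_value = 2 * (1 - NormalDist().cdf(abs(z_combined)))
    same_sign = (z_combined > 0) == (positive >= negative)

    robust = consistency > direction_threshold and p_value < alpha and same_sign
    return {"consistency": consistency, "z": z_combined, "p": p_value, "robust": robust}


# Hypothetical results, e.g., siRNA vs CRISPR-KO across different assay platforms.
perms = [(0.5, 0.2), (0.4, 0.2), (0.6, 0.25), (0.3, 0.2), (0.45, 0.15), (-0.1, 0.3)]
print(hop_robust(perms))
```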

Visualizing the Workflows

Diagram 1: Single-Lab vs Crowdsourced Validation Flow

Diagram 2: Heterogeneity-of-Protocols (HoP) Logic

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents for Crowdsourced Validation Studies

| Item Category | Specific Example / Product | Function in Crowdsourced Validation |
|---|---|---|
| Standardized Cell Lines | ATCC or ECACC certified cell lines with STR profile (e.g., HEK293, A549). | Ensures genetic identity across all participating labs, removing a major source of irreproducibility. |
| Reference Biologicals | WHO International Standards (e.g., for cytokines, antibodies). | Provides a universal unit for bioactivity measurement, enabling direct cross-lab data comparison. |
| Barcoded Reagent Kits | Centralized distribution of assay kits (e.g., Promega CellTiter-Glo for viability). | Eliminates lot-to-lot and vendor variance in critical assay components. |
| Validated Knockdown/KO Tools | CRISPR/Cas9 KO plasmids from Addgene (e.g., GeCKO library) or siRNA from public repositories. | Provides consistent, sequence-verified genetic perturbation tools to all labs. |
| Open Analysis Platforms | Custom Jupyter Notebooks or R/Python scripts on Code Ocean. | Guarantees identical data processing and statistical analysis, removing analytical variability. |
| Digital Lab Notebooks | Platforms like LabArchives or RSpace with API access. | Facilitates real-time monitoring of protocol adherence and structured data capture from all sites. |

This whitepaper examines pivotal historical case studies that have shaped the application of community consensus models for data validation in structural biology and genomics. Framed within the broader thesis of understanding consensus models, we detail how collaborative validation frameworks have evolved from determining protein structures to interpreting genomic variants, ensuring reproducibility and reliability for translational research and drug development.

The Protein Data Bank (PDB): A Foundation of Consensus

The establishment of the Protein Data Bank in 1971 marked a paradigm shift, creating the first centralized repository for 3D macromolecular structure data. Its evolution embodies a community consensus model for data validation.

Experimental Protocol: X-ray Crystallography Workflow (Circa 1990s)

- Protein Purification & Crystallization: Recombinant protein is expressed, purified to homogeneity, and crystallized via vapor diffusion or batch methods.

- Data Collection: A single crystal is mounted and exposed to an X-ray beam (synchrotron or laboratory source). Diffraction patterns are collected at various orientations.

- Data Processing: Diffraction spots are indexed, integrated, and scaled using software (e.g., HKL-2000, MOSFLM). Key metrics: resolution (Å), completeness, I/σ(I), Rmerge.

- Phase Determination: Initial phases are obtained either by Molecular Replacement (using a homologous structure as a search model) or experimentally via Multiple Isomorphous Replacement (MIR) or Multi-wavelength Anomalous Dispersion (MAD).

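- Model Building & Refinement: An atomic model is built into the electron density map (e.g., with O in the 1990s; Coot today). The model is refined iteratively against the diffraction data (e.g., with X-PLOR/CNS or REFMAC; phenix.refine today), lowering Rwork while monitoring Rfree, which is calculated on a held-out test set of reflections to guard against overfitting.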

- Validation & Deposition: The model is validated using geometric (Ramachandran plot, bond length/angle deviations) and electron density fit metrics before deposition to the PDB.

Table 1: Key Validation Metrics in PDB Deposition (Consensus Thresholds)

| Metric | Description | Typical Target Value (Consensus Threshold) |
|---|---|---|
| Resolution (Å) | Finest detail discernible in electron density map. | < 3.0 Å for reliable modeling. |
| Rwork / Rfree | Measures agreement between model and diffraction data. Rfree uses a reserved test set. | Difference < 0.05; Rfree < 0.30 for high quality. |
| Ramachandran Outliers | Percentage of residues in disallowed protein backbone conformation. | < 1% for well-refined structures. |
| Clashscore | Number of serious atomic overlaps per 1000 atoms. | Lower values indicate better steric packing. |
| RNA Suiteness | Measures how well RNA backbone conformations match known rotamer-like "suite" conformers. | Score close to 1.0. |
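The Rwork/Rfree row can be made concrete with the standard R-factor, R = Σ| |Fobs| − |Fcalc| | / Σ|Fobs|, evaluated separately on the working and free reflections. This sketch assumes |Fcalc| has already been computed from the model and scaled to |Fobs| (refinement programs do both), and that the free set was flagged before refinement (commonly a random 5-10% of reflections). The amplitudes are hypothetical; the thresholds are the table's.

```python
def r_factor(f_obs, f_calc):
    """Crystallographic R = sum(| |Fobs| - |Fcalc| |) / sum(|Fobs|).

    Assumes |Fcalc| has already been scaled to |Fobs|.
    """
    return sum(abs(o - c) for o, c in zip(f_obs, f_calc)) / sum(f_obs)


def work_and_free(f_obs, f_calc, free_flags):
    """Compute R on the working set and on the held-out free (test) set."""
    work = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if not free]
    test = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if free]
    return r_factor(*zip(*work)), r_factor(*zip(*test))


def meets_table_thresholds(r_work, r_free, max_r_free=0.30, max_gap=0.05):
    """Apply the Table 1 targets: Rfree < 0.30 and Rfree - Rwork < 0.05."""
    return r_free < max_r_free and (r_free - r_work) < max_gap


# Hypothetical amplitudes for four reflections, one of them in the free set.
f_obs = [100, 200, 150, 120]
f_calc = [90, 210, 150, 100]
free_flags = [False, False, False, True]
r_work, r_free = work_and_free(f_obs, f_calc, free_flags)
print(round(r_work, 3), round(r_free, 3), meets_table_thresholds(r_work, r_free))
```

A large gap between Rfree and Rwork, as in this toy case, is the classic symptom of a model overfitted to the working reflections.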

Title: PDB Structure Determination & Consensus Validation Workflow

From Single Structures to Pathways: The Signaling Cascade Consensus

The mapping of the Ras/Raf/MEK/ERK pathway demonstrated how consensus on multiple protein structures and interactions elucidates oncogenic mechanisms.

Experimental Protocol: Co-Immunoprecipitation (Co-IP) for Protein Interaction Validation

- Cell Lysis: Culture cells expressing tagged proteins of interest. Lyse in a mild, non-denaturing buffer (e.g., NP-40-based) with protease inhibitors; harsher RIPA buffer can disrupt weaker protein-protein interactions.

- Antibody Capture: Incubate lysate with antibody specific to the bait protein (or its tag). Use control IgG for background.

- Bead Immobilization: Add protein A/G agarose/sepharose beads to capture antibody-protein complexes. Incubate at 4°C with rotation.

- Washing: Pellet beads and wash 3-5 times with lysis buffer to remove non-specifically bound proteins.

- Elution & Analysis: Elute bound proteins by boiling in SDS-PAGE sample buffer. Analyze via Western blot for presence of bait and suspected prey proteins.

Title: Ras/Raf/MEK/ERK Signaling Pathway Consensus

The Genomic Era: ClinVar and Variant Interpretation Consensus

The advent of high-throughput sequencing necessitated consensus frameworks for genomic variant classification, exemplified by ClinVar and guidelines from the American College of Medical Genetics and Genomics (ACMG).

Experimental Protocol: Orthogonal Validation of NGS-Detected Variants via Sanger Sequencing

- PCR Amplification: Design primers flanking the variant identified by Next-Generation Sequencing (NGS). Perform PCR on the original genomic DNA.

- PCR Clean-up: Treat PCR product with Exonuclease I and Shrimp Alkaline Phosphatase (ExoSAP) to remove excess primers and nucleotides.

- Sequencing Reaction: Perform cycle sequencing using BigDye Terminator v3.1 mix and one of the PCR primers.

- Purification: Remove unincorporated dye terminators using ethanol/sodium acetate precipitation or column purification.

- Capillary Electrophoresis: Run sample on a genetic analyzer (e.g., Applied Biosystems 3730xl).

- Analysis: Analyze chromatogram using software (e.g., Sequencher) to confirm the presence/absence of the variant.
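Once chromatograms are read, the orthogonal confirmation step produces a per-variant outcome that labs typically summarize as a concordance rate. This sketch assumes each Sanger result is recorded as confirmed, not seen, or failed; the variant IDs are hypothetical placeholders, and failed or untested variants are excluded from the rate rather than counted as discordant (an assumed convention).

```python
def sanger_concordance(ngs_variants, sanger_calls):
    """Summarize orthogonal confirmation of NGS variant calls.

    ngs_variants: iterable of variant IDs called by NGS.
    sanger_calls: dict variant_id -> True (variant seen on the chromatogram),
        False (not seen), or None (failed or uninterpretable trace).
    """
    confirmed, discordant, failed, untested = [], [], [], []
    for v in ngs_variants:
        if v not in sanger_calls:
            untested.append(v)
        elif sanger_calls[v] is None:
            failed.append(v)
        elif sanger_calls[v]:
            confirmed.append(v)
        else:
            discordant.append(v)

    assessed = len(confirmed) + len(discordant)
    return {
        "concordance": len(confirmed) / assessed if assessed else float("nan"),
        "discordant": discordant,  # candidates for re-review of the NGS call
        "failed": failed,          # candidates for primer redesign and repeat
        "untested": untested,
    }


ngs = ["VAR001", "VAR002", "VAR003", "VAR004"]
sanger = {"VAR001": True, "VAR002": True, "VAR003": False, "VAR004": None}
print(sanger_concordance(ngs, sanger))
```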

Table 2: ACMG/AMP Variant Pathogenicity Criteria (Simplified Consensus Framework)

| Evidence Type | Criteria Example | Strength |
|---|---|---|
| Pathogenic Very Strong (PVS1) | Null variant in a gene where loss of function (LOF) is a known disease mechanism. | Very Strong |
| Pathogenic Strong (PS1-PS4) | Same amino acid change as an established pathogenic variant. | Strong |
| Pathogenic Moderate (PM1-PM6) | Located in a mutational hot spot without benign variation. | Moderate |
| Pathogenic Supporting (PP1-PP5) | Co-segregation with disease in multiple affected family members. | Supporting |
| Benign Standalone (BA1) | Allele frequency > 5% in population databases. | Standalone |
| Benign Strong (BS1-BS4) | Allele frequency greater than expected for disease. | Strong |
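The consensus value of the framework lies in its combining rules, which turn counts of met criteria into a five-tier classification. The sketch below encodes the combining rules of the 2015 ACMG/AMP guideline as commonly summarized; it also accepts benign supporting codes (BP1-BP7), which the simplified table omits but the "likely benign" rule needs. It does not model later strength adjustments (e.g., ClinGen SVI refinements), and it treats simultaneous pathogenic-side and benign-side classifications as conflicting, hence uncertain.

```python
import re
from collections import Counter

_CODE = re.compile(r"^(PVS|PS|PM|PP|BA|BS|BP)\d+$")


def acmg_classify(criteria):
    """Combine met ACMG/AMP criteria (e.g., ['PVS1', 'PM2']) into a classification."""
    counts = Counter()
    for code in criteria:
        m = _CODE.match(code)
        if not m:
            raise ValueError(f"unrecognized ACMG/AMP criterion: {code}")
        counts[m.group(1)] += 1
    pvs, ps, pm, pp = (counts[k] for k in ("PVS", "PS", "PM", "PP"))
    ba, bs, bp = (counts[k] for k in ("BA", "BS", "BP"))

    pathogenic = (
        (pvs >= 1 and (ps >= 1 or pm >= 2 or (pm >= 1 and pp >= 1) or pp >= 2))
        or ps >= 2
        or (ps >= 1 and (pm >= 3 or (pm >= 2 and pp >= 2) or (pm >= 1 and pp >= 4)))
    )
    likely_pathogenic = (
        (pvs >= 1 and pm >= 1)
        or (ps >= 1 and pm >= 1)
        or (ps >= 1 and pp >= 2)
        or pm >= 3
        or (pm >= 2 and pp >= 2)
        or (pm >= 1 and pp >= 4)
    )
    benign = ba >= 1 or bs >= 2
    likely_benign = (bs >= 1 and bp >= 1) or bp >= 2

    if (pathogenic or likely_pathogenic) and (benign or likely_benign):
        return "Uncertain significance"  # contradictory evidence
    if pathogenic:
        return "Pathogenic"
    if likely_pathogenic:
        return "Likely pathogenic"
    if benign:
        return "Benign"
    if likely_benign:
        return "Likely benign"
    return "Uncertain significance"


print(acmg_classify(["PVS1", "PM2"]))
```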

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Featured Experiments |
|---|---|
| Recombinant Protein Expression Systems (E. coli, Baculovirus, HEK293) | Produces high yields of purified protein for crystallization, biochemical assays, and interaction studies. |
| Crystallization Screening Kits (e.g., from Hampton Research) | Provides a systematic array of chemical conditions to identify initial protein crystal hits. |
| Tag-Specific Antibodies (Anti-His, Anti-GFP, Anti-FLAG) | Enables detection and immunoprecipitation of bait proteins in interaction studies like Co-IP. |
| Protein A/G Agarose Beads | Immobilizes antibodies to capture and isolate protein complexes from cell lysates. |
| Next-Generation Sequencing Library Prep Kits (e.g., Illumina TruSeq) | Prepares fragmented DNA for sequencing by adding adapters and indexes for multiplexing. |
| BigDye Terminator v3.1 Cycle Sequencing Kit | Provides fluorescently labeled dideoxynucleotides for Sanger sequencing reactions. |
| Population & Disease Variant Databases (gnomAD, ClinVar) | Provides community-curated allele frequencies and pathogenicity assertions for variant filtering and interpretation. |

This whitepaper details the operationalization of three core principles within the broader research thesis: Understanding community consensus models for data validation in biomedical research. The validation of complex, high-stakes data—particularly in drug development—requires moving beyond unilateral verification. A structured, multi-stakeholder consensus model, built on transparency, diverse expertise, and iterative refinement, is critical for ensuring robustness, reproducibility, and trust in scientific findings that underpin clinical decisions.

The Principle of Transparency

Transparency is the foundational pillar that enables scrutiny, replication, and trust. In data validation, it requires the pre-registration of methodologies, open sharing of raw and processed data (where ethically permissible), and clear documentation of all analytical choices and decision points.

Experimental Protocol for Transparent Data Auditing:

- Objective: To enable independent verification of omics data analysis (e.g., RNA-Seq) used in target identification.

- Methodology:

- Pre-registration: Protocol deposited in a repository like Open Science Framework (OSF) or ClinicalTrials.gov prior to data generation.

- Raw Data & Metadata: Deposition of raw sequence files (FASTQ) and complete sample metadata in public databases (e.g., GEO, SRA) using standardized ontologies.

- Computational Provenance: Use of containerized workflows (Docker/Singularity) and workflow management systems (Nextflow, Snakemake) to capture the complete computational environment.

- Code & Parameters: Public sharing of all analysis code (e.g., on GitHub) with explicit versioning and documentation of all software parameters.

- Interactive Reporting: Generation of interactive reports (e.g., using RMarkdown or Jupyter Books) that link figures directly to the underlying code and data subsets.
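A lightweight complement to containers and workflow managers is a checksum manifest deposited alongside the data and code, so an auditor can confirm that the files being re-analyzed are byte-identical to those the original analysis used. This is a minimal sketch using SHA-256 from Python's standard library; it is not a feature of Nextflow, Snakemake, GEO, or SRA, and the FASTQ file in the demo is a hypothetical stand-in.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large FASTQ files need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root):
    """Map every file under `root` (relative POSIX path) to its SHA-256 digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(root, manifest):
    """Return files that are missing or whose checksum no longer matches."""
    root = Path(root)
    problems = []
    for rel, digest in manifest.items():
        p = root / rel
        if not p.is_file() or sha256_of(p) != digest:
            problems.append(rel)
    return sorted(problems)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        (Path(d) / "sample1.fastq").write_text("@r1\nACGT\n+\nIIII\n")
        manifest = build_manifest(d)
        print(json.dumps(manifest, indent=2))  # deposit alongside code and data
        print(verify_manifest(d, manifest))    # [] while files are unchanged
```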

The Principle of Diversity of Expertise

Robust consensus requires integrating perspectives across disciplines. A validation panel for a novel oncology biomarker, for example, must include molecular biologists, clinical oncologists, biostatisticians, computational biologists, and possibly regulatory science experts to holistically assess technical validity, clinical relevance, and analytical soundness.

Experimental Protocol for Delphi-Style Expert Consensus:

- Objective: To validate the biological and clinical relevance of a newly proposed signaling pathway in disease pathogenesis.

- Methodology:

- Panel Formation: Recruit 12-15 experts with balanced representation from wet-lab biology, clinical medicine, bioinformatics, and biostatistics.

- Anonymized Surveys (Round 1): Experts receive a dossier of experimental data and are surveyed to rate confidence levels in each pathway component and interaction.

- Controlled Feedback & Revision (Round 2): Experts receive an anonymized summary of the group's ratings and comments. They re-evaluate their initial ratings.

- Live Consensus Meeting (Round 3): A moderated meeting discusses points of persistent disagreement. Final consensus ratings are recorded.

- Consensus Metric Calculation: Apply a predefined consensus rule, such as the median and disagreement criteria of the RAND/UCLA Appropriateness Method, to determine the final validated pathway model.
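The RAND/UCLA method rates items on a 1-9 scale and classifies each by its median, overriding to "uncertain" when the panel disagrees. The sketch below adapts the appropriateness labels to confidence in a pathway component. For a nine-member panel the method defines disagreement as at least three panelists rating 1-3 and at least three rating 7-9; the "at least one third in each extreme" rule used here for other panel sizes is a simplification (the RAND/UCLA manual recommends the IPRAS criterion instead), and the rule that medians strictly between 3 and 7 are uncertain is an assumption.

```python
import statistics


def rand_ucla_rating(ratings):
    """Classify one item rated on the 1-9 scale by a panel.

    Disagreement (simplified rule): at least one third of panelists rate 1-3 AND at
    least one third rate 7-9. Items with disagreement are classed as uncertain.
    """
    if not all(1 <= r <= 9 for r in ratings):
        raise ValueError("ratings must be on the 1-9 scale")
    n = len(ratings)
    low = sum(1 for r in ratings if r <= 3)
    high = sum(1 for r in ratings if r >= 7)
    disagreement = 3 * low >= n and 3 * high >= n  # integer test for ">= n/3"
    med = statistics.median(ratings)
    if disagreement:
        label = "uncertain (disagreement)"
    elif med >= 7:
        label = "high confidence"
    elif med <= 3:
        label = "low confidence"
    else:
        label = "uncertain"
    return med, label


# Hypothetical final-round ratings from a 15-member panel for one pathway interaction.
print(rand_ucla_rating([1] * 5 + [9] * 10))
```

In this example the median alone (9) would suggest high confidence, but the polarized ratings trigger the disagreement override, which is exactly the kind of item a Round 3 meeting should revisit.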

The Principle of Iterative Refinement

Consensus is not a single event but a process. Models and validations must be continuously updated with new evidence. This principle employs rapid cycles of hypothesis, testing, and community feedback, akin to agile development.

Experimental Protocol for Iterative Model Refinement:

- Objective: To iteratively improve a predictive machine learning model for compound toxicity.

- Methodology:

- Benchmark Release: An initial model with defined performance metrics (AUC-ROC, precision-recall) is released publicly alongside its training dataset.

- Community Challenge Phase: Researchers are invited to submit improved models or identify failure modes in the benchmark data.

- Blinded Validation: A steering committee holds out a subsequent, novel validation dataset to test community-submitted models.

- Synthesis & Update: The best-performing approaches are integrated, and the reasons for improvement are documented. The updated benchmark and "best-in-class" model are released as version 2.0, restarting the cycle.
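The blinded validation step amounts to scoring every submission on the held-out compounds with the benchmark's pre-declared metric. This sketch computes AUC-ROC in its Mann-Whitney form (the probability that a randomly chosen toxic compound outscores a randomly chosen non-toxic one, ties counting one half) and ranks submissions; the submission names and scores are hypothetical.

```python
def auc_mann_whitney(labels, scores):
    """ROC AUC as P(random positive scores above random negative), ties = 1/2.

    O(n_pos * n_neg); adequate for modest hold-out sets.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("hold-out set needs both toxic and non-toxic compounds")
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def rank_submissions(holdout_labels, submissions):
    """Score each community submission on the blinded hold-out set, best first."""
    scored = {name: auc_mann_whitney(holdout_labels, preds) for name, preds in submissions.items()}
    return sorted(scored.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical hold-out labels (1 = toxic) and predicted toxicity scores.
labels = [1, 0, 1, 0, 1, 0]
subs = {
    "baseline_v1": [0.7, 0.6, 0.4, 0.3, 0.8, 0.5],
    "team_a": [0.9, 0.2, 0.7, 0.4, 0.6, 0.3],
}
print(rank_submissions(labels, subs))
```

Keeping the hold-out labels with the steering committee, and publishing only these scores, is what prevents participants from tuning to the validation set.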

Table 1: Impact of Transparency Practices on Data Reusability in Public Repositories (Hypothetical Meta-Analysis Data)

| Repository | Studies with Full Protocols (%) | Studies with Raw Data (%) | Median Citation Increase vs. Non-Transparent Studies |
|---|---|---|---|
| Gene Expression Omnibus (GEO) | 65% | 98% | +45% |
| ProteomeXchange | 58% | 95% | +52% |
| Open Science Framework (OSF) | 92% | 88% | +112% |

Table 2: Outcomes of a Delphi Consensus Exercise on Biomarker Validation (Sample Data)

| Validation Criterion | Pre-Consensus Agreement | Post-Consensus Agreement | Key Disciplinary Divergence Resolved |
|---|---|---|---|
| Analytical Specificity | 65% | 100% | Clinicians vs. Lab Scientists on cross-reactivity thresholds |
| Clinical Sensitivity | 45% | 93% | Statisticians vs. Biologists on required N for power |
| Pathophysiological Relevance | 70% | 96% | Basic Scientists vs. Clinicians on mechanistic plausibility |

Visualization: Transparent Data Validation Workflow for Replication

Visualization: Delphi Process for Multi-Disciplinary Consensus

The Scientist's Toolkit: Research Reagent Solutions for Consensus-Driven Validation

Table 3: Essential Tools for Implementing Core Principles

| Item | Function in Consensus Model | Example/Provider |
|---|---|---|
| Electronic Lab Notebook (ELN) | Ensures transparency and traceability of primary experimental data, linking protocols to raw results. | Benchling, LabArchives |
| Workflow Management System | Captures computational provenance, enabling exact replication of bioinformatics analyses. | Nextflow, Snakemake, Galaxy |
| Containerization Platform | Packages the complete software environment, solving "works on my machine" problems. | Docker, Singularity |
| Pre-registration Repository | Timestamps and preserves study protocols and analysis plans prior to experimentation. | Open Science Framework (OSF), AsPredicted |
| Consensus Methodology Framework | Provides a structured process for eliciting and measuring group agreement. | RAND/UCLA Appropriateness Method, Delphi Technique |
| Version Control System | Manages changes to code, scripts, and documents, facilitating collaborative iterative refinement. | Git (GitHub, GitLab) |
| Data & Model Standard | Enables data interoperability and model comparison across research groups. | SBML (Systems Biology), CDISC (Clinical Data) |

Ethical and Philosophical Foundations of Scientific Consensus Building

This whitepaper examines the ethical and philosophical principles underpinning the process of building scientific consensus, framed within the critical research domain of community consensus models for data validation. It provides a technical and procedural guide for researchers, scientists, and drug development professionals, emphasizing rigorous, transparent, and inclusive methodologies to establish reliable collective judgment on empirical evidence.

In data validation research, particularly for preclinical and clinical drug development, the stakes of consensus are exceptionally high. Erroneous consensus can lead to wasted resources, failed trials, or public health risks. Ethical consensus building, therefore, moves beyond mere agreement to a structured process grounded in epistemic humility, intellectual honesty, and a commitment to public welfare. It serves as a safeguard against both individual cognitive biases and systemic groupthink.

Philosophical Pillars of Consensus

- Epistemic Justification: Consensus must be rooted in shared, accessible evidence and sound inference, not authority or social pressure.

- Falsification and Fallibilism: The process must actively seek disconfirming evidence and acknowledge the provisional nature of all scientific conclusions.

- Inclusivity and Diversity: Deliberate inclusion of diverse expertise, methodologies, and perspectives mitigates bias and enriches critical evaluation.

- Communicative Rationality: Discourse should be governed by the force of the better argument, with clear norms for presenting and challenging data.

A Procedural Framework for Ethical Consensus Building

The following workflow outlines a staged protocol for achieving consensus on data validation methods or findings.

Experimental Protocols for Consensus Validation

Research into the effectiveness of consensus models itself requires empirical validation. Below are core methodologies.

Protocol: Delphi Method for Biomarker Validation Consensus

Objective: To achieve convergence of expert opinion on the clinical validity of a novel prognostic biomarker.

- Panel Formation: Recruit a multidisciplinary panel (n=15-30) of biostatisticians, clinical chemists, oncologists, and translational scientists. Document all potential conflicts of interest.

- Round 1 (Qualitative): Pose open-ended questions regarding the biomarker's mechanistic basis, assay robustness, and clinical utility. Analyze responses to generate a structured questionnaire.

- Round 2 (Quantitative): Panelists rate each statement on a 9-point Likert scale (1=strongly disagree, 9=strongly agree) for 'appropriateness' and 'feasibility'. Provide a summary of the group's median response and interquartile range (IQR) anonymously.

- Round 3 (Refinement): Panelists re-rate statements, encouraged to revise their judgments in view of the group's feedback. The process iterates until a pre-defined stability criterion is met (e.g., change in IQR < 15% between rounds).

- Consensus Definition: A priori define consensus as ≥70% of ratings falling within the 7-9 range (agreement) and an IQR ≤ 2.
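The a priori consensus definition and the IQR stability rule from Round 3 are simple enough to compute directly. A sketch assuming integer 1-9 ratings and linearly interpolated quartiles; other quartile conventions give slightly different IQRs, so the convention should be fixed in the protocol. Function names and defaults are illustrative.

```python
from statistics import median


def quartiles(ratings):
    """Q1 and Q3 by linear interpolation (numpy's default percentile convention)."""
    xs = sorted(ratings)

    def q(p):
        pos = (len(xs) - 1) * p
        lo = int(pos)
        hi = min(lo + 1, len(xs) - 1)
        return xs[lo] + (xs[hi] - xs[lo]) * (pos - lo)

    return q(0.25), q(0.75)


def round_summary(ratings):
    """Median, IQR and share of ratings in the 7-9 agreement band."""
    q1, q3 = quartiles(ratings)
    share = sum(7 <= r <= 9 for r in ratings) / len(ratings)
    return {"median": median(ratings), "iqr": q3 - q1, "share_7_9": share}


def consensus_reached(ratings, min_share=0.70, max_iqr=2.0):
    """Consensus: at least 70% of ratings in 7-9 AND IQR no larger than 2."""
    s = round_summary(ratings)
    return s["share_7_9"] >= min_share and s["iqr"] <= max_iqr


def is_stable(prev_ratings, curr_ratings, max_rel_change=0.15):
    """Stopping rule: relative change in IQR between rounds below 15%."""
    prev_iqr = round_summary(prev_ratings)["iqr"]
    curr_iqr = round_summary(curr_ratings)["iqr"]
    if prev_iqr == 0:
        return curr_iqr == 0
    return abs(curr_iqr - prev_iqr) / prev_iqr < max_rel_change
```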

Protocol: Principled Discord Analysis

Objective: To formally document and characterize systematic dissent within a consensus process, ensuring minority viewpoints are captured.

- Position Paper Solicitation: Following a consensus conference, invite all dissenters to submit a structured position paper outlining their methodological or interpretive objections.

- Coding Framework: Use a grounded theory approach to code objections into categories (e.g., "Statistical Power," "Model Assumptions," "Clinical Generalizability").

- Impact Assessment: Log the consensus group's formal response to each coded objection, categorizing the response as: (a) Protocol amended, (b) Acknowledged as limitation, (c) Rejected with rationale.

- Publication: Dissenting positions and responses are published alongside the consensus statement.

Quantitative Metrics & Outcomes

Data from recent meta-research on consensus models in biomedical research is summarized below.

Table 1: Efficacy Metrics of Structured vs. Unstructured Consensus Methods

| Metric | Unstructured Panel Discussion (Historical Control) | Modified Delphi Protocol | Principled Discord Protocol |
|---|---|---|---|
| Time to Convergence (days) | 14 - 21 | 28 - 42 | + 7-10 (added phase) |
| Reported Satisfaction (1-10 scale) | 6.2 ± 1.5 | 8.1 ± 0.9 | 8.5 ± 0.8 (majority); 7.9 ± 1.1 (dissenters) |
| Post-Hoc Retraction Rate | 12% | 4% | 2% (estimated) |
| Citation of Limitations | 45% of papers | 92% of statements | 100% of statements |

Table 2: Common Biases in Data Validation Consensus & Mitigations

| Bias Type | Description in Research Context | Procedural Mitigation |
|---|---|---|
| Authority Bias | Deferring to the most senior or vocal panelist. | Anonymous voting; blinded critique of evidence. |
| Confirmation Bias | Seeking/weighting data that confirms prior beliefs. | Mandatory "red-team" critique; falsification focus. |
| Bandwagon Effect | Adopting a position because it seems popular. | Sequential, independent voting with feedback. |
| Methodological Chauvinism | Dismissing findings from unfamiliar techniques. | Multidisciplinary panel; primer documents on all methods. |

The Scientist's Toolkit: Essential Reagents for Consensus Research

Table 3: Research Reagent Solutions for Consensus Studies

| Item/Category | Function in Consensus Research | Example/Specification |
|---|---|---|
| Delphi Survey Platform | Enables anonymous, iterative polling and controlled feedback. | Qualtrics XM, eDelphi; must support conditional logic and data export. |
| Blinded Evidence Dossier | A standardized packet of data, literature, and analyses presented to panelists without identifying authors or prominent advocates. | PDF portfolio with redacted authorship, using standardized data tables (e.g., CDISC format). |
| Consensus Threshold Library | Pre-defined, field-specific statistical criteria for declaring agreement. | e.g., RAND/UCLA Appropriateness Method criteria; pre-registered percentage and dispersion thresholds. |
| Dissent Documentation Template | A structured form for capturing and categorizing minority viewpoints. | Sections for: Core Objection, Alternative Interpretation, Supporting Evidence, Proposed Wording. |
| Conflict of Interest Registry | A dynamic, publicly accessible log of panelists' financial and non-financial conflicts. | Custom database updated in real time, cross-checked against public payment disclosures such as the U.S. Open Payments database. |

Signaling Pathways in Consensus Formation

The cognitive and social dynamics of consensus can be modeled as an adaptive signaling network.

Building scientific consensus is not a passive outcome but an active, ethical practice requiring deliberate design. For the data validation research community, adopting structured, transparent, and philosophically grounded consensus models is paramount to ensuring that collective judgments are both robust and rightful, ultimately accelerating reliable drug development and protecting scientific integrity. The protocols, metrics, and tools outlined here provide a foundational framework for this essential work.

Building the Framework: Methodologies for Implementing Consensus in Research Pipelines

Within the thesis Understanding community consensus models for data validation research, operational models for generating and quantifying consensus are foundational. These models transition from subjective, expert-driven approaches to structured, objective, and crowd-sourced frameworks. This guide details the technical evolution from the Delphi method to modern community challenges like DREAM and CAFA, emphasizing their protocols, quantitative assessment, and application in biomedical research.

The Delphi method is a systematic, iterative forecasting process relying on a panel of experts.

Experimental Protocol:

- Expert Panel Formation: Select 10-50 experts with diverse, relevant expertise.

- Round 1 (Open-Ended): Pose a broad question (e.g., "What are the key biomarkers for Disease X?"). Experts provide unstructured responses. Facilitators anonymize and aggregate responses into a consolidated list.

- Round 2 (Rating): Experts rate or rank the aggregated items (e.g., on a Likert scale for importance/feasibility). Results are statistically summarized (median, interquartile range).

- Round 3+ (Feedback & Revision): Experts receive the group's statistical summary and their own previous rating. They are encouraged to revise their judgments, often providing reasons for outliers. Rounds continue until a pre-defined stop criterion is met (e.g., stability in responses, consensus threshold).

Data Presentation:

Table 1: Hypothetical Delphi Results for Biomarker Prioritization (After Round 3)

| Biomarker Candidate | Median Importance (1-9) | Interquartile Range (Q1 - Q3) | Consensus Level |
|---|---|---|---|
| Protein A | 8 | 7.5 - 8.5 (Low) | High |
| miRNA-B | 7 | 5 - 8 (Moderate) | Moderate |
| Metabolite C | 4 | 2 - 6 (High) | Low |

Consensus strength is inversely related to IQR width: the narrower the IQR, the more tightly the panel's ratings cluster around the median.

Structured Community Challenges: DREAM and CAFA

These models formalize the consensus process into open competitions using gold-standard datasets.

The DREAM Framework (Dialogue on Reverse Engineering Assessment and Methods)

DREAM challenges pose fundamental questions in systems biology and translational medicine.

Core Experimental Protocol:

- Challenge Design: Organizers define a precise question and create a scaffolded dataset (e.g., training data, validation data, and final test data with held-out ground truth).

- Participant Engagement: Global research teams download data and develop predictive models or algorithms.

- Prediction Submission: Participants submit predictions on the test set to a standardized platform.

- Blinded Assessment: Organizers score all submissions against the held-out ground truth using pre-specified, rigorous metrics.

- Consensus Aggregation: Organizers often build a "community prediction" by aggregating (e.g., averaging) several high-performing individual submissions and compare it against the best single submission.

- Publication & Dissemination: Results and methods are published in peer-reviewed consortium papers.
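One common way to build the community prediction is rank averaging: each selected team's scores are converted to ranks and the ranks are averaged per item, so teams with differently calibrated scores contribute equally. A minimal sketch, assuming every submission scores the same items in the same order and that the contributing teams were chosen beforehand; the function names are illustrative, not a DREAM API.

```python
def ranks_descending(scores):
    """Rank items by score (1 = highest confidence); ties share their average rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1            # average of the 1-based positions i..j
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def community_prediction(submissions, teams):
    """Mean rank per item across the selected teams (lower = stronger consensus call)."""
    rank_lists = [ranks_descending(submissions[t]) for t in teams]
    n_items = len(rank_lists[0])
    return [sum(r[i] for r in rank_lists) / len(rank_lists) for i in range(n_items)]
```

Choosing the contributing teams on the validation split rather than on the held-out test set keeps the aggregate from being tuned to the ground truth it is later scored against.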

The CAFA Challenge (Critical Assessment of Function Annotation)

CAFA is a recurring DREAM-style challenge focused on predicting protein function.

CAFA-specific Protocol (e.g., CAFA4):

- Target Release: A set of protein sequences (targets) with unknown or incomplete function annotation is released.

- Training Phase: Participants use any public data prior to a cutoff date to train models.

- Prediction Phase: Teams submit function predictions (Gene Ontology terms) for the targets within a time window.

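- Curation Phase: After an annotation-accumulation period, targets that have gained experimentally supported annotations in public databases (e.g., UniProt-GOA) since the prediction deadline form the high-confidence ground truth; some rounds have supplemented this with targeted experimental screens and literature curation.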

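- Evaluation: Predictions are evaluated with protein-centric precision-recall analysis across score thresholds. F-max, the maximum over thresholds of the harmonic mean of precision and recall, is the primary metric; information-theoretic measures such as S-min, which weight terms by their information content, complement it.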
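Protein-centric F-max can be sketched as follows. At each threshold, precision is averaged over proteins that have at least one prediction above the threshold, recall is averaged over all benchmark proteins, and the best harmonic mean is kept. This assumes predicted and reference GO terms have already been propagated to their ontology ancestors, which the sketch does not do; the 0.01-step threshold grid is also an assumption.

```python
def f_max(predictions, truth, thresholds=None):
    """Protein-centric F-max in the style of CAFA.

    predictions: {protein: {go_term: score in [0, 1]}}
    truth:       {protein: non-empty set of experimentally supported go_terms}
    """
    if thresholds is None:
        thresholds = [t / 100 for t in range(1, 101)]
    best = 0.0
    for tau in thresholds:
        precisions, recalls = [], []
        for protein, true_terms in truth.items():
            predicted = {t for t, s in predictions.get(protein, {}).items() if s >= tau}
            tp = len(predicted & true_terms)
            recalls.append(tp / len(true_terms))      # every benchmark protein counts
            if predicted:                             # precision only where predictions exist
                precisions.append(tp / len(predicted))
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = sum(recalls) / len(recalls)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best
```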

Data Presentation:

Table 2: Summary of Selected DREAM/CAFA Challenge Outcomes

| Challenge Name | Core Question | Key Metric | Community Performance vs. Best Single Method | Top Consensus Method |
|---|---|---|---|---|
| CAFA4 (2020-21) | Protein function prediction | F-max (Protein Function) | Community aggregation consistently outperformed best single model. | Meta-analysis of top predictors. |
| DREAM SMC (2017) | Somatic mutation calling in cancer genomes | F-score (Precision/Recall Balance) | Ensemble methods showed superior robustness. | Bayesian ensemble of multiple callers. |
| NCI-CPTAC Proteogenomics (2016) | Identify proteogenomic novel peptides | False Discovery Rate (FDR) | Aggregated submissions reduced false positives. | Concordance-based filtering across pipelines. |

Visualization: Workflow of a structured community challenge (DREAM/CAFA)

Comparative Analysis: Signaling Pathways to Consensus

The logical flow from problem to consensus differs fundamentally between the two models.

Visualization: Decision pathway for selecting an operational consensus model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Implementing Consensus Models

| Item / Resource Category | Specific Example(s) | Function in Consensus Research |
|---|---|---|
| Expert Recruitment Platform | Online survey tools (Qualtrics, SurveyMonkey), secure email lists. | Facilitates anonymous iteration and response aggregation in Delphi studies. |
| Data Scaffolding & Versioning | Synapse (sagebionetworks.org), GitHub, Zenodo. | Provides structured, access-controlled release of training/validation/test data for challenges. |
| Prediction Submission Portal | Synapse, CodaLab (codalab.org). | Standardizes prediction file format, timestamp, and ensures blinded evaluation. |
| Evaluation Metrics Library | scikit-learn (Python), CAFA evaluation scripts (e.g., cafa-evaluator). | Provides objective, reproducible scoring of predictions against ground truth (F-max, AUPRC, etc.). |
| Consensus Aggregation Tool | Custom scripts for model averaging, stacking, or Bayesian integration. | Generates the final community prediction from top individual submissions. |
| Ground Truth Curation Resource | UniProt, Gene Ontology Annotations, experimental datasets (e.g., CPTAC). | Forms the definitive benchmark for evaluating predictive models in challenges. |

Within the broader research thesis on Understanding community consensus models for data validation, this technical guide examines the specialized tools and infrastructure enabling crowdsourced validation of complex biomedical data. This approach leverages collective intelligence to address scalability and reproducibility challenges in genomics, medical imaging, and clinical annotation.

Platform Architectures & Core Components

Modern platforms are built on modular architectures integrating task design, contributor management, quality control, and data aggregation layers. The core infrastructural challenge lies in balancing accessibility for a diverse contributor pool with the rigorous demands of biomedical data handling.

Quantitative Comparison of Major Platforms

Table 1: Feature and Performance Comparison of Key Platforms (Data compiled from current sources as of 2024)

| Platform / Tool | Primary Data Type | Common Validation Task | Avg. Contributor Pool Size | Reported Accuracy Gain vs. Single Expert | Key Consensus Model |
|---|---|---|---|---|---|
| Zooniverse | Medical Images, Ecology | Phenotype classification, Object detection | 1.5M+ volunteers | 15-25% (varies by project) | Weighted Majority Vote |
| CellHunter (Custom Platform) | Cellular Microscopy | Cell boundary annotation, Organelle ID | 500-5K (expert-leaning) | 30-40% | Probabilistic Graphical Model |
| Amazon SageMaker Ground Truth | Multi-omics, Text | Variant calling, Entity recognition | Configurable (Public/Private) | N/A (Tool, not study) | Expectation Maximization |
| Figure Eight (now Appen) | Clinical Text, Sensor | Adverse event extraction, Time-series label | 1M+ contributors | 20-30% | Dawid-Skene Model |
| Citizen Science Cancer | Histopathology Slides | Tumor region segmentation | ~100K volunteers | ~25% (reaching pathologist concordance) | Spatial Consensus Clustering |

Experimental Protocols for Validation Studies

Rigorous methodology is required to evaluate the efficacy of crowdsourcing for biomedical data validation.

Protocol: Measuring Inter-Annotator Agreement on Genomic Variant Classification

Objective: Quantify consensus reliability among distributed contributors on pathogenicity labels for genetic variants.

Materials:

- Dataset: 500 curated variants from ClinVar with conflicting interpretations.

- Platform: Custom React.js frontend with Node.js/PostgreSQL backend.

- Contributors: Recruited via partner patient advocacy groups (n=200) and MSc/PhD students (n=50).

- Gold Standard: Expert panel classification (3 clinical geneticists).

Procedure:

- Pre-Task Training: Contributors complete a 15-minute interactive module on variant interpretation basics.

- Task Design: Each variant is presented with genomic context, protein effect, and population frequency. Contributors choose: Pathogenic, Likely Pathogenic, Uncertain Significance, Likely Benign, Benign.

- Assignment: Each variant is assigned to 10 independent contributors using an overlap control design.

- Data Collection: Responses, confidence scores, and time-on-task are logged.

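- Consensus Calculation: Apply the Dawid-Skene model to estimate the true label of each variant and each contributor's confusion matrix (per-class error rates). Dawid-Skene does not model item difficulty; if difficulty matters, a model such as GLAD is needed.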

- Validation: Compare crowd consensus (model-derived) to expert gold standard using Cohen's Kappa. Perform subgroup analysis (advocacy group vs. students).
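The validation step compares two label lists item by item. A minimal, dependency-free sketch of unweighted Cohen's kappa, assuming crowd and expert labels are given as parallel lists of category strings; the subgroup analysis amounts to calling it once per contributor group. For the five ordered ACMG-style categories, a weighted kappa (linear or quadratic weights) would penalize near-misses less; this sketch keeps the unweighted form the protocol names.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of categorical labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement from each rater's marginal category frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return float("nan")   # both raters used one identical category: kappa undefined
    return (observed - expected) / (1 - expected)
```

For example, `cohens_kappa(crowd_labels_students, expert_labels)` and `cohens_kappa(crowd_labels_advocacy, expert_labels)` (hypothetical variable names) would give the two subgroup estimates.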

Protocol: Distributed Validation of Single-Cell RNA-Seq Clustering

Objective: Utilize crowd insight to validate automated clustering results from scRNA-seq data.

Materials:

- Data: UMAP/t-SNE embeddings of 50,000 cells from a tumor microenvironment dataset.

- Tool: Cellxgene VIP interface configured for crowd labeling.

- Contributors: 30 bioinformatics trainees.

Procedure:

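- Algorithmic Pre-Clustering: Generate approximately 15 initial clusters with Seurat's FindClusters, tuning its resolution parameter to reach that number (the function does not take a target cluster count directly).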

- Crowd Task: Present contributors with 2D embeddings and gene marker tables. Ask: "Should Cluster A and Cluster B be merged? (Yes/No/Uncertain)" for all pairwise combinations within a subset.

- Aggregation: Use a weighted majority vote, weighting contributors by their agreement with a prior derived from gene marker specificity.

- Hierarchical Reconciliation: Apply hierarchical clustering constrained by the crowd's merge/no-merge decisions.

- Benchmark: Compare biological coherence (e.g., enrichment of known cell-type signatures) of crowd-corrected clusters vs. algorithmic clusters.
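The aggregation and reconciliation steps can be sketched in two small functions. This assumes per-contributor weights have already been derived (for example from agreement with the marker-specificity prior) and uses union-find as a simpler stand-in for constrained hierarchical clustering. Note that union-find merging is transitive: accepting A-B and B-C also joins A and C, even if the crowd voted against that pair. All names are hypothetical.

```python
from collections import defaultdict


def weighted_merge_votes(votes, weights):
    """votes: (contributor, cluster_a, cluster_b, answer) with answer in
    {"yes", "no", "uncertain"}. A pair is accepted when its weighted "yes" mass
    exceeds its weighted "no" mass; "uncertain" answers are ignored."""
    tally = defaultdict(float)
    for contributor, a, b, answer in votes:
        pair = tuple(sorted((a, b)))
        w = weights.get(contributor, 1.0)          # unweighted if no prior weight
        if answer == "yes":
            tally[pair] += w
        elif answer == "no":
            tally[pair] -= w
    return {pair for pair, score in tally.items() if score > 0}


def merge_clusters(clusters, merge_pairs):
    """Union-find: collapse clusters connected by accepted merge decisions."""
    parent = {c: c for c in clusters}

    def find(c):
        while parent[c] != c:
            parent[c] = parent[parent[c]]          # path halving
            c = parent[c]
        return c

    for a, b in merge_pairs:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra
    groups = defaultdict(set)
    for c in clusters:
        groups[find(c)].add(c)
    return sorted(sorted(g) for g in groups.values())
```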

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Materials for Building or Utilizing Crowdsourcing Platforms

| Item / Solution | Provider/Example | Primary Function in Crowdsourcing Workflow |
|---|---|---|
| Annotation Frontend Framework | React + Redux, Vue.js | Provides responsive, interactive interfaces for complex data labeling tasks (e.g., polygon drawing on images, sequence browsing). |
| Consensus Modeling Library | crowd-kit (Yandex Toloka), dawid-skene (Python PyPI) | Implements statistical models (Dawid-Skene, MACE, GLAD) to infer true labels from noisy, multi-contributor data. |
| Task Routing Engine | Apache Airflow, Prefect | Manages dynamic task assignment, redundancy logic, and quality control workflows. |
| Biomedical Data Viewer | cellxgene, OHIF Viewer, IGV.js | Enables secure, web-based visualization of specialized data (single-cell, medical images, genomics) for non-expert contributors. |
| Quality Control Dashboard | Grafana, Metabase | Monitors contributor performance, task completion rates, and consensus convergence in real-time. |
| Data De-identification Tool | presidio (Microsoft), PhiDeidentifier | Automates the removal of Protected Health Information (PHI) from clinical text and DICOM headers to enable secure crowdsourcing. |
| Contributor Reputation Database | PostgreSQL with custom schema | Tracks contributor accuracy over time, expertise domains, and reliability scores for adaptive task assignment. |

Workflow & System Diagrams

Crowdsourcing Validation Platform Core Workflow

Dawid-Skene Statistical Consensus Model Logic

This technical guide details the design of a consensus initiative, a systematic approach to aggregating independent judgments to validate complex data, within the broader research thesis on Understanding community consensus models for data validation research. In fields like drug development, where data integrity is paramount, such models harness collective expertise to assess preclinical findings, clinical trial design, or biomarker identification. This document provides a rigorous framework for participant recruitment and task design to generate reliable, auditable consensus.

Participant Recruitment: Strategies and Criteria

Effective recruitment targets a defined community of experts to minimize bias and maximize validity.

Recruitment Strategies

- Stratified Purposive Sampling: Recruit participants from predefined strata (e.g., academia, industry, clinical practice, biostatistics) to ensure diverse perspectives.

- Snowball Sampling: Ask identified experts to nominate qualified peers, expanding the network while leveraging professional trust.

- Invitation-Only Panels: Generate high commitment and reduce noise by directly inviting leaders in the field based on publication records or proven expertise.

Eligibility & Screening Criteria

Participants must be vetted against objective metrics to ensure qualification.

Table 1: Quantitative Eligibility Criteria for Participant Screening

| Criterion Category | Specific Metric | Minimum Threshold | Validation Method |
|---|---|---|---|
| Professional Experience | Years in relevant field (e.g., oncology) | ≥ 5 years | CV/Resume review |
| Research Output | Number of peer-reviewed publications on topic | ≥ 3 first/senior author | PubMed/Scopus query |
| Clinical Trial Involvement | Role as PI/Co-I on registered trials | ≥ 1 trial | ClinicalTrials.gov search |
| Formal Recognition | Grants awarded, society leadership roles | At least one indicator | Documentation review |

Recruitment Workflow Protocol

Protocol: Recruiting a Stratified Expert Panel

- Define Population Strata: Identify and weight relevant professional sectors (e.g., 40% clinical researchers, 30% basic scientists, 20% biostatisticians, 10% patient advocacy leads).

- Generate Prospect List: Compile potential participants from recent high-impact literature, conference proceedings, and professional society directories.

- Initial Contact & Screening: Send a standardized invitation outlining the initiative's goals, time commitment, and compensation. Include a link to a screening survey to collect data per Table 1.

- Vetting & Selection: A steering committee reviews screened applicants against thresholds. Selections aim to meet stratum quotas while ensuring no institutional or ideological over-representation.

- Formal Enrollment: Send enrollment packets with confidentiality agreements and detailed project briefs to selected participants.
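The screening and quota steps can be made explicit in code. A minimal sketch: the `Candidate` fields mirror Table 1, and seats are allocated to strata from target proportions with the largest-remainder method so that the quotas always sum to the panel size. Applying all four thresholds to every stratum is an assumption (a patient-advocacy lead will rarely be a trial investigator), so a steering committee may waive criteria per stratum. Class and function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    stratum: str
    years_experience: int
    lead_publications: int        # first/senior-author papers on the topic
    trials_as_investigator: int   # PI/Co-I roles on registered trials
    recognition_indicators: int   # grants, society leadership roles, etc.


def eligible(c):
    """Apply the Table 1 minimum thresholds (all criteria, every stratum)."""
    return (c.years_experience >= 5 and c.lead_publications >= 3
            and c.trials_as_investigator >= 1 and c.recognition_indicators >= 1)


def stratum_quotas(weights, panel_size):
    """Largest-remainder allocation of panel seats from target stratum proportions."""
    raw = {s: w * panel_size for s, w in weights.items()}
    seats = {s: math.floor(v) for s, v in raw.items()}
    leftover = panel_size - sum(seats.values())
    # Give the remaining seats to the strata with the largest fractional parts.
    for s in sorted(raw, key=lambda s: raw[s] - seats[s], reverse=True)[:leftover]:
        seats[s] += 1
    return seats
```

With the 40/30/20/10 split from the protocol and a 12-member panel, this yields 5, 4, 2 and 1 seats.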

Title: Participant Recruitment and Selection Workflow

Well-designed tasks standardize the process of judgment elicitation, enabling quantitative aggregation.

Core Task Typology

Tasks should move from independent assessment to structured interaction.

- Independent Rating: Participants privately score statements or evidence on Likert scales (e.g., 1-9) for strength, validity, or relevance.

- Calibrated Estimation: Participants provide numeric estimates (e.g., drug efficacy, effect size) with confidence intervals, potentially using seed questions with known answers to weight expertise.

- Structured Argumentation: Participants provide written justifications for their ratings, identifying key evidence or assumptions.

- Iterative Feedback (Modified Delphi): Participants review anonymized summaries of the group's ratings and justifications, then have the opportunity to revise their initial judgments.

Experimental Protocol for a Modified Delphi Consensus Task

Protocol: Iterative Consensus on a Target Validation Dataset

- Pre-Work: Distribute a standardized evidence dossier (preclinical data, early clinical readouts, literature review) to all participants.

- Round 1 - Independent Assessment: Using a secure platform, participants:

  - Rate the statement: "The collective evidence strongly validates Target X as a primary intervention for Disease Y."

  - Scale: 1 (Strongly Disagree) to 9 (Strongly Agree).

  - Provide a confidence rating (50-100%) and a mandatory free-text rationale.

- Analysis & Feedback Preparation: Calculate median score, inter-quartile range (IQR), and anonymize key rationales for both agreement and disagreement.

- Round 2 - Iterative Revision: Participants receive their own Round 1 response alongside the group's statistical summary and anonymized rationales. They then submit a revised rating and rationale.

- Consensus Measurement: Final consensus is defined as ≥70% of ratings within a 3-point range (e.g., 7-9) AND an IQR ≤ 2. Statistical tests (e.g., Wilcoxon signed-rank) assess significance of rating shifts.
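The Wilcoxon signed-rank comparison of paired Round 1 and Round 2 ratings can be sketched without external libraries. This version uses the large-sample normal approximation with a tie correction and no continuity correction; with typical Delphi panel sizes an exact test (for example `scipy.stats.wilcoxon`) is preferable, so treat this as an illustration of the mechanics only.

```python
import math


def wilcoxon_signed_rank(before, after):
    """Two-sided Wilcoxon signed-rank test, normal approximation.
    Zero differences are dropped; tied |differences| receive average ranks."""
    diffs = [b - a for a, b in zip(before, after) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        t = j - i + 1
        tie_term += t ** 3 - t                    # variance correction for ties
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    var = n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48
    z = (w_plus - mean) / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))          # two-sided normal p-value
    return w_plus, z, p
```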

Quantitative Outputs and Data Aggregation

Data from tasks must be summarized for clarity and decision-making.

Table 2: Example Aggregated Results from a Consensus Round

| Metric | Round 1 | Round 2 | Change | Interpretation |
|---|---|---|---|---|
| Median Score (1-9) | 6.5 | 7.5 | +1.0 | Increased group confidence |
| Inter-Quartile Range (IQR) | 4.0 (Q1=5, Q3=9) | 2.0 (Q1=7, Q3=9) | -2.0 | Convergence of opinion |
| % in 7-9 Range | 55% | 82% | +27 pp | Consensus threshold met |
| Mean Confidence | 78% | 85% | +7 pp | Increased self-assuredness |

Title: Modified Delphi Task Iterative Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Platforms for Consensus Initiatives

| Item / Solution | Category | Primary Function |
|---|---|---|
| Secure Online Delphi Platform (e.g., DelphiManager, ExpertLens) | Software | Hosts surveys, manages iterative rounds, anonymizes responses, and provides real-time analytical dashboards for facilitators. |
| REDCap (Research Electronic Data Capture) | Software | A secure, HIPAA-compliant web platform for building and managing online surveys and databases, suitable for initial data collection. |
| Standardized Evidence Dossier Template | Document | Ensures all participants receive identical, structured background information (PDF/Web), minimizing bias from variable evidence access. |
| Consensus Criteria Definition Matrix | Document | Pre-specifies the statistical and percentage thresholds (e.g., IQR ≤2, 70% agreement) that define consensus, stopping rules, and handling of outliers. |
| Anonymized Rationale Aggregation Script (Python/R) | Code | Automates the extraction, sanitization (removing identifiers), and thematic grouping of free-text rationales for feedback between rounds. |
| Statistical Analysis Package (e.g., SPSS, R, with irr package) | Software | Calculates inter-rater reliability (Krippendorff's alpha), rating distribution statistics, and significance tests for rating changes across rounds. |

Statistical and Computational Methods for Aggregating Annotations and Predictions

This whitepaper provides an in-depth technical guide on methods for aggregating diverse annotations and predictions, a critical component in data validation research. Framed within a broader thesis on understanding community consensus models, these methods are essential for generating reliable ground truth from noisy, subjective, or conflicting data sources—a common challenge in fields ranging from computational biology to drug development.

Foundational Aggregation Models

Statistical Models for Categorical Labels

For tasks where multiple annotators label items into discrete categories (e.g., disease classification from histopathology images), several probabilistic models estimate both the true label and annotator reliability.

Dawid-Skene Model: A classic expectation-maximization (EM) algorithm that models each annotator's confusion matrix.

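- Latent Variable: True label \( z_i \) for item \( i \).

- Parameters: Each annotator \( j \) has a confusion matrix with entries \( \pi_j^{(k,l)} = p(\text{annotator } j \text{ says } l \mid z_i = k) \). In the binary (one-vs-rest) view this reduces to a sensitivity \( \alpha_j^{(k)} = p(\text{annotator } j \text{ says } k \mid z_i = k) \) and a specificity \( \beta_j^{(k)} = p(\text{annotator } j \text{ does not say } k \mid z_i \neq k) \).

- Update Rules: The E-step computes the posterior over true labels given the current parameters. The M-step updates the class priors and annotator parameters using the posterior-weighted counts.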
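The EM loop described above fits in a few dozen lines of NumPy. This is a minimal sketch, not a reference implementation: it assumes integer class labels with `-1` marking a missing annotation, initializes posteriors from a soft majority vote, and adds small smoothing constants (an assumption) so that no probability becomes exactly zero. Libraries listed in the toolkit tables provide maintained implementations.

```python
import numpy as np


def dawid_skene(labels, n_classes, n_iter=50, tol=1e-6):
    """EM for the Dawid-Skene model.

    labels: int array (n_items, n_annotators); -1 marks a missing annotation.
    Returns posteriors [n_items, n_classes] and confusion matrices
    pi[j, k, l] = p(annotator j says l | true class k).
    """
    labels = np.asarray(labels)
    n_items, n_annot = labels.shape
    observed = labels >= 0

    # Initialise posteriors with a soft majority vote.
    T = np.zeros((n_items, n_classes))
    for j in range(n_annot):
        rows = np.where(observed[:, j])[0]
        T[rows, labels[rows, j]] += 1
    T = (T + 1e-9) / (T + 1e-9).sum(axis=1, keepdims=True)

    for _ in range(n_iter):
        # M-step: class priors and confusion matrices from posterior-weighted counts.
        priors = T.sum(axis=0) + 1e-6
        priors /= priors.sum()
        pi = np.full((n_annot, n_classes, n_classes), 1e-6)
        for j in range(n_annot):
            rows = np.where(observed[:, j])[0]
            for l in range(n_classes):
                hit = rows[labels[rows, j] == l]
                pi[j, :, l] += T[hit].sum(axis=0)
        pi /= pi.sum(axis=2, keepdims=True)

        # E-step: log-posterior of each true class given all observed annotations.
        log_T = np.tile(np.log(priors), (n_items, 1))
        for j in range(n_annot):
            rows = np.where(observed[:, j])[0]
            log_T[rows] += np.log(pi[j][:, labels[rows, j]]).T
        log_T -= log_T.max(axis=1, keepdims=True)
        new_T = np.exp(log_T)
        new_T /= new_T.sum(axis=1, keepdims=True)

        converged = np.abs(new_T - T).max() < tol
        T = new_T
        if converged:
            break
    return T, pi
```

The argmax of each posterior row gives the aggregated label, and the diagonal of `pi[j]` summarizes how often annotator `j` reports each class correctly.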

Generative Model of Labels, Abilities, and Difficulties (GLAD): Extends Dawid-Skene by introducing item difficulty.

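- Annotator \( j \) has expertise \( \alpha_j \in (-\infty, \infty) \); negative values describe annotators who tend to give the wrong label.

- Item \( i \) has inverse difficulty \( \beta_i \in (0, \infty) \): the difficulty is \( 1/\beta_i \), so a larger \( \beta_i \) means an easier item.

- Probability that annotator \( j \) labels item \( i \) correctly: \( \sigma(\alpha_j \beta_i) \), where \( \sigma(x) = 1/(1 + e^{-x}) \) is the logistic function.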

Aggregation of Continuous Predictions

For regression tasks or confidence scores, aggregation focuses on bias correction and variance reduction.

- Bayesian Truth Serum (BTS) and its Variants: Rewards annotators based on both their own answer and their prediction of the population's answer, encouraging truthful reporting.

- Linear Opinion Pool: A weighted average of predictions, \( \hat{y}_i = \sum_{j=1}^{J} w_j y_{ij} \), where the weights \( w_j \) can be learned from past performance.

- Logarithmic Opinion Pool: \( \hat{P}(y_i) \propto \prod_{j=1}^{J} P_j(y_i)^{\alpha_j} \), equivalent to a weighted geometric mean of the annotators' distributions, which often yields sharper, more confident aggregates.
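The two pooling rules can be compared on a toy example. A minimal sketch: the linear pool averages scalar predictions with normalized weights, and the logarithmic pool combines discrete probability vectors, which must be strictly positive because the sketch takes their logarithms. Function names are illustrative.

```python
import math


def linear_pool(predictions, weights):
    """Weighted arithmetic mean of scalar predictions (weights are normalised)."""
    total = sum(weights)
    return sum(w * p for w, p in zip(weights, predictions)) / total


def log_pool(distributions, alphas):
    """Logarithmic opinion pool over a discrete outcome space.

    distributions: one strictly positive probability vector per annotator.
    Returns the normalised product prod_j P_j(y) ** alpha_j.
    """
    n_outcomes = len(distributions[0])
    log_scores = [
        sum(a * math.log(d[y]) for a, d in zip(alphas, distributions))
        for y in range(n_outcomes)
    ]
    m = max(log_scores)                    # subtract the max for numerical stability
    unnorm = [math.exp(s - m) for s in log_scores]
    z = sum(unnorm)
    return [u / z for u in unnorm]
```

Two annotators who each report (0.8, 0.2) pool to (0.8, 0.2) under a linear pool or a log pool with exponents summing to 1, but to roughly (0.94, 0.06) under a log pool with both exponents set to 1, which illustrates the sharpening effect.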

Table 1: Performance Comparison of Aggregation Methods on Public Datasets

| Method | Dataset (Task) | # Annotators | # Items | Aggregate Accuracy (F1) | Benchmark |
|---|---|---|---|---|---|
| Majority Vote | LabelMe (Image Class.) | 77 | 1000 | 0.891 | Baseline |
| Dawid-Skene | LabelMe (Image Class.) | 77 | 1000 | 0.927 | +4.0% |
| GLAD | RTE (Textual Entailment) | 164 | 800 | 0.912 | +5.2% over MV |
| MACE | Crowdflower (Sentiment) | 203 | 5000 | 0.941 | Superior for spam detection |
| BWA (Bias-Aware) | BioMedical NER | 5 experts | 1500 | 0.884 | Handles systematic bias |

Table 2: Impact of Aggregation on Predictive Model Performance

| Training Label Source | Model (Drug-Target Interaction) | AUROC | AUPRC | Notes |
|---|---|---|---|---|
| Single Expert Annotator | Random Forest | 0.81 | 0.76 | High variance |
| Simple Majority Vote | Graph Neural Network | 0.87 | 0.82 | Improved consistency |
| Dawid-Skene Aggregation | Graph Neural Network | 0.91 | 0.88 | Robust to noisy annotators |
| Multi-Phase Consensus | Deep Ensemble | 0.90 | 0.87 | Requires iterative labeling |

Experimental Protocols for Validation

Protocol: Benchmarking Aggregation Algorithms

- Dataset Curation: Obtain a dataset with multiple annotations per item (e.g., CheXpert chest X-rays with radiologist labels, or CASP protein structure predictions).

- Ground Truth Establishment: For a subset, establish high-confidence ground truth via expert adjudication or confirmed experimental validation.

- Algorithm Implementation: Implement target aggregation methods (Majority Vote, Dawid-Skene, GLAD, MACE, etc.).

- Training/Estimation: For parametric models, partition data: 70% for estimating annotator parameters, 30% for evaluation.

- Evaluation: Compare aggregated labels against the high-confidence ground truth using accuracy, F1-score, and Cohen's kappa. For continuous predictions, use RMSE and the correlation coefficient.

- Statistical Testing: Use McNemar's test on per-item correctness (two methods scored on the same items) or paired t-tests on per-fold scores to determine whether performance differences are significant (p < 0.05).
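The evaluation and testing steps can be sketched in plain Python. The helpers below (hypothetical names) compute accuracy and Cohen's kappa against ground truth and an exact two-sided McNemar test on the items where two methods disagree in correctness; the ten-item label vectors are invented for illustration, not results.

```python
from math import comb

def accuracy(pred, truth):
    """Fraction of items where the aggregated label matches ground truth."""
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)

def cohens_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    a, b = list(a), list(b)
    n = len(a)
    p_obs = sum(x == y for x, y in zip(a, b)) / n
    p_exp = sum((a.count(k) / n) * (b.count(k) / n) for k in set(a) | set(b))
    if p_exp == 1.0:
        return 1.0  # both sequences use one identical label; kappa is undefined
    return (p_obs - p_exp) / (1 - p_exp)

def mcnemar_exact(pred_a, pred_b, truth):
    """Exact two-sided McNemar test on per-item correctness of two methods."""
    b = sum(x == t and y != t for x, y, t in zip(pred_a, pred_b, truth))  # only A correct
    c = sum(x != t and y == t for x, y, t in zip(pred_a, pred_b, truth))  # only B correct
    n = b + c
    if n == 0:
        return 1.0  # no discordant items: no evidence of a difference
    # Under H0 the discordant items split Binomial(n, 0.5); double the smaller tail.
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Hypothetical labels for ten items.
truth = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
mv    = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]
ds    = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]

print(accuracy(mv, truth), accuracy(ds, truth))  # 0.7 0.9
print(cohens_kappa(ds, truth))
print(mcnemar_exact(mv, ds, truth))
```

With only two discordant items the exact test cannot reach significance (p = 0.5), which is why benchmark sets need enough items for differences between methods to show up.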

Protocol: Evaluating Consensus Impact on Downstream Models

- Generate Multiple Label Sets: Create several training datasets using different aggregation methods (e.g., MV, DS, GLAD) from the same raw annotations.

- Train Predictive Models: Train identical model architectures (e.g., a specific CNN or GNN) on each label set.

- Evaluate on Gold-Standard Test Set: Assess all models on a common, expertly-validated test set.

- Analyze Variance: Repeat training for each label set (e.g., across several random seeds) and perform a one-way ANOVA to determine whether the source of training labels introduces significant variance in final model performance.
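A one-way ANOVA over replicate runs can be computed directly. This sketch assumes each label source was used to train the same architecture several times (e.g., different random seeds) and returns the F statistic with its degrees of freedom; the function name and AUROC values are hypothetical. The p-value comes from the F distribution, for example via `scipy.stats.f.sf(F, df_between, df_within)`.

```python
def one_way_anova(groups):
    """One-way ANOVA F statistic for k groups of replicate scores.

    groups: list of lists, one list of scores per label source.
    Returns (F, df_between, df_within).
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    means = [sum(g) / len(g) for g in groups]
    grand_mean = sum(sum(g) for g in groups) / n_total
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Within-group sum of squares: spread of replicates around their own group mean.
    ss_within = sum(sum((x - m) ** 2 for x in g) for g, m in zip(groups, means))
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical test-set AUROC from three seeds per label source.
auroc = {
    "MV":   [0.86, 0.87, 0.88],
    "DS":   [0.90, 0.91, 0.92],
    "GLAD": [0.89, 0.90, 0.91],
}
f_stat, df_b, df_w = one_way_anova(list(auroc.values()))
print(f"F = {f_stat:.2f} on ({df_b}, {df_w}) df")
```

For these made-up scores F ≈ 13.0 on (2, 6) degrees of freedom; a significant result would then justify pairwise follow-up comparisons between label sources.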

Diagrams and Workflows

Workflow for Consensus Aggregation

Dawid-Skene Probabilistic Graphical Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages for Aggregation Research

| Item (Package/Platform) | Function | Key Features / Use Case |
|---|---|---|
| Crowd-Kit | Python library for crowdsourced data aggregation. | Implements Dawid-Skene, GLAD, MACE, BWA. Scalable via Spark. |
| Label Studio | Open-source data labeling platform. | Manages annotation workflows, integrates aggregation backends. |
| Amazon SageMaker Ground Truth | Commercial data labeling service. | Built-in majority vote & EM-based consensus. Active learning. |
| PyStan / PyMC3 | Probabilistic programming. | For implementing custom Bayesian aggregation models (HMM, CRF). |
| scikit-learn | Machine learning library. | For implementing simple baselines (majority vote, weighted averages). |
| Snorkel | Weak supervision framework. | Uses labeling functions (from multiple sources) to train generative model. |
| Doccano | Open-source text annotation tool. | Supports consensus metrics for NLP tasks (NER, sentiment). |
| CVAT | Computer Vision Annotation Tool. | Tracks annotator agreement for image/video tasks. |

Within modern translational research, the integration of multi-omics data (genomics, transcriptomics, proteomics) with deep clinical phenotyping represents a monumental challenge and opportunity. The inherent noise, batch effects, and biological heterogeneity in these datasets necessitate robust consensus models. These models, framed within data validation research, are not simple averages but sophisticated computational frameworks that reconcile disparate data sources to generate validated, high-confidence biological insights. This guide details the technical application of consensus-building for target identification in drug development.

Core Consensus Methodologies

2.1. Omics Data Integration & Meta-Analysis

The first pillar involves generating a consensus molecular signature from heterogeneous omics studies.

- Experimental Protocol: Cross-Platform Transcriptomic Meta-Analysis

- Data Curation: Systematically gather raw data (FASTQ or CEL files) from public repositories (e.g., GEO, ArrayExpress) for the disease of interest using standardized search queries.

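- Reprocessing Pipeline: Re-process all data through a uniform computational pipeline (e.g., STAR for alignment and DESeq2/edgeR for RNA-Seq count normalization; RMA for microarray normalization) to remove pipeline-specific artifacts. Uniform reprocessing does not remove study-level batch effects; these are handled by computing effect sizes within each study before combining them.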

- Effect Size Calculation: For each study, calculate differential expression using an appropriate model (e.g., linear models for microarrays, negative binomial for RNA-Seq). Convert results to a common effect size metric (e.g., standardized mean difference).

- Consensus Modeling: Apply fixed-effects or random-effects meta-analysis models (e.g., using the metafor package in R) to combine effect sizes across studies. Assess heterogeneity using Cochran's Q and the I² statistic.

- Signature Definition: Define the consensus signature as genes with a meta-analysis FDR-adjusted p-value < 0.05 and a consistent direction of change in >75% of constituent studies.
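The consensus-modeling step can be illustrated for a single gene with the DerSimonian-Laird random-effects estimator. This is a plain-Python sketch under stated assumptions, not the metafor implementation: it takes hypothetical per-study summary statistics, converts them to Hedges' g with its approximate sampling variance, and pools them while reporting Cochran's Q and I². metafor's `rma()` defaults to REML rather than DerSimonian-Laird, so estimates can differ slightly.

```python
import math

def hedges_g(mean1, sd1, n1, mean2, sd2, n2):
    """Bias-corrected standardized mean difference (Hedges' g) and its variance."""
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))
    d = (mean1 - mean2) / pooled_sd
    j = 1 - 3 / (4 * (n1 + n2) - 9)  # small-sample correction factor
    var_d = (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))
    return j * d, j ** 2 * var_d

def random_effects_dl(effects, variances):
    """DerSimonian-Laird random-effects pooling (needs >= 2 studies) with Q and I^2."""
    w = [1 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)  # between-study variance
    w_re = [1 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    p_value = math.erfc(abs(pooled / se) / math.sqrt(2))  # two-sided normal test
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, se, p_value, tau2, q, i2

# Hypothetical case/control summaries for one gene: (mean, sd, n) per group.
studies = [
    (8.1, 1.0, 10, 7.0, 1.1, 10),
    (7.9, 1.2, 12, 7.2, 1.0, 12),
    (8.4, 0.9, 8, 7.1, 1.3, 9),
]
effects, variances = zip(*(hedges_g(*s) for s in studies))
pooled, se, p_value, tau2, q, i2 = random_effects_dl(effects, variances)
print(f"g = {pooled:.2f} (SE {se:.2f}), p = {p_value:.3g}, I^2 = {100 * i2:.0f}%")
```

Run per gene, the resulting p-values would then be FDR-adjusted across all genes (e.g., Benjamini-Hochberg) before the signature criteria are applied.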

Table 1: Consensus Omics Meta-Analysis Output for Hypothetical Disease 'X'

| Metric | Value | Interpretation |
|---|---|---|
| Studies Integrated | 12 (7 RNA-Seq, 5 Microarray) | Broad evidence base |
| Initial Candidate Genes | 15,000 | Pre-meta-analysis pool |
| Consensus Signature Genes | 342 | High-confidence set |
| Meta-Analysis I² Statistic | 45% | Moderate heterogeneity |
| Top Pathway Enrichment (FDR<0.01) | JAK-STAT Signaling, Inflammasome | Mechanistic insight |

2.2. Clinical Phenotype Harmonization

Consensus clinical phenotyping transforms electronic health records (EHR) and trial data into computable phenotypes.

- Experimental Protocol: Phenotype Algorithm Development & Validation

- Phenotype Definition: Define the target phenotype (e.g., "Rapid Progressor of Heart Failure") using a consensus clinical panel (e.g., Delphi method).

- Feature Extraction: From EHRs, extract structured data (ICD codes, lab values, medications) and unstructured data (clinical notes via NLP).

- Algorithm Development: Train multiple machine learning models (e.g., logistic regression, random forest, NLP-based transformers) on a gold-standard, manually curated patient set.

- Consensus Labeling: Employ a consensus ensemble method (e.g., stacking or majority vote across the top-performing models) to assign the final phenotype label to each patient, which typically improves accuracy over any single model when the models make different errors.

- Validation: Assess algorithm performance on a held-out test set and report precision, recall, and F1-score.
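Majority-vote consensus labeling and the reported metrics can be sketched as follows. The three prediction vectors stand in for the logistic regression, random forest, and transformer outputs and are invented so that each model makes two errors; the helper names are hypothetical, and stacking (training a meta-model on the base predictions) is not shown.

```python
from collections import Counter

def majority_vote(*model_preds):
    """Per-patient majority vote across models.

    Ties are broken in favour of the label predicted by the earliest-listed model.
    """
    consensus = []
    for votes in zip(*model_preds):
        counts = Counter(votes)
        top = max(counts.values())
        consensus.append(next(v for v in votes if counts[v] == top))
    return consensus

def precision_recall_f1(pred, truth, positive=1):
    """Precision, recall and F1 for the positive phenotype label."""
    pairs = list(zip(pred, truth))
    tp = sum(p == positive and t == positive for p, t in pairs)
    fp = sum(p == positive and t != positive for p, t in pairs)
    fn = sum(p != positive and t == positive for p, t in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical held-out labels (1 = rapid progressor) and three models' predictions.
truth = [1, 1, 1, 0, 0, 0, 1, 0]
logreg = [1, 0, 1, 0, 1, 0, 1, 0]
forest = [1, 1, 0, 0, 0, 0, 1, 1]
transformer = [0, 1, 1, 0, 0, 1, 1, 0]

ensemble = majority_vote(logreg, forest, transformer)
for name, pred in [("logreg", logreg), ("forest", forest),
                   ("transformer", transformer), ("ensemble", ensemble)]:
    print(name, precision_recall_f1(pred, truth))
```

Here each model scores F1 = 0.75 on its own while the vote recovers every label, because the models err on different patients; when their errors are correlated, voting helps far less.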

Table 2: Performance of Consensus Phenotyping Algorithm

| Model | Precision | Recall | F1-Score |
|---|---|---|---|
| Logistic Regression | 0.82 | 0.75 | 0.78 |
| Random Forest | 0.88 | 0.81 | 0.84 |
| NLP Transformer | 0.85 | 0.88 | 0.86 |
| Consensus Ensemble | 0.91 | 0.87 | 0.89 |

2.3. Convergent Target Identification

The final step integrates consensus omics signatures with consensus clinical phenotypes to prioritize drug targets.

- Experimental Protocol: Multi-Layer Network Prioritization

- Network Construction: Build a protein-protein interaction (PPI) network centered on the consensus omics signature genes using a high-quality database (e.g., STRING, HuRI).

- Layer Integration: Overlay additional network "layers": (a) genetic evidence (GWAS hits from public databases), (b) druggability annotations (e.g., from DGIdb, ChEMBL), (c) phenotype association strength (correlation of gene expression with consensus phenotype severity).

- Consensus Scoring: Implement a multi-criteria decision analysis (MCDA) or a random walk with restart (RWR) algorithm that traverses this multi-layered network. In the MCDA formulation, the consensus score for each gene is a weighted function of its degree in the PPI layer, its genetic evidence score, its druggability tier, and its phenotype correlation.

- Target Prioritization: Rank genes by their consensus score. Validate top candidates in silico (e.g., gene essentiality in CRISPR screens) and in vitro.
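The random-walk-with-restart option can be sketched on a single network layer. This NumPy sketch assumes an undirected, non-negative adjacency matrix with no isolated nodes and a seed vector derived from the consensus signature; the restart probability of 0.3, the hypothetical gene names, and the five-gene path network are illustrative choices, and extending the walk to several layers is not shown.

```python
import numpy as np

def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Random walk with restart (RWR) on one undirected network layer.

    adj:     (n, n) symmetric, non-negative adjacency matrix with no isolated nodes.
    seeds:   (n,) non-negative seed scores, e.g. 1 for consensus-signature genes.
    restart: probability of jumping back to the seed distribution at each step.
    Returns steady-state visiting probabilities that sum to 1.
    """
    adj = np.asarray(adj, dtype=float)
    w = adj / adj.sum(axis=0, keepdims=True)  # column-normalised transition matrix
    p0 = np.asarray(seeds, dtype=float)
    p0 = p0 / p0.sum()
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * (w @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p

# Toy path network GENE_A - GENE_B - GENE_C - GENE_D - GENE_E (hypothetical genes).
genes = ["GENE_A", "GENE_B", "GENE_C", "GENE_D", "GENE_E"]
adj = np.zeros((5, 5))
for i in range(4):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
seeds = np.array([1.0, 0.0, 0.0, 0.0, 0.0])  # GENE_A is in the consensus signature
scores = random_walk_with_restart(adj, seeds)
ranking = [genes[i] for i in np.argsort(-scores)]
print(dict(zip(genes, np.round(scores, 3))))
```

In this toy network the seed gene keeps the highest score (about 0.42) and scores decay with distance from it, so genes near many signature genes rise in the ranking; the RWR score could then serve as one criterion alongside genetic evidence and druggability.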

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Consensus-Driven Research

| Item | Function in Consensus Workflow |
|---|---|
| Bulk RNA-Seq Kits (e.g., Illumina Stranded Total RNA) | Generate standardized, high-quality transcriptomic data from tissue samples for input into meta-analysis. |
| Multiplex Immunoassay Panels (e.g., Olink, MSD) | Quantify hundreds of proteins from minimal sample volume, providing proteomic data for cross-omics consensus. |
| Single-Cell RNA-Seq Solutions (e.g., 10x Genomics Chromium) | Profile cellular heterogeneity within tissues, allowing consensus cell-type-specific signatures to be derived. |
| Digital Pathology & Image Analysis Software (e.g., QuPath) | Quantify clinical phenotype features from histology slides (e.g., immune cell infiltration) for algorithm training. |
| CRISPR Knockout Libraries (e.g., Brunello) | Functionally validate prioritized target genes via pooled screens in disease-relevant cellular models. |
| Cloud Computing Platform (e.g., Google Cloud Life Sciences) | Provide scalable, reproducible environments for running consensus computational pipelines on large datasets. |

Visualizing Consensus Workflows & Pathways

Figure: Consensus Target ID Multi-Layer Network

Figure: Meta-Analysis & Phenotyping Workflow

Integrating Consensus Outputs into Drug Discovery Workflows and Regulatory Submissions

This technical guide, framed within the broader thesis on understanding community consensus models for data validation research, details the integration of consensus methodologies into the drug discovery pipeline. As biological data grow in volume and complexity, single-method validation is no longer sufficient. Community consensus models, in which multiple independent analytical methods, algorithms, or laboratories converge on a unified result, provide a robust framework for data validation and strengthen decision-making from target identification through regulatory filing.

The Consensus Framework: Definitions and Applications

A consensus output is defined as the synthesized result from two or more independent, validated methods (e.g., computational predictions, in vitro assays, in vivo models) aimed at answering the same biological or pharmacological question. Its primary value lies in risk mitigation.

Table 1: Applications of Consensus Models Across the Drug Development Pipeline

| Pipeline Stage | Consensus Question | Common Methodologies for Consensus | Regulatory Impact |
|---|---|---|---|
| Target Identification | Is Target X genuinely associated with Disease Y? | Genome-wide association studies (GWAS) meta-analysis; multi-omic data integration; independent CRISPR knockout screens. | Strengthens rationale for Investigational New Drug (IND) application. |
| Lead Optimization | Does Compound A selectively engage the intended target with favorable PK/PD? | SPR/BLI binding assays; cellular thermal shift assay (CETSA); orthogonal functional assays (e.g., cAMP, calcium flux). | Reduces risk of preclinical attrition due to off-target effects. |
| Preclinical Toxicology | Is the observed hepatotoxicity compound-specific? | Histopathology from two independent labs; transcriptomic analysis from different platforms; high-content imaging. | Critical for defining the safe starting dose in first-in-human (FIH) trials. |
| Clinical Biomarker Analysis | Is Biomarker B a reliable indicator of target engagement or efficacy? | IHC from central lab vs. local labs; ELISA vs. MSD immunoassay; digital PCR vs. NGS. | Supports biomarker qualification submissions to regulators. |
| Clinical Endpoint Analysis | Is the treatment effect reproducible and statistically robust? | Independent statistical analysis of clinical data; adjudication committee review of events; central vs. local radiology review. | Cornerstone of New Drug Application/Biologics License Application (NDA/BLA) efficacy evidence. |

Experimental Protocols for Generating Consensus Data

Protocol: Orthogonal Target Engagement Validation

Objective: To generate consensus on a lead compound's binding to and functional modulation of a protein target.

- Method 1: Surface Plasmon Resonance (SPR)

- Reagents: Biotinylated target protein, streptavidin sensor chip, lead compound in DMSO, reference compound, HBS-EP+ buffer.

- Procedure: Immobilize the target protein. Inject serial dilutions of the compound. Record resonance units (RU) over time. Fit the association and dissociation rate constants (\( k_a \), \( k_d \)) and the equilibrium dissociation constant (\( K_D = k_d / k_a \)) using a 1:1 binding model.

- Method 2: Cellular Thermal Shift Assay (CETSA)

- Reagents: Relevant cell line, lead compound, DMSO vehicle, protease inhibitors, Western blot or MS detection reagents for target.