Data-Driven Volunteer Optimization: Balancing Accuracy & Cost in Biomedical Classification Tasks


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to determine the optimal number of volunteers (annotators, raters, or participants) for classification tasks in biomedical research. Covering foundational theory, practical methodologies, optimization strategies, and validation techniques, it addresses the critical trade-off between data reliability and resource constraints. Readers will gain actionable insights for study design, crowdsourcing initiatives, and clinical data annotation to enhance both scientific validity and operational efficiency.

The Science of Scale: Why Volunteer Count Matters in Biomedical Classification

Troubleshooting Guides & FAQs

Q1: In my volunteer annotation experiment, I'm observing high agreement on simple labels but poor agreement on complex ones. Is this expected, and how should I adjust my protocol? A: Yes, this is a classic manifestation of task difficulty impacting inter-annotator agreement (IAA). The expected IAA, often measured by Fleiss' Kappa (κ) or Krippendorff's Alpha, decreases as task subjectivity or complexity increases.

- Troubleshooting Steps:

- Quantify the Discrepancy: Calculate IAA separately for "simple" and "complex" task subsets. See Table 1.

- Root Cause Analysis:

- Ambiguous Guidelines: Review your annotation guide. For complex tasks, provide multiple, clear examples of edge cases.

- Insufficient Training: Implement a mandatory qualification test with a minimum performance threshold (e.g., >85% accuracy on a gold-standard set) before the main task.

- Protocol Adjustment: For complex tasks, increase the redundancy (number of volunteers per item). Use a consensus model (e.g., ≥2 volunteers must agree) or switch to a probabilistic aggregation method (e.g., Dawid-Skene) instead of simple majority vote.
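
The "Quantify the Discrepancy" step can be sketched in a few lines. The function below is a minimal, standard-library implementation of Fleiss' Kappa, assuming every item received the same number of ratings; the function name and the toy "simple" and "complex" subsets are illustrative, not taken from any real study.

```python
from collections import Counter

def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of items, each a list of labels.

    Assumes every item was rated by the same number of raters (>= 2).
    """
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("need the same number (>= 2) of ratings per item")
    category_totals = Counter()
    per_item_agreement = []
    for item in ratings:
        counts = Counter(item)
        category_totals.update(counts)
        # Share of ordered rater pairs that agree on this item.
        agreeing_pairs = sum(c * (c - 1) for c in counts.values())
        per_item_agreement.append(agreeing_pairs / (n_raters * (n_raters - 1)))
    p_observed = sum(per_item_agreement) / len(ratings)
    total = len(ratings) * n_raters
    p_expected = sum((c / total) ** 2 for c in category_totals.values())
    if p_expected == 1.0:
        return 1.0  # only one category was ever used (convention chosen here)
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical subsets: three volunteers per item.
simple_items = [["A", "A", "A"], ["B", "B", "B"], ["A", "A", "A"], ["B", "B", "B"]]
complex_items = [["A", "A", "B"], ["A", "B", "B"], ["A", "B", "A"], ["B", "B", "A"]]
print("simple :", fleiss_kappa(simple_items))
print("complex:", fleiss_kappa(complex_items))
```

Running the function separately on each subset makes the gap between simple and complex items explicit before any protocol change is made.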

Q2: My budget allows for either many annotations from a low-cost platform or fewer from a high-cost expert platform. How do I choose? A: This is the core cost-reliability trade-off. The optimal choice depends on your target reliability and the task's inherent difficulty.

- Troubleshooting Steps:

- Pilot Experiment: Run a controlled pilot comparing the two sources. Annotate the same 100 items with both groups.

- Measure & Compare: Calculate accuracy against a ground truth and IAA for each group. See Table 2.

- Cost-Benefit Model: Use the pilot data to model how many low-cost annotations are needed to match the accuracy of one expert annotation. The optimal point minimizes total cost while meeting a pre-defined accuracy target.

Q3: After aggregating volunteer labels, how can I diagnose if the final dataset is reliable enough for training my machine learning model? A: Final dataset reliability should be quantified, not assumed.

- Troubleshooting Steps:

- Compute Label Confidence: If using probabilistic aggregation, each item receives a confidence score (e.g., 0.7 probability of being class 'A'). Flag items with confidence below a threshold (e.g., <0.6) for review.

- Estimate Uncertainty: Use the spread of volunteer responses per item as a measure of uncertainty (for categorical labels, the entropy of the vote distribution rather than a numeric variance). High spread indicates problematic items.

- Gold Standard Audit: Randomly select 3-5% of your final labeled dataset. Have an expert re-annotate these items. Calculate the agreement between the expert and your aggregated labels. Agreement below 90% suggests systemic issues.

Q4: The signaling pathway I need volunteers to annotate is highly detailed. How can I structure the task to prevent overwhelming them? A: Use a hierarchical decomposition strategy to manage cognitive load.

- Detailed Protocol: Hierarchical Pathway Annotation

- Phase 1 - Entity Identification: Present the pathway diagram or text description. Ask volunteers to highlight/categorize core components (e.g., "Circle all Kinases," "Box all Transcription Factors").

- Phase 2 - Relationship Labeling: For a given pair of identified entities (e.g., Protein A & Protein B), present a multiple-choice question: "What is the most likely relationship? a) Phosphorylates, b) Binds to, c) Inhibits, d) Up-regulates expression."

- Phase 3 - Directionality & Confidence: For each relationship identified, ask: "Is this interaction direct or indirect?" and "What is your confidence? (Low/Medium/High)."

- Aggregation: Aggregate results phase-by-phase, using high-confidence Phase 1 outputs to constrain the options in Phase 2.

Data Tables

Table 1: Inter-Annotator Agreement (IAA) vs. Task Complexity

| Task Complexity | Fleiss' Kappa (κ) Range | Typical Cause | Recommended Redundancy (Volunteers/Item) |

|---|---|---|---|

| Simple (Object ID) | 0.80 - 1.00 (Almost perfect) | Clear criteria, low ambiguity | 2-3 |

| Moderate (Sentiment) | 0.40 - 0.80 (Moderate to substantial) | Subjective interpretation | 5-7 |

| Complex (Pathway Logic) | 0.00 - 0.40 (Slight to fair) | High expertise required, ambiguous edges | 7+ or expert review |

Table 2: Pilot Experiment Results: Cost vs. Accuracy

| Annotation Source | Cost per Annotation | Accuracy vs. Ground Truth | Avg. IAA (κ) | Estimated Annotations Needed for 95% Reliable Label |

|---|---|---|---|---|

| Expert Platform A | $5.00 | 98% | 0.91 | 1 (direct expert label) |

| Crowd Platform B | $0.20 | 82% | 0.45 | 5 (via probabilistic aggregation) |

| Crowd Platform C | $0.10 | 75% | 0.30 | 9 (via probabilistic aggregation) |
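
The "annotations needed" column can be approximated with a simple binomial model. The sketch below assumes a binary task, independent annotators who all share the per-annotation accuracy from the pilot, and plain majority voting; real probabilistic aggregation (e.g., Dawid-Skene) may need fewer or more labels. The function names are illustrative.

```python
from math import comb

def majority_accuracy(p, k):
    """Probability that a majority of k independent annotators is correct.

    Each annotator is correct with probability p; k is assumed odd so that
    ties cannot occur on a binary item.
    """
    need = k // 2 + 1
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(need, k + 1))

def smallest_k(p, target, k_max=25):
    """Smallest odd k whose majority vote reaches the target accuracy."""
    for k in range(1, k_max + 1, 2):
        if majority_accuracy(p, k) >= target:
            return k
    return None  # target not reachable within k_max annotators

# Accuracy and cost figures from the pilot table above.
for name, cost, accuracy in [("Crowd Platform B", 0.20, 0.82),
                             ("Crowd Platform C", 0.10, 0.75)]:
    k = smallest_k(accuracy, target=0.95)
    print(f"{name}: k={k}, cost per item=${k * cost:.2f}")
```

Under these assumptions the model reproduces the redundancy levels in the table, and multiplying k by the cost per annotation gives a per-item cost that can be compared directly with the expert price.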

Experimental Protocol: Determining Optimal Redundancy

Title: Protocol for Calculating the Optimal Number of Volunteers per Task.

Objective: To empirically determine the point of diminishing returns where adding more volunteers no longer significantly improves label reliability, enabling cost-effective experimental design.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Task Design & Gold Standard Creation: Define the classification task. An expert (or panel) creates a gold-standard set (G) of N items (N ≥ 100) with verified true labels.

- Volunteer Recruitment & Training: Recruit V volunteers (V ≥ 20). Provide standardized training and a qualification test using a subset of G. Only qualified volunteers proceed.

- Experimental Annotation: Each item in a master set (M, containing G plus filler items) is presented to k volunteers, where k is progressively increased (e.g., k = 1, 3, 5, 7, 9). Use a balanced design to avoid fatigue.

- Label Aggregation: For each k level, aggregate the k volunteer labels per item using a chosen model (e.g., Majority Vote, Dawid-Skene).

- Performance Calculation: For each k level, compare the aggregated labels for the gold-standard items (G) to their true labels. Calculate accuracy, precision, recall, and F1-score.

- Optimal Point Analysis: Plot performance metrics (Y-axis) against k (X-axis). The optimal k is at the elbow of the cost-benefit curve, where performance gains plateau. Formalize using a decision rule: minimize k such that F1-score ≥ target threshold (e.g., 0.95) and the confidence interval width is below a tolerance level (e.g., 0.05).
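
The decision rule in the final step can be written down directly. This is a minimal sketch: the `pilot` numbers are hypothetical placeholders, and the confidence intervals are assumed to have been computed beforehand (for example by bootstrapping over gold-standard items).

```python
def optimal_k(results, target=0.95, max_ci_width=0.05):
    """Return the smallest k meeting both criteria, or None.

    results maps k -> (f1, ci_low, ci_high) measured on the gold-standard set.
    """
    for k in sorted(results):
        f1, ci_low, ci_high = results[k]
        if f1 >= target and (ci_high - ci_low) <= max_ci_width:
            return k
    return None

# Hypothetical pilot results, not data from the article.
pilot = {
    1: (0.82, 0.74, 0.90),
    3: (0.91, 0.86, 0.96),
    5: (0.95, 0.92, 0.98),   # meets the F1 target, but the interval is too wide
    7: (0.96, 0.94, 0.98),
    9: (0.96, 0.945, 0.975),
}
print(optimal_k(pilot))
```

Returning None when no level qualifies is a deliberate choice here: it signals that the tested range of k was too small rather than silently picking the largest value.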

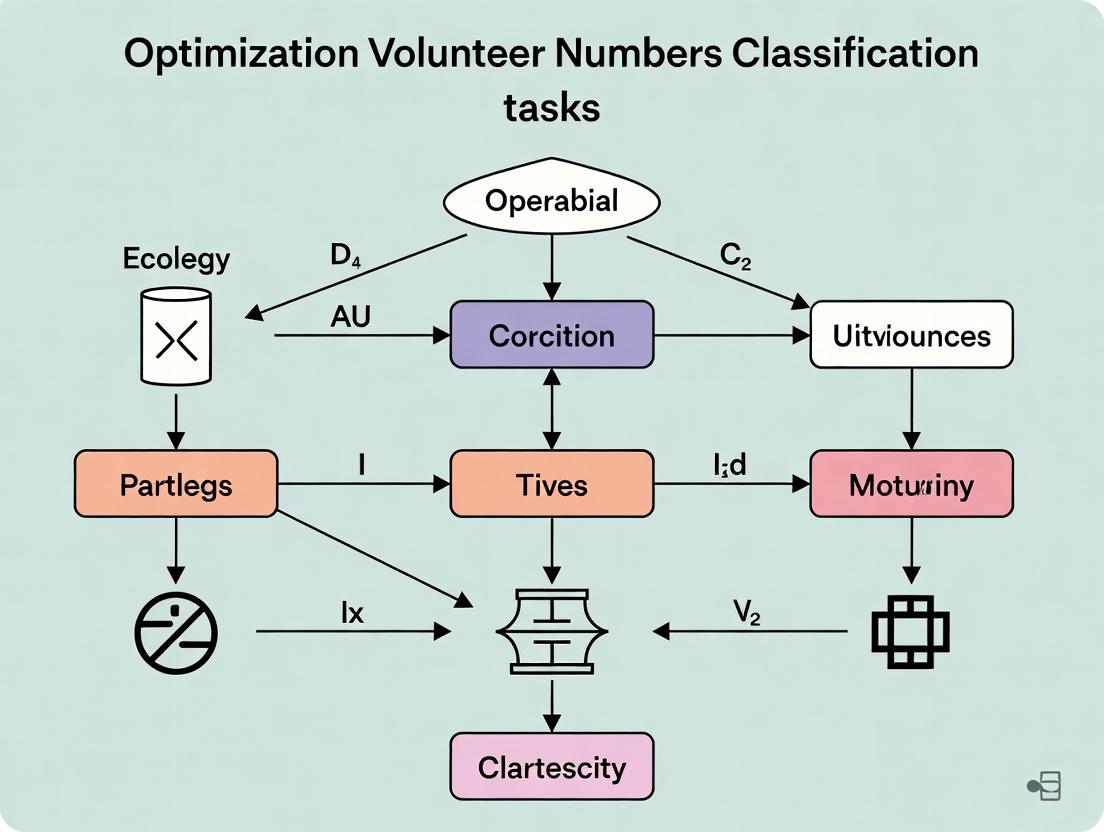

Diagrams

Optimal Volunteer Redundancy Workflow

Example Signaling Pathway for Annotation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Annotation Research | Example/Note |

|---|---|---|

| Annotation Platform Software | Provides infrastructure for task design, volunteer management, data collection, and basic aggregation. | Prolific, Amazon Mechanical Turk, Labelbox, Figure Eight. |

| Inter-Annotator Agreement (IAA) Metrics | Statistical tools to quantify the consistency of volunteer responses. | Fleiss' Kappa (κ): for >2 annotators, categorical labels. Krippendorff's Alpha: handles missing data, various scale types. |

| Probabilistic Aggregation Models | Algorithms to infer true labels from noisy, multiple volunteer responses, estimating per-volunteer reliability. | Dawid-Skene Model: Core model for categorical data. GLAD (Generative model of Labels, Abilities, and Difficulties): Estimates both item difficulty and annotator skill. |

| Gold Standard Dataset | A subset of items with expert-verified labels. Serves as the benchmark for calculating accuracy and training aggregation models. | Critical for calibration. Should be representative of task complexity and variability. |

| Qualification Test Module | A pre-task assessment to filter out volunteers who cannot follow guidelines or perform at a baseline level. | Built using the Gold Standard dataset. Typically 5-10 items with performance threshold. |

| Data Visualization Libraries | For creating the elbow plots and diagnostic charts to identify the optimal redundancy point. | Python: Matplotlib, Seaborn. R: ggplot2. |

Troubleshooting Guides & FAQs

Q1: Our IRR metrics (e.g., Cohen's Kappa) are consistently low, despite clear guidelines. What are the primary culprits and how can we address them?

A: Low IRR often stems from three interacting factors: ambiguous task definitions, variable annotator expertise, or excessive task complexity. First, conduct a pre-experiment calibration session with a small subset of your volunteers. Analyze disagreements to refine guidelines. Second, implement a qualification test before the main task to filter or stratify volunteers by expertise. Third, consider decomposing a complex task into simpler, sequential judgments to reduce cognitive load.

Q2: How do we determine if low agreement is due to task difficulty versus poor annotator quality?

A: Implement a controlled experiment using a "gold-standard" subset. Embed a small percentage of pre-annotated, consensus-driven items into your task. Use the following table to diagnose the issue:

| Diagnostic Metric | Suggests Task Difficulty Issue | Suggests Annotator Quality Issue |

|---|---|---|

| Agreement on Gold-Standard Items | High (>0.9 IRR) | Low (<0.7 IRR) |

| Intra-annotator Consistency (test-retest) | Low mainly on the difficult item types | Low across all item types |

| Disagreement Pattern | Systematic, clustered on specific item types | Random, scattered across all items |

| Expert vs. Novice Performance Gap | Moderate | Very Large |

Q3: For our drug adverse event report classification, how many volunteers do we need per task to achieve reliable consensus?

A: The required number is not fixed; it's a function of your target reliability and observed agreement. Use the following methodology, based on the Spearman-Brown prophecy formula from classical test theory:

- Run a pilot with 5-7 volunteers per item.

- Calculate Fleiss' Kappa or Krippendorff's Alpha for the pilot data.

- Use the Spearman-Brown prophecy formula to estimate how increasing annotators (n) changes reliability (R): R_n = (n * R_1) / [1 + (n - 1) * R_1], where R_1 is the single-annotator reliability, estimated here by the pilot agreement statistic.

- The "optimized" number is the smallest n that pushes R_n above your threshold (e.g., >0.8 for critical tasks).

Pilot Data & Projection Table:

| Pilot Volunteers per Item | Observed Fleiss' Kappa (κ) | Projected κ with 3 Volunteers | Projected κ with 5 Volunteers | Projected κ with 7 Volunteers |

|---|---|---|---|---|

| 5 | 0.45 | 0.71 | 0.80 | 0.85 |

| 5 | 0.60 | 0.82 | 0.88 | 0.91 |

Protocol: Calculating Required Volunteers

- Define Target Reliability (TR): Set a minimum acceptable IRR (e.g., κ = 0.80).

- Conduct Pilot: Have m volunteers (e.g., 5) annotate a representative sample (e.g., 100 items).

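- Compute Baseline IRR: Calculate the observed IRR for the m volunteers. An average-measures statistic (e.g., ICC(k)) gives R_m; a chance-corrected agreement statistic such as Fleiss' Kappa already describes a single annotator and can be used directly as R_1.

- Estimate Single-Annotator Reliability: If you have R_m, use the Spearman-Brown formula in reverse: R_1 = R_m / [m - (m - 1) * R_m].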

- Solve for Required N: Plug R_1 and your TR into the prophecy formula and solve for n: n = [TR * (1 - R_1)] / [R_1 * (1 - TR)].
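
The three formulas in this protocol are short enough to check numerically. The sketch below is a direct transcription under the usual Spearman-Brown assumption that volunteers are interchangeable (parallel raters); the function names are illustrative.

```python
from math import ceil

def spearman_brown(r1, n):
    """Projected reliability of n pooled annotators from single-annotator r1."""
    return n * r1 / (1 + (n - 1) * r1)

def single_rater_reliability(r_m, m):
    """Reverse Spearman-Brown: single-annotator reliability from pooled r_m."""
    return r_m / (m - (m - 1) * r_m)

def required_raters(r1, target):
    """Smallest n whose projected reliability reaches the target."""
    n = target * (1 - r1) / (r1 * (1 - target))
    # The small epsilon guards against floating-point noise pushing an exact
    # integer (e.g. 4.000000000000001) up to the next whole number.
    return ceil(n - 1e-9)

for r1 in (0.45, 0.60):
    projections = [round(spearman_brown(r1, n), 2) for n in (3, 5, 7)]
    print(r1, projections, required_raters(r1, target=0.80))
```

With a target of 0.80, a single-annotator reliability of 0.60 calls for 3 volunteers per item, while 0.45 calls for 5.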

Q4: What is the most robust IRR statistic for multi-class, multi-annotator tasks in biomedical coding?

A: For categorical data with multiple annotators, Krippendorff's Alpha is generally recommended. It handles missing data, multiple annotators, and is applicable to various measurement levels (nominal, ordinal, interval). Cohen's Kappa is for two annotators; Fleiss' Kappa extends to multiple but assumes no missing data. For ranking or continuous data, use Intraclass Correlation Coefficient (ICC).

| Statistic | Scale | Annotators | Handles Missing Data? | Recommended Use Case |

|---|---|---|---|---|

| Krippendorff's Alpha | Nominal, Ordinal, Interval, Ratio | 2+ | Yes | General purpose, complex coding tasks. |

| Fleiss' Kappa | Nominal | 2+ | No | Simple presence/absence coding by fixed annotator pool. |

| Cohen's Kappa | Nominal | 2 | No | Expert vs. expert adjudication. |

| Intraclass Correlation (ICC) | Interval, Ratio | 2+ | Yes | Measuring agreement on continuous scores (e.g., toxicity severity). |
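
To make the missing-data point concrete, here is a minimal standard-library sketch of Krippendorff's Alpha for nominal labels, built from the coincidence-matrix definition. It is meant as an illustration only (a maintained package is preferable in production); `None` marks a rating that a volunteer did not provide, and the toy data is hypothetical.

```python
from collections import Counter
from itertools import permutations

def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    units: list of per-item label lists; None marks a missing rating.
    """
    coincidences = Counter()
    for unit in units:
        values = [v for v in unit if v is not None]
        m = len(values)
        if m < 2:
            continue  # an item with fewer than two ratings cannot be paired
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1 / (m - 1)
    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    if n <= 1:
        raise ValueError("not enough pairable ratings")
    observed = sum(w for (a, b), w in coincidences.items() if a != b) / n
    expected = sum(totals[a] * totals[b]
                   for a in totals for b in totals if a != b) / (n * (n - 1))
    if expected == 0:
        return 1.0  # only one category present (convention chosen here)
    return 1 - observed / expected

# Three volunteers, one missing rating on the second item.
ratings = [["AE", "AE", "AE"], ["AE", "AE", None], ["none", "none", "none"]]
print(krippendorff_alpha_nominal(ratings))
```

Items with a missing rating still contribute through their remaining pairs, which is the property that distinguishes Alpha from Fleiss' Kappa in the table above.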

Q5: How should we combine annotations from experts and non-expert volunteers to optimize resource use?

A: Implement a weighted consensus model. Use an initial batch of dual-annotated items (by experts and volunteers) to calculate annotator competency weights. Weights can be derived from agreement with expert benchmarks. The final label for an item is determined by a weighted vote.

Protocol: Weighted Consensus Model

- Expert Benchmark Creation: Experts annotate a "training" set of 100-200 items.

- Volunteer Annotation & Weighting: Volunteers annotate the same set. Calculate each volunteer's weight (w_i) as their observed agreement with the expert benchmark (e.g., Cohen's Kappa or F1-score).

- Main Task Annotation: Volunteers annotate new items. For each new item, the aggregated score for a label L is: Score(L) = Σ (w_i * I_i), where I_i = 1 if volunteer i chose L, else 0.

- Threshold Setting: The final label is assigned if Score(L) exceeds a predefined threshold (optimized on the training set).
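
A weighted vote of this kind takes only a few lines. In the sketch below the weights are assumed to have been computed already from the expert benchmark, and the score is normalized by the total weight so that the threshold lies between 0 and 1; that normalization is an assumption added here, not part of the protocol. Volunteer IDs and weights are hypothetical.

```python
from collections import defaultdict

def weighted_label(votes, weights, threshold=0.5):
    """Weighted consensus for one item.

    votes:   {volunteer_id: label}
    weights: {volunteer_id: w_i}, e.g. agreement with the expert benchmark
    Returns (label, share); label is None if the winning share is below threshold.
    """
    scores = defaultdict(float)
    for volunteer, label in votes.items():
        scores[label] += weights.get(volunteer, 0.0)  # Score(L) = sum of w_i * I_i
    total = sum(scores.values())
    if total == 0:
        return None, 0.0
    best = max(scores, key=scores.get)
    share = scores[best] / total
    return (best if share >= threshold else None), share

weights = {"v1": 0.9, "v2": 0.4, "v3": 0.3}             # hypothetical weights
votes = {"v1": "AE", "v2": "non-AE", "v3": "non-AE"}
print(weighted_label(votes, weights))
```

In this example one high-weight volunteer outvotes two low-weight ones, which a simple majority rule would not allow. Items that return None can be routed to expert review.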

Diagram 1: Weighted consensus workflow for expert-volunteer integration.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in IRR/Annotation Research |

|---|---|

| Annotation Platform (e.g., Labelbox, Prodigy, custom) | Provides the interface for volunteers/experts to perform classification tasks, manages data pipelines, and often includes basic IRR analytics. |

| IRR Statistics Library (e.g., irr package in R, statsmodels in Python) | Contains functions to compute key metrics like Cohen's Kappa, Fleiss' Kappa, Krippendorff's Alpha, and ICC. Essential for quantitative analysis. |

| Gold-Standard Reference Set | A subset of items with verified, consensus-driven labels. Used to calibrate guidelines, measure individual annotator accuracy, and diagnose system errors. |

| Qualification & Training Module | A pre-task test or tutorial to assess and standardize annotator expertise. Filters low-skill volunteers and reduces noise. |

| Consensus Algorithm Scripts | Custom code (e.g., in Python) to implement weighted voting, Dawid-Skene models, or other aggregation methods beyond simple majority rule. |

| Data Visualization Dashboard | Tracks annotator performance, disagreement hotspots, and IRR trends in real-time, enabling rapid intervention during large-scale studies. |

Diagram 2: Core factors influencing inter-rater reliability.

Troubleshooting Guide & FAQs

FAQ 1: Why does my diagnostic image classifier's performance degrade when deployed on data from a new clinical site?

- Answer: This is commonly caused by dataset shift, where the training data distribution differs from the real-world deployment data. Causes include different imaging device manufacturers, scan protocols, or patient demographics not represented in the training set. To troubleshoot, calculate metrics like Accuracy and F1-score per site and compare. Implement a quality control (QC) module in your pipeline to flag images with unusual intensity distributions or artifacts before classification.

FAQ 2: How can I determine if a drop in model accuracy is due to labeling errors or model failure?

- Answer: Conduct a targeted audit. Randomly sample a subset (e.g., 100-200) of the new images where the model's confidence is high but the prediction is wrong. Have two independent expert labelers re-annotate these samples. A high disagreement rate between original and new labels suggests label noise is a primary issue. Use the following protocol:

Protocol 1: Label Consistency Audit

- Sample Selection: From the erroneous predictions, draw a stratified sample of N=150 images across all classes.

- Blinded Re-labeling: Provide the image set to two domain experts blinded to the original label and the model's prediction.

- Analysis: Calculate inter-rater agreement (Cohen's Kappa) and agreement with original labels.

- Action: If Kappa between experts is high (>0.8) but agreement with original labels is low (<0.7), initiate a full label review for the affected data source.
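
The analysis and action steps of this audit might look like the sketch below. Cohen's Kappa is implemented from its definition; "agreement with original labels" is taken here as the mean percent agreement of the two experts with the original labels, which is one reasonable reading of the protocol rather than the only one. The label values are hypothetical.

```python
from collections import Counter

def cohen_kappa(a, b):
    """Cohen's kappa for two raters labelling the same items."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    freq_a, freq_b = Counter(a), Counter(b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category
    return (observed - expected) / (1 - expected)

def audit_action(expert_1, expert_2, original, kappa_min=0.8, agreement_max=0.7):
    """Apply the audit rule: experts agree with each other but not with the originals."""
    kappa = cohen_kappa(expert_1, expert_2)
    agreement = sum(
        (e1 == o) + (e2 == o) for e1, e2, o in zip(expert_1, expert_2, original)
    ) / (2 * len(original))
    if kappa > kappa_min and agreement < agreement_max:
        return "full label review"
    return "no label review triggered"

expert_1 = ["tumor", "normal"] * 5        # hypothetical re-annotations
expert_2 = ["tumor", "normal"] * 5
original = ["normal"] * 10                # hypothetical original labels
print(audit_action(expert_1, expert_2, original))
```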

FAQ 3: Our adverse event (AE) detection algorithm is generating too many false positives in the reporting system. How can we refine it?

- Answer: High false positives often stem from an imbalanced training set or overly sensitive detection thresholds. First, categorize the false positives. Then, retrain the model with a penalized cost function (e.g., weighted cross-entropy) that increases the penalty for misclassifying the majority "non-AE" class. Implement a two-stage pipeline: Stage 1: High-sensitivity screening. Stage 2: A more specific rule-based or secondary model filter on the Stage 1 outputs.

Protocol 2: AE Detector Calibration & Threshold Optimization

- False Positive Analysis: Manually label 500+ algorithm-positive outputs as True/False AE over a defined period.

- Metric Calculation: Generate a Precision-Recall curve by varying the classifier's decision threshold.

- Threshold Selection: Based on operational needs (e.g., maximize Precision while keeping Recall > 0.85), select a new operating point.

- Validation: Apply the new threshold to a held-out temporal validation set to confirm performance.
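
Threshold selection from manually labeled outputs can be sketched without any ML library. The function below scans candidate thresholds and keeps the one with the highest precision among those whose recall stays above the required floor; the scores and labels are toy values, and the function name is illustrative.

```python
def pick_threshold(scores, labels, min_recall=0.85):
    """Return (threshold, precision, recall) maximizing precision subject to
    recall > min_recall, or None if no threshold qualifies.

    scores: classifier scores; labels: 1 for a true AE, 0 otherwise.
    """
    positives = sum(labels)
    best = None
    for t in sorted(set(scores)):
        predicted = [s >= t for s in scores]
        tp = sum(1 for p, y in zip(predicted, labels) if p and y == 1)
        fp = sum(1 for p, y in zip(predicted, labels) if p and y == 0)
        if tp == 0:
            continue  # precision undefined or zero recall; skip
        precision, recall = tp / (tp + fp), tp / positives
        if recall > min_recall and (best is None or precision > best[1]):
            best = (t, precision, recall)
    return best

# Toy manually reviewed outputs (hypothetical).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
print(pick_threshold(scores, labels))
```

The chosen threshold should then be frozen and checked on the held-out temporal set, as the validation step describes, rather than re-tuned on it.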

FAQ 4: What is the minimum number of volunteer labelers needed per image to ensure label reliability for a given task complexity?

- Answer: The required number scales with task subjectivity. Use an iterative "label aggregation with uncertainty measurement" approach. Start with 3 labelers per image. Calculate metrics per task:

Table 1: Labeler Agreement Metrics vs. Recommended Action

| Metric | Formula | Target Range | Action if Below Target |

|---|---|---|---|

| Percent Agreement | (Agreed Items / Total Items) | >85% for clear tasks | Review task guidelines |

| Fleiss' Kappa (κ) | Measures multi-rater agreement | κ > 0.6 (Substantial) | Add more labelers per item |

| Uncertainty Score | 1 - (Consensus Votes / Total Votes) | <0.2 | Data may need expert adjudication |

If agreement metrics are below target, incrementally add labelers until metrics stabilize or reach the target. This empirical determination is core to optimizing volunteer count.
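
The uncertainty score from the table, and the resulting list of images that need another labeler, can be computed as follows. This is a minimal sketch with hypothetical image IDs; "consensus votes" is interpreted as the number of votes for the most common label.

```python
from collections import Counter

def uncertainty(votes):
    """1 - (votes for the most common label / total votes)."""
    top_count = Counter(votes).most_common(1)[0][1]
    # Written as a single fraction so that e.g. 1 dissent out of 5 is exactly 0.2.
    return (len(votes) - top_count) / len(votes)

def items_needing_more_labelers(votes_by_item, max_uncertainty=0.2):
    """Items whose uncertainty score is not below the target."""
    return [item for item, votes in votes_by_item.items()
            if uncertainty(votes) >= max_uncertainty]

votes_by_item = {
    "img_001": ["lesion", "lesion", "lesion"],
    "img_002": ["lesion", "lesion", "normal"],
}
print(items_needing_more_labelers(votes_by_item))
```

With only three labelers, any single dissent already exceeds the 0.2 target, so the first round mostly separates unanimous items from everything else; the score becomes more informative as labelers are added.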

Research Reagent & Solutions Toolkit

Table 2: Essential Materials for Classification Task Research

| Item | Function |

|---|---|

| DICOM Standardized Image Phantom | Provides a controlled, consistent input to test and calibrate image labeling algorithms across different sites and hardware. |

| Annotation Platform (e.g., Labelbox, CVAT) | Centralized tool for volunteer labeler management, task distribution, and label collection with built-in quality checks. |

| Inter-Rater Reliability (IRR) Statistics Package | Software/library (e.g., irr in R, statsmodels in Python) to calculate Fleiss' Kappa, Cohen's Kappa, and confidence intervals. |

| Synthetic Data Generator | Tool (e.g., TorchIO, Synth) to create controlled variations of training data (contrast, noise, artifacts) to stress-test model robustness. |

| Adverse Event MedDRA Dictionary | Standardized medical terminology for coding AEs, essential for normalizing outputs from detection algorithms for reporting. |

| Model Monitoring Dashboard | Real-time visualization of key performance indicators (data drift, accuracy decay) post-deployment. |

Visualizations

Title: Diagnostic Image Classification & QC Workflow

Title: Two-Stage Adverse Event Detection Pipeline

Title: Iterative Protocol to Optimize Volunteer Labeler Count

Welcome to the Technical Support Center for volunteer-powered classification task research. This guide provides troubleshooting steps and FAQs on optimizing the number of volunteers per classification task.

Troubleshooting Guides & FAQs

Q1: Our pilot study with 15 volunteers yielded an effect size of d=0.6. However, our main experiment with 50 volunteers failed to reach statistical significance (p > 0.05). What went wrong? A: This is a classic issue of underpowered pilot studies leading to inflated effect size estimates. A small-N pilot is highly susceptible to random sampling error, often overestimating the true effect. With d=0.6, achieving 80% power (alpha=0.05) typically requires ~45 volunteers per group in a between-subjects design. Your main experiment with 50 total volunteers was likely still underpowered, especially if split into groups. Solution: Use the effect size from the pilot cautiously. Conduct an a priori power analysis using a conservative, literature-based effect size estimate to determine the required N before the main experiment.

Q2: We are collecting continuous performance data from volunteers. As we increased from 30 to 100 volunteers, our data quality metrics (e.g., intra-class correlation) worsened. Why? A: Increased sample size often reveals true heterogeneity in the population that smaller samples mask. This isn't necessarily worsening data quality, but rather improving representativeness. Troubleshooting Steps:

- Check for outliers: Use standardized methods (e.g., Mahalanobis distance) to identify if specific volunteers are multivariate outliers.

- Assess protocol adherence: Review task completion logs and timing data for deviations. Larger samples may include less-motivated volunteers.

- Consider subgroups: The worsening ICC may indicate latent subgroups. Conduct cluster analysis to see if data naturally partitions, which is a valuable finding.

- Do not arbitrarily remove data to improve metrics. Investigate the cause systematically.

Q3: For our image classification task, how do we determine the optimal number of volunteers needed per image to achieve reliable consensus? A: This depends on task difficulty and desired confidence. Use a reliability analysis framework.

- Protocol: Implement a staged approach. Have each image classified by an initial set of volunteers (e.g., 5). Calculate inter-rater agreement (Fleiss' Kappa).

- If Kappa is high (>0.8), the number is sufficient.

- If Kappa falls short of that (e.g., moderate, 0.4-0.6), simulate the impact of adding more raters using a bootstrap resampling method. Continue adding volunteers until the classification accuracy score (compared to a gold-standard subset) plateaus.

- See Table 2 for simulation results.
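
One way to run the bootstrap step is sketched below. It assumes the pilot votes for each gold-standard image are a fair sample of how further volunteers would respond, resamples k votes per image with replacement, and scores the majority label against the gold label. All names and data are illustrative.

```python
import random
from collections import Counter

def bootstrap_accuracy(pilot_votes, gold, k, n_boot=200, seed=0):
    """Mean majority-vote accuracy when each item receives k resampled votes.

    pilot_votes: {item: [labels from the pilot]}
    gold:        {item: true_label}
    Ties are broken in favor of the label drawn first.
    """
    rng = random.Random(seed)
    accuracies = []
    for _ in range(n_boot):
        correct = 0
        for item, truth in gold.items():
            sample = rng.choices(pilot_votes[item], k=k)
            winner = Counter(sample).most_common(1)[0][0]
            correct += winner == truth
        accuracies.append(correct / len(gold))
    return sum(accuracies) / n_boot

# Hypothetical pilot: five votes per image.
gold = {"img_1": "cat", "img_2": "dog"}
pilot_votes = {"img_1": ["cat", "cat", "cat", "dog", "cat"],
               "img_2": ["dog", "cat", "dog", "dog", "cat"]}
for k in (3, 5, 7, 9):
    print(k, round(bootstrap_accuracy(pilot_votes, gold, k), 3))
```

Plotting the printed values against k shows where accuracy plateaus. Because the resampling reuses pilot votes, the projection is only as good as the pilot is representative.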

Q4: We observe high participant dropout rates (>30%) in longitudinal classification tasks, compromising our planned N. How can we mitigate this? A: Proactive measures are key.

- Protocol Adjustment: Over-recruit by the anticipated dropout rate (determined from prior studies). Use adaptive designs that allow for sample size re-estimation at an interim analysis point.

- Participant Engagement: Implement shorter, more frequent task sessions with gamification. Use reminder systems and provide incremental feedback or compensation.

- Analytical Strategy: Plan to use Mixed-Effects Models, which can provide valid inferences under "Missing at Random" assumptions, making better use of all available data from partial completers.

Table 1: Statistical Power (1-β) at α=0.05 for Different Effect Sizes (Cohen's d) and Volunteers per Group (Two equal groups)

| Volunteers per Group (n) | d = 0.2 (Small) | d = 0.5 (Medium) | d = 0.8 (Large) |

|---|---|---|---|

| 20 | 0.09 | 0.33 | 0.69 |

| 50 | 0.17 | 0.70 | 0.96 |

| 100 | 0.29 | 0.94 | >0.99 |

| 200 | 0.52 | >0.99 | >0.99 |

| 500 | 0.86 | >0.99 | >0.99 |

Note: Power calculated using two-tailed t-test. Values approximated from standard power tables.

Table 2: Impact of Volunteer Count (N) on Key Data Quality Metrics in a Simulated Classification Task

| Volunteers per Task (N) | Classification Accuracy (Mean ± SEM) | Inter-Rater Agreement (Fleiss' κ) | False Discovery Rate (FDR) |

|---|---|---|---|

| 3 | 0.72 ± 0.08 | 0.41 | 0.35 |

| 5 | 0.81 ± 0.05 | 0.58 | 0.22 |

| 7 | 0.85 ± 0.03 | 0.66 | 0.15 |

| 10 | 0.87 ± 0.02 | 0.72 | 0.11 |

| 15 | 0.88 ± 0.01 | 0.75 | 0.09 |

Note: Simulation based on a task with 70% baseline accuracy and moderate difficulty. SEM = Standard Error of the Mean.

Experimental Protocols

Protocol 1: A Priori Power Analysis for Determining Volunteer Numbers

- Define Primary Outcome: Identify the key classification accuracy metric or performance score.

- Choose Effect Size: Prefer a minimal clinically/practically important effect from literature. If unavailable, use a conservative estimate (e.g., Cohen's d = 0.5 for medium).

- Set Statistical Thresholds: Alpha (α) = 0.05; Desired Power (1-β) = 0.80 or 0.90.

- Select Test: Specify the planned statistical test (e.g., independent t-test, ANOVA, χ²).

- Use Software: Input parameters into power analysis software (e.g., G*Power, R's pwr package).

- Calculate N: The output is the required number of volunteers per group. Multiply by the number of groups and add a contingency (e.g., 10-15%) for attrition.
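
For a quick sanity check of the software output, the per-group sample size for a two-sided, two-sample comparison can be approximated with the normal distribution. This sketch uses only the Python standard library; because it ignores the t-distribution correction, it typically returns one or two volunteers fewer per group than G*Power or pwr, so treat it as a lower bound. The function names are illustrative.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(d, alpha=0.05, power=0.80):
    """Normal-approximation sample size per group for effect size d."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # two-sided critical value
    z_power = z.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)

def total_to_recruit(d, groups=2, attrition=0.15, **kwargs):
    """Total recruitment target including an attrition contingency."""
    return ceil(n_per_group(d, **kwargs) * groups * (1 + attrition))

for d in (0.2, 0.5, 0.6, 0.8):
    print(d, n_per_group(d), total_to_recruit(d))
```

For a medium effect (d = 0.5) the approximation gives 63 volunteers per group, or 145 in total for two groups once a 15% contingency is added.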

Protocol 2: Staged Recruitment & Interim Analysis for Longitudinal Studies

- Initial Cohort: Recruit 60% of your a priori sample size target.

- Interim Analysis: Upon completion, calculate the observed effect size and variance.

- Blinded Sample Size Re-estimation: Using the observed parameters, re-calculate the required N to maintain desired power. An independent statistician should perform this to protect trial blinding.

- Decision:

- If re-estimated N ≤ initial target, continue to final analysis.

- If re-estimated N > initial target, recruit the additional volunteers needed.

- Final Analysis: Analyze the complete dataset with a pre-specified statistical model.

Visualizations

Volunteer Count Impact on Research Outcomes

Workflow for Optimizing Volunteer Numbers

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Volunteer-Based Classification Research |

|---|---|

| Electronic Data Capture (EDC) System | Securely collects, manages, and validates task performance data directly from volunteers, ensuring audit trails and data integrity. |

| Randomization Module | Integrates with the EDC to automatically and blindly assign volunteers to experimental arms or task orders, minimizing allocation bias. |

| Cognitive Assessment Battery | A standardized set of validated digital tasks (e.g., for attention, memory) used to characterize the volunteer cohort or measure outcomes. |

| Participant Management Portal | A platform for scheduling, consent management, communication, and compensating volunteers, crucial for retention. |

| Statistical Power Software (e.g., G*Power) | Used to calculate required volunteer numbers (N) based on expected effect size, alpha, and power before study initiation. |

| Inter-Rater Reliability Packages (e.g., irr in R) | Software tools to calculate agreement metrics (Kappa, ICC) for studies where multiple volunteers or raters classify the same stimuli. |

| Data Quality Dashboard | A real-time monitoring tool that flags aberrant response patterns, high latency, or dropouts, allowing for proactive intervention. |

Technical Support Center: Troubleshooting Guides and FAQs for Optimizing Volunteer Counts in Classification Tasks

FAQ: Core Theory and Task Design

Q1: What is the primary mathematical foundation for determining the optimal number of volunteers per classification task?

A: The foundational model is often based on Dawid and Skene’s (1979) expectation-maximization algorithm, which estimates true labels and volunteer reliability simultaneously. Recent crowdsourcing research integrates concepts from Condorcet’s Jury Theorem, which posits that majority decisions become more accurate as group size increases, assuming each volunteer's competence is > 0.5 and volunteers vote independently. A key modern extension is the use of Bayesian inference to model priors for both task difficulty and volunteer expertise, allowing for dynamic optimization of N (number of volunteers).

Q2: When increasing the number of volunteers, my aggregated label accuracy plateaus or decreases. What went wrong? A: This indicates potential "madness of crowds," often due to violating foundational assumptions. Common causes and solutions are below.

Troubleshooting Table: Diminishing Returns with Increased Volunteers

| Symptom | Probable Cause | Diagnostic Check | Corrective Protocol |

|---|---|---|---|

| Accuracy plateaus | Low-expertise or adversarial volunteers diluting signal. | Calculate per-volunteer agreement with a gold-standard subset. Remove volunteers with agreement < 0.6. | Implement a pre-qualification test. Use expectation-maximization (EM) to weight volunteers by inferred expertise. |

| Accuracy decreases | Task instructions are ambiguous, leading to random responses. | Measure inter-annotator agreement (Fleiss’ Kappa) on a pilot batch. A Kappa < 0.2 indicates poor consistency. | Redesign task with clear, discrete criteria. Use a hierarchical classification system. Add "I'm unsure" option. |

| High variance in results | Inadequate number of tasks per volunteer to reliably estimate expertise. | Check the number of tasks completed per volunteer. If < 10, expertise estimates are noisy. | Increase the number of tasks per volunteer or use a more conservative prior in the Bayesian model. |

Experimental Protocol: Determining Optimal N

Title: Iterative Bayesian Optimization of Volunteer Count (N)

Objective: To empirically determine the minimum number of volunteers (N) required per task to achieve a target label confidence threshold.

Materials & Reagent Solutions:

- Platform: Zooniverse, Labelbox, or custom JavaScript app.

- Gold Standard Dataset: 50-100 pre-labeled tasks for calibration.

- Volunteer Pool: Pre-screened cohort (N > 100 recommended).

- Statistical Software: Python (with crowdkit or dawid-skene libraries), R.

Methodology:

- Initialization: Deploy a batch of M tasks (e.g., M=200). Each task is initially assigned to k volunteers (start with k=3).

- Aggregation & Inference: Use the Dawid-Skene EM algorithm to aggregate labels and estimate per-volunteer expertise (e_i).

- Confidence Scoring: For each task j, calculate the posterior probability of the aggregated label being correct, given volunteer expertise and responses.

- Decision & Iteration:

- If posterior confidence for a task >= target (e.g., 0.95), consider it complete.

- If confidence < target, task is reassigned to an additional d new volunteers (e.g., d=2).

- Loop: Repeat steps 2-4 until all tasks reach confidence threshold or a maximum N (e.g., 15) is reached.

- Analysis: Plot mean confidence and estimated accuracy (vs. gold standard) against mean N used per task. The optimal N is the point where the cost (time/$) of adding another volunteer outweighs the marginal gain in accuracy.
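
The confidence-scoring and decision steps can be illustrated with a deliberately simplified model. The sketch below assumes a binary task and treats each volunteer's expertise e_i as a single known accuracy (a "one-coin" simplification of Dawid-Skene); in the full protocol those accuracies would come from the EM step rather than being supplied by hand. Function names and volunteer IDs are hypothetical.

```python
def posterior_confidence(votes, accuracies, prior=0.5):
    """Posterior for a binary task given votes and per-volunteer accuracies.

    votes:      {volunteer: 0 or 1}
    accuracies: {volunteer: probability that the volunteer is correct}
    Returns (most probable label, its posterior probability).
    """
    p1, p0 = prior, 1 - prior
    for volunteer, vote in votes.items():
        acc = accuracies[volunteer]
        p1 *= acc if vote == 1 else 1 - acc   # likelihood if the true label is 1
        p0 *= acc if vote == 0 else 1 - acc   # likelihood if the true label is 0
    post1 = p1 / (p1 + p0)
    return (1, post1) if post1 >= 0.5 else (0, 1 - post1)

def needs_more_volunteers(votes, accuracies, target=0.95, n_max=15):
    """Decision step: reassign unless confident enough or at the cap."""
    _, confidence = posterior_confidence(votes, accuracies)
    return confidence < target and len(votes) < n_max

accuracies = {"a": 0.8, "b": 0.8, "c": 0.8}        # hypothetical expertise estimates
print(posterior_confidence({"a": 1, "b": 1, "c": 1}, accuracies))
print(needs_more_volunteers({"a": 1, "b": 1, "c": 0}, accuracies))
```

Three unanimous votes from volunteers with 0.8 accuracy already exceed a 0.95 target, while a 2-to-1 split yields a posterior of 0.8 and sends the task back for more votes.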

Title: Iterative Workflow for Dynamic Volunteer Allocation

The Scientist's Toolkit: Key Research Reagents

Table: Essential Solutions for Crowdsourcing Experiments

| Item | Function | Example/Note |

|---|---|---|

| Gold Standard (GS) Set | Provides ground truth for calibrating models and measuring accuracy. | 5-10% of total tasks, verified by domain experts. |

| Pre-Qualification Test | Filters out low-expertise or inattentive volunteers. | A short quiz of 5-7 GS tasks; pass score >80%. |

| Expectation-Maximization Algorithm | Core statistical method to infer true labels and latent volunteer expertise. | Implementation: crowdkit.aggregation.DawidSkene. |

| Inter-Annotator Agreement Metric | Quantifies task ambiguity and volunteer consensus. | Use Fleiss’ Kappa for multiple volunteers. Target >0.6. |

| Bayesian Confidence Score | Dynamic metric to decide if a task needs more volunteers. | Posterior probability of the aggregated label from a model such as Dawid-Skene. |

| Expertise-Weighted Aggregator | Combines volunteer labels, giving more weight to reliable individuals. | Alternative: crowdkit.aggregation.GLAD. |

Q3: How do I adapt these models for highly specialized scientific tasks (e.g., cell phenotype classification in drug screens)? A: Specialized tasks require a tiered crowdsourcing model. Use the following protocol to integrate domain experts and naive volunteers.

Experimental Protocol: Tiered Crowdsourcing for Specialist Tasks

- Task Decomposition: Break the complex classification into a decision tree of simpler, binary questions.

- Volunteer Stratification: Create two groups: Tier 1 (Naive): Large pool for simple, initial filtering tasks. Tier 2 (Expert): Small pool of professionals (e.g., PhD researchers) for final, complex judgments.

- Routing Logic: Tasks are first classified by N_naive (e.g., 5) Tier 1 volunteers. If consensus is high and the label is "normal," the task is retired. If consensus is low or the label is "anomalous," the task is escalated to M_expert (e.g., 2) Tier 2 volunteers.

- Validation: Compare final labels from this tiered system against a full expert review benchmark to measure efficiency gain vs. accuracy trade-off.

Title: Tiered Workflow for Complex Scientific Tasks
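The routing rule in the tiered protocol can be written as a small function. This is a sketch under assumptions: "high consensus" is taken to mean that at least 80% of Tier 1 volunteers chose the same label, and the function name `route_task` and the `min_consensus` parameter are hypothetical.

```python
from collections import Counter


def route_task(naive_labels, min_consensus=0.8, normal_label="normal"):
    """Decide whether a task is retired or escalated to Tier 2.

    Assumption: consensus is the share of Tier 1 votes for the top label.
    """
    label, count = Counter(naive_labels).most_common(1)[0]
    consensus = count / len(naive_labels)
    if consensus >= min_consensus and label == normal_label:
        return "retire", label
    # Low consensus, or a confident "anomalous" call, goes to experts.
    return "escalate", label


print(route_task(["normal"] * 5))
print(route_task(["normal", "normal", "normal", "anomalous", "anomalous"]))
print(route_task(["anomalous"] * 5))
```

The consensus cut-off is a tuning parameter; it should be calibrated against the expert benchmark described in the validation step.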

A Practical Guide to Calculating Your Optimal Volunteer Cohort

This technical support center provides troubleshooting guides and FAQs for researchers, scientists, and drug development professionals optimizing volunteer cohort size in classification task research, such as labeling medical images or scoring phenotypic responses.

FAQs & Troubleshooting Guides

Q1: Our inter-rater reliability (IRR) is lower than expected. What are the primary causes and solutions? A: Low IRR often stems from ambiguous task instructions or poorly defined classes.

- Troubleshooting Steps:

- Audit Instructions: Have a colleague not involved in the project attempt the task. Note where they hesitate or ask questions.

- Pilot & Refine: Run a micro-pilot with 3-5 volunteers. Calculate percent agreement or Fleiss' kappa. Revise instructions and examples based on discrepancies.

- Implement Quality Control: Introduce unambiguous "test questions" with known answers into the task stream to monitor ongoing performance.

Q2: How do we handle extreme class imbalance (e.g., rare event detection) in volunteer response data? A: Imbalance biases standard accuracy metrics and can skew volunteer agreement.

- Protocol Adjustment:

- Stratified Sampling: Present volunteers with a dataset enriched for the rare class during the labeling phase to ensure sufficient examples for learning. Maintain original distribution in the final evaluation set.

- Metric Shift: Use metrics like Matthews Correlation Coefficient (MCC) or F1-score instead of accuracy.

- Sample Size Implication: Required sample size will increase to reliably capture rare event characteristics.
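A short worked example shows why accuracy misleads under imbalance. The counts below are invented for illustration (1,000 items, 20 of them rare-class), and `binary_metrics` is a hypothetical helper name.

```python
import math


def binary_metrics(tp, tn, fp, fn):
    """Return (accuracy, F1, MCC) from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    f1_denom = 2 * tp + fp + fn
    f1 = 2 * tp / f1_denom if f1_denom else 0.0
    mcc_denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # MCC is undefined when a row or column is empty; report 0.0 here.
    mcc = (tp * tn - fp * fn) / mcc_denom if mcc_denom else 0.0
    return accuracy, f1, mcc


# Illustrative data: a volunteer who never reports the rare class.
always_negative = binary_metrics(tp=0, tn=980, fp=0, fn=20)
# Illustrative data: a volunteer who finds half of the rare cases.
partial = binary_metrics(tp=10, tn=970, fp=10, fn=10)

print("always negative:", always_negative)
print("partial detector:", partial)
```

Both volunteers score 98% accuracy, but only F1 and MCC separate the one who never detects the rare event from the one who detects half of them.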

Q3: What is the most robust method for estimating required volunteers per task from pilot data? A: Use a statistical power approach for agreement.

- Methodology:

- Conduct a pilot study with n_pilot samples and v_pilot volunteers.

- Calculate the observed agreement rate (p_obs) and the chance agreement (p_chance). Compute Cohen's or Fleiss' Kappa (κ).

- Define your target confidence interval width (e.g., ±0.1 for κ). Use the formula for the confidence interval of kappa to solve for the required number of samples or volunteers. Bootstrap resampling of pilot data is recommended for stability.
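The bootstrap step in Q3 can be sketched as follows. The sketch assumes pilot data arranged as an items-by-categories count matrix with the same number of raters on every item, and it uses a plain percentile interval; the function names and the small example matrix (10 items, 14 raters, 5 categories) are illustrative, not pilot results from this article.

```python
import random


def fleiss_kappa(counts):
    """Fleiss' kappa for counts[i][c] = raters putting item i in category c.

    Returns None when chance agreement is 1 (kappa is undefined).
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    total = n_items * n_raters
    n_cats = len(counts[0])
    p_cat = [sum(row[c] for row in counts) / total for c in range(n_cats)]
    p_exp = sum(p * p for p in p_cat)
    p_obs = sum(
        (sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items
    if p_exp >= 1.0:
        return None
    return (p_obs - p_exp) / (1 - p_exp)


def bootstrap_kappa_ci(counts, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI obtained by resampling items with replacement."""
    rng = random.Random(seed)
    stats = []
    for _ in range(n_boot):
        sample = [rng.choice(counts) for _ in counts]
        k = fleiss_kappa(sample)
        if k is not None:
            stats.append(k)
    stats.sort()
    lower = stats[int((alpha / 2) * len(stats))]
    upper = stats[int((1 - alpha / 2) * len(stats)) - 1]
    return lower, upper


# Illustrative pilot matrix, not real study data.
pilot = [
    [0, 0, 0, 0, 14], [0, 2, 6, 4, 2], [0, 0, 3, 5, 6], [0, 3, 9, 2, 0],
    [2, 2, 8, 1, 1], [7, 7, 0, 0, 0], [3, 2, 6, 3, 0], [2, 5, 3, 2, 2],
    [6, 5, 2, 1, 0], [0, 2, 2, 3, 7],
]
kappa_hat = fleiss_kappa(pilot)
ci_low, ci_high = bootstrap_kappa_ci(pilot)
print(f"kappa = {kappa_hat:.3f}, 95% CI width = {ci_high - ci_low:.3f}")
```

If the interval is wider than the target, the pilot needs more items; repeating the resampling with larger simulated item counts gives a rough estimate of how many.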

Q4: During a longitudinal classification study, volunteer performance appears to drift. How can this be detected and corrected? A: Drift can be due to fatigue or unintentional criterion shifting.

- Detection & Correction Protocol:

- Embedded Anchors: Systematically intersperse a fixed set of reference samples throughout the task sequence.

- Control Chart: Plot the consistency of ratings on these anchor samples over time using a statistical process control chart.

- Intervene: If a drift signal is detected, retrain the volunteer using the original guidelines and consider implementing mandatory breaks or task rotation.
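One way to build the control chart is a p-chart on anchor-sample accuracy. The sketch below assumes a known baseline accuracy from the volunteer's qualification phase, equal-sized windows of anchor samples, and the conventional three-sigma limits; the function names and the window counts are hypothetical.

```python
import math


def p_chart_limits(baseline_rate, n_per_window, z=3.0):
    """Lower and upper control limits for a proportion (p-chart)."""
    sigma = math.sqrt(baseline_rate * (1 - baseline_rate) / n_per_window)
    lower = max(0.0, baseline_rate - z * sigma)
    upper = min(1.0, baseline_rate + z * sigma)
    return lower, upper


def drift_windows(correct_counts, n_per_window, baseline_rate):
    """Indices of windows whose anchor accuracy falls below the lower limit."""
    lower, _ = p_chart_limits(baseline_rate, n_per_window)
    return [i for i, c in enumerate(correct_counts)
            if c / n_per_window < lower]


# Illustrative data: correct anchor ratings in six windows of 20 anchors.
counts = [18, 19, 17, 18, 13, 12]
flagged = drift_windows(counts, n_per_window=20, baseline_rate=0.9)
print("windows with a drift signal:", flagged)
```

Only downward excursions are flagged here, since the concern in this protocol is degrading performance.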

Table 1: Common Agreement Statistics & Use Cases

| Statistic | Formula (Conceptual) | Best For | Interpretation Threshold |

|---|---|---|---|

| Percent Agreement | (Agreed Items) / (Total Items) | Quick initial check, simple tasks | >80% often considered acceptable |

| Cohen's Kappa (κ) | (p_obs - p_exp) / (1 - p_exp) | 2 volunteers rating into 2+ categories | <0: Poor, 0.00-0.20: Slight, 0.21-0.40: Fair, 0.41-0.60: Moderate, 0.61-0.80: Substantial, 0.81-1.00: Almost Perfect |

| Fleiss' Kappa (κ) | Extension of Scott's Pi for >2 volunteers | Fixed number of volunteers >2 rating into 2+ categories | Same scale as Cohen's Kappa. |

| Intraclass Correlation (ICC) | Based on ANOVA variance components | Continuous or ordinal data; assesses consistency/absolute agreement | ICC<0.5 Poor, 0.5-0.75 Moderate, 0.75-0.9 Good, >0.9 Excellent |

Table 2: Sample Size Factors for Classification Tasks

| Factor | Effect on Required Sample/Volunteer Size | Adjustment Strategy |

|---|---|---|

| Higher Target Precision (Narrower CI for κ) | Increases | Define acceptable margin of error a priori. |

| Lower Expected Agreement (κ) | Increases | Use conservative κ estimate from literature or early pilot. |

| Increased Number of Categories | Increases | Consider collapsing rarely used categories if scientifically valid. |

| Task Complexity / Ambiguity | Increases | Invest in more comprehensive training and clearer instructions to reduce noise. |

Experimental Protocols

Protocol 1: Pilot Study for Initial Parameter Estimation

Objective: To obtain realistic estimates of inter-rater agreement and task completion time for power analysis.

- Design: Select a representative, random subset of 3-5% of the total data pool or a minimum of 50 samples.

- Volunteers: Recruit 5-8 representative volunteers from the target population.

- Execution: Volunteers complete the classification task using draft instructions and interface.

- Data Analysis: Calculate inter-rater reliability (Fleiss' Kappa recommended). Record median task completion time and subjective feedback.

- Output: Estimates for p_obs, κ, variance, and time are fed into the formal sample size calculation.

Protocol 2: Sample Size Calculation for Target Kappa

Objective: To calculate the number of volunteers or samples needed to estimate kappa with a specified confidence interval width.

- Input Parameters: Use pilot study results: Estimated Kappa (κ), number of categories (k), number of raters per item in pilot (v_pilot).

- Define Precision: Set desired confidence interval width (W). E.g., κ ± 0.15.

- Calculation Method: Apply the formula for the asymptotic variance of Fleiss' Kappa. An iterative or bootstrap approach is often required. Software (e.g., R irr package, PASS) should be used.

- Output: N_samples: The required number of samples to be classified by each volunteer to achieve the desired precision for the agreement estimate.

Visualizations

Diagram 1: Volunteer Classification Study Workflow

Diagram 2: Key Factors in Sample Size Estimation

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Item | Function in Classification Task Research |

|---|---|

| Qualtrics, REDCap, or Labvanced | Platforms for designing and deploying controlled, electronic classification tasks with integrated data logging. |

| irr Package (R) / pingouin (Python) | Statistical libraries dedicated to calculating inter-rater reliability metrics (Kappa, ICC) and their confidence intervals. |

| G*Power 3.1 or PASS Software | Specialized tools for performing statistical power analysis and sample size calculation for various designs, including correlation/agreement. |

| Reference Standard Dataset | A curated "gold standard" subset of data, expertly annotated, used for training volunteers and as embedded anchors for quality control. |

| Bootstrap Resampling Script | Custom code (R/Python) to simulate repeated sampling from pilot data, providing robust, distribution-free estimates for required sample sizes. |

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: My study uses an ordinal pain scale (0-10). For power analysis, should I treat it as a continuous or dichotomous variable (e.g., responder: ≥30% reduction)? A: Treating an ordinal scale as continuous can inflate Type I error if distributional assumptions are violated. Dichotomizing simplifies analysis but loses information and reduces statistical power. Recommended Protocol: For robust sample size calculation, use methods specific for ordinal data, such as the proportional odds model. Conduct a pilot study to estimate the distribution across categories. Use simulation-based power analysis if standard software lacks direct options.

Q2: During power analysis, what is a realistic effect size to assume for a novel drug vs. placebo in a Phase II clinical trial with a binary endpoint? A: Assumptions should be based on literature and clinical relevance. Unrealistically large effect sizes lead to underpowered studies. See the table below for common benchmarks.

Q3: My power analysis software requires the baseline event rate (control proportion). Where can I find reliable estimates? A: Use recent, high-quality systematic reviews and meta-analyses for the specific patient population and standard of care. Do not rely on single, small studies. If data is scarce, plan a small internal pilot study to estimate this parameter before finalizing the main trial design.

Q4: How do I account for anticipated participant dropout or non-adherence in my sample size calculation? A: Inflate your calculated sample size (N) to account for attrition. Use the formula: N_adj = N / (1 - dropout_rate). For example, with N=100 and a 15% anticipated dropout rate, recruit N_adj = 100 / 0.85 ≈ 117.6, rounded up to 118 volunteers.
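The dropout adjustment is a one-line calculation; a minimal helper (the name `inflate_for_dropout` is hypothetical) makes the rounding explicit, since the result should always be rounded up to a whole participant.

```python
import math


def inflate_for_dropout(n_required, dropout_rate):
    """Return the recruitment target: N / (1 - dropout_rate), rounded up."""
    if not 0 <= dropout_rate < 1:
        raise ValueError("dropout_rate must be in [0, 1)")
    return math.ceil(n_required / (1 - dropout_rate))


print(inflate_for_dropout(100, 0.15))
```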

Q5: What is the difference between superiority, non-inferiority, and equivalence trial designs in the context of power analysis? A: The hypothesis and margin (Δ) differ. See the table below for a comparison critical to defining parameters for power analysis.

Data Presentation Tables

Table 1: Common Effect Size Benchmarks for Dichotomous Outcomes in Clinical Research

| Study Type | Typical Control Group Event Rate | Realistic Absolute Risk Reduction (ARR) to Power For | Typical Odds Ratio (OR) / Risk Ratio (RR) |

|---|---|---|---|

| Phase II (Exploratory) | Varies by disease | 10-20% | 1.8 - 3.0 |

| Phase III (Pivotal - Superiority) | Well-established | 5-15% (clinically meaningful) | 1.5 - 2.2 |

| Medical Device / Procedure | Based on standard care | ≥10% | ≥1.8 |

| Behavioral Intervention | Often ~50% | 10-25% | 1.4 - 2.0 |

Table 2: Power Analysis Parameter Comparison by Trial Objective

| Parameter | Superiority Trial | Non-Inferiority Trial | Equivalence Trial |

|---|---|---|---|

| Primary Hypothesis | New treatment > Control | New treatment not worse than Control by margin Δ | New treatment = Control ± margin Δ |

| Key Statistical Input | Expected difference > 0 | Non-inferiority margin (Δ) | Equivalence margin (Δ) |

| Typical Alpha (α) | 0.025 (one-sided) or 0.05 (two-sided) | 0.025 (one-sided) | 0.05 (two-sided) |

| Power (1-β) | 80% or 90% | 80% or 90% | 80% or 90% |

Experimental Protocols

Protocol for Simulation-Based Power Analysis for an Ordinal Outcome

- Define the Research Question: State the primary comparison (e.g., Drug A vs. Placebo) and the ordinal outcome (e.g., 7-point Clinical Global Impression of Change scale).

- Specify the Alternative Hypothesis: Define the expected distribution of subjects across ordinal categories for each group, based on pilot data or literature. This is the effect size.

- Choose the Statistical Test: Specify the primary analysis method (e.g., proportional odds logistic regression, Wilcoxon-Mann-Whitney test).

- Program the Simulation: a. Write a script (in R, Python, etc.) to simulate 1,000s of trials. b. For each simulated trial, randomly generate ordinal data for the planned sample size, adhering to the distributions defined in Step 2. c. Apply the chosen statistical test to each simulated dataset and record whether the result is statistically significant (p < α).

- Calculate Empirical Power: The proportion of simulated trials yielding a significant result is the estimated statistical power.

- Iterate: Adjust the sample size or effect size assumptions and repeat simulations until the desired power (e.g., 80%) is achieved.
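The simulation steps above can be sketched with the standard library alone. The sketch assumes a two-arm trial analyzed with the Wilcoxon-Mann-Whitney test, implemented here with a tie-corrected normal approximation and no continuity correction; the category probabilities are invented for illustration and all function names are hypothetical.

```python
import math
import random


def mann_whitney_p(x, y):
    """Two-sided p-value via the tie-corrected normal approximation."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    rank_sum_x, tie_term, i = 0.0, 0.0, 0
    while i < n:
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        mid_rank = (i + 1 + j) / 2  # average of ranks i+1 .. j
        rank_sum_x += mid_rank * sum(1 for m in range(i, j)
                                     if pooled[m][1] == 0)
        t = j - i
        tie_term += t ** 3 - t
        i = j
    u = rank_sum_x - n1 * (n1 + 1) / 2
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        return 1.0
    z = (u - n1 * n2 / 2) / math.sqrt(var_u)
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_power(probs_control, probs_treatment, n_per_arm,
                   n_sims=1000, alpha=0.05, seed=0):
    """Share of simulated trials with p < alpha (empirical power)."""
    rng = random.Random(seed)
    cats = list(range(len(probs_control)))
    hits = 0
    for _ in range(n_sims):
        x = rng.choices(cats, weights=probs_control, k=n_per_arm)
        y = rng.choices(cats, weights=probs_treatment, k=n_per_arm)
        hits += mann_whitney_p(x, y) < alpha
    return hits / n_sims


# Illustrative category distributions for a 4-point ordinal scale.
control = [0.4, 0.3, 0.2, 0.1]
treatment = [0.1, 0.2, 0.3, 0.4]
power = simulate_power(control, treatment, n_per_arm=50)
type_one = simulate_power(control, control, n_per_arm=50, seed=1)
print(f"power = {power:.2f}, false-positive rate = {type_one:.2f}")
```

Running the same function with identical distributions in both arms is a useful sanity check: the rejection rate should sit near α.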

Protocol for Determining the Clinically Meaningful Difference for a Dichotomous Endpoint

- Literature Review: Conduct a systematic review to establish the historical performance (response rate) of the standard therapy/placebo.

- Expert Elicitation: Convene a panel of key opinion leaders (KOLs), clinicians, and patient advocates.

- Anchor-Based Methods: Present data linking changes in the binary endpoint (e.g., achievement of remission) to changes in validated quality-of-life instruments or long-term clinical outcomes.

- Consensus Building: Use a modified Delphi process to reach agreement on the minimum absolute or relative improvement that would justify the new intervention's risks and costs.

- Documentation: The finalized margin must be justified and fixed in the study protocol prior to enrollment.

Mandatory Visualizations

Title: Power Analysis Workflow for Volunteer Sample Sizing

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Primary Function in Power Analysis Context |

|---|---|

| Statistical Software (R, SAS, PASS, nQuery) | Executes complex power calculations and simulation-based analysis for non-standard designs and endpoints. |

| Published Literature / Meta-Analyses | Provides empirical data for realistic baseline event rates, variability, and plausible effect sizes to inform assumptions. |

| Pilot Study Data | Offers study-specific estimates for variability (SD) and control group parameters, reducing assumption uncertainty. |

| Sample Size Simulation Script (Custom Code) | Allows flexible modeling of complex scenarios (e.g., clustered ordinal data, missing data patterns) not covered by standard software. |

| Expert Panel Consensus Guidelines | Helps define the clinically meaningful difference (the effect size Δ), ensuring the powered study has practical relevance. |

Troubleshooting Guide & FAQs

Q1: My Expectation-Maximization (EM) algorithm fails to converge when aggregating volunteer labels. What are the primary causes and solutions?

A: Non-convergence typically stems from poor initialization or insufficient data per task.

- Cause 1: Random Initialization. Randomly guessing initial volunteer competencies can lead to convergence on poor local maxima.

- Solution: Use a majority vote or simpler heuristic (like agreement with a known gold standard subset) to initialize the EM algorithm.

- Cause 2: Low Task Redundancy. With too few volunteers per task (e.g., <3), the model lacks sufficient signal to disentangle true labels from volunteer error.

- Solution: Increase the number of volunteers per task. The table below quantifies the relationship between volunteers per task, model accuracy, and convergence rate based on a recent simulation (2023).

Table 1: Impact of Volunteer Redundancy on Dawid-Skene Model Performance

| Volunteers per Task | Simulated Label Accuracy (Mean ± SD) | Convergence Rate (%) | Avg. Iterations to Converge |

|---|---|---|---|

| 2 | 0.72 ± 0.15 | 65% | 42 |

| 3 | 0.85 ± 0.08 | 92% | 28 |

| 5 | 0.92 ± 0.05 | 98% | 18 |

| 7 | 0.94 ± 0.03 | 100% | 15 |

Q2: How do I validate the estimated "ground truth" from the Dawid-Skene model in the absence of expert labels?

A: Implement cross-validation and posterior checks.

- Protocol: Held-Out Validation Set: If you have a small set of expert-verified tasks, hold them out during EM training. After model fitting, compare the model's posterior probabilities for the true class on these tasks. High posterior probability for the expert label indicates good model fit.

- Protocol: Posterior Uncertainty: Analyze tasks where the model's posterior probability for the most likely class is low (e.g., <0.8). Manually review these tasks; they often represent ambiguous cases or tasks labeled by consistently poor volunteers. This can identify systematic labeling issues.

- Workflow Diagram:

Title: Dawid-Skene EM Workflow with Validation Paths

Q3: What are the main differences between the classic Dawid-Skene (DS) model and other EM-based approaches for volunteer aggregation?

A: Key extensions address different assumptions about volunteer behavior. See the comparison table.

Table 2: Comparison of EM-Based Label Aggregation Models

| Model | Key Feature | Best For | Limitation |

|---|---|---|---|

| Classic Dawid-Skene | Estimates a confusion matrix per volunteer. | Scenarios where volunteers have systematic, class-dependent biases. | Requires many labels per volunteer to estimate full matrix; can overfit. |

| One-Parameter (Bernoulli) Model | Assumes each volunteer has a single, class-independent accuracy. | Homogeneous tasks where errors are equally likely across classes. | Fails if volunteer mistakes are specific to certain classes. |

| Item-Difficulty Models | Extends DS to model the inherent difficulty of each classification task. | Datasets with a mix of easy and ambiguous tasks. | Increased model complexity; requires more volunteers per task. |

Q4: How can I determine the optimal number of volunteers per task to minimize cost while maintaining label quality for my specific study?

A: Conduct a pilot study using an adaptive design.

- Experimental Protocol:

- Pilot Phase: Have a large number of volunteers (e.g., 10) label a small, representative subset of tasks (100-200).

- Model Fitting: Run the Dawid-Skene model on this pilot data.

- Simulation: Systematically subsample the collected labels to simulate having fewer volunteers per task (from 2 up to the max collected). Re-run the model on each subsample.

- Analysis: Plot the estimated label accuracy (from model posterior certainty) against the number of volunteers per task. Identify the point of diminishing returns.

- Decision: Use the smallest number of volunteers that achieves your target accuracy threshold for the main study.

- Cost-Quality Trade-off Diagram:

Title: Volunteer Redundancy Trade-Off Decision Point
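The subsampling protocol in Q4 can be sketched as below. Two substitutions are made for brevity and should be read as assumptions: simple majority voting stands in for the Dawid-Skene model, and accuracy is scored against simulated reference labels rather than model posterior certainty. The pilot data are generated, not real, and all names are hypothetical.

```python
import random
from collections import Counter


def simulate_pilot(n_tasks=150, n_volunteers=10, accuracy=0.75, seed=1):
    """Generate illustrative binary pilot labels with a shared accuracy."""
    rng = random.Random(seed)
    reference = {t: rng.randint(0, 1) for t in range(n_tasks)}
    labels = {
        t: [truth if rng.random() < accuracy else 1 - truth
            for _ in range(n_volunteers)]
        for t, truth in reference.items()
    }
    return labels, reference


def majority_vote(labels, rng):
    """Most common label; ties are broken at random."""
    counts = Counter(labels)
    top = max(counts.values())
    return rng.choice(sorted(l for l, c in counts.items() if c == top))


def accuracy_by_redundancy(pilot_labels, reference, ks, n_repeats=20, seed=0):
    """Subsample k labels per task and score the aggregated label."""
    rng = random.Random(seed)
    curve = {}
    for k in ks:
        correct = total = 0
        for _ in range(n_repeats):
            for task, labels in pilot_labels.items():
                subset = rng.sample(labels, k)
                correct += majority_vote(subset, rng) == reference[task]
                total += 1
        curve[k] = correct / total
    return curve


def smallest_k_meeting(curve, target):
    """Smallest redundancy level whose accuracy reaches the target."""
    ok = [k for k, acc in sorted(curve.items()) if acc >= target]
    return ok[0] if ok else None


pilot_labels, reference = simulate_pilot()
curve = accuracy_by_redundancy(pilot_labels, reference, ks=range(1, 11))
for k, acc in curve.items():
    print(k, round(acc, 3))
print("smallest k reaching 0.90:", smallest_k_meeting(curve, 0.90))
```

Swapping `majority_vote` for a fitted aggregation model keeps the rest of the loop unchanged.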

The Scientist's Toolkit: Research Reagent Solutions for Volunteer Studies

Table 3: Essential Materials & Digital Tools for Label Aggregation Research

| Item / Solution | Function in Research |

|---|---|

| Annotation Platform (e.g., Labelbox, Prodigy) | Presents tasks to volunteers, records labels, and exports structured data (worker ID, task ID, label). |

| Computational Environment (Python/R with NumPy, SciPy) | Provides the framework for implementing custom EM algorithms and statistical analysis. |

| Dawid-Skene Implementation Library (e.g., crowd-kit in Python) | Offers pre-tested, optimized implementations of aggregation models, reducing coding errors. |

| Gold Standard Task Set | A subset of tasks with known, expert-verified labels. Critical for model validation and initialization. |

| Volunteer Demographic & Experience Questionnaire | Metadata used to stratify volunteers and model subgroups (e.g., expert vs. novice confusion matrices). |

Troubleshooting Guides & FAQs

Q1: During iterative volunteer sampling, my classification accuracy plateaus or decreases after an initial rise. What could be causing this? A: This is often due to volunteer fatigue or a lack of diversity in subsequent adaptive batches. To troubleshoot:

- Check for Fatigue: Implement and analyze periodic simple attention-check tasks within your workflow. A drop in performance on these checks indicates fatigue.

- Analyze Batch Diversity: Calculate the representativeness of new adaptive batches against the initial data distribution. Use metrics like Population Stability Index (PSI) or Kullback–Leibler divergence.

- Protocol Adjustment: Introduce a "cool-off" period or switch to a new volunteer cohort. Re-calibrate your adaptive algorithm to prioritize underrepresented data features, not just high-uncertainty samples.
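Both drift metrics named above are easy to compute from binned feature counts. This sketch assumes the data have already been binned identically for the initial pool and the adaptive batch, and it adds a small smoothing constant so empty bins do not produce infinite values; the counts and helper names are illustrative.

```python
import math


def to_probs(counts, eps=1e-6):
    """Convert bin counts to probabilities, smoothing empty bins."""
    total = sum(counts)
    probs = [c / total + eps for c in counts]
    norm = sum(probs)
    return [p / norm for p in probs]


def kl_divergence(p_counts, q_counts):
    """KL(P || Q) in nats for two binned distributions."""
    p, q = to_probs(p_counts), to_probs(q_counts)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def psi(expected_counts, actual_counts):
    """Population Stability Index between a reference and a new batch."""
    e, a = to_probs(expected_counts), to_probs(actual_counts)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


# Illustrative bin counts, not real study data.
initial_pool = [400, 350, 250]
adaptive_batch = [100, 150, 250]
print("PSI:", round(psi(initial_pool, adaptive_batch), 4))
print("KL(batch || pool):", round(kl_divergence(adaptive_batch, initial_pool), 4))
```

PSI is the symmetric sum of the two KL divergences, so it does not depend on which distribution is treated as the reference.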

Q2: How do I determine the optimal batch size for real-time adjustment in a constrained budget? A: The optimal batch size balances statistical power with feedback frequency. Use the following pilot experiment protocol:

- Run a small pilot with a pre-defined task difficulty.

- Vary the batch size (e.g., 5, 10, 20, 50 submissions) and measure the time to reach a target accuracy threshold (e.g., 95%).

- Model the cost (time * volunteers per batch) against convergence speed. The "elbow" of this curve is often optimal.

Table: Pilot Study Results for Batch Size Optimization (Hypothetical Data)

| Batch Size | Avg. Time per Batch (min) | Batches to 95% Accuracy | Total Time to Target (min) | Total Volunteer Units (Batch Size * Batches) |

|---|---|---|---|---|

| 5 | 15 | 22 | 330 | 110 |

| 10 | 25 | 12 | 300 | 120 |

| 20 | 40 | 7 | 280 | 140 |

| 50 | 90 | 4 | 360 | 200 |

Interpretation: A batch size of 20 provides the best trade-off, minimizing total time without excessively inflating total volunteer units.
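The derived columns in the table follow directly from the first three; a brief check in code, using the hypothetical pilot values from the table, makes the arithmetic reproducible.

```python
# batch size -> (average minutes per batch, batches to reach 95% accuracy),
# copied from the hypothetical pilot table above.
pilot = {5: (15, 22), 10: (25, 12), 20: (40, 7), 50: (90, 4)}

summary = {
    size: {
        "total_time_min": minutes * batches,
        "volunteer_units": size * batches,
    }
    for size, (minutes, batches) in pilot.items()
}

fastest = min(summary, key=lambda s: summary[s]["total_time_min"])
for size, row in summary.items():
    print(size, row)
print("batch size with the shortest total time:", fastest)
```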

Q3: My real-time confidence score threshold for adaptive re-sampling seems too sensitive, causing excessive re-tasking. How can I calibrate it? A: Overly sensitive confidence thresholds waste volunteer resources. Implement this calibration protocol:

- Gold Standard Set: Create a subset of tasks with expert-verified ground truth labels.

- Threshold Sweep: Run your aggregation model (e.g., Dawid-Skene) on volunteer responses for these tasks. Record the model's confidence score for each item.

- Error Analysis: For a range of confidence thresholds (e.g., 0.6, 0.7, 0.8, 0.9), measure the False Negative Rate (incorrectly labeled items that are not flagged for re-sampling) and the resource cost.

- Set Threshold: Choose the threshold that keeps the FNR below an acceptable limit (e.g., 5%) while minimizing projected total tasks.

Table: Confidence Threshold Calibration Analysis

| Confidence Threshold | % of Tasks Flagged for Re-Sampling | False Negative Rate (Error) | Projected Cost Increase |

|---|---|---|---|

| 0.60 | 35% | 1.5% | 54% |

| 0.75 | 18% | 3.2% | 22% |

| 0.85 | 8% | 5.1% | 9% |

| 0.95 | 2% | 12.3% | 2% |

Q4: What is the most effective method for aggregating volunteer labels in an iterative setting where volunteer skill may change? A: Static aggregation models fail in adaptive settings. Use iterative expectation-maximization models that update volunteer reliability estimates with each batch.

- Recommended Method: Implement a Dynamic Dawid-Skene model, which updates per-volunteer confusion matrices after each batch of results, or a simpler Beta-Binomial model, which updates a single per-volunteer accuracy estimate.

- Workflow: This creates a feedback loop where improved task assignment (based on updated estimates) leads to better data, which further refines reliability estimates.

Title: Adaptive Label Aggregation & Volunteer Estimation Feedback Loop
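For the Beta-Binomial option, the per-batch update is a conjugate Beta posterior on each volunteer's accuracy. The sketch below is one possible form, not a specific library's API: it assumes each response can be scored as correct or wrong (against gold items or the current consensus), and it adds an optional `decay` factor, an assumption introduced here, to down-weight older batches when skill may change.

```python
class VolunteerReliability:
    """Beta posterior over one volunteer's probability of a correct label."""

    def __init__(self, prior_correct=1.0, prior_wrong=1.0, decay=1.0):
        self.alpha = prior_correct
        self.beta = prior_wrong
        self.decay = decay  # 1.0 = no forgetting (assumed extension)

    def update(self, n_correct, n_wrong):
        """Fold in one batch, discounting earlier evidence by `decay`."""
        self.alpha = self.decay * self.alpha + n_correct
        self.beta = self.decay * self.beta + n_wrong

    @property
    def mean(self):
        """Posterior mean accuracy."""
        return self.alpha / (self.alpha + self.beta)


static = VolunteerReliability()
adaptive = VolunteerReliability(decay=0.5)
# Illustrative batches: a strong first batch, then a weak second batch.
for n_correct, n_wrong in [(8, 2), (2, 8)]:
    static.update(n_correct, n_wrong)
    adaptive.update(n_correct, n_wrong)

print(f"no forgetting: {static.mean:.3f}, with decay: {adaptive.mean:.3f}")
```

With decay below 1, the estimate tracks the recent drop in performance more quickly than the static posterior does.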

Q5: How can I validate that my adaptive sampling strategy is improving outcomes over a simple random baseline? A: You must run a controlled, A/B-style validation experiment.

- Protocol:

- Split: Randomly divide your task pool into two statistically similar groups.

- Group A (Control): Use simple random sampling for volunteer assignment throughout.

- Group B (Test): Use your iterative & adaptive sampling strategy.

- Holdout Set: Maintain a separate, expert-labeled gold standard set covering the task domain.

- Metrics: Compare both groups on:

- Accuracy: Against the gold standard over time/expenditure.

- Efficiency: Cost (volunteer units) to reach a pre-defined accuracy target.

- Robustness: Final model performance on edge-case tasks.

Title: A/B Validation Protocol for Adaptive Sampling Strategies

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Iterative Volunteer Research

| Item/Reagent | Function in Research Context | Key Consideration |

|---|---|---|

| MTurk / Prolific | Platforms for recruiting a large, diverse pool of volunteer annotators. | Enable custom qualifications and master worker lists for longitudinal studies. |

| Django/Node.js Backend | Custom web server to host classification tasks, manage batch assignment, and log responses in real-time. | Must have low-latency API endpoints for adaptive re-sampling decisions. |

| DynamoDB / Firebase | NoSQL database for storing volatile state data: volunteer session info, task queues, and interim results. | Chosen for scalability and real-time update capabilities essential for adaptive workflows. |

| Expectation-Maximization Library (e.g., crowd-kit) | Software library implementing dynamic label aggregation models (e.g., Dawid-Skene, MACE). | Must allow incremental updates to parameters as new batch data arrives. |

| Statistical Computing Environment (R/Python with scipy) | For calculating convergence metrics, confidence intervals, and performing threshold analysis. | Scripts should be integrated into the main workflow to trigger adaptive rules. |

| Gold Standard Dataset | A subset of tasks (5-10%) with expert-verified labels, covering the full spectrum of task difficulty. | Used for continuous validation, calibration, and as a stopping criterion. |

Troubleshooting Guides and FAQs

Q1: During a simulation of volunteer classification in my crowdsourcing platform (e.g., Labelbox, Prodigy), the task completion time suddenly spikes. What could be the cause?

A: This is often due to network latency or a misconfigured batch size in your simulation script. First, verify your API call rate limits haven't been exceeded, which can cause queuing. Second, check if your simulated "volunteers" are being presented with overly large batches of images or text, causing client-side processing delays. Reduce the items_per_batch parameter in your simulation setup and monitor again.

Q2: I am using an annotation management platform (e.g., CVAT, Supervisely) and my inter-rater agreement (IRA) metrics (Fleiss' Kappa) are calculated inconsistently between my local script and the platform's dashboard. How do I resolve this?

A: Discrepancies commonly arise from differences in how missing annotations or "skip" responses are handled. The platform may exclude skipped items from its calculation, while your script might treat them as a distinct category. Protocol for Reconciliation: 1) Export the raw annotation judgments from the platform. 2) In your local script (Python, e.g., using statsmodels.stats.inter_rater.fleiss_kappa; scikit-learn provides Cohen's kappa only), explicitly define the list of possible labels, including a "Skipped" class. 3) Recalculate Fleiss' Kappa using the formula: κ = (P̄ - P̄e) / (1 - P̄e), where P̄ is the observed agreement and P̄e is the expected chance agreement. Ensure both calculations use the same label set and participant pool.
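The effect of skip handling is easy to demonstrate on a toy export. The sketch below computes κ = (P̄ - P̄e) / (1 - P̄e) twice on the same invented judgments: once with "Skipped" counted as a category, and once with any item containing a skip excluded. The data, the helper names, and the choice to drop whole items (so every item keeps the same number of raters) are assumptions for illustration.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa for counts[i][c] = raters putting item i in category c."""
    n_items = len(counts)
    n_raters = sum(counts[0])
    total = n_items * n_raters
    p_cat = [sum(row[c] for row in counts) / total
             for c in range(len(counts[0]))]
    p_exp = sum(p * p for p in p_cat)
    p_obs = sum(
        (sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items
    return (p_obs - p_exp) / (1 - p_exp)


def to_counts(judgments, categories):
    """Turn per-item label lists into an items-by-categories count matrix."""
    return [[labels.count(c) for c in categories] for labels in judgments]


# Illustrative export: three raters per item, some skips.
judgments = [
    ["A", "A", "A"],
    ["A", "A", "B"],
    ["B", "B", "B"],
    ["B", "B", "Skipped"],
    ["A", "Skipped", "Skipped"],
    ["B", "B", "A"],
]

kappa_skip_as_class = fleiss_kappa(
    to_counts(judgments, ["A", "B", "Skipped"]))
complete = [j for j in judgments if "Skipped" not in j]
kappa_skips_excluded = fleiss_kappa(to_counts(complete, ["A", "B"]))

print(f"skip as category: {kappa_skip_as_class:.3f}")
print(f"skipped items excluded: {kappa_skips_excluded:.3f}")
```

The two conventions give different values on identical raw data, which is the kind of gap that typically separates a dashboard figure from a local script.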

Q3: When simulating volunteer behavior with the crowdkit or django-annotator libraries, how can I model variable volunteer expertise to optimize task allocation?

A: You must implement a latent variable model. Experimental Protocol for Simulating Variable Expertise: 1) Define a pool of N simulated volunteers. 2) Assign each volunteer an "expertise" score θ_i sampled from a Beta distribution (e.g., Beta(2,5) for a beginner-skewed pool). 3) For each task item with true label T_j, have volunteer i provide a correct label with probability equal to their θ_i. 4) Use the crowdkit library's GoldMajorityVote or MACE aggregator to infer true labels from the noisy simulated judgments. Vary the number of volunteers per task and measure inference accuracy to find the optimum.

Q4: I receive "Docker container runtime errors" when deploying a custom annotation interface for a drug compound classification task. What are the first diagnostic steps?

A: 1) Check the container's log output using docker logs [container_id]. 2) Verify that all required volumes are correctly mounted, especially any directories containing large compound structure files (e.g., SDF, SMILES). 3) Ensure the Docker image has sufficient memory (--memory flag) allocated; parsing chemical files is resource-intensive. 4) Confirm the internal application port mapping matches the Dockerfile EXPOSE instruction and your runtime -p flag.

Q5: How do I handle data privacy (e.g., patient data in medical imaging annotation) when using cloud-based platforms like Scale AI or Hasty?

A: You must engage the platform's On-Premises or Virtual Private Cloud (VPC) deployment option. Before uploading any data, ensure you have executed a Data Processing Agreement (DPA). For simulations, always use fully synthetic datasets (e.g., generated with pydicom and python-rtvs) or public, de-identified repositories like The Cancer Imaging Archive (TCIA). Never use real PHI in simulation environments.

Table 1: Comparison of Popular Annotation Platforms for Volunteer Task Simulation

| Platform / Software | Key Simulation Feature | Cost Model (Starting) | Optimal For Volunteer # Research | API for Simulation? |

|---|---|---|---|---|

| Labelbox | Synthetic Data Pipeline | Enterprise Quote | High (dynamic assignment logic) | Yes (Python) |

| Prodigy | Active Learning Loops | $490 (one-time) | Medium (controlled studies) | Yes (REST) |

| CVAT | Open-source, Docker-deployable | Free | High (full control over environment) | Yes (Python SDK) |

| Amazon SageMaker Ground Truth | Built-in workforce simulation | Pay-per-task | Medium (A/B testing configurations) | Yes (boto3) |

| Doccano | Open-source text focus | Free | Low to Medium (lightweight sims) | Yes (REST) |

| crowdkit library | Pure Python aggregation models | Free (Apache-2.0 License) | High (algorithmic research) | Library-based |

Table 2: Impact of Volunteers Per Task on Annotation Quality & Cost (Simulated Data) Results from a simulated image classification task with 1000 items, varying ground truth difficulty.

| Volunteers Per Task | Mean IRA (Fleiss' κ) | Aggregate Accuracy vs. Ground Truth | Estimated Relative Cost (Units) |

|---|---|---|---|

| 1 | N/A | 72.5% | 1.0x |

| 3 | 0.45 | 88.2% | 3.0x |

| 5 | 0.61 | 92.7% | 5.0x |

| 7 | 0.65 | 93.1% | 7.0x |

| 9 | 0.66 | 93.2% | 9.0x |

Experimental Protocol: Determining Optimal Volunteers per Task

Title: Protocol for Optimizing Volunteer Count in Classification Tasks.

Objective: To determine the point of diminishing returns for annotation quality versus cost by varying the number of volunteers per task.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Dataset Preparation: Procure or generate a dataset of M items (e.g., 2000 cell microscopy images). Establish a verified ground truth label for each item through expert consensus.

- Volunteer Pool Simulation: Using the crowdkit library, simulate a pool of V volunteers (e.g., 500). Assign each a reliability score θ from a Beta(α,β) distribution to model a heterogeneous skill pool.

- Task Assignment Simulation: For each experimental run k, assign every dataset item to N_k simulated volunteers (e.g., N_k ∈ [1, 3, 5, 7, 9]). Simulate their annotations, where the probability of a correct label equals the volunteer's θ.

- Aggregation & Measurement: For each run k, aggregate the N_k annotations per item using the Dawid-Skene model (via crowdkit.aggregation.DawidSkene). Compare aggregated labels to ground truth to compute accuracy. Compute Inter-Rater Agreement (IRA) using Fleiss' Kappa for runs where N_k > 1.

- Analysis: Plot accuracy and IRA against N_k. Identify the N_k where the marginal gain in accuracy falls below a predefined cost-threshold (e.g., <2% improvement per added volunteer).
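The simulation and analysis steps of this protocol can be sketched with the standard library. Several choices here are assumptions rather than part of the protocol: labels are binary, expertise is drawn from Beta(8, 2), and majority voting replaces the Dawid-Skene aggregator to keep the example dependency-free. The helper `diminishing_returns_point` is a hypothetical name for the stopping rule in the analysis step.

```python
import random


def simulate_accuracy(n_items, n_volunteers, n_per_task,
                      alpha=8.0, beta=2.0, seed=0):
    """Majority-vote accuracy when each volunteer i is correct w.p. theta_i."""
    rng = random.Random(seed)
    thetas = [rng.betavariate(alpha, beta) for _ in range(n_volunteers)]
    correct = 0
    for _ in range(n_items):
        truth = rng.randint(0, 1)
        raters = rng.sample(range(n_volunteers), n_per_task)
        votes = [truth if rng.random() < thetas[v] else 1 - truth
                 for v in raters]
        ones = sum(votes)
        if 2 * ones == len(votes):
            guess = rng.randint(0, 1)  # break ties at random
        else:
            guess = int(2 * ones > len(votes))
        correct += guess == truth
    return correct / n_items


def diminishing_returns_point(curve, min_gain_per_volunteer=0.02):
    """First N where moving to the next level gains less than the threshold
    per added volunteer; returns the largest N if no level qualifies."""
    levels = sorted(curve)
    for n_prev, n_next in zip(levels, levels[1:]):
        gain = (curve[n_next] - curve[n_prev]) / (n_next - n_prev)
        if gain < min_gain_per_volunteer:
            return n_prev
    return levels[-1]


curve = {n: simulate_accuracy(1000, 500, n) for n in [1, 3, 5, 7, 9]}
for n, acc in curve.items():
    print(n, round(acc, 3))
print("suggested volunteers per task:", diminishing_returns_point(curve))
```

Applied to the accuracy column of the simulated results table above (72.5%, 88.2%, 92.7%, 93.1%, 93.2%), the same stopping rule with a 2% threshold selects 5 volunteers per task.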

Visualizations

Title: Simulation Workflow for Optimizing Volunteer Count

Title: Core Trade-offs in Volunteer Number Research

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Volunteer Number Research |
|---|---|
| `crowdkit` Python Library | Provides production-ready implementations of aggregation (Dawid-Skene, MACE) and simulation models for benchmarking. |
| Benchmark Dataset (e.g., MNIST, CIFAR-10) | A publicly available benchmark dataset with known labels, used to simulate classification tasks without privacy concerns. |
| Beta Distribution (from `scipy.stats`) | A statistical model used to generate realistic, continuous expertise scores (θ) for a simulated volunteer population. |
| Docker & Docker Compose | Containerization tools to ensure reproducible deployment of annotation platforms (like CVAT) across research environments. |
| Inter-Rater Agreement Metric (Fleiss' Kappa) | A statistical measure to quantify the reliability of agreement between multiple volunteers for categorical items. |
| Ground Truth Dataset | The expert-verified set of labels for experimental data, serving as the gold standard against which volunteer accuracy is measured. |
| REST API Client (e.g., `requests`, platform-specific SDK) | Software to programmatically interact with annotation platforms, enabling automated task deployment and data collection for experiments. |

Solving Common Pitfalls and Fine-Tuning Your Volunteer Strategy

Technical Support Center: Troubleshooting & FAQs

FAQ 1: What does "High Disagreement with Increasing Volunteers" mean in a classification task? This red flag occurs when the inter-rater reliability (IRR) metric decreases or fails to improve as more volunteers (annotators) are added to a classification task, such as labeling cell images or scoring assay results. Instead of converging toward a consensus, data variability increases.

FAQ 2: What are the primary causes of this issue?

- Cause A: Ambiguous or Incomplete Task Guidelines: Instructions lack clear decision boundaries for edge cases.

- Cause B: Inconsistent Volunteer Expertise: High variance in annotator training or domain knowledge.

- Cause C: Inherently Subjective or Complex Data: The classification criterion relies on subtle, subjective judgment.

- Cause D: Faulty Task Interface or Design: The data collection platform introduces bias or confusion.

FAQ 3: How do I quantitatively diagnose the root cause? Implement the following diagnostic protocol.

Diagnostic Experimental Protocol

Objective: Systematically isolate the factor causing high disagreement.

Method: Perform a controlled, phased experiment with your volunteer pool.

Phase 1 - Baseline IRR Measurement:

- Select a random subset of `N` items from your dataset.

- Have all current volunteers (`V`) classify each item.

- Calculate Fleiss' Kappa (for categorical data) or Intraclass Correlation Coefficient (ICC) (for continuous scores) to establish baseline disagreement.
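For the categorical case, the baseline measurement can be sketched as a direct implementation of the Fleiss' Kappa formula. The function name `fleiss_kappa`, the input layout (an items-by-categories table of rating counts), and the toy numbers are assumptions made for illustration; a maintained statistics package is preferable for real analyses.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa from an items x categories table of rating counts.

    counts[i][j] is the number of volunteers who put item i in category j.
    Every item must be rated by the same number of volunteers (at least 2).
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("each item needs the same number (>= 2) of ratings")
    total = n_items * n_raters
    n_cats = len(counts[0])
    # Share of all ratings that fell into each category.
    p_cat = [sum(row[j] for row in counts) / total for j in range(n_cats)]
    # Observed agreement: fraction of agreeing rater pairs, averaged over items.
    p_obs = sum(
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items
    # Agreement expected by chance from the category shares.
    p_exp = sum(p * p for p in p_cat)
    if p_exp == 1.0:
        return float("nan")  # kappa is undefined when only one category is used
    return (p_obs - p_exp) / (1 - p_exp)

# Toy baseline: 4 items, 3 volunteers, 2 categories (illustrative numbers only).
toy_counts = [[3, 0], [0, 3], [2, 1], [1, 2]]
baseline_kappa = fleiss_kappa(toy_counts)
print(f"baseline kappa = {baseline_kappa:.3f}")
```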

Phase 2 - Controlled Variable Introduction:

- Group 1 (Guideline Test): Provide a refined, detailed guideline with visual examples to a randomly selected half of volunteers. The other half uses the original guideline. Re-measure IRR on a new item set.

- Group 2 (Expertise Test): Segment volunteers by proven expertise (e.g., via a pre-test). Calculate IRR separately for expert and novice groups.

- Group 3 (Data Complexity Test): Have a panel of ground-truth experts label all items. Calculate per-item difficulty (disagreement index). Correlate difficulty with volunteer disagreement.

Phase 3 - Analysis:

- Compare IRR metrics across groups and phases using the decision table below.

Table 1: Key Inter-Rater Reliability Metrics for Diagnosis

| Metric | Best For | Interpretation Range | Diagnostic Implication |
|---|---|---|---|
| Fleiss' Kappa (κ) | Multi-volunteer, categorical labels | Poor: κ < 0.4; Good: 0.4 ≤ κ ≤ 0.75; Excellent: κ > 0.75 | Low κ across all volunteers suggests Cause A or C. |
| Intraclass Correlation Coefficient (ICC) | Multi-volunteer, continuous ratings | Poor: ICC < 0.5; Moderate: 0.5 ≤ ICC ≤ 0.75; Good: ICC > 0.75 | Low ICC indicates high variance; check for Cause B. |
| Disagreement Index (DI) | Per-item difficulty | DI = 1 - (Agreements / Total Pairs) | High DI on specific items flags Cause C. |
| Kappa by Expertise Group | Isolating volunteer skill impact | Δκ (Expert - Novice) | A large Δκ (>0.3) strongly indicates Cause B. |
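The Disagreement Index in the table can be computed per item by counting agreeing pairs of labels. The sketch below is illustrative only: the function name, the item identifiers, the labels, and the 0.5 cutoff for flagging hard items are all assumptions, not values prescribed by the protocol.

```python
from itertools import combinations

def disagreement_index(labels):
    """DI = 1 - (agreeing pairs / total pairs) for one item's labels."""
    pairs = list(combinations(labels, 2))
    if not pairs:
        raise ValueError("need at least two labels per item")
    agreeing = sum(a == b for a, b in pairs)
    return 1 - agreeing / len(pairs)

# Hypothetical volunteer labels for two items.
item_labels = {
    "img_001": ["mitotic", "mitotic", "mitotic", "mitotic"],
    "img_002": ["mitotic", "apoptotic", "mitotic", "apoptotic"],
}
di_by_item = {item: disagreement_index(v) for item, v in item_labels.items()}

# Flag items whose DI exceeds an assumed cutoff for expert review.
hard_items = [item for item, di in di_by_item.items() if di > 0.5]
print(di_by_item, hard_items)
```

Items flagged this way can then be compared against expert-derived difficulty to check for Cause C.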

Table 2: Diagnostic Decision Matrix Based on Experimental Results

| Test Scenario and Result Pattern | Most Likely Primary Cause | Recommended Action |
|---|---|---|
| IRR improves in Group 1 (new guidelines) but not in control group. | Cause A: Ambiguous Guidelines | Revise protocol with clear examples and decision trees. |
| High IRR in expert group, low IRR in novice group. Large Δκ. | Cause B: Inconsistent Expertise | Implement mandatory training & qualification tests. Use weighted voting. |
| High Disagreement Index (DI) correlates with specific data subtypes. | Cause C: Inherent Data Subjectivity | Redesign task: use ranking vs. classification, or employ expert consensus for those items. |
| IRR is low uniformly, and UI/UX feedback reports confusion. | Cause D: Faulty Task Design | Run a usability study and simplify the data collection interface. |

Visualization: Diagnostic Workflow

Title: Diagnostic Workflow for High Volunteer Disagreement

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Volunteer Classification Studies

| Item / Solution | Function in Diagnosis | Example / Specification |
|---|---|---|
| Standardized Reference Image Set | Provides ground truth for training and calibrating volunteer expertise. | A bank of 50-100 pre-labeled images/cases validated by expert consensus. |
| Qualification Test Module | Screens and segments volunteers by skill level pre-task. | A 20-item test with IRR >0.8 against expert labels. |
| Annotation Platform (Configurable) | Hosts tasks; allows A/B testing of guidelines and interface designs. | Tools like Labelbox, Supervisely, or custom REDCap surveys. |
| Statistical Analysis Script Pack | Automates calculation of κ, ICC, DI, and generates reports. | R script suite (`irr` package) or Python (`statsmodels`, `sklearn`). |
| Detailed Guideline Framework | Defines decision boundaries with visual anchors for edge cases. | Interactive PDF with expandable flowchart sections. |
| Expert Consensus Panel | Establishes reference standards for ambiguous data items. | 3+ domain experts using a modified Delphi process. |

Technical Support Center

Troubleshooting Guides and FAQs

Q1: In our pilot, expert raters consistently outperform naive volunteers, but their throughput is low and cost is prohibitive. How can we design a scalable protocol? A: Implement a Tiered Skill-Pool workflow. Use a small gold-standard dataset, annotated by experts, to screen and qualify naive raters. All raters complete a short qualification task. Those achieving >90% accuracy on the gold-standard set are categorized as "Validated Naive Raters" and are assigned more complex sub-tasks. Experts are reserved for edge cases and final validation. This optimizes cost without sacrificing data quality.

Q2: We see high disagreement among naive raters on a cell classification task. Is this a task design or a rater skill issue? A: First, diagnose using the Confusion Matrix Protocol. Give the same 50 images to 20 naive raters and 2 experts, then tabulate the classifications. If naive raters show high intra-group agreement but deviate systematically from the experts (e.g., consistently misclassifying Cell Type A as B), the issue is likely ambiguous task definitions or inadequate training. If naive raters agree with each other no better than chance, the task may be too complex for naive raters.
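The tabulation step of the Confusion Matrix Protocol can be sketched as follows. The function names, class labels, and toy votes are hypothetical; the sketch assumes one reference label per image (e.g., the experts' agreed label) and a list of naive labels per image.

```python
from collections import Counter

def tabulate_confusions(expert_labels, naive_votes):
    """Count (expert label, naive label) pairs over all images and naive raters."""
    table = Counter()
    for ref, votes in zip(expert_labels, naive_votes):
        for vote in votes:
            table[(ref, vote)] += 1
    return table

def top_systematic_error(table):
    """Most frequent off-diagonal (expert, naive) pair and its share of all
    naive errors, or None if naive raters made no errors."""
    errors = {pair: n for pair, n in table.items() if pair[0] != pair[1]}
    if not errors:
        return None
    pair = max(errors, key=errors.get)
    return pair, errors[pair] / sum(errors.values())

# Toy data: 4 images, 3 naive raters each (illustrative only).
expert_labels = ["A", "A", "B", "C"]
naive_votes = [["B", "B", "A"], ["B", "A", "B"], ["B", "B", "B"], ["C", "A", "C"]]

table = tabulate_confusions(expert_labels, naive_votes)
worst = top_systematic_error(table)
print(worst)
```

A single off-diagonal cell that accounts for most naive errors points to a systematic confusion (guidelines or training), whereas errors spread evenly across cells are more consistent with a task that is too hard for the pool.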

Q3: What is the optimal mix of expert and naive raters for a large-scale image annotation project in drug screening? A: The optimal mix depends on task complexity and target accuracy. For a binary classification task (e.g., "Apoptotic vs. Healthy Cell"), a blend of 10% expert and 90% naive raters, with a consensus model (e.g., requiring 3 naive votes to override 1 expert vote), can achieve 98% of expert-only accuracy at 60% lower cost. Refer to the table below for guidance.

Table 1: Rater Strategy Selection Guide

| Task Complexity | Target Accuracy | Recommended Expert % | Naive Rater Strategy | Expected Cost Reduction |
|---|---|---|---|---|
| Low (Binary, clear morphology) | >95% | 5-10% | Majority vote from 5+ raters | 70-80% |
| Medium (Multiple classes) | >90% | 15-25% | Consensus + Expert adjudication of disputes | 50-60% |
| High (Subtle phenotypes) | >99% | 50-100% | Expert only or naive pre-screening with expert review | 0-30% |
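One way to read the consensus rule from Q3 (three naive votes are needed to override one expert vote) is as a weighted vote in which an expert counts for more than two but fewer than three naive raters. The sketch below encodes that reading with an assumed expert weight of 2.5; the function name, the weight, and the labels are illustrative assumptions rather than a prescribed scheme.

```python
from collections import defaultdict

def weighted_consensus(votes, expert_weight=2.5, naive_weight=1.0):
    """votes: list of (label, rater_type), rater_type is 'expert' or 'naive'.

    Returns the label with the highest total weight. If totals tie, the
    label that appeared first in `votes` wins.
    """
    scores = defaultdict(float)
    for label, rater_type in votes:
        scores[label] += expert_weight if rater_type == "expert" else naive_weight
    return max(scores, key=scores.get)

# Two dissenting naive raters do not override the expert...
two_naive = [("apoptotic", "expert"), ("healthy", "naive"), ("healthy", "naive")]
# ...but three do.
three_naive = two_naive + [("healthy", "naive")]

print(weighted_consensus(two_naive), weighted_consensus(three_naive))
```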

Q4: How do we create an effective training module for naive raters to improve initial accuracy? A: Develop an Interactive Calibration Protocol:

- Pre-Test: Raters classify 20 benchmark images.

- Focused Training: System presents immediate feedback, highlighting features using expert-annotated examples, specifically for the classes the rater scored poorly on.

- Post-Test: Raters classify a new set of 20 images. Only those achieving a preset threshold (e.g., 85% accuracy) proceed to the main task.

This dynamic training tailors instruction to individual weaknesses.

Q5: What metrics should we track to monitor rater performance dynamically in a long-term study? A: Implement a dashboard tracking these key metrics per rater and cohort:

- Accuracy vs. Gold Standard: Percentage agreement with expert-validated hidden control tasks (seeded at 5% frequency).

- Confidence-Consistency Score: Correlation between a rater's self-reported confidence and the agreement of their rating with the final consensus.

- Time-on-Task Drift: Significant increases may indicate fatigue; decreases may indicate loss of engagement.

- Inter-Rater Reliability (Fleiss' Kappa): Measured weekly across the pool to detect overall task understanding decay.
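The first metric, accuracy against hidden gold-standard control tasks, can be sketched as below. The record layout `(rater_id, item_id, label)`, the function names, the identifiers, and the 0.85 flagging threshold are assumptions chosen for illustration.

```python
from collections import defaultdict

def gold_accuracy_by_rater(responses, gold):
    """responses: iterable of (rater_id, item_id, label); gold: {item_id: label}.

    Only items present in `gold` (the hidden control tasks) are scored.
    """
    hits = defaultdict(int)
    seen = defaultdict(int)
    for rater_id, item_id, label in responses:
        if item_id in gold:
            seen[rater_id] += 1
            hits[rater_id] += (label == gold[item_id])
    return {rater: hits[rater] / seen[rater] for rater in seen}

def flag_raters(accuracy, threshold=0.85):
    """Raters whose control-task accuracy is below the (assumed) threshold."""
    return sorted(r for r, acc in accuracy.items() if acc < threshold)

# Toy data: two control items seeded among regular tasks.
gold = {"ctrl_1": "apoptotic", "ctrl_2": "healthy"}
responses = [
    ("r1", "ctrl_1", "apoptotic"), ("r1", "img_9", "healthy"),
    ("r1", "ctrl_2", "healthy"),
    ("r2", "ctrl_1", "healthy"), ("r2", "ctrl_2", "healthy"),
]
accuracy = gold_accuracy_by_rater(responses, gold)
flagged = flag_raters(accuracy)
print(accuracy, flagged)
```

In a long-running study the same function could be applied per week or per batch, so that a drop in a rater's control-task accuracy shows up as a trend rather than a single number.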

Experimental Protocols

Protocol 1: Gold-Standard Dataset Creation for Rater Qualification

Objective: Generate a reliable benchmark to screen naive rater skill.

- Sample Selection: Randomly select 100 data units (e.g., microscopy images) from the master dataset.

- Expert Annotation: Three independent domain experts annotate each unit. Resolve disagreements through discussion to reach a consensus label. This becomes the "gold-standard" set.

- Validation: A fourth expert reviews a random 20% of the consensus labels. Target: >99% agreement.

- Deployment: Integrate 10-20 gold-standard units randomly into the naive rater qualification task.

Protocol 2: Determining Optimal Number of Raters Per Task (Naive Pool)

Objective: Find the point of diminishing returns for adding more naive raters.

- Setup: Select a task of medium complexity. Use a subset of 500 data units with expert-derived ground truth.

- Experiment: Simulate crowdsourcing by randomly sampling n naive ratings (where n=1,3,5,7,9) for each unit from a pilot rater pool.

- Analysis: For each value of n, calculate the accuracy of the majority vote against the ground truth. Plot accuracy vs. n.

- Output: Identify the value of n where the accuracy curve plateaus. This is the cost-effective number of naive raters per unit for this task complexity.
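The resampling experiment in Protocol 2 can be sketched as follows, assuming the pilot data are available as a list of naive labels per unit. The function name, the number of random draws, the random tie-breaking, and the toy ratings are illustrative assumptions.

```python
import random
from collections import Counter

def majority_accuracy(ratings, truth, n, n_draws=200, seed=0):
    """Average majority-vote accuracy when n ratings are drawn per unit.

    ratings: {unit_id: [labels from the pilot pool]}; truth: {unit_id: label}.
    Each unit needs at least n pilot ratings.
    """
    rng = random.Random(seed)
    correct = 0
    total = 0
    for _ in range(n_draws):
        for unit, labels in ratings.items():
            counts = Counter(rng.sample(labels, n))
            top = max(counts.values())
            # Break ties at random among the most frequent labels.
            winner = rng.choice(sorted(l for l, c in counts.items() if c == top))
            correct += (winner == truth[unit])
            total += 1
    return correct / total

# Toy pilot pool: 9 naive ratings per unit (illustrative only).
ratings = {
    "u1": ["A"] * 7 + ["B"] * 2,
    "u2": ["B"] * 6 + ["A"] * 3,
    "u3": ["A"] * 5 + ["B"] * 4,
}
truth = {"u1": "A", "u2": "B", "u3": "A"}

curve = {n: majority_accuracy(ratings, truth, n) for n in (1, 3, 5, 7, 9)}
for n, acc in curve.items():
    print(f"n={n}: accuracy={acc:.3f}")
```

Drawing many random subsets per unit, rather than one, smooths the curve so that the plateau is not an artifact of a single lucky or unlucky sample.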

Table 2: Simulated Results for Protocol 2 (Hypothetical Data)

| Number of Naive Raters (n) | Majority Vote Accuracy (%) | Marginal Gain (Percentage Points) |
|---|---|---|
| 1 | 72.5 | - |
| 3 | 86.4 | +13.9 |
| 5 | 91.2 | +4.8 |
| 7 | 93.1 | +1.9 |
| 9 | 93.8 | +0.7 |
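The plateau can be located programmatically from the hypothetical values in Table 2. The sketch below assumes a stopping rule of "fewer than 2 percentage points gained per step"; that cutoff and the function name are illustrative choices, and each step here adds two raters, not one.

```python
# Accuracy values copied from Table 2 (hypothetical data).
accuracy_by_n = {1: 72.5, 3: 86.4, 5: 91.2, 7: 93.1, 9: 93.8}

def plateau_point(accuracy_by_n, min_gain=2.0):
    """Last n reached before the step-to-step gain drops below min_gain."""
    ns = sorted(accuracy_by_n)
    chosen = ns[0]
    for prev, curr in zip(ns, ns[1:]):
        gain = accuracy_by_n[curr] - accuracy_by_n[prev]
        if gain < min_gain:
            break  # the next step is no longer worth its cost
        chosen = curr
    return chosen

recommended_n = plateau_point(accuracy_by_n)
print(f"recommended raters per unit: {recommended_n}")
```

Under this rule the step from 5 to 7 raters (+1.9 points) is the first to fall below the cutoff, so the sketch selects n = 5; a looser cutoff of 1 point would select n = 7.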

Visualizations

Tiered Rater Assignment Workflow

Naive Consensus with Expert Adjudication

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Rater Optimization Experiments

| Item | Function in Research Context | Example/Supplier |
|---|---|---|
| Gold-Standard Dataset | Serves as the objective "ground truth" for measuring rater accuracy and training performance. | Internally generated via Protocol 1. |
| Crowdsourcing Platform Software | Enables deployment of tasks to distributed naive rater pools, collects responses, and manages rater identity/performance. | Amazon Mechanical Turk (MTurk), Prolific, Labelbox, or custom LabKey modules. |
| Inter-Rater Reliability (IRR) Statistical Package | Quantifies the degree of agreement among raters, beyond chance. Critical for assessing task clarity. | `irr` package in R, `statsmodels.stats.inter_rater` in Python. |