Ensuring Reliability in Biomedical Research: A Framework for Calibrating Automated Ecological Data Verification Systems

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to calibrating automated verification systems for complex ecological data.

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to calibrating automated verification systems for complex ecological data. It explores the foundational principles of why traditional QA/QC fails with ecological datasets, details methodological approaches for building and applying robust calibration protocols, offers troubleshooting strategies for common system failures, and presents validation frameworks for benchmarking performance against industry standards. The scope covers the entire lifecycle from system design to regulatory-grade validation, addressing the critical need for reliability in data driving biomedical discoveries and clinical decisions.

The Imperative for Calibration: Why Ecological Data Breaks Standard QA/QC

Technical Support Center: Troubleshooting & FAQs

Context: This support center operates within the framework of a thesis project on calibrating automated verification systems for ecological data research. The guides address common issues that arise when integrating diverse ecological data streams into biomedical analyses.

Frequently Asked Questions (FAQs)

Q1: During microbiome-host multi-omics integration, my automated verification pipeline flags a batch effect. The environmental metadata (e.g., sampling location, diet logs) and the host transcriptome data appear misaligned temporally. What are the first steps to diagnose this?

A: This is a common calibration challenge for automated systems. Follow this protocol:

- Re-synchronize Timestamps: Verify the ISO 8601 format (YYYY-MM-DDThh:mm:ss) is consistent across all instruments and sample logs. Even minor timezone mismatches can cause flags.

- Run a Metadata Concordance Check: Use a script to cross-reference sample IDs from the sequencing manifest against the environmental database. A discrepancy rate >5% typically indicates a systematic ingestion error.

- Execute a Control Correlation: Select one known stable variable (e.g., laboratory ambient temperature from a controlled incubator) present in both datasets. Calculate its correlation across all samples. A correlation coefficient (r) < 0.7 suggests a misalignment requiring manual audit.
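The concordance check and control correlation above can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the function names (`discrepancy_rate`, `pearson_r`, `diagnose`) are hypothetical, and the 5% and 0.7 cut-offs are simply the values quoted in the steps above.

```python
from math import sqrt

def discrepancy_rate(manifest_ids, environmental_ids):
    """Fraction of manifest sample IDs with no match in the environmental database."""
    manifest = set(manifest_ids)
    if not manifest:
        raise ValueError("manifest is empty")
    missing = manifest - set(environmental_ids)
    return len(missing) / len(manifest)

def pearson_r(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)

def diagnose(manifest_ids, environmental_ids, control_a, control_b):
    """Apply the 5% discrepancy and r < 0.7 rules quoted in the FAQ."""
    rate = discrepancy_rate(manifest_ids, environmental_ids)
    r = pearson_r(control_a, control_b)
    return {
        "discrepancy_rate": rate,
        "ingestion_error_suspected": rate > 0.05,
        "control_r": r,
        "manual_audit_needed": r < 0.7,
    }
```

Here `control_a` and `control_b` are the same stable variable (for example incubator temperature) as recorded in each dataset, ordered by sample.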

Q2: My ecological exposure data (air quality sensors, geospatial data) is continuous, but my patient cytokine data is from discrete time-points. How should I pre-process the environmental variables for association analysis without introducing bias?

A: The key is to avoid arbitrary aggregation. Use an exposure window model based on the biological system under study.

- Define the Latency Window: Consult literature for your cytokine of interest (e.g., for IL-6 response to PM2.5, a 24-72 hour window is often relevant).

- Calculate the Area Under the Exposure Curve (AUEC): For each patient's cytokine measurement timepoint T, integrate the continuous environmental signal over the defined latency window preceding T.

- Use as Covariate: Input the calculated AUEC value as a continuous covariate in your linear mixed model alongside other omics data.
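One way to write the AUEC down explicitly is as an integral over the latency window, approximated by the trapezoidal rule on the recorded sensor samples. The symbols are assumptions introduced here for illustration: x(t) is the environmental signal, w is the latency window length, and t_1 < ... < t_K are the sensor timestamps that fall inside [T - w, T].

```latex
\mathrm{AUEC}(T) \;=\; \int_{T-w}^{T} x(t)\,\mathrm{d}t
\;\approx\; \sum_{k=1}^{K-1} \frac{x(t_k) + x(t_{k+1})}{2}\,\bigl(t_{k+1} - t_k\bigr)
```

Dividing the result by the covered duration (t_K - t_1) gives a time-weighted average exposure, which is easier to compare across patients whose windows have uneven sensor coverage.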

Q3: When calibrating my verification system for 16S rRNA data, I encounter high false-positive warnings for "taxonomic outlier samples." The system uses a pre-trained model on Earth Microbiome Project (EMP) data. How can I refine it for a specialized host-associated dataset (e.g., gut microbiome in a specific disease cohort)?

A: This indicates a domain mismatch. Retrain the outlier detection layer.

- Create a Cohort-Specific Baseline: From your own data, after rigorous QC, randomly select 80% of samples to calculate a robust center (median) for key beta-diversity distances.

- Calculate Adaptive Thresholds: Define outlier thresholds as the 95th percentile of distances within this baseline set, not the EMP-derived thresholds.

- Update the Verification Rule: Replace the global EMP threshold in your automated system with this cohort-adaptive threshold. Re-run verification.
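A minimal sketch of the cohort-adaptive threshold follows. It assumes each QC-passed sample has already been reduced to a single beta-diversity distance (for example, its distance to the cohort centroid); that reduction, and the names `percentile`, `cohort_threshold`, and `flag_outliers`, are illustrative assumptions rather than part of any specific pipeline.

```python
import random
from statistics import median

def percentile(values, q):
    """Linear-interpolation percentile (q in [0, 100]) of a non-empty list."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("values is empty")
    pos = (len(ordered) - 1) * q / 100
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)

def cohort_threshold(distances_by_sample, baseline_fraction=0.8, q=95, seed=0):
    """Draw a random baseline subset and derive its median and q-th percentile."""
    rng = random.Random(seed)  # fixed seed so the calibration is reproducible
    sample_ids = sorted(distances_by_sample)
    k = max(1, round(len(sample_ids) * baseline_fraction))
    baseline = [distances_by_sample[s] for s in rng.sample(sample_ids, k)]
    return {"center": median(baseline), "threshold": percentile(baseline, q)}

def flag_outliers(distances_by_sample, threshold):
    """Sample IDs whose distance exceeds the cohort-adaptive threshold."""
    return sorted(s for s, d in distances_by_sample.items() if d > threshold)
```

The returned `threshold` is what would replace the global EMP-derived value in the verification rule.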

Q4: In a multi-omics workflow (metagenomics, metabolomics, clinical vitals), the automated data integrity checks pass, but the integrated data stream fails the "plausibility check" for a machine learning model. What does this mean?

A: Integrity checks validate format, but plausibility checks assess biological/technical coherence. This failure suggests feature-level inconsistencies.

- Run an Inter-Modality Correlation Test: For a subset of features known to be related (e.g., E. coli abundance from metagenomics and lipopolysaccharide (LPS) intensity in metabolomics), perform a Spearman correlation. See Table 1 for expected correlation ranges.

- Check for Unit of Measurement (UoM) Errors: Ensure all continuous variables are on a comparable scale. A common error is logging (log2, log10, ln) applied inconsistently across omics layers.

Table 1: Expected Correlation Ranges for Multi-Omics Feature Pairs

| Feature Pair (Omics Layer 1 -> Layer 2) | Expected Spearman Correlation Range (ρ) | Threshold for Flagging (ρ outside range) |

|---|---|---|

| E. coli abundance (MetaG) -> LPS intensity (Metabolomics) | +0.5 to +0.8 | < +0.3 |

| Dietary Fiber Log (Eco) -> SCFA Butyrate (Metabolomics) | +0.4 to +0.7 | < +0.2 |

| Community Diversity (16S) -> Host Inflammatory Score (Transcriptomics) | -0.6 to -0.3 | > -0.2 |
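The plausibility rule in Table 1 can be sketched as a rank correlation followed by a per-pair flag check. The code below is an illustration using only the standard library; the pair keys in `FLAG_RULES` are hypothetical labels, and the limits are copied from the table's flagging column.

```python
from math import sqrt

def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation computed on average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(vx * vy)

# Flag limits taken from Table 1; the keys are illustrative names only.
FLAG_RULES = {
    "ecoli_vs_lps": ("below", 0.3),
    "fiber_vs_butyrate": ("below", 0.2),
    "diversity_vs_inflammation": ("above", -0.2),
}

def is_flagged(pair, rho):
    """True when rho falls on the wrong side of the pair's flag limit."""
    direction, limit = FLAG_RULES[pair]
    return rho < limit if direction == "below" else rho > limit
```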

Table 2: Common Data Discrepancy Rates & System Responses

| Discrepancy Type | Typical Rate in Raw Data | Automated Verification Action (Threshold) | Calibration Requirement |

|---|---|---|---|

| Sample ID Mismatch | 2-8% | Halt Pipeline if >3% | Review LIMS-to-Wet Lab handoff |

| Metadata Field Missingness | 5-15% | Flag for Review if >10% | Implement required field validation |

| Multi-Omics Batch Effect (PCA) | -- | Flag for Review if PC1 explains >50% of variance | Apply ComBat or other correction |

Experimental Protocols

Protocol 1: Calibrating an Automated Verifier for Ecological Metadata Completeness

Purpose: To set threshold rules for an automated system flagging incomplete environmental sample records.

Materials: Ecological metadata spreadsheet, verification software (e.g., custom Python/R script with pandas, great_expectations).

Methodology:

- Ingest Data: Load the metadata file (CSV format) containing the fields SampleID, Collection_DateTime, Location_GPS, Temperature_C, pH, and Collector_ID.

- Define "Complete" Rule: A record is defined as complete if Collection_DateTime, Location_GPS, and at least two of the three analytical fields (Temperature_C, pH, Collector_ID) are populated.

- Calculate Baseline Completeness: Run the rule on 5 prior, validated studies (N > 1000 samples total). Determine the historical mean completeness rate (e.g., 94%) and standard deviation (e.g., ±2%).

- Set Calibrated Threshold: Program the verifier to issue a Warning if completeness for a new dataset is below (historical mean - 2SD), and to Halt if below (historical mean - 4SD).
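The protocol above can be sketched as follows. This is a hedged illustration, not a reference implementation: records are assumed to be dictionaries such as those produced by `csv.DictReader`, and the function names are hypothetical. The field names and the mean - 2SD / mean - 4SD rules come from the steps above.

```python
from statistics import mean, stdev

REQUIRED = ("Collection_DateTime", "Location_GPS")
ANALYTICAL = ("Temperature_C", "pH", "Collector_ID")

def is_populated(record, field):
    """A field counts as populated if it holds a non-blank string."""
    return bool((record.get(field) or "").strip())

def is_complete(record):
    """Both required fields plus at least two of the three analytical fields."""
    has_required = all(is_populated(record, f) for f in REQUIRED)
    n_analytical = sum(is_populated(record, f) for f in ANALYTICAL)
    return has_required and n_analytical >= 2

def completeness_rate(records):
    records = list(records)
    if not records:
        raise ValueError("no records supplied")
    return sum(is_complete(r) for r in records) / len(records)

def calibrate(historical_rates):
    """Derive Warning/Halt limits from completeness rates of prior studies."""
    m, sd = mean(historical_rates), stdev(historical_rates)
    return {"warn_below": m - 2 * sd, "halt_below": m - 4 * sd}

def verdict(rate, thresholds):
    if rate < thresholds["halt_below"]:
        return "HALT"
    if rate < thresholds["warn_below"]:
        return "WARNING"
    return "OK"
```

With historical rates of 0.92, 0.94, and 0.96, for example, the mean is 0.94 and the sample standard deviation is 0.02, so a new dataset triggers a Warning below 0.90 and a Halt below 0.86.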

Protocol 2: Signal Alignment for Temporal Ecological and Biomedical Streams

Purpose: To algorithmically align continuous sensor data with discrete biospecimen collection points.

Materials: Time-series sensor data (e.g., PM2.5 readings), biospecimen collection log with UTC timestamps, computational environment (e.g., Python with pandas, numpy).

Methodology:

- Data Input: Load sensor data (timestamp, value). Load biospecimen log (sample_id, collection_timestamp).

- Define Integration Window: For each biospecimen, define a biologically relevant look-back window (e.g., 48 hours prior to collection).

- Calculate Integrated Exposure: For each sample i, query all sensor readings where timestamp ∈ [Ti - 48h, Ti]. Calculate the time-weighted average exposure for that window.

- Output: Generate a new aligned dataset with the columns sample_id, collection_timestamp, and integrated_exposure_value.
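A minimal sketch of this alignment, assuming sensor readings are `(datetime, value)` tuples and the biospecimen log is a list of `(sample_id, collection_timestamp)` tuples. The time-weighted average is computed here as a trapezoidal integral divided by the covered duration, which is one reasonable choice among several; the function names are illustrative.

```python
from datetime import datetime, timedelta

def time_weighted_average(readings, collected_at, window=timedelta(hours=48)):
    """Trapezoidal time-weighted mean of readings in [collected_at - window, collected_at].

    Returns None when fewer than two readings fall inside the window.
    """
    start = collected_at - window
    in_window = sorted((t, v) for t, v in readings if start <= t <= collected_at)
    if len(in_window) < 2:
        return None
    area = 0.0
    for (t0, v0), (t1, v1) in zip(in_window, in_window[1:]):
        area += (v0 + v1) / 2 * (t1 - t0).total_seconds()
    covered = (in_window[-1][0] - in_window[0][0]).total_seconds()
    return area / covered if covered > 0 else None

def align(readings, biospecimen_log):
    """Build the aligned dataset, one row per biospecimen."""
    return [
        {
            "sample_id": sample_id,
            "collection_timestamp": ts,
            "integrated_exposure_value": time_weighted_average(readings, ts),
        }
        for sample_id, ts in biospecimen_log
    ]
```

Samples with sparse sensor coverage come back with `None` rather than a misleading average, so they can be reviewed separately.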

Diagrams

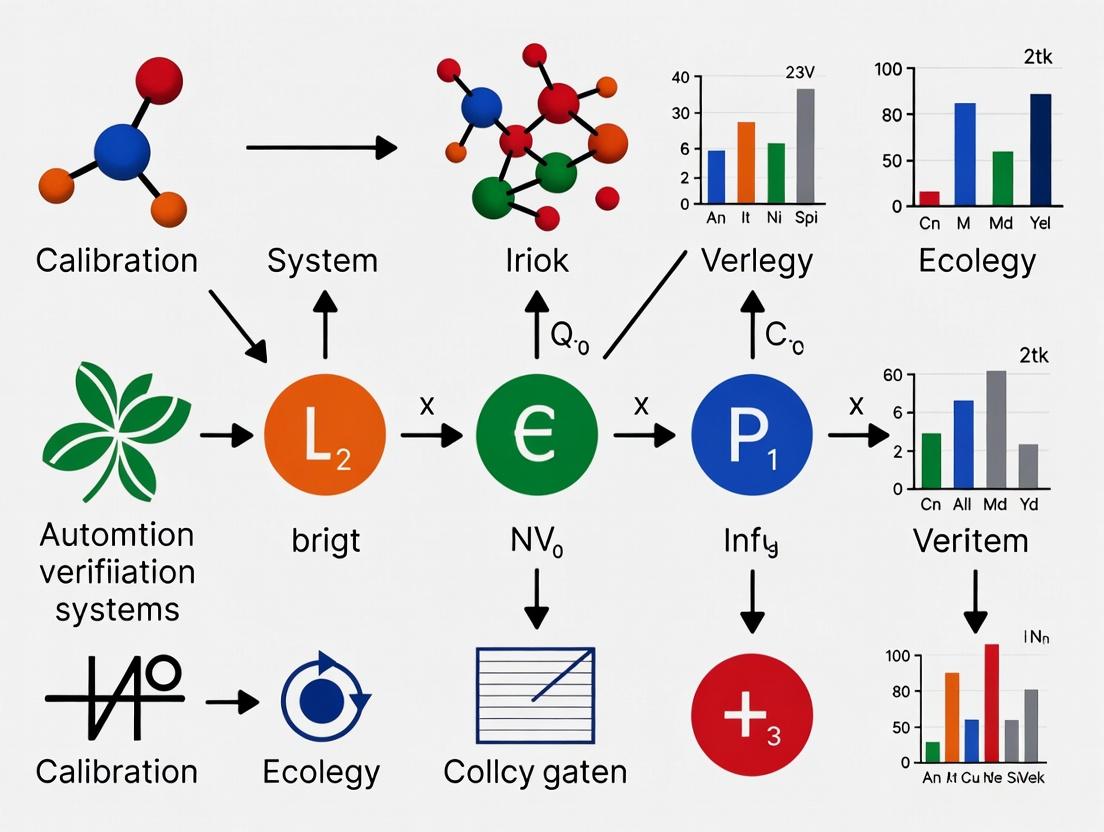

Diagram 1: Multi-Omics Data Verification Workflow

Diagram 2: Ecological Exposure Integration for Host Analysis

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Primary Function in Ecological-Biomedical Research |

|---|---|

| Environmental Sample Preservation Kit (e.g., RNAlater for soil/water) | Stabilizes RNA/DNA from complex environmental samples at point-of-collection, enabling subsequent microbiome and host gene expression analysis from the same source. |

| Internal Standard Spike-Ins for Metabolomics (Isotopically Labeled) | Added to biospecimens pre-processing to correct for technical variation in mass spectrometry, allowing quantitative comparison of metabolites across different ecological exposure groups. |

| Synthetic Microbial Community (SynCom) Standards | Defined mixtures of known microbial strains used as positive controls in sequencing runs to calibrate taxonomic classification pipelines and detect batch-specific bias. |

| Geospatial Mapping Software & APIs (e.g., ArcGIS, Google Earth Engine) | Links patient or sample coordinates to curated ecological databases (land use, air quality, climate) to generate quantitative environmental exposure variables. |

| Multi-Omics Data Integration Platform (e.g., Symphony, KNIME) | Provides a workflow environment to harmonize, transform, and jointly analyze disparate data types (ecological, genomic, clinical) with consistent provenance tracking. |

Troubleshooting & FAQ: Calibrating Automated Verification for Ecological Data

FAQ 1: High Dimensionality & Feature Selection

Q: My verification system's performance degrades drastically when I input the full set of 10,000+ ecological variables (e.g., species counts, environmental sensors). What is the primary cause and how can I address it?

A: This is the "curse of dimensionality." In high-dimensional spaces, data becomes sparse, and distance metrics lose meaning, causing model overfitting and increased computational cost. Implement a two-stage feature selection protocol:

- Variance Stabilization: Apply a centered log-ratio (CLR) transformation to compositional data (like microbial abundances).

- Dimensionality Reduction: Use guided feature selection. First, apply a filter method (e.g., mutual information with the target) to reduce features to the top 1,000. Then, use a wrapper method like recursive feature elimination with cross-validation (RFECV) on a Random Forest model to select the final 50-100 most informative features.
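For reference, the CLR transformation in the first step takes the log of each component and subtracts the mean of the logs. The sketch below adds a pseudocount to handle zero counts; that pseudocount and its default value are assumptions, since zero handling is a separate modelling decision not specified above.

```python
from math import log

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample's compositional counts."""
    if not counts:
        raise ValueError("counts is empty")
    if any(c < 0 for c in counts):
        raise ValueError("counts must be non-negative")
    logs = [log(c + pseudocount) for c in counts]  # raises if a value is 0 with pseudocount=0
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]
```

CLR values for a sample always sum to zero, and without a pseudocount the transform is unchanged when every count is multiplied by the same factor, which is why it suits relative-abundance data.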

Q: My Principal Component Analysis (PCA) results are dominated by a few technical artifacts, not biological signals. How do I correct this?

A: This indicates strong batch effects or non-biological variance. Before PCA, use a method like ComBat (from the sva R package) or linear mixed-effects models to adjust for known technical covariates (sequencing batch, sampling day). Always run PCA on the corrected data.

FAQ 2: Non-Stationarity & Temporal Drift

Q: My model, trained on last year's sensor data from a forest ecosystem, fails to accurately predict outcomes this year, despite similar seasonal conditions. What's happening?

A: You are encountering non-stationarity—the underlying data distribution has changed over time (concept drift). This is common in ecology due to climate change, species migration, or gradual soil depletion. Your verification system needs recalibration.

Protocol: Detecting and Correcting for Concept Drift

- Sliding Window Validation: Instead of a single train/test split, use a temporal cross-validation scheme. Train on data from window [t-n, t], test on [t+1, t+m]. Iteratively slide the window forward.

- Drift Detection Test: Apply the Kolmogorov-Smirnov (KS) test or the Page-Hinkley test to the distributions of model prediction errors over sequential time windows. A significant change (e.g., KS p < 0.01, or a Page-Hinkley threshold alert) indicates drift.

- Recalibration: If drift is detected, retrain the model on the most recent data window or use online learning algorithms that adapt incrementally.
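As an illustration of streaming drift detection, here is a compact Page-Hinkley detector for an upward shift in the mean of a metric such as absolute prediction error. The class name and the default `delta` and `threshold` values are assumptions and would need tuning to the scale of the monitored signal.

```python
class PageHinkley:
    """Page-Hinkley test for an increase in the mean of a streaming signal."""

    def __init__(self, delta=0.005, threshold=5.0):
        self.delta = delta          # tolerated magnitude of change
        self.threshold = threshold  # alarm level (often written as lambda)
        self.n = 0
        self.mean = 0.0
        self.cumulative = 0.0
        self.minimum = 0.0

    def update(self, value):
        """Feed one observation; return True if drift is signalled."""
        self.n += 1
        self.mean += (value - self.mean) / self.n      # running mean
        self.cumulative += value - self.mean - self.delta
        self.minimum = min(self.minimum, self.cumulative)
        return self.cumulative - self.minimum > self.threshold
```

A typical use is to call `update` once per new prediction error and trigger the recalibration step the first time it returns `True`, then start a fresh detector.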

FAQ 3: Complex Interactions & Unobserved Confounders

Q: I've identified a strong predictive relationship between "Pollinator Species A" and "Crop Yield," but my domain expert insists it's not directly causal. How can my verification system account for hidden interactions?

A: The relationship is likely mediated or confounded by unmeasured variables (e.g., a specific soil microbe that benefits both). You must test for interaction effects and employ causal inference frameworks.

Protocol: Testing for Higher-Order Interactions

- Model-Based Testing: Use a tree-based model (Gradient Boosting Machine) known for capturing interactions. Calculate SHAP (SHapley Additive exPlanations) interaction values.

- Statistical Testing: For a hypothesized interaction (e.g., Species A * Soil Moisture), include an interaction term in a generalized linear model. A significant coefficient (p < 0.05) supports the presence of the interaction.

- Causal Discovery: Use an algorithm such as Fast Causal Inference (FCI) on your observed variables to propose a causal graph that includes potential latent confounders.

Q: The system flags a novel species interaction as an "anomaly." How do I determine if it's a genuine discovery or a data error?

A: Follow the Anomaly Verification Workflow:

- Raw Data Back-Trace: Locate the original field sensor log or sequencing read for the flagged observation.

- Contextual Plausibility Check: Query ecological databases (e.g., GBIF, NEON) for known co-occurrence records of the involved species in similar biomes.

- Independent Verification Signal: Check if a secondary, unrelated sensor stream (e.g., acoustic data indicating animal activity) correlates with the anomaly.

- Expert Triage: The anomaly, with all above evidence, is sent to a domain scientist for final classification (Discovery, Artifact, or Requiring Further Study).

Table 1: Common Dimensionality Reduction Techniques Comparison

| Technique | Best For Data Type | Handles Sparsity | Preserves Non-Linear Relationships | Key Parameter to Tune |

|---|---|---|---|---|

| PCA (Linear) | Continuous, Compositional (after CLR) | No | No | Number of Components |

| UMAP (Non-Linear) | Mixed, High-Dim Ecological States | Yes | Yes | n_neighbors, min_dist |

| t-SNE (Non-Linear) | Visualization of Clusters | Moderately | Yes | Perplexity |

| PHATE (Non-Linear) | Temporal Trajectory Data | Yes | Yes | t (diffusion time) |

Table 2: Concept Drift Detection Methods Performance

| Method | Detection Speed | Data Type Supported | Primary Output | Suitable For |

|---|---|---|---|---|

| Kolmogorov-Smirnov Test | Slow (Batch) | Univariate Distributions | p-value | Sudden Drift |

| Page-Hinkley Test | Fast (Streaming) | Univariate Metrics (e.g., error) | Threshold Alert | Gradual Drift |

| ADWIN | Fast (Streaming) | Numeric Streams | Change Point | Adaptive Windows |

| LDD-DM (Learning) | Medium | Model Predictions | Drift Probability | Complex, Multivariate |

Experimental Protocol: Calibrating for Non-Stationarity

Title: Temporal Holdout Validation for Model Performance Estimation

Objective: To estimate the real-world performance of an ecological verification model under non-stationary conditions.

Materials:

- Time-stamped ecological dataset (D) spanning time T0 to Tn.

- Automated verification model M.

Procedure:

- Define a validation start time Tv (e.g., 70% into the total timeline).

- Training: Train model M on all data from [T0, Tv].

- Testing (Temporal Holdout): Evaluate M on data from (Tv, Tn]. Record performance metrics (F1-score, AUC-ROC).

- Iterative Forward Validation: Slide Tv forward in steps (e.g., 5% of total time). Repeat steps 2-3. This creates multiple performance estimates.

- Analysis: Plot performance metrics against the end time of the training window. A declining trend indicates model decay due to non-stationarity. The slope of this trend informs the required recalibration frequency.
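The forward-sliding splits in this procedure can be generated as index ranges over time-ordered samples. The sketch below assumes samples are already sorted by timestamp and roughly evenly spaced, so fractions of the sample count stand in for fractions of the timeline; the function name is hypothetical.

```python
def forward_validation_splits(n_samples, start_fraction=0.7, step_fraction=0.05):
    """Return (train_range, test_range) pairs for time-ordered samples.

    The cutoff starts at start_fraction of the data and moves forward by
    step_fraction until no test data would remain.
    """
    if n_samples < 2 or not 0 < start_fraction < 1 or step_fraction <= 0:
        raise ValueError("invalid split configuration")
    splits = []
    previous_cutoff = None
    step = 0
    while True:
        cutoff = round(n_samples * (start_fraction + step * step_fraction))
        if cutoff >= n_samples:
            break
        if cutoff > 0 and cutoff != previous_cutoff:
            splits.append((range(0, cutoff), range(cutoff, n_samples)))
            previous_cutoff = cutoff
        step += 1
    return splits
```

Training and scoring the model once per pair yields the series of performance estimates that the analysis step plots against the end of each training window.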

Visualizations

Diagram 1: Anomaly Verification Workflow

Diagram 2: High-Dim Data Processing Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Ecological Data Verification |

|---|---|

| Centered Log-Ratio (CLR) Transformation | Normalizes compositional data (e.g., microbiome reads) to mitigate spurious correlations in high-dimensional feature space. |

| SHAP (SHapley Additive exPlanations) | A game-theoretic approach to explain the output of any machine learning model, crucial for interpreting complex interactions. |

| Fast Causal Inference (FCI) Algorithm | A constraint-based causal discovery method that can suggest the presence of unobserved confounders in data. |

| Recursive Feature Elimination (RFE) | A wrapper method for feature selection that recursively removes the least important features to find an optimal subset. |

| Page-Hinkley Statistical Test | A sequential analysis technique for detecting a change in the average of a streaming signal, used for online drift detection. |

| Uniform Manifold Approximation and Projection (UMAP) | A non-linear dimensionality reduction technique particularly effective for visualizing complex ecological clusters and trajectories. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ Category 1: Data Verification in Ecological & Compound Screening

- Q1: Our model trained on ecological sensor data shows high accuracy on test sets but fails in field deployment. What's wrong?

- A: This is a classic sign of a verification gap. The test set likely shares the same source or bias as your training data. Perform spatial and temporal stratification during dataset splitting. Crucially, verify against an independent, geographically distinct dataset collected under different conditions before deployment.

- Q2: High-throughput screening (HTS) for bioactive natural compounds returned 1000+ hits, but follow-up validation failed for >95%. What happened?

- A: Poor verification at the primary screening stage is the likely cause. Common issues include compound interference (fluorescence, quenching), assay artifacts (precipitates, aggregation), poor assay quality (Z'-factor < 0.5), or poorly chosen hit-selection thresholds. Implement orthogonal verification assays (e.g., biophysical binding, secondary functional assay) immediately after the primary HTS.
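For context on the Z'-factor mentioned above, it is computed from the positive and negative control wells of a plate as 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. A minimal sketch, with a hypothetical function name:

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z'-factor of an assay plate from its control-well signals."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1 - 3 * spread / separation
```

Plates scoring below 0.5 are the ones the answer above treats as suspect before any hit calling is attempted.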

FAQ Category 2: Model Training & Algorithmic Validation

- Q3: Our deep learning model for predicting species distribution performs erratically when new environmental variables are introduced.

- A: The model's feature space was not properly verified for robustness. Use sensitivity analysis (e.g., Monte Carlo simulations) during training to identify which input variables the model is overly dependent on. Retrain with regularization techniques (L1/L2) and a more diverse feature set.

- Q4: Quantitative Structure-Activity Relationship (QSAR) models for toxicity prediction are rejected by regulators due to "lack of applicability domain (AD) definition."

- A: Regulatory bodies (FDA, EMA) mandate strict AD verification. You must define the chemical space your model is valid for using methods like leverage, distance-to-model, or PCA. Any prediction for a compound outside the AD is considered unreliable.

FAQ Category 3: Regulatory Submission & Data Integrity

- Q5: Our drug discovery submission was queried on "data traceability and audit trail" for the verification steps. What is required?

- A: Regulatory agencies require a complete, unbroken chain of custody and processing for all data, including verification steps. This means using electronic lab notebooks (ELNs) with audit trails, version-controlled analysis scripts, and documented Standard Operating Procedures (SOPs) for every verification assay and data QC step.

- Q6: What is the most common data verification flaw leading to clinical trial delays?

- A: Inconsistent biomarker verification across pre-clinical and clinical phases. The assay used to validate the target in animals must undergo rigorous "fit-for-purpose" validation (precision, sensitivity, specificity) before being used to measure human samples. Failure to bridge this gap causes irreconcilable data.

Summarized Quantitative Data on Verification Failures

Table 1: Impact of Poor Verification in Drug Discovery Pipelines

| Stage | Typical Attrition Rate (With Rigorous Verification) | Attrition Increase Due to Poor Verification | Common Verification Flaw |

|---|---|---|---|

| Target Identification | 40-50% | +20% | Use of non-physiological assay systems; insufficient genetic validation. |

| HTS to Lead | 85-90% | +10% | Artifact-driven false positives; lack of orthogonal assay confirmation. |

| Pre-clinical to Phase I | 50-60% | +15% | Poor PK/PD model verification; species translation inaccuracies. |

| Phase II to III | 60-70% | +5-10% | Biomarker assay not clinically validated; patient stratification errors. |

Table 2: Model Performance Decay Due to Verification Gaps

| Model Type | Reported Test Accuracy | Real-World Performance Drop (Observed) | Primary Verification Gap |

|---|---|---|---|

| Ecological Niche Model | 92% | Drop to 65-70% | Training on biased, spatially autocorrelated occurrence data. |

| Pre-clinical Toxicity Predictor | 88% (AUC) | AUC falls to ~0.65 | Predictions made far outside the model's defined Applicability Domain. |

| Clinical Trial Outcome Simulator | N/A (High Fit) | Failed to predict actual trial outcome | Over-fitting to small, non-diverse historical trial datasets. |

Detailed Experimental Protocols

Protocol 1: Orthogonal Verification for High-Throughput Screening Hits

Objective: To eliminate false positives from a primary fluorescence-based HTS.

Methodology:

- Primary Hit Identification: Select compounds showing >50% activity in the primary assay.

- Dose-Response Confirmation: Re-test primary hits in a 10-point dose-response in the original assay. Confirm potency (IC50/EC50).

- Orthogonal Assay 1 (Biophysical): Use Surface Plasmon Resonance (SPR) or Thermal Shift Assay (DSF) to confirm direct, stoichiometric binding to the purified target protein. Hits must show dose-dependent binding/signal.

- Orthogonal Assay 2 (Functional): For an enzyme target, use an HPLC-MS/MS-based activity assay to verify functional modulation independent of fluorescence.

- Counter-Screen Assay: Test verified hits against an unrelated target or in an assay designed to detect common interferants (e.g., redox activity, aggregation). True hits should be inactive here.

- Verified Hit Criteria: Compound must pass steps 2, 3 (or 4), and 5.

Protocol 2: Establishing Model Applicability Domain (AD) for a QSAR Model

Objective: To define the chemical space where a QSAR model's predictions are reliable.

Methodology:

- Descriptor Calculation: For both training set and new query compounds, calculate a standard set of molecular descriptors (e.g., RDKit, Dragon).

- PCA Projection: Perform Principal Component Analysis (PCA) on the training set descriptors.

- Domain Definition:

- Leverage (h): Calculate the leverage for each query compound. Threshold: h* = 3(p+1)/n, where p=number of model variables, n=training set size. h > h* indicates high leverage (extrapolation).

- Distance to Model (DModX): Use PLS or similar. Standardized DModX > 3 for a query compound indicates it is an outlier.

- AD Visualization: Plot training and query compounds in the PCA space (PC1 vs. PC2). Draw a convex hull or confidence ellipse around the training set. Query compounds outside this boundary are outside the AD.

- Reporting: Any prediction for a compound outside the AD must be flagged as "unreliable" in regulatory submissions.
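The leverage criterion in the Domain Definition step can be written out explicitly. The notation here is an assumption added for illustration: X is the training descriptor matrix with one row per training compound, and x_i is the descriptor vector of query compound i; p and n are as defined above.

```latex
h_i \;=\; \mathbf{x}_i^{\top}\,\bigl(\mathbf{X}^{\top}\mathbf{X}\bigr)^{-1}\,\mathbf{x}_i ,
\qquad
h^{*} \;=\; \frac{3\,(p+1)}{n},
\qquad
\text{query compound } i \text{ is outside the AD if } h_i > h^{*}.
```

For example, a model with p = 5 variables trained on n = 120 compounds has a warning leverage of h* = 3 × 6 / 120 = 0.15.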

Visualizations

Diagram 1: HTS Hit Verification Workflow

Diagram 2: QSAR Model Applicability Domain (AD) Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Verification Assays

| Reagent/Material | Function in Verification | Key Consideration |

|---|---|---|

| Tag-Free Recombinant Protein | For orthogonal biophysical assays (SPR, DSF). Eliminates risk of tags interfering with compound binding. | Purity (>95%) and functional activity must be verified. |

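| Aggregation Probe (e.g., non-ionic detergent such as Triton X-100) | Used in counter-screens to detect promiscuous aggregation-based inhibitors, whose apparent activity is typically attenuated when detergent is added. | Include in HTS follow-up to rule out non-specific activity. |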

| Validated Positive/Negative Control Compounds | Provides a benchmark for every verification assay run, ensuring system performance. | Must be pharmacologically well-characterized and stable. |

| Stable Cell Line with Reporter Gene | For functional verification orthogonal to biochemical assays. Confirms activity in a cellular context. | Requires rigorous validation of reporter specificity and response dynamics. |

| Internal Standard (IS) for LC-MS | Critical for analytical verification assays. Normalizes for instrument variability and sample prep losses. | Should be a stable isotope-labeled analog of the analyte, if possible. |

| Electronic Lab Notebook (ELN) with Audit Trail | Not a wet reagent, but essential for documenting verification steps. Ensures data integrity and traceability for regulators. | Must be 21 CFR Part 11 compliant for regulatory submissions. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: My automated sensor array is reporting consistent but incorrect humidity readings in my microclimate chamber. What should I check?

- Answer: This indicates a potential accuracy issue, often due to calibration drift or sensor placement. First, perform a manual verification using a NIST-traceable calibrated hygrometer placed adjacent to the automated sensor. Record readings from both devices at 5-minute intervals over one hour. If a consistent offset is observed, follow the Sensor Recalibration Protocol below. Also, ensure the sensor is not in direct line of airflow from chamber vents, which can cause localized drying.

FAQ 2: The robotic liquid handler for my soil sample dilutions shows high variability in volume dispensed across its 96 tips. How can I diagnose this?

- Answer: This is a classic precision (repeatability) problem. Perform a gravimetric analysis for each tip. Using an analytical balance, dispense 10 replicates of a set volume (e.g., 100 µL of distilled water) from each tip into a pre-weighed vessel. Calculate the coefficient of variation (CV) for each tip. A CV > 5% for volumes ≥50 µL suggests maintenance is required. Common causes include partially clogged tips, worn piston seals, or misalignment. Refer to the Liquid Handler Precision Verification Protocol.

FAQ 3: My automated image analysis script for counting plant seedlings works perfectly one day but fails the next with no code changes. How do I approach this?

- Answer: This points to a reliability (robustness) failure, often due to uncontrolled environmental variables. The algorithm is likely sensitive to changes in lighting conditions. To troubleshoot, first check the consistency of your imaging setup (fixed lighting intensity and camera settings). Implement a pre-processing normalization step in your script to standardize image histograms. Secondly, create a validation set of 100 images manually annotated by two researchers. Run the script daily for a week and track its performance against this gold standard using an F1-score. Fluctuations in the score correlate with environmental instability.

FAQ 4: The data pipeline for integrating canopy temperature and soil moisture readings frequently stalls, causing gaps in time-series data.

- Answer: This is a system reliability issue related to data integrity. First, verify the health of the network connection between field sensors and the ingress point using continuous ping tests. Implement a "heartbeat" signal for each data stream. Secondly, configure your ingestion pipeline with a dead-letter queue to capture failed transactions for manual review, preventing complete halts. Ensure your timestamp synchronization protocol (e.g., using PTP or consistent NTP servers) is functioning across all devices to maintain data cohesion.

Experimental Protocols

Protocol 1: Sensor Recalibration for Accuracy Verification

- Objective: To correct systematic error (bias) in an environmental sensor.

- Materials: Unit Under Test (UUT), reference standard (calibrated to NIST-traceable standards), controlled environmental chamber.

- Method:

- Place the UUT and reference standard in the controlled chamber, ensuring they are experiencing identical conditions.

- Program the chamber to step through 5 points across the operational range (e.g., for temperature: 5°C, 15°C, 25°C, 35°C, 45°C).

- At each setpoint, allow the system to stabilize for 30 minutes.

- Record 10 readings from both the UUT and reference at 1-minute intervals.

- Calculate the mean reading for both devices at each setpoint.

- Apply a linear regression (UUT reading vs. Reference Value) to derive a correction function.

- Program the correction function into the UUT's firmware or apply it during data post-processing.
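The regression step can be sketched with ordinary least squares on the per-setpoint means. One assumption to note: the fit below regresses the reference value on the UUT reading, so that the resulting function maps a raw reading directly to a corrected value. The function names are illustrative.

```python
def fit_correction(uut_means, reference_means):
    """Least-squares line mapping UUT readings to reference values.

    Returns (slope, intercept) such that corrected = slope * reading + intercept.
    """
    n = len(uut_means)
    if n != len(reference_means) or n < 2:
        raise ValueError("need at least two paired setpoints")
    mean_x = sum(uut_means) / n
    mean_y = sum(reference_means) / n
    sxx = sum((x - mean_x) ** 2 for x in uut_means)
    if sxx == 0:
        raise ValueError("UUT readings do not vary across setpoints")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(uut_means, reference_means))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def correct(reading, slope, intercept):
    """Apply the fitted correction to a single raw UUT reading."""
    return slope * reading + intercept
```

If the residuals after correction show curvature across the five setpoints, a linear correction is probably not adequate for that sensor.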

Protocol 2: Liquid Handler Precision Verification (Gravimetric Method)

- Objective: To assess the repeatability (precision) of an automated liquid dispenser.

- Materials: Robotic liquid handler, analytical balance (0.1 mg sensitivity), low-evaporation weighing vessels, distilled water, temperature probe.

- Method:

- Allow water and equipment to equilibrate to ambient lab temperature (record temperature).

- Tare a weighing vessel on the balance.

- Program the liquid handler to dispense a target volume (e.g., 100 µL) into the vessel. Record the actual dispensed mass.

- Repeat Step 3 for n=10 replicates per tip.

- Calculate the mean mass (m̄) and standard deviation (σ) for each tip.

- Convert mass to volume using the density of water at the recorded temperature (V = m / ρ).

- Calculate the Coefficient of Variation: CV (%) = (σ / m̄) * 100.

- Compare CV to manufacturer specifications (typically <2-5% for this volume). Tips exceeding the threshold require cleaning, priming, or mechanical service.
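The per-tip calculation can be sketched as below. Masses are assumed to be recorded in milligrams; the default density of 0.9982 g/mL is the approximate value for water near 20 °C and should be replaced with the value for the recorded temperature. The function name and the 5% default limit (taken from the FAQ above) are illustrative.

```python
from statistics import mean, stdev

def tip_precision(masses_mg, density_g_per_ml=0.9982, cv_limit_pct=5.0):
    """Mean dispensed volume and CV for one tip's replicate dispenses."""
    if len(masses_mg) < 2:
        raise ValueError("need at least two replicates")
    # mg divided by g/mL gives microlitres
    volumes_ul = [m / density_g_per_ml for m in masses_mg]
    mean_volume = mean(volumes_ul)
    cv_pct = stdev(volumes_ul) / mean_volume * 100
    return {
        "mean_volume_ul": mean_volume,
        "cv_pct": cv_pct,
        "needs_service": cv_pct > cv_limit_pct,
    }
```

Because density is a constant factor, the CV is the same whether it is computed on masses or on volumes; the conversion matters only for comparing the mean against the target volume.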

Data Presentation

Table 1: Performance Metrics of Three Automated Soil pH Analyzers

| Analyzer Model | Mean Error vs. Reference (Accuracy) | Within-Run CV % (Precision) | Uptime over 30 Days (Reliability) | Mean Time Between Failures (MTBF) |

|---|---|---|---|---|

| EcoSensor Pro X | -0.12 pH units | 1.8% | 99.1% | 450 hours |

| LabBot AgriScan | +0.31 pH units | 3.5% | 95.4% | 220 hours |

| Veridi CoreMax | -0.05 pH units | 0.9% | 99.8% | 1200 hours |

Table 2: Impact of Calibration Frequency on Data Accuracy

| Calibration Interval | Mean Absolute Error (Temp. Sensor) | Data Loss Events (Pipeline) | Correct Classification Rate (Image Analysis) |

|---|---|---|---|

| Weekly | 0.15°C | 2 | 98.5% |

| Monthly | 0.42°C | 5 | 96.2% |

| Quarterly | 1.18°C | 15 | 91.7% |

| Never (Factory Cal.) | 2.05°C | 32 | 85.4% |

Visualizations

Diagram 1: Ecological Data Verification Workflow

Diagram 2: Factors Affecting Automated System Reliability

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Automated Ecological Verification |

|---|---|

| NIST-Traceable Reference Standards | Provides an unbroken chain of calibration to SI units, essential for establishing the accuracy of sensors. |

| Certified Reference Materials (CRMs) | Homogeneous, stable materials with certified property values (e.g., soil pH, nutrient content) used to validate entire analytical pipelines. |

| High-Purity Solvents & Water | Used for cleaning sensors, preparing calibration curves, and gravimetric testing to prevent contamination bias. |

| Stable Dye Markers (e.g., Fluorescein) | Used in liquid handler validation to visually and spectrophotometrically assess dispensing precision and cross-contamination. |

| Data Simulator Software | Generates synthetic datasets with known errors to stress-test automated verification algorithms for reliability. |

| Buffer Solutions (pH 4, 7, 10) | Essential for the regular three-point calibration of automated pH electrodes in soil and water analysis systems. |

Building the Calibration Pipeline: A Step-by-Step Methodological Guide

Technical Support Center: Troubleshooting Guides & FAQs

Data Profiling and Sensor Integration

Q: During initial data profiling, my environmental sensor array is reporting values that are consistently out of range compared to legacy manual measurements. How do I determine if this is a calibration drift or a genuine ecological shift?

A: This is a common integration challenge. Follow this protocol to isolate the issue.

- Concurrent Calibration Check: Deploy a newly calibrated, certified reference sensor alongside the automated array at the same location for a 72-hour period.

- Controlled Environment Test: Remove one field sensor and place it with the reference sensor in a controlled environmental chamber, replicating a range of known conditions (e.g., temperature, humidity).

- Data Alignment Analysis: Calculate the Mean Absolute Percentage Error (MAPE) and Root Mean Square Error (RMSE) between the automated sensor and the reference standard for both field and controlled tests.

Interpretation: A high error in both tests indicates a calibration drift requiring sensor re-calibration. A high error only in the field suggests a site-specific interference (e.g., biotic contamination, improper placement) or a genuine ecological anomaly needing further investigation.
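
A minimal sketch of the error metrics and the interpretation rule is shown below. The 5% MAPE limit that separates "high" from "acceptable" error is an assumption for illustration, as are the function names; the case of high chamber error with low field error is not covered by the protocol and is returned as inconclusive.

```python
import math

def mape(reference, sensor):
    """Mean Absolute Percentage Error (%) of sensor values against a reference."""
    pairs = list(zip(reference, sensor))
    return 100.0 * sum(abs(s - r) / abs(r) for r, s in pairs) / len(pairs)

def rmse(reference, sensor):
    """Root Mean Square Error in the units of the measurement."""
    pairs = list(zip(reference, sensor))
    return math.sqrt(sum((s - r) ** 2 for r, s in pairs) / len(pairs))

def diagnose(field_mape, chamber_mape, limit=5.0):
    """Apply the interpretation rule; `limit` is a hypothetical tolerance (%)."""
    field_high = field_mape > limit
    chamber_high = chamber_mape > limit
    if field_high and chamber_high:
        return "calibration drift: re-calibrate sensor"
    if field_high:
        return "site-specific interference or genuine ecological anomaly"
    if chamber_high:
        return "inconclusive: repeat chamber test"  # not covered by the protocol
    return "sensor within tolerance"

# Hypothetical chamber test: reference held at 30.0, sensor reads 33.5
chamber_ref = [30.0, 30.0, 30.0]
chamber_obs = [33.5, 33.5, 33.5]
print(mape(chamber_ref, chamber_obs), rmse(chamber_ref, chamber_obs))
```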

Q: My automated verification system flags "anomalies" during stable diurnal cycles, creating excessive false positives. How do I establish a robust statistical baseline to reduce noise?

A: The baseline must account for temporal autocorrelation. Use this methodology:

- Data Segmentation: Segment your initial "normal operation" historical data by key periodic cycles (e.g., hour-of-day, season).

- Model Fitting: For each segment, fit a distribution (e.g., Weibull for wind speed, Normal for pH in stable systems). Do not assume a normal distribution.

- Threshold Calculation: Calculate the Median Absolute Deviation (MAD) for each segment, which is more robust to outliers than standard deviation. Set your anomaly thresholds at Median ± (3 * MAD) for each time segment.

- Validation: Apply this dynamic baseline to a new dataset and manually verify flagged events. Adjust the multiplier (e.g., from 3 to 2.5) based on your acceptable False Positive Rate.
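
The segmentation and threshold steps can be sketched as below, using only the Python standard library. Segmenting by hour of day alone, the data layout, and the sample readings are assumptions for illustration.

```python
import statistics
from collections import defaultdict

def mad(values):
    """Median Absolute Deviation: median(|x - median(x)|)."""
    med = statistics.median(values)
    return statistics.median([abs(v - med) for v in values])

def build_baseline(readings, k=3.0):
    """readings: iterable of (hour_of_day, value). Returns {hour: (low, high)}."""
    by_hour = defaultdict(list)
    for hour, value in readings:
        by_hour[hour].append(value)
    baseline = {}
    for hour, values in by_hour.items():
        med = statistics.median(values)
        spread = k * mad(values)
        baseline[hour] = (med - spread, med + spread)
    return baseline

def is_anomaly(baseline, hour, value):
    low, high = baseline[hour]
    return value < low or value > high

# Hypothetical "normal operation" temperature history for two hours of the day
history = [(6, 12.0), (6, 12.4), (6, 11.8), (6, 12.1), (6, 12.2),
           (14, 24.9), (14, 25.3), (14, 25.0), (14, 24.6), (14, 25.1)]
baseline = build_baseline(history)

# 25.0 is normal at 14:00 but anomalous at 06:00
print(is_anomaly(baseline, 14, 25.0), is_anomaly(baseline, 6, 25.0))
```

Because each hour has its own median and MAD, a value that belongs to the afternoon peak is not flagged there, which is the behaviour a single global threshold cannot provide.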

Table 1: Sensor Validation Error Metrics (Example from Soil Moisture Calibration)

| Sensor ID | Test Environment | Reference Value Mean | Sensor Value Mean | MAPE (%) | RMSE | Diagnosis |

|---|---|---|---|---|---|---|

| SM-AB01 | Field Concurrent | 25.4 VWC% | 28.7 VWC% | 13.0 | 3.3 | Field Interference Suspected |

| SM-AB01 | Chamber Controlled | 30.0 VWC% | 30.2 VWC% | 0.67 | 0.2 | Sensor Calibration Valid |

| SM-AB02 | Chamber Controlled | 30.0 VWC% | 33.5 VWC% | 11.7 | 3.5 | Requires Re-calibration |

Table 2: Anomaly Detection Performance with Dynamic Baseline

| Baseline Method | False Positive Rate (FPR) | True Positive Rate (TPR) | Precision | Notes |

|---|---|---|---|---|

| Global Mean ± 3σ | 8.2% | 94% | 68% | Poor, flags normal cycles |

| Hourly Median ± 3*MAD | 1.5% | 92% | 91% | Recommended method |

| Daily Rolling Average | 4.1% | 88% | 79% | Lag introduces delay |

Experimental Protocols

Protocol: Establishing an Anomaly Detection Baseline for Ecological Time-Series Data

Objective: To create a statistically robust, time-aware baseline profile for automated verification of continuous ecological data streams.

Materials: See "Research Reagent Solutions" below.

Methodology:

- Data Acquisition & Cleaning: Collect a minimum of 3 full periodic cycles (e.g., 3 seasons, 3 lunar cycles) of "normal condition" data. Apply quality checks (e.g., remove sensor-flagged errors, interpolate single-point dropouts using a moving window median).

- Temporal Segmentation: Segment data into relevant time bins (e.g., {hour_of_day}_{season}).

- Distribution Analysis: For each segment, test goodness-of-fit for Normal, Log-Normal, and Weibull distributions using the Kolmogorov-Smirnov test.

- Baseline Parameter Calculation: For the best-fit distribution of each segment, calculate the central tendency (Median) and spread using Median Absolute Deviation (MAD): MAD = median(|Xi - median(X)|).

- Threshold Definition: Define the upper and lower anomaly thresholds for segment i as Threshold_high[i] = Median[i] + (k * MAD[i]) and Threshold_low[i] = Median[i] - (k * MAD[i]). Start with k=3.

- Baseline Validation & Tuning: Apply thresholds to a held-out validation dataset. Manually audit flagged points. Adjust k to achieve the desired operational balance between FPR and TPR as per Table 2.
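
The distribution-analysis step can be illustrated with a small standard-library sketch. It ranks Normal and Log-Normal candidates by their Kolmogorov-Smirnov statistic (the largest gap between empirical and fitted CDF). Two simplifications are assumptions of this sketch: no p-value is computed, and Weibull is left out because fitting it needs numerical optimization that a statistics package would normally provide.

```python
import math
from statistics import NormalDist, mean, stdev

def ks_statistic(sample, cdf):
    """Largest vertical gap between the empirical CDF and a model CDF."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        d = max(d, (i + 1) / n - f, f - i / n)
    return d

def best_fit(sample):
    """Return (name, scores) for the candidate with the smallest KS statistic."""
    normal = NormalDist(mean(sample), stdev(sample))
    logs = [math.log(x) for x in sample]  # requires strictly positive data
    lognormal = NormalDist(mean(logs), stdev(logs))
    scores = {
        "normal": ks_statistic(sample, normal.cdf),
        "lognormal": ks_statistic(sample, lambda x: lognormal.cdf(math.log(x))),
    }
    return min(scores, key=scores.get), scores

# Hypothetical right-skewed segment: exact quantiles of a log-normal distribution
segment = [math.exp(NormalDist(0, 1).inv_cdf((i + 0.5) / 50)) for i in range(50)]
name, scores = best_fit(segment)
print(name, scores)
```

Note that estimating the parameters from the same data makes the KS statistic optimistic, so it is used here only to rank candidates within a segment.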

Diagrams

Title: Workflow for Dynamic Statistical Baseline Establishment

Title: Logic Flow for Real-Time Anomaly Verification

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Automated Ecological Data Verification

| Item | Function & Specification |

|---|---|

| NIST-Traceable Reference Sensors | Provides the "ground truth" measurement for calibrating in-field automated sensor arrays. Critical for Step 1 validation. |

| Environmental Chamber/Calibrator | A controlled unit to test sensor response across a known range of temperatures, humidities, or gas concentrations. |

| Data Logging Middleware (e.g., Fledge, Node-RED) | Software to collect, harmonize, and forward heterogeneous sensor data to a central profiling database. |

| Time-Series Database (e.g., InfluxDB, TimescaleDB) | A database optimized for storing and querying timestamped profiling data and baseline parameters. |

| Statistical Computing Environment (R/Python with pandas, SciPy) | For conducting distribution analysis, calculating MAD, and automating the baseline generation protocol. |

| Anomaly Detection Framework (e.g., Tesla, custom script) | A rules engine to apply dynamic thresholds in real-time and flag outliers for review. |

Troubleshooting Guides & FAQs

Q1: Why does my rule-based system fail to flag obvious outliers in my sensor-derived water quality data (e.g., pH, dissolved oxygen)? A: This is often due to static, context-insensitive thresholds. A pH of 3.5 is an outlier in a forest stream but may be valid in a peat bog. Solution: Implement adaptive, context-aware rules. For example, dynamically set thresholds based on location-specific historical data (e.g., 5th and 95th percentiles for that site). Integrate a simple statistical check (like a moving Z-score) to run alongside the rules to catch what fixed rules miss.

Q2: My statistical anomaly detection model (e.g., Isolation Forest) labels all rare biological events (like a fish kill) as errors. How can I prevent this? A: This is a classic false-positive issue where "rare" is conflated with "incorrect." Solution: Implement a two-stage verification pipeline.

- Let the statistical model flag all low-probability events.

- Feed these events into a rule-based filter that incorporates domain knowledge (e.g., "If dissolved oxygen < 2 mg/L AND temperature > 25°C, flag as 'Plausible Hypoxic Event' not 'Sensor Error'"). This creates a priority list for human review.
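
A minimal sketch of the two-stage pipeline follows. The anomaly score is a hypothetical stand-in for the output of the statistical model (for example an Isolation Forest), the 0.7 cutoff is an assumption, and labelling everything that fails the domain rule as "Sensor Error" is a simplification of this sketch.

```python
from dataclasses import dataclass

@dataclass
class Reading:
    dissolved_oxygen_mg_l: float
    temperature_c: float
    anomaly_score: float  # hypothetical stage-1 score, higher = more unusual

def stage_one(readings, score_cutoff=0.7):
    """Stage 1: keep only the low-probability events flagged by the model."""
    return [r for r in readings if r.anomaly_score >= score_cutoff]

def stage_two(reading):
    """Stage 2: domain rule from the text that separates plausible events from errors."""
    if reading.dissolved_oxygen_mg_l < 2.0 and reading.temperature_c > 25.0:
        return "Plausible Hypoxic Event"
    return "Sensor Error"  # default label is an assumption of this sketch

def triage(readings):
    """Return (reading, label) pairs forming the priority list for human review."""
    return [(r, stage_two(r)) for r in stage_one(readings)]

stream = [
    Reading(8.1, 18.0, 0.10),   # ordinary reading, never flagged
    Reading(1.4, 27.5, 0.92),   # rare but ecologically plausible
    Reading(-3.0, 19.0, 0.99),  # rare and physically implausible
]
labels = [label for _, label in triage(stream)]
print(labels)
```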

Q3: When integrating ML for time-series imputation (filling missing temperature data), how do I choose between methods like ARIMA, Prophet, and LSTM? A: The choice depends on your data characteristics and infrastructure. See the comparison table below.

Table 1: Comparison of ML/Statistical Time-Series Imputation Methods for Ecological Data

| Method | Type | Best For | Key Assumption/Limitation | Computational Demand |

|---|---|---|---|---|

| Linear Interpolation | Statistical | Very short gaps, simple trends. | Data changes linearly between points. | Very Low |

| Seasonal Decomposition + ARIMA | Statistical | Data with clear trends/seasonality. | Series is stationary after differencing. | Medium |

| Facebook Prophet | Statistical | Strong seasonal patterns, holiday effects. | Seasonality is additive or multiplicative. | Medium |

| Long Short-Term Memory (LSTM) | Machine Learning | Complex, nonlinear patterns, long sequences. | Requires large amounts of training data. | Very High |

Q4: How do I resolve contradictions between a rule-based verification result and an ML model's prediction for the same data point? A: Establish a structured arbitration protocol.

- Assign Confidence Scores: Have both systems output a confidence score (e.g., rule: "threshold exceedance level", ML: "prediction probability").

- Meta-Verification Layer: Create a simple arbiter model (e.g., a random forest) trained on historical cases where the correct outcome was known. Use the outputs and confidence scores from both systems as features for the arbiter.

- Escalate to Review: If the arbiter's confidence is low, flag the data point for mandatory expert review.

Experimental Protocol: Calibrating a Hybrid Verification System for Soil Sensor Data

Objective: To validate a hybrid (Rule-Based + ML) verification system for automated soil moisture and nitrate sensor data.

Materials & Reagents:

- Field Sensors: Deploy paired, calibrated soil moisture (capacitance) and nitrate (ion-selective) sensors.

- Validation Data: Manual, lab-analyzed soil cores for moisture (gravimetric) and nitrate (spectrophotometry) as ground truth.

- Computational Environment: Python/R with libraries (scikit-learn, statsmodels, pandas).

Procedure:

- Data Collection: Collect 3 months of high-frequency (hourly) sensor data and weekly manual validation samples from the same plots.

- Rule-Based Module Setup:

- Define plausibility rules (e.g., "Soil moisture must be between 0% and 60% WHC").

- Define cross-sensor consistency rules (e.g., "If a large precipitation event is recorded, soil moisture must increase shortly after").

- Statistical/ML Module Setup:

- Train an Isolation Forest model on normalized sensor data to detect anomalous readings.

- Train a Multivariate LSTM model to predict nitrate levels based on moisture, temperature, and historical values. Flag readings where prediction error exceeds 3 standard deviations.

- Integration & Arbitration:

- Run both verification modules on the sensor stream.

- Use a simple weighted voting arbiter: If 2+ modules flag a point, it is marked "Invalid." If only one flags it, it is marked "Requires Review."

- Performance Validation:

- Compare system flags against anomalies confirmed by manual validation samples.

- Calculate Precision, Recall, and F1-score for error detection.
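
The arbitration and performance-validation steps can be sketched as below. The sketch counts votes equally, treats a point with no flags as "Valid", and counts only "Invalid" as a detected error; all three choices, and the sample data, are assumptions for illustration.

```python
def arbitrate(flags):
    """flags: one boolean per verification module for a single data point."""
    votes = sum(flags)
    if votes >= 2:
        return "Invalid"
    if votes == 1:
        return "Requires Review"
    return "Valid"  # label for unflagged points is an assumption

def precision_recall_f1(predicted, actual):
    """predicted/actual: parallel lists of booleans (True = error)."""
    tp = sum(p and a for p, a in zip(predicted, actual))
    fp = sum(p and not a for p, a in zip(predicted, actual))
    fn = sum(a and not p for p, a in zip(predicted, actual))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical flags per point: (rule module, Isolation Forest, LSTM residual)
module_flags = [(True, True, False), (False, True, False),
                (False, False, False), (True, True, True)]
decisions = [arbitrate(f) for f in module_flags]

# Hypothetical ground truth from manual validation samples
confirmed_error = [True, True, False, True]
predicted_error = [d == "Invalid" for d in decisions]
precision, recall, f1 = precision_recall_f1(predicted_error, confirmed_error)
print(decisions, precision, recall, f1)
```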

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for Ecological Data Verification Studies

| Item | Function in Verification Calibration |

|---|---|

| Calibration Standards (e.g., Nitrate Std. Solutions) | Provide ground truth for calibrating sensor hardware, forming the basis for all subsequent algorithmic verification. |

| Data Logging & Validation Software (e.g., R, Python Pandas) | Enables systematic comparison of raw sensor output, algorithmic flags, and manual validation data. |

| Cloud Compute Credits (AWS, GCP, Azure) | Necessary for training and deploying resource-intensive ML models (e.g., LSTM) on large-scale ecological datasets. |

| Statistical Analysis Suites (scikit-learn, statsmodels) | Provide pre-built, peer-reviewed implementations of key statistical and ML algorithms for robust experimentation. |

Troubleshooting Guides & FAQs

Q1: During automated verification of sensor-derived ecological features, the system flags too many false positives, overwhelming researchers. What are the likely causes and solutions?

A1: This is often caused by static, overly sensitive thresholds. Common causes and solutions are:

- Cause: Thresholds based on limited pilot data that do not account for seasonal or diurnal variation.

- Solution: Implement rolling window percentiles (e.g., 95th/99th) calculated over a preceding period (e.g., 30 days) to set dynamic thresholds.

- Cause: Confidence intervals for feature means are too narrow due to underestimated variance.

- Solution: Use bootstrapping methods on feature distributions to generate robust, non-parametric confidence intervals that account for skewness and outliers.

- Cause: Sensor drift or calibration decay is misinterpreted as an ecological signal.

- Solution: Integrate a parallel calibration-check signal into the workflow. Flag events only when the ecological feature exceeds its dynamic threshold and the calibration signal remains within its stable range.

Q2: How do we determine the appropriate time window and statistical method for calculating dynamic thresholds for a new ecological feature?

A2: Follow this experimental protocol:

- Baseline Collection: Accumulate uncontaminated, reference data for a full cycle of the system's dominant periodicity (e.g., 1 full year for seasonal cycles, 1 month for tidal cycles).

- Window Sensitivity Analysis: Test different window lengths (e.g., 7, 14, 30, 60 days) for calculating moving percentiles. Analyze the trade-off between responsiveness (shorter window) and stability (longer window).

- Optimization Criterion: Select the window length that maximizes the F1-score (harmonic mean of precision and recall) against a manually verified "event" dataset, or that maintains a user-defined false positive rate (e.g., <5%).

Q3: When establishing confidence intervals for population counts (e.g., via camera traps or acoustic monitors), which method is most robust to low sample sizes and non-normal data?

A3: Bayesian credible intervals or bootstrapped confidence intervals are preferred over standard parametric methods. See the comparison table below.

Table 1: Comparison of Confidence Interval Methods for Non-Normal Ecological Count Data

| Method | Principle | Advantage for Ecological Data | Typical Use Case | Computation Load |

|---|---|---|---|---|

| Parametric (Normal) | Assumes normal distribution around mean. | Simple, fast. | Large sample sizes (>30), near-normal data. | Low |

| Bootstrapped | Resamples observed data to estimate sampling distribution. | Makes no distributional assumptions; robust to skew. | Small to moderate samples, unknown/odd distributions. | High (requires iteration) |

| Bayesian (Credible Interval) | Updates prior belief with observed data to form posterior distribution. | Incorporates prior knowledge; intuitive probability interpretation. | Incorporating expert knowledge, sequential data analysis. | Moderate-High |

Table 2: Dynamic Threshold Performance for Anomaly Detection (Simulated Data)

| Threshold Method | False Positive Rate (FPR) | True Positive Rate (TPR) | F1-Score | Recommended Scenario |

|---|---|---|---|---|

| Static Global (Mean ± 3SD) | 12.5% | 65% | 0.55 | Stable, aperiodic systems only. |

| Dynamic Rolling Percentile (30-day, 99th) | 4.8% | 88% | 0.86 | Systems with slow trends & seasonality. |

| Exponentially Weighted Moving Average (EWMA) | 5.2% | 92% | 0.89 | Systems where recent data is most predictive. |

Experimental Protocols

Protocol 1: Establishing a Dynamic Threshold via Rolling Window Percentiles

- Objective: To programmatically define an "extreme value" threshold for an ecological feature that adapts to temporal trends.

- Methodology:

1. For a given feature (e.g., nightly soundscape intensity in decibels), extract the time series Y(t).

2. At each time point t, define a lookback window W (e.g., preceding 30 days).

3. Calculate the p-th percentile (e.g., 95th or 99th) of the values within Y(t-W : t).

4. Assign this percentile value as the dynamic threshold T(t) for time t.

5. An event or anomaly is flagged when Y(t) > T(t).

- Validation: Compare flagged events against ground-truthed observations to calculate precision and recall, then adjust W and p.
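
Protocol 1 can be sketched in plain Python as follows. Two details are assumptions of this sketch: the window covers only the W values preceding t (the current value is excluded), and the percentile uses the nearest-rank definition, which can differ slightly from the interpolated quantiles of libraries such as pandas.

```python
import math

def percentile(values, p):
    """Nearest-rank p-th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100.0 * len(ordered)))
    return ordered[rank - 1]

def dynamic_flags(series, window, p):
    """Flag Y(t) when it exceeds the p-th percentile of the preceding `window` values."""
    flags = []
    for t, y in enumerate(series):
        history = series[max(0, t - window):t]
        if len(history) < window:
            flags.append(False)  # not enough history to set a threshold yet
            continue
        flags.append(y > percentile(history, p))
    return flags

# Hypothetical nightly soundscape intensity (dB) with one loud night
intensity = [50, 51, 49, 52, 50, 51, 50, 49, 70, 51]
flags = dynamic_flags(intensity, window=5, p=95)
print(flags)
```

In this example only the ninth value (70 dB) is flagged; the following night is not, because the loud night has already raised the threshold for the next window.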

Protocol 2: Generating Bootstrapped Confidence Intervals for Species Abundance Estimates

- Objective: To compute a robust confidence interval for a population mean when data is sparse or non-normal.

- Methodology:

1. From your original sample of n independent observations (e.g., counts from n camera trap days), calculate your statistic of interest S (e.g., mean count per day).

2. Create a "bootstrap sample" by randomly selecting n observations with replacement from the original data.

3. Calculate the statistic S* for this bootstrap sample.

4. Repeat steps 2-3 a large number of times (e.g., B = 10,000) to create a distribution of S*.

5. The 95% bootstrap confidence interval is defined by the 2.5th and 97.5th percentiles of this S* distribution.
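
A minimal percentile-bootstrap sketch of Protocol 2, using only the standard library. The camera-trap counts are hypothetical, and the fixed seed is there only to make the run reproducible.

```python
import random
import statistics

def bootstrap_ci(data, statistic=statistics.mean, b=10_000, alpha=0.05, seed=42):
    """Percentile bootstrap confidence interval for `statistic` of `data`."""
    rng = random.Random(seed)
    n = len(data)
    # Resample n observations with replacement and recompute the statistic, b times
    estimates = sorted(statistic(rng.choices(data, k=n)) for _ in range(b))
    lower = estimates[round((alpha / 2) * b)]
    upper = estimates[round((1 - alpha / 2) * b) - 1]
    return lower, upper

# Hypothetical counts from 12 camera-trap days (skewed, with many zeros)
counts = [0, 2, 1, 0, 5, 3, 0, 1, 12, 2, 0, 4]
lower, upper = bootstrap_ci(counts)
print(f"mean = {statistics.mean(counts):.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```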

Diagrams

Dynamic Threshold Workflow for Anomaly Detection

Bootstrap Confidence Interval Methodology

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Dynamic Threshold & Confidence Interval Analysis

| Item / Solution | Function in Experiment | Key Consideration |

|---|---|---|

| R tidyverse/dplyr | Data wrangling, rolling window calculations, and summarization. | Use slider package for efficient rolling window operations on time series. |

| Python pandas & numpy | Time series manipulation, percentile calculation, and array operations for dynamic thresholds. | Use .rolling() and .quantile() methods. Ensure datetime index is sorted. |

| Bootstrapping Library (boot in R, sklearn.utils.resample in Python) | Automates the resampling and statistic calculation process for CI generation. | Set a random seed for reproducibility. Number of iterations (B) should be >=1000. |

| Bayesian Inference Library (Stan, PyMC3, brms) | Fits hierarchical models to incorporate prior knowledge and generate credible intervals for complex ecological data. | Requires specification of appropriate likelihood functions and priors. |

| Visualization Library (ggplot2, matplotlib/seaborn) | Plots time series with dynamic thresholds overlaid and visualizes bootstrap distributions. | Critical for diagnostic checking of model assumptions and results. |

Troubleshooting Guides & FAQs

Q1: Our automated verification system is flagging a high percentage of valid ecological sensor readings as "anomalous." What are the first diagnostic steps? A: First, verify the calibration state of your reference datasets. Run a manual verification on a sample of the flagged data (e.g., 100 points). Check for temporal drift by comparing current system outputs with those from the same sensor one month ago. Ensure your anomaly detection thresholds (e.g., Z-score > 3.5) are appropriate for the current season's data volatility. Finally, update the training set with newly confirmed valid data points and retrain the model.

Q2: After retraining the system with new field data, performance metrics degrade. How can we isolate the cause? A: This indicates potential poisoning or skew in the new feedback data. Implement the following protocol:

- Split Analysis: Compare performance on the old test set versus the new data. If old performance holds, the issue is data quality.

- Data Audit: Check the source and labeling consistency of the newly added data. Use a dimensionality-reduction projection (e.g., t-SNE) to visualize whether the new data forms anomalous clusters.

- Rollback & Incremental Add: Revert to the previous model and add new data in smaller, validated batches, monitoring precision/recall after each batch.

Q3: How do we quantify the "confidence" of the automated system's verification for drug ecotoxicity data? A: Implement a confidence scoring system based on ensemble methods and data provenance. The score should combine:

- Model Agreement: Percentage of agreeing sub-models in the ensemble (e.g., 4 out of 5 models agree => 80% agreement score).

- Data Quality Score: A metric based on sensor health, recency of calibration, and data completeness.

- Historical Accuracy: The system's past accuracy for that specific sensor type and parameter.

| Confidence Score | Composite Range | Recommended Action |

|---|---|---|

| High | 85 - 100% | Accept verification automatically; log for long-term trend analysis. |

| Medium | 60 - 84% | Flag for a single human expert review within 24 hours. |

| Low | < 60% | Escalate for full panel review and immediate calibration check. |
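
The scoring and routing logic can be sketched as below. The source does not specify how the three components are combined, so the equal weights are an assumption; scores falling between 84% and 85% are treated as Medium, which is also a choice of this sketch.

```python
def composite_confidence(model_agreement, data_quality, historical_accuracy,
                         weights=(1 / 3, 1 / 3, 1 / 3)):
    """Weighted mean of three component scores, each in percent (0-100).

    Equal weights are a placeholder; calibrate them for your own system.
    """
    parts = (model_agreement, data_quality, historical_accuracy)
    return sum(w * p for w, p in zip(weights, parts))

def recommended_action(score):
    """Map a composite score (%) to the tier and action from the table above."""
    if score >= 85:
        return "High", "accept automatically; log for trend analysis"
    if score >= 60:
        return "Medium", "single expert review within 24 hours"
    return "Low", "full panel review and immediate calibration check"

# Hypothetical reading: 4 of 5 sub-models agree, good sensor health and track record
score = composite_confidence(model_agreement=80, data_quality=90, historical_accuracy=94)
tier, action = recommended_action(score)
print(f"{score:.1f}% -> {tier}: {action}")
```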

Q4: What is a standard protocol for validating a new ecological data type (e.g., a new pesticide biomarker) in the system? A: Follow this phased experimental protocol:

Phase 1: Baseline Establishment.

- Manually curate a gold-standard dataset (N ≥ 500 data points) with expert-labeled verifications.

- Split into training (60%), validation (20%), and test (20%) sets.

Phase 2: Model Integration & Training.

- Initialize system with pre-trained feature extractors.

- Train a dedicated classifier on the new training set. Use 5-fold cross-validation.

- Performance must exceed a pre-set benchmark (e.g., F1-score > 0.85) on the validation set.

Phase 3: Shadow Deployment & Feedback.

- Run the new model in "shadow mode" parallel to the existing workflow for 2 weeks, logging all predictions without acting on them.

- Experts review the human and system verifications blind to their source and provide corrected labels where the two disagree. This generates the initial feedback loop data.

Phase 4: Live Deployment with Guardrails.

- Deploy for live verification but with a conservative confidence threshold (e.g., only auto-verify scores >90%).

- All other predictions are routed for human review, which in turn feeds the feedback loop.

Key Experimental Protocol: Evaluating Feedback Loop Efficacy

Objective: To measure the improvement in automated verification accuracy after integrating a structured human feedback loop over a defined period.

Methodology:

- Initial Model (M0): Deploy the current production model. Over 7 days, collect all its verification predictions and associated confidence scores on incoming data stream D1.

- Human Verification & Labeling: A panel of 3 expert scientists independently verifies every data point in D1. A gold-standard label is assigned where ≥2 experts agree.

- Feedback Loop Integration: Use the gold-standard labels from D1 to create an augmented training set. Retrain the model to produce M1.

- Controlled Test: On day 8, deploy M1 and M0 in an A/B test on a new, statistically similar data stream D2. Collect predictions from both.

- Evaluation: Immediately have experts verify all low-confidence predictions and a random 20% sample of high-confidence predictions from both models on D2.

- Metrics Calculation: Compare the Precision, Recall, and F1-Score of M0 vs. M1 on the verified subset of D2.

Expected Outcome & Metrics Table:

| Model | Precision (%) | Recall (%) | F1-Score | Avg. Confidence Score | False Positive Rate (%) |

|---|---|---|---|---|---|

| M0 (Baseline) | 88.2 | 75.4 | 0.813 | 82.1 | 4.8 |

| M1 (Post-Feedback) | 91.7 | 82.3 | 0.867 | 85.6 | 3.1 |

| Improvement (Δ) | +3.5 | +6.9 | +0.054 | +3.5 | -1.7 |
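
Two steps of the methodology lend themselves to a short sketch: assigning gold-standard labels by expert majority, and selecting the subset of D2 predictions that experts verify. The 85% boundary between low and high confidence and the data layout are assumptions for illustration.

```python
import random
from collections import Counter

def gold_label(expert_labels, min_agree=2):
    """Return the label shared by at least `min_agree` experts, else None."""
    label, count = Counter(expert_labels).most_common(1)[0]
    return label if count >= min_agree else None

def review_subset(predictions, threshold=85.0, sample_rate=0.20, seed=0):
    """predictions: list of (point_id, confidence %).

    Returns every low-confidence point plus a random sample of the
    high-confidence points. `threshold` is a hypothetical cut-off.
    """
    rng = random.Random(seed)
    low = [pid for pid, conf in predictions if conf < threshold]
    high = [pid for pid, conf in predictions if conf >= threshold]
    k = round(sample_rate * len(high))
    return low + rng.sample(high, k)

print(gold_label(["valid", "valid", "invalid"]))   # majority exists
print(gold_label(["valid", "invalid", "unsure"]))  # no majority -> None

# Hypothetical D2 predictions: 3 low-confidence and 10 high-confidence points
d2 = [(i, 55.0) for i in range(3)] + [(i, 92.0) for i in range(3, 13)]
to_review = review_subset(d2)
print(to_review)
```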

Continuous Feedback Loop Architecture

Diagram Title: Automated Verification Feedback Loop Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Ecological Data Verification |

|---|---|

| Gold-Standard Reference Datasets | Curated, expert-validated data used as ground truth for training and benchmarking system performance. |

| Synthetic Anomaly Generators | Algorithms to create controlled anomalous data points for stress-testing system detection limits. |

| Model Versioning Software (e.g., DVC, MLflow) | Tracks iterations of machine learning models, linking each to specific training data and performance metrics. |

| Data Provenance Tracker | Logs the origin, calibration history, and processing steps of all input ecological data. |

| Inter-Rater Reliability (IRR) Tools (e.g., Cohen's Kappa Calculator) | Quantifies agreement among human experts to ensure quality of feedback labels. |

| Confidence Calibration Algorithms (e.g., Platt Scaling, Isotonic Regression) | Adjusts raw model prediction scores to reflect true probability of correctness. |

| Feedback Loop Dashboard | Real-time visualization of system accuracy, confidence scores, and human-override rates. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: Our verification system flags a high percentage of samples from a longitudinal study as "Low Biomass - Contaminant Risk." What are the primary causes and solutions? A1: This is common in longitudinal studies where sample biomass fluctuates. Key causes and actions are:

| Cause | Diagnostic Check | Recommended Action |

|---|---|---|

| True Low Biomass | Quantify 16S rRNA gene copies via qPCR (threshold: <10^3 copies/µL). | Apply batch-specific decontamination (e.g., decontam R package prevalence method, using negative controls). Do not discard automatically. |

| Inconsistent DNA Extraction Efficiency | Compare yield across extraction batches using a standardized mock community. | Re-calibrate the verification threshold per batch. Implement a pre-extraction spike-in (e.g., known quantity of Pseudomonas fluorescens DNA) to normalize. |

| Degraded/Damaged DNA in Storage | Check DNA integrity via Bioanalyzer/Fragment Analyzer; low DV200 indicates degradation. | Exclude severely degraded samples. For partial degradation, use PCR protocols optimized for damaged DNA (e.g., shorter amplicons). |

| PCR Inhibition | Assess via internal amplification control (IAC) in qPCR; cycle threshold (Ct) shift >2 indicates inhibition. | Dilute template (1:10), use inhibition-resistant polymerases, or apply a pre-treatment clean-up step. |

Q2: During time-series verification, we detect an implausible, sharp taxonomic shift (e.g., >80% change in dominant genus) between two consecutive time points from the same subject. How should we investigate? A2: Follow this protocol to discriminate technical artifact from biological reality:

Experimental Verification Protocol:

- Wet-Lab Re-analysis: From the original specimen aliquot (if available), repeat:

- DNA Extraction: Using the original and an alternative kit.

- Library Prep: In duplicate, including a positive control (mock community) and a no-template control.

- Sequencing: On a different flow cell lane if possible.

- Bioinformatic Interrogation:

- Re-process raw FASTQs through two independent pipelines (e.g., QIIME2 & Mothur).

- Apply strict chimera removal (UCHIME2/VSEARCH) and verify with the is-tobe-rm tool.

- Check for batch effects: Use PERMANOVA on Bray-Curtis distances to test if the shift correlates with processing date.

- Data Integrity Check:

- Verify sample metadata for labeling or collection errors.

- Cross-check sequencing depth: Ensure both time points have >10,000 high-quality reads.

Q3: How do we calibrate the verification system's thresholds for "acceptable" within-subject temporal variability? A3: Thresholds should be study-specific. Use this calibration protocol:

Calibration Protocol:

- Define a "Stable" Control Cohort: Identify a subset of subjects (e.g., healthy adults not undergoing interventions) with expected minimal microbiome change.

- Calculate Baseline Variability Metrics: From the control cohort's longitudinal data, compute:

- Mean within-subject Bray-Curtis dissimilarity between consecutive time points.

- Standard deviation of the above.

- Maximum observed change in key alpha-diversity metrics (Shannon, Observed ASVs).

- Set Thresholds: The verification system's "warning" threshold can be set at Mean + 2*SD of the within-subject dissimilarity, and the "alert" threshold at Mean + 4*SD.

- Validate with Mock Data: Test thresholds using a synthetic dataset with introduced errors (e.g., swapped samples, simulated contamination).
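
The calibration protocol can be sketched as follows. The abundance vectors and subject IDs are hypothetical; the sketch pools all consecutive-time-point dissimilarities from the control cohort before computing the mean and standard deviation, which is one reasonable reading of the protocol.

```python
import statistics

def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two abundance vectors of equal length."""
    numerator = sum(abs(x - y) for x, y in zip(a, b))
    denominator = sum(x + y for x, y in zip(a, b))
    return numerator / denominator

def consecutive_dissimilarities(series):
    """Dissimilarity between each pair of consecutive time points for one subject."""
    return [bray_curtis(series[i], series[i + 1]) for i in range(len(series) - 1)]

def calibrate(control_cohort):
    """control_cohort: {subject_id: [abundance vector per time point]}."""
    values = [d for series in control_cohort.values()
              for d in consecutive_dissimilarities(series)]
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return {"warning": mean + 2 * sd, "alert": mean + 4 * sd}

# Hypothetical stable control cohort: taxon counts at three time points per subject
cohort = {
    "S1": [[50, 30, 20], [48, 32, 20], [52, 28, 20]],
    "S2": [[40, 40, 20], [42, 38, 20], [39, 41, 20]],
}
thresholds = calibrate(cohort)
print(thresholds)
```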

Research Reagent Solutions Toolkit

| Item | Function in Verification/Calibration |

|---|---|

| ZymoBIOMICS Microbial Community Standard (D6300) | Defined mock community of bacteria and fungi. Serves as positive control for DNA extraction, sequencing, and bioinformatic pipeline accuracy to detect technical bias. |

| PhiX Control v3 (Illumina) | Sequencing run quality control. Spiked in (~1%) to monitor cluster generation, sequencing accuracy, and phasing/prephasing. |

| Pseudomonas fluorescens DNA (ATCC 13525) | Exogenous genomic DNA spike-in. Added pre-extraction to low-biomass samples to quantify and correct for variation in extraction efficiency across batches. |

| Blank Extraction Kit Reagents | Processed alongside samples as negative controls. Critical for identifying kit-borne contaminating DNA for decontamination algorithms. |

| HI-STOPP Molecular Grade Water (PCR Clean) | Used for no-template controls (NTCs) in PCR and library preparation to detect reagent contamination. |

| DNase/RNase-Free Magnetic Beads (e.g., SPRIselect) | For consistent library clean-up and size selection, reducing adapter dimer contamination that impacts quantification. |

Diagnosing and Resolving Common Calibration Failures in Practice

Troubleshooting False Positives/Negatives in Complex, Noisy Datasets

FAQs

Q1: My automated species call from acoustic data has a high false positive rate for a rare bird species. What could be causing this?

A1: This is often due to background noise or calls from common species with similar acoustic signatures being misclassified. Implement a post-processing filter that requires secondary validation (e.g., a specific frequency profile or temporal pattern) for low-probability/high-impact detections. Ensure your training data for the rare species is representative of its call variations.

Q2: In my high-content imaging for drug toxicity screening, I'm getting false negatives in cell death assays under high-confluence conditions. How can I troubleshoot?

A2: This is likely a segmentation artifact. At high confluence, the algorithm may fail to separate individual cells, causing it to miss apoptotic bodies. Implement a confluence-based analysis rule: for fields exceeding 70% confluence, switch to a fluorescence intensity-based threshold (e.g., cleaved caspase signal) rather than relying solely on morphological segmentation.

Q3: Soil sensor data for nutrient levels is showing false negatives during wet season spikes. What's the likely issue?

A3: Sensor calibration drift due to humidity is probable. The protocol requires using a set of physical control sensors in a buffer solution at the field site. Compare field sensor readings to these calibrated controls daily. Apply a humidity-dependent correction factor to the raw voltage data before converting to concentration units.

Q4: My qPCR verification of transcriptomic data consistently yields false positives for supposedly upregulated genes in my treatment group. Why?

A4: Primer dimerization or non-specific amplification in samples with low overall RNA integrity is the common culprit. For any candidate gene from noisy RNA-seq data, you must design primers with stringent checks for secondary structure and perform a melt curve analysis. Include a no-template control and a minus-reverse transcriptase control for each sample set.

Q5: How do I distinguish between a true weak signal and instrumental noise in mass spectrometry for novel metabolite detection?

A5: You must establish a noise baseline experimentally. Run multiple blank samples (without biological material) through the entire preparation and LC-MS workflow. Any peak in the experimental sample must have a signal-to-noise ratio (S/N) > 5 when compared to the standard deviation of the baseline in the corresponding m/z and retention time window in the blank.
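
A minimal sketch of the S/N check is given below. It assumes the blank intensities have already been extracted for the same m/z and retention-time window as the candidate peak, and it subtracts the mean blank intensity before dividing by the blank standard deviation; the subtraction step and the sample values are assumptions of this sketch.

```python
import statistics

def signal_to_noise(peak_intensity, blank_intensities):
    """S/N of a peak relative to blank runs in the same m/z / RT window."""
    baseline = statistics.mean(blank_intensities)
    noise = statistics.stdev(blank_intensities)  # sample standard deviation
    return (peak_intensity - baseline) / noise

def is_true_signal(peak_intensity, blank_intensities, min_sn=5.0):
    return signal_to_noise(peak_intensity, blank_intensities) > min_sn

# Hypothetical intensities from five blank injections
blanks = [100, 104, 98, 102, 96]
print(signal_to_noise(120, blanks), is_true_signal(120, blanks))
print(signal_to_noise(110, blanks), is_true_signal(110, blanks))
```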

Experimental Protocols

Protocol 1: Acoustic Data Validation for Rare Species

Objective: To confirm or reject automated detections of a target species in noisy field recordings.

- For each automated detection timestamp, extract a 10-second audio clip.

- Generate a spectrogram (Hanning window, 512-point FFT, 50% overlap).

- Apply a band-pass filter isolating the known frequency range of the target call.

- Calculate two secondary metrics: (a) Temporal symmetry score, (b) Harmonic-to-noise ratio.

- Compare these metrics to distributions from a verified training set. A detection is confirmed only if both secondary metrics fall within the 10th-90th percentile range of the verified data.
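
The final confirmation step can be sketched as follows. This assumes the two secondary metrics have already been computed for each clip; the metric names, the example values, and the linear-interpolation percentile are illustrative assumptions.

```python
def percentile(values, q):
    """Linear-interpolation percentile (q in 0..100) of a non-empty list."""
    ordered = sorted(values)
    if len(ordered) == 1:
        return ordered[0]
    pos = (len(ordered) - 1) * q / 100.0
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)

def confirm_detection(metrics, verified, low_q=10, high_q=90):
    """Confirm only if every metric lies inside the verified percentile band."""
    for name, value in metrics.items():
        low = percentile(verified[name], low_q)
        high = percentile(verified[name], high_q)
        if not (low <= value <= high):
            return False
    return True

# Hypothetical metric distributions from a verified training set.
verified_set = {
    "temporal_symmetry": [0.60, 0.65, 0.70, 0.72, 0.75, 0.78,
                          0.80, 0.82, 0.85, 0.90, 0.95],
    "harmonic_to_noise_db": [8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18],
}

candidate = {"temporal_symmetry": 0.77, "harmonic_to_noise_db": 12.5}
print(confirm_detection(candidate, verified_set))
```

Requiring both metrics to pass is what makes the filter strict for rare species: a single out-of-band metric rejects the detection.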

Protocol 2: Confluence-Adjusted Cell Death Analysis

Objective: To accurately quantify apoptosis in high-confluence cell cultures.

- Acquire images (DAPI for nuclei, FITC for Annexin V/caspase substrate).

- Low Confluence (<70%): Use a watershed segmentation algorithm on the DAPI channel to identify individual nuclei. Measure FITC intensity per nucleus.

- High Confluence (≥70%): Abandon nuclear segmentation. Switch to a pixel-based analysis. Apply a global threshold to the FITC channel (threshold level determined from positive control wells). Report the percentage of FITC-positive pixels per field.

- Normalize all results to vehicle control wells processed with the same confluence logic.
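
The confluence switch and the pixel-based branch can be sketched as below. This is an illustration under simplifying assumptions: the FITC field is a small 2-D list of intensities, the threshold is supplied from positive control wells, and the function names are hypothetical. The watershed branch is not shown because it depends on the imaging library in use.

```python
CONFLUENCE_CUTOFF = 70.0  # percent, as stated in the protocol

def analysis_mode(confluence_pct):
    """Pick the analysis branch from the field's confluence."""
    if confluence_pct < CONFLUENCE_CUTOFF:
        return "nuclear_segmentation"
    return "pixel_threshold"

def percent_positive_pixels(fitc_field, threshold):
    """Share of pixels at or above the threshold, in percent."""
    pixels = [value for row in fitc_field for value in row]
    if not pixels:
        raise ValueError("empty image field")
    positive = sum(1 for value in pixels if value >= threshold)
    return 100.0 * positive / len(pixels)

def normalize_to_vehicle(treated_value, vehicle_value):
    """Express a result relative to vehicle wells analysed with the same branch."""
    return treated_value / vehicle_value

# Hypothetical 2 x 4 FITC field and a threshold taken from positive controls.
field = [[10, 200, 30, 220],
         [15, 25, 240, 12]]
print(analysis_mode(85.0), percent_positive_pixels(field, threshold=150))
```

Normalizing treated and vehicle wells with the same branch keeps the two readouts (cells per field versus percent positive pixels) from being mixed in one comparison.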

Protocol 3: Humidity Compensation for Field Soil Sensors

Objective: To correct nutrient sensor output for ambient humidity interference.

- Deploy three additional "control" sensors in a sealed box containing a standard buffer solution of known nutrient concentration alongside field sensors.

- Log readings from field sensors and control sensors every hour.

- Calculate the deviation of the control sensors from the known standard value.

- For each time point, apply a linear correction (based on the control sensor deviation) to all field sensor readings from the same sensor batch. The correction formula is:

Corrected_Value = Raw_Value * (Known_Standard / Control_Sensor_Mean).
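
As a worked illustration of the formula, the sketch below applies the ratio correction to one time point. The readings and the function name are hypothetical; only the formula itself comes from the protocol.

```python
import statistics

def humidity_corrected(raw_values, control_readings, known_standard):
    """Corrected_Value = Raw_Value * (Known_Standard / Control_Sensor_Mean)."""
    control_mean = statistics.mean(control_readings)
    if control_mean == 0:
        raise ValueError("control sensor mean is zero; correction undefined")
    factor = known_standard / control_mean
    return [value * factor for value in raw_values]

# Three control sensors read high (mean 52.0) against a 50.0 standard,
# so field readings from the same batch are scaled down by 50/52.
corrected = humidity_corrected(
    raw_values=[42.0, 38.5],
    control_readings=[52.0, 50.0, 54.0],
    known_standard=50.0,
)
print([round(value, 2) for value in corrected])
```

Because the factor is recomputed at every time point, the correction tracks humidity as it changes through the day rather than assuming a fixed offset.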

Table 1: Impact of Secondary Validation on Acoustic Detection Accuracy

| Species Prevalence | Auto-Detection Count | After Temporal Symmetry Filter | After Harmonic-Noise Filter | Final Validated Count | False Positive Reduction |
|---|---|---|---|---|---|
| Rare (<0.1%) | 150 | 45 | 32 | 28 | 81.3% |
| Common (>5%) | 10500 | 10100 | 9950 | 9900 | 5.7% |

Table 2: Cell Death Detection Rate by Analysis Method and Confluence

| Confluence Level | Morphological Segmentation (Apoptotic Cells/Field) | Intensity-Based Threshold (% Positive Pixels) | Ground Truth (Manual Count) |
|---|---|---|---|
| 50% | 22.5 ± 3.1 | 15.2 ± 5.4 | 23 |
| 80% | 8.1 ± 4.2 | 21.8 ± 3.7 | 20 |
| 95% | 2.5 ± 1.8 | 19.5 ± 4.1 | 18 |

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Verification Experiments

| Item | Function in Troubleshooting |
|---|---|
| Synthetic Oligo Standards (qPCR) | Provide an absolute quantification standard to rule out amplification efficiency issues causing false negatives. |
| Stable Isotope-Labeled Internal Standards (MS) | Distinguish true metabolite signal from background chemical noise by mass shift; correct for ionization suppression. |
| CRISPR-Cas9 Knockout Cell Line | Serves as a definitive negative control for antibody or probe specificity in imaging/blotting, confirming true signal. |
| Acoustic Playback System & Blank Recorder | Allows field validation of detector algorithms with known, clean calls; the blank recorder characterizes device noise. |
| Sensor Calibration Buffer Kit (Field) | Provides on-site reference points to correct for sensor drift due to environmental variables like humidity. |

Diagrams

Title: Troubleshooting Workflow for Noisy Dataset Analysis

Title: Confluence-Based Analysis Decision Tree

Optimizing for Computational Efficiency Without Sacrificing Verification Rigor

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My automated verification script for sensor data is taking over 24 hours to complete a single run. How can I speed this up without compromising the statistical validation? A: Implement a staged verification pipeline. First, run a fast, approximate check (e.g., a Shapiro-Wilk test on a random subset) to flag only highly anomalous datasets. Apply full, rigorous verification (e.g., full distribution fitting, cross-validation) only to these flagged datasets. This targets computational resources effectively.

Q2: When calibrating my model with Markov Chain Monte Carlo (MCMC), convergence is slow. Are there efficiency optimizations that still guarantee robust parameter estimation? A: Yes. Utilize Hamiltonian Monte Carlo (HMC) or the No-U-Turn Sampler (NUTS) algorithms, which are more computationally efficient per effective sample than standard Metropolis-Hastings. Crucially, maintain verification rigor by:

- Setting target_accept_rate=0.8 (or similar) for optimal tuning.

- Running multiple chains (≥4) and verifying convergence with the rank-normalized $\hat{R}$ statistic ($\hat{R} < 1.01$).

- Confirming effective sample size (ESS) is >400 per chain.
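
To make the convergence check concrete, here is a minimal sketch of the basic split-$\hat{R}$ computation for a single parameter. It is deliberately simpler than the rank-normalized version named above (in practice a library routine such as ArviZ's `rhat` would be the usual choice), and the simulated chains are hypothetical stand-ins for sampler output.

```python
import random
import statistics

def split_rhat(chains):
    """Basic split-R-hat for one parameter; chains is a list of equal-length lists."""
    half = len(chains[0]) // 2
    # Split every chain in two so that within-chain trends also inflate R-hat.
    halves = []
    for chain in chains:
        halves.append(chain[:half])
        halves.append(chain[half:2 * half])
    means = [statistics.mean(h) for h in halves]
    within = statistics.mean([statistics.variance(h) for h in halves])
    between = half * statistics.variance(means)
    var_plus = (half - 1) / half * within + between / half
    return (var_plus / within) ** 0.5

rng = random.Random(0)
# Four chains sampling the same distribution (well mixed).
mixed = [[rng.gauss(0, 1) for _ in range(1000)] for _ in range(4)]
# Four chains stuck around two different modes (not converged).
stuck = [[rng.gauss(mu, 1) for _ in range(1000)] for mu in (0, 0, 3, 3)]

print(round(split_rhat(mixed), 3), round(split_rhat(stuck), 3))
```

Values close to 1 indicate that between-chain and within-chain variance agree; the stuck chains produce a value far above the 1.01 threshold.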

Q3: I'm verifying species classification from camera trap images using a neural network. How can I optimize the evaluation process on a large image set? A: Move from evaluating every image to a stratified random sampling protocol. Stratify your test set by confidence score bins from the classifier. Sample more images from low-confidence bins. Use the Clopper-Pearson exact method to calculate confidence intervals for precision/recall metrics, ensuring statistical rigor despite the smaller evaluated sample.
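
The Clopper-Pearson interval for one confidence bin can be sketched with the standard library alone by inverting the binomial tail probabilities with bisection. This is an illustrative implementation suited to modest sample sizes; for large samples the usual route is the beta quantile function (for example `scipy.stats.beta.ppf`). The counts in the example are hypothetical.

```python
import math

def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k + 1))

def _solve(func, target, increasing):
    """Bisection for p in [0, 1] where the monotone func(p) equals target."""
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        if (func(mid) < target) == increasing:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def clopper_pearson(successes, n, alpha=0.05):
    """Exact two-sided (1 - alpha) confidence interval for a proportion."""
    if successes == 0:
        lower = 0.0
    else:
        # P(X >= successes | p) = alpha / 2; this tail grows with p.
        lower = _solve(lambda p: 1 - binom_cdf(successes - 1, n, p), alpha / 2, True)
    if successes == n:
        upper = 1.0
    else:
        # P(X <= successes | p) = alpha / 2; this tail shrinks with p.
        upper = _solve(lambda p: binom_cdf(successes, n, p), alpha / 2, False)
    return lower, upper

# Hypothetical audit: 45 of 50 sampled images in one bin were classified correctly.
low, high = clopper_pearson(45, 50)
print(round(low, 3), round(high, 3))
```

Intervals computed per bin can then be reported alongside the sampling fraction of each stratum, so that sparsely sampled high-confidence bins are visibly wider.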

Q4: My spatial data verification involves computationally expensive null model simulations. Any alternatives? A: Replace full simulation-based null models with analytical approximations where possible (e.g., a Gaussian process model for spatial correlation). When simulations are mandatory, employ variance-reduction techniques like Importance Sampling. Always verify that the optimized method's output distribution matches a full, slow simulation on a small, representative subset.

Q5: During batch processing of ecological time-series, how do I quickly identify datasets that failed quality checks? A: Implement a "verification fingerprint" log. As each dataset passes a check (completeness, outlier detection, spectral density validity), it receives a coded flag. A final composite check simply verifies the fingerprint matches the expected sequence. This replaces re-running checks with an instant hash-map lookup.
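
A minimal sketch of such a fingerprint log follows. The flag codes, dataset names, and the three check functions are hypothetical placeholders; in particular, the length check only stands in for a real spectral density validity test.

```python
EXPECTED_FINGERPRINT = ("C", "O", "S")  # completeness, outliers, spectral density

def is_complete(series):
    return all(value is not None for value in series)

def no_outliers(series, limit=100.0):
    return all(abs(value) <= limit for value in series if value is not None)

def long_enough(series, min_len=4):
    # Placeholder for a spectral density validity check.
    return len(series) >= min_len

CHECKS = [("C", is_complete), ("O", no_outliers), ("S", long_enough)]

def run_checks(series, checks):
    """Run checks in order; record a check's code only when it passes."""
    return tuple(code for code, check in checks if check(series))

datasets = {
    "site_a": [1.0, 2.0, 3.0, 2.5],
    "site_b": [1.0, None, 3.0, 2.0],   # incomplete
    "site_c": [1.0, 500.0, 2.0, 1.5],  # contains an outlier
}

# Checks run once; later queries are dictionary lookups against the log.
log = {name: run_checks(series, CHECKS) for name, series in datasets.items()}
failed = [name for name, fingerprint in log.items()
          if fingerprint != EXPECTED_FINGERPRINT]
print(failed)
```

The missing code in a failed fingerprint also identifies which check to revisit, without re-running the others.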

Experimental Protocols

Protocol 1: Staged Verification for High-Volume Sensor Data

- Input: Raw time-series data from N environmental sensors.

- Stage 1 (Fast Filter): For each sensor's data, take a random subsample of 1000 points.

- Apply the Shapiro-Wilk test (α=0.05) to the subsample.

- Flag any sensor where the test rejects normality.

- Stage 2 (Rigor): For flagged sensors only, perform:

- Full Anderson-Darling test on the complete dataset.

- Visual inspection of Q-Q plots.

- Imputation or transformation if failure is confirmed.

- Output: A validation report and a cleaned data array.
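
The control flow of the two stages can be sketched as below. To keep the example dependency-free, both stages use a crude skewness screen as a stand-in; this is an assumption for illustration only. In a real pipeline the `fast_check` and `full_check` arguments would wrap `scipy.stats.shapiro` and `scipy.stats.anderson`, respectively. Sensor names and data are simulated.

```python
import random
import statistics

def abs_skewness(values):
    """Absolute sample skewness; near 0 for symmetric data."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return abs(sum(((v - mean) / sd) ** 3 for v in values) / len(values))

def looks_symmetric(values, limit=0.5):
    # Stand-in normality screen, NOT the Shapiro-Wilk or Anderson-Darling test.
    return abs_skewness(values) < limit

def staged_verify(sensors, fast_check, full_check, subsample_size=1000, seed=0):
    """Return {sensor_id: 'pass' | 'cleared' | 'failed'}."""
    rng = random.Random(seed)
    report = {}
    for sensor_id, values in sensors.items():
        subsample = rng.sample(values, min(subsample_size, len(values)))
        if fast_check(subsample):
            report[sensor_id] = "pass"     # Stage 1: nothing suspicious
        elif full_check(values):
            report[sensor_id] = "cleared"  # flagged, but the full check accepts it
        else:
            report[sensor_id] = "failed"   # confirmed: transform or impute
    return report

rng = random.Random(42)
sensors = {
    "probe_normal": [rng.gauss(20, 2) for _ in range(5000)],
    "probe_skewed": [rng.expovariate(1.0) for _ in range(5000)],
}
report = staged_verify(sensors, looks_symmetric, looks_symmetric)
print(report)
```

Only sensors flagged in Stage 1 incur the cost of the full-data check, which is where the computational saving comes from.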

Protocol 2: Calibrating an Agent-Based Model with Efficient MCMC

- Model: Define your agent-based model (ABM) with parameters θ to be calibrated against observed data D.

- Likelihood: Establish a stochastic likelihood function L(D|θ) that runs the ABM n times per θ.

- Efficient Sampling: Use a NUTS sampler (e.g., via PyMC3 or Stan) with 4 parallel chains.

- Verification:

- Run chains for a minimum of 1000 tuning steps and 1000 draws.

- Calculate $\hat{R}$ and bulk/tail ESS for all parameters.

- If $\hat{R} > 1.01$, increase draw count.

- Examine trace plots for stationarity.

- Output: Posterior distributions for θ, validated for convergence.

Data Tables

Table 1: Comparison of MCMC Sampling Algorithms

| Algorithm | Speed (Iter/sec) | Effective Sample Size/sec | Convergence Diagnostic Required? | Best For |
|---|---|---|---|---|
| Metropolis-Hastings | 150 | 10 | Gelman-Rubin ($\hat{R}$) | Simple, low-dim. posteriors |
| Hamiltonian MC (HMC) | 90 | 45 | $\hat{R}$ & ESS | Models with gradients |
| No-U-Turn Sampler (NUTS) | 70 | 50 | $\hat{R}$ & ESS (Divergences) | Complex, high-dim. posteriors |

Table 2: Staged Verification Performance (Simulated Data)

| Dataset Size (N) | Full Verification Time (s) | Staged Verification Time (s) | False Negative Rate | Computational Saving |
|---|---|---|---|---|
| 10,000 | 142 | 28 | 0.0% | 80% |
| 100,000 | 1,520 | 205 | 0.0% | 87% |
| 1,000,000 | 15,800 | 1,850 | <0.1% | 88% |

Visualizations

Title: Two-Stage Verification Workflow

Title: MCMC Calibration & Convergence Check

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Computational Verification

| Item | Function in Verification | Example/Note |
|---|---|---|
| Statistical Test Suites (scipy.stats, R) | Provide foundational algorithms for distribution testing, correlation analysis, and other confirmatory metrics. | Use scipy.stats.anderson for rigorous normality tests. |
| Probabilistic Programming Frameworks (PyMC3, Stan) | Enable the specification of Bayesian models and provide state-of-the-art, efficient MCMC samplers (HMC, NUTS). | Essential for parameter calibration with uncertainty. |
| High-Performance Computing (HPC) Scheduler (SLURM) | Manages parallelization of independent verification jobs (e.g., across many datasets or model runs). | Optimizes wall-clock time, not just CPU time. |
| Numerical Linear Algebra Libraries (NumPy, BLAS/LAPACK) | Accelerate core matrix operations that underpin almost all statistical and machine learning verification. | Ensure these are optimized (e.g., MKL, OpenBLAS). |
| Containerization (Docker/Singularity) | Ensures verification experiments are reproducible by encapsulating the exact software environment. | Critical for audit trails and protocol sharing. |

Technical Support Center

Troubleshooting Guide

Q1: My automated verification system, calibrated on 2022 coastal plankton data, is now flagging over 60% of new 2024 samples as anomalous. Is the system broken? A: The system is likely functioning correctly, but experiencing concept drift. The underlying statistical properties of your ecological data have evolved, rendering the original calibration obsolete. This is a common issue in long-term ecological monitoring. Do not immediately recalibrate. First, conduct a drift diagnosis using the protocol below.

Experimental Protocol: Diagnosing Covariate Shift in Ecological Streams

- Data Segmentation: Partition your data into a Calibration Set (2022, n=5000 samples) and an Evaluation Set (2024, n=1500 samples).

- Feature Extraction: Use the same feature pipeline (e.g., chlorophyll-A concentration, cell size distribution, temperature sensitivity index) to generate vectors for both sets.

- Drift Detection Test: Perform a two-sample Kolmogorov-Smirnov (KS) test on each feature distribution.

- Result Interpretation: A p-value below the Bonferroni-corrected threshold (0.01 divided by the number of features tested) for any key feature indicates significant covariate shift.
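
The drift test can be sketched without external libraries by computing the two-sample KS statistic directly and comparing it with the large-sample critical value at the Bonferroni-corrected level, which is equivalent in spirit to thresholding the p-value. This is an illustration under stated assumptions: the feature names and toy data are hypothetical, and in practice `scipy.stats.ks_2samp` would return the statistic and p-value directly.

```python
import math

def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    i = j = 0
    d = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        d = max(d, abs(i / len(a) - j / len(b)))
    return d

def ks_critical(n, m, alpha):
    """Asymptotic critical value of D for sample sizes n and m."""
    return math.sqrt(-0.5 * math.log(alpha / 2)) * math.sqrt((n + m) / (n * m))

def detect_drift(calibration, evaluation, alpha=0.01):
    """Map each feature to (D, drift_detected) at the Bonferroni-corrected level."""
    alpha_corrected = alpha / len(calibration)
    results = {}
    for feature, cal_values in calibration.items():
        eval_values = evaluation[feature]
        d = ks_statistic(cal_values, eval_values)
        threshold = ks_critical(len(cal_values), len(eval_values), alpha_corrected)
        results[feature] = (d, d > threshold)
    return results

# Toy data: one feature shifted between years, one unchanged.
calibration = {"chlorophyll_a": [float(v) for v in range(100)],
               "cell_size": [float(v) for v in range(100)]}
evaluation = {"chlorophyll_a": [float(v) for v in range(50, 150)],
              "cell_size": [float(v) for v in range(100)]}
results = detect_drift(calibration, evaluation)
print(results)
```

The asymptotic critical value is reasonable for sample sizes like those in the protocol; for small samples an exact test is preferable.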

Table 1: Example KS-Test Results for Phytoplankton Features

| Feature | Calibration Set Mean (2022) | Evaluation Set Mean (2024) | KS Statistic (D) | p-value | Drift Detected? |
|---|---|---|---|---|---|
| Chlorophyll-A (µg/L) | 12.4 | 18.7 | 0.421 | 2.3e-16 | Yes |
| Average Cell Size (µm) | 15.2 | 14.8 | 0.032 | 0.087 | No |
| Nitrate Uptake Rate | 0.45 | 0.29 | 0.387 | 7.1e-13 | Yes |

Q2: After confirming drift, how do I update my model without discarding all historical data? A: Implement an adaptive learning strategy. A rolling window retraining approach is often effective for gradual drift.

Experimental Protocol: Rolling Window Recalibration

- Window Definition: Define a temporal window W. For monthly sampling, W=24 months is a typical starting point.

- Model Retraining: Continuously retrain your verification model using only the most recent W months of data.

- Performance Tracking: Maintain a separate hold-out validation set from the most recent 3 months to monitor precision/recall after each retrain.

- Trigger Adjustment: If performance drops >15%, trigger an immediate retrain and consider adjusting W.
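
The window selection and retrain trigger can be sketched as follows. The record layout, the function names, and the reading of ">15%" as a relative drop from a baseline score are assumptions made for illustration; the protocol does not specify whether the drop is relative or absolute.

```python
def rolling_window(records, window_months):
    """Keep records from the most recent `window_months` months.

    records: list of (month_index, sample), with month_index an integer
    that increases by one per calendar month.
    """
    latest = max(month for month, _ in records)
    cutoff = latest - window_months + 1
    return [(month, sample) for month, sample in records if month >= cutoff]

def needs_retrain(baseline_score, current_score, max_relative_drop=0.15):
    """True when the score fell by more than the allowed fraction of the baseline."""
    return (baseline_score - current_score) / baseline_score > max_relative_drop

# Hypothetical monthly samples covering 36 months; W = 24.
records = [(month, f"sample_{month}") for month in range(1, 37)]
recent = rolling_window(records, window_months=24)
print(len(recent), recent[0][0], recent[-1][0])

print(needs_retrain(baseline_score=0.90, current_score=0.80))
print(needs_retrain(baseline_score=0.90, current_score=0.75))
```

If triggers fire often, shortening W lets the model follow faster drift; if they rarely fire, a longer W gives more training data per retrain.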

Q3: How can I distinguish between real ecological change and sensor degradation causing the drift? A: This is a critical diagnostic step. Follow this verification workflow.

Title: Differentiating Sensor Fault from Ecological Concept Drift

Frequently Asked Questions (FAQs)

Q: What are the most common types of concept drift in ecological data, and which is hardest to detect?

A: See the table below for a comparison. Virtual drift is often the most challenging to detect as the true decision boundary (P(Y|X)) remains unchanged, requiring sophisticated feature-space analysis.

Table 2: Common Types of Concept Drift in Ecological Data

| Drift Type | Description | Ecological Example | Detection Difficulty | |

|---|---|---|---|---|