Ensuring Trustworthy Data: A Comprehensive Guide to Data Quality Assurance in Ecological Monitoring for Drug Development

Abstract

This article provides a targeted guide for researchers, scientists, and drug development professionals on implementing rigorous data quality assurance (DQA) within ecological monitoring studies. It explores the critical importance of high-fidelity ecological data for accurate environmental impact assessments in drug development. The scope covers foundational DQA principles and frameworks, practical methodologies for application in field and lab settings, strategies for troubleshooting common data quality issues, and advanced techniques for validating and comparing ecological datasets. The goal is to equip professionals with the knowledge to produce reliable, reproducible, and regulatory-compliant data that underpins sound scientific and business decisions.

Why Data Quality is Non-Negotiable in Ecological Monitoring: Foundational Concepts for Researchers

Within the framework of a thesis on data quality assurance in ecological monitoring research, this whitepaper examines the critical intersection of ecological data integrity, pharmaceutical development, and environmental safety. The discovery and development of novel therapeutics, particularly those derived from natural products (NPs), are intrinsically linked to accurate biodiversity and ecological data. Similarly, the environmental risk assessment (ERA) of pharmaceuticals after market release depends on high-quality monitoring data. Failures in data quality at any stage introduce profound risks, from wasted R&D investment to unforeseen ecological damage.

The Dual Pipeline: Where Ecological Data Meets Drug Development

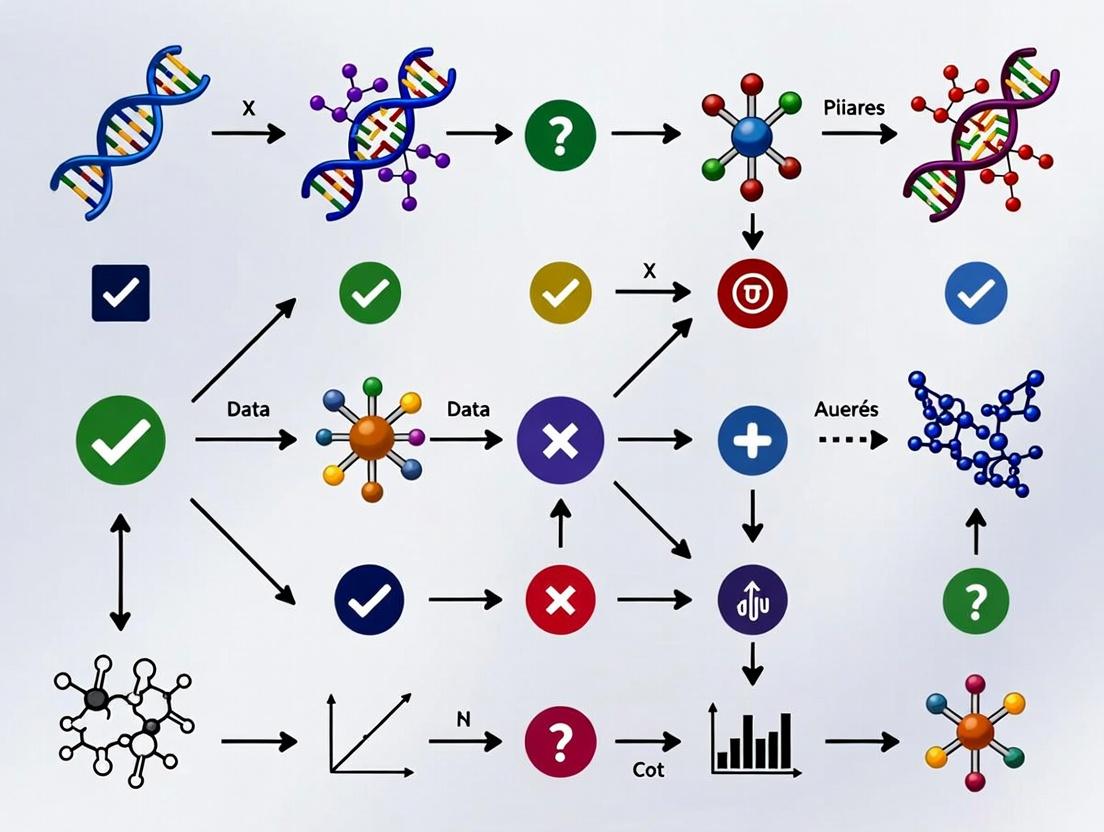

The journey from ecosystem to medicine relies on two parallel data streams: Biodiversity & Ecological Function Data and Drug Discovery & Development Data. Compromise in the former cascades into the latter.

Diagram Title: Dual Data Streams in Eco-Drug Discovery & Quality Failure Point

Quantitative Impact: The Cost of Poor Data

Recent analyses and case studies quantify the high stakes of inadequate ecological data.

Table 1: Impact of Poor Ecological Data on Drug Discovery Phases

| Phase | Common Data Quality Failure | Direct Consequence | Estimated Cost/Risk Impact |

|---|---|---|---|

| Source Bioprospecting | Misidentification of source organism; Inaccurate geolocation. | Lead compound irreproducibility; Lost intellectual property. | ~$0.5-2M per failed lead; wasted collection effort. |

| Pre-clinical Development | Lack of data on species population viability & sustainable yield. | Supply chain collapse; regulatory rejection on ethical grounds. | Clinical trial delay (~$1.4M/day); project termination. |

| Environmental Risk Assessment | Inaccurate degradation rates; lacking sensitive species toxicity data. | Post-market regulatory action; ecosystem damage; product restrictions. | Fines & remediation costs; reputational damage. |

Table 2: Key Statistics Linking Data Quality to Outcomes

| Metric | Value with High-Quality Data | Value with Poor-Quality Data | Source/Note |

|---|---|---|---|

| Natural Product Lead Reproducibility | >85% | <30% | Based on literature analysis of reported NP rediscovery failures. |

| Time to Identify Sustainable Source | 6-12 months | 24+ months (or never) | FAO 2023 report on sustainable genetic resource sourcing. |

| Accuracy of Predicted Environmental Concentration (PEC) | ± 30% of real value | ± 300%+ of real value | Model sensitivity analysis from recent ERA studies. |

Experimental Protocols for Assuring Ecological Data Quality

Robust methodologies are essential for generating data fit for purpose in high-stakes applications.

Protocol 1: Integrated Taxonomic & Metabolomic Profiling for Bioprospecting

Aim: To unambiguously identify a source organism and its characteristic metabolite profile to ensure reproducibility.

- Field Collection: Document GPS coordinates (with error <5m), habitat photos, microhabitat data, and associated species. Collect voucher specimens in triplicate.

- Morphological Taxonomy: Perform initial identification using dichotomous keys. Capture high-resolution images of key morphological features.

- Molecular Barcoding: Extract genomic DNA from tissue sample. Amplify and sequence standard barcode regions (e.g., rbcL & matK for plants; COI for fauna). Compare to curated databases (GenBank, BOLD) using a defined similarity threshold (≥99% for species-level).

- Metabolite Fingerprinting: Prepare crude extract from a separate tissue sample. Analyze via UPLC-QTOF-MS. Process data to create a characteristic mass spectral fingerprint and molecular network.

- Data Integration: Create a single digital record linking voucher specimen ID (with depository info), geolocation, genetic barcode accession number, and raw/processed metabolomic data.
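
The thresholds in this protocol (GPS error under 5 m, barcode similarity of at least 99%) lend themselves to an automated check on the integrated record. The following is a minimal sketch, not a reference implementation: the `SpecimenRecord` class, its field names, and the placeholder identifiers are hypothetical, and `percent_identity` assumes the two sequences have already been aligned to equal length by a separate tool.

```python
from dataclasses import dataclass
from typing import List


def percent_identity(query: str, reference: str) -> float:
    """Percent of matching positions between two pre-aligned, equal-length sequences."""
    if len(query) != len(reference) or not query:
        raise ValueError("sequences must be aligned to the same non-zero length")
    matches = sum(q == r for q, r in zip(query, reference))
    return 100.0 * matches / len(query)


@dataclass(frozen=True)
class SpecimenRecord:
    """Hypothetical integrated record linking the items listed in the protocol."""
    voucher_id: str
    depository: str
    latitude: float
    longitude: float
    gps_error_m: float
    barcode_accession: str
    barcode_identity_pct: float
    metabolomics_file: str

    def quality_flags(self) -> List[str]:
        """Return the protocol thresholds this record fails to meet."""
        flags = []
        if self.gps_error_m >= 5.0:  # protocol asks for GPS error < 5 m
            flags.append("gps_error_exceeds_5m")
        if self.barcode_identity_pct < 99.0:  # protocol asks for >= 99% similarity
            flags.append("barcode_below_species_threshold")
        return flags


# Illustrative sequences only: a 100-base reference and a query with one mismatch.
reference_seq = "ACGT" * 25
query_seq = reference_seq[:-1] + "A"
identity = percent_identity(query_seq, reference_seq)

record = SpecimenRecord(
    voucher_id="VOUCHER-PLACEHOLDER",
    depository="HERBARIUM-PLACEHOLDER",
    latitude=0.0,
    longitude=0.0,
    gps_error_m=3.2,
    barcode_accession="ACCESSION-PLACEHOLDER",
    barcode_identity_pct=identity,
    metabolomics_file="fingerprint-placeholder.mzML",
)
print(identity, record.quality_flags())
```

Keeping the thresholds inside the record type means every downstream consumer applies the same acceptance criteria, rather than re-deriving them per analysis.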

Protocol 2: Microcosm-Based Environmental Fate and Effect Testing

Aim: To generate reliable data on pharmaceutical degradation and ecotoxicity for regulatory ERA.

- System Setup: Establish replicate aquatic microcosms (e.g., 10L tanks) with defined sediment, water, and standard microbial/plankton communities. Acclimate for 28 days.

- Dosing: Introduce the pharmaceutical compound at a predicted environmental concentration (PEC) and a 10x PEC spike. Include solvent controls.

- Fate Sampling: At time points (1h, 1, 7, 28, 56 days), collect water/sediment samples. Quantify parent compound and major metabolites via LC-MS/MS. Calculate degradation half-life (DT50).

- Effect Monitoring: Track population dynamics of key species (e.g., Daphnia magna, algae) via daily counts/biomass measurements. Measure endpoint functional diversity via metabolomic profiling of the microbial community at day 0 and 56.

- Data Analysis: Determine NOEC (No Observed Effect Concentration) and PNEC (Predicted No-Effect Concentration). Compare PEC/PNEC ratio with confidence intervals derived from replicate variance.
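
As an illustration of the DT50 and PEC/PNEC calculations, the sketch below assumes single first-order decay, C(t) = C0·exp(−k·t), so that DT50 = ln(2)/k. That kinetic model is an assumption made here for simplicity; real fate data may need other models. The concentrations are synthetic (generated from k = 0.05 per day), and the assessment factor used to derive a PNEC from a NOEC is a placeholder to be replaced by the value the applicable guideline prescribes.

```python
import math


def fit_first_order(times_d, concs):
    """Least-squares fit of ln(C) = ln(C0) - k*t. Returns (k, c0)."""
    logs = [math.log(c) for c in concs]
    n = len(times_d)
    t_mean = sum(times_d) / n
    y_mean = sum(logs) / n
    sxx = sum((t - t_mean) ** 2 for t in times_d)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_d, logs))
    slope = sxy / sxx
    return -slope, math.exp(y_mean - slope * t_mean)


def dt50(k):
    """Half-life under first-order decay."""
    return math.log(2) / k


def pnec_from_noec(noec, assessment_factor):
    """PNEC as NOEC divided by an assessment factor (factor supplied by the user)."""
    return noec / assessment_factor


def risk_quotient(pec, pnec):
    """PEC/PNEC ratio; values of 1 or more indicate potential risk."""
    return pec / pnec


# Sampling times from the protocol (1 h, then 1, 7, 28, 56 days), in days.
times_d = [1 / 24, 1, 7, 28, 56]
# Synthetic concentrations generated from k = 0.05 per day, C0 = 10 (arbitrary units).
concs = [10.0 * math.exp(-0.05 * t) for t in times_d]

k, c0 = fit_first_order(times_d, concs)
half_life = dt50(k)  # about 13.9 days for this synthetic series
rq = risk_quotient(pec=0.02, pnec=pnec_from_noec(noec=1.0, assessment_factor=10))
print(round(k, 4), round(half_life, 2), round(rq, 3))
```

Running the fit separately on each replicate microcosm gives a distribution of DT50 values from which the confidence intervals mentioned in the protocol can be derived.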

Diagram Title: Integrated Taxonomic & Metabolomic Profiling Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Quality-Assured Ecological Data Generation

| Item / Reagent | Function | Critical Quality Consideration |

|---|---|---|

| Silica Gel Desiccant | Rapid preservation of tissue for DNA & metabolite analysis. | Must be indicator-grade, regularly regenerated to prevent degradation. |

| DNA/RNA Stabilization Buffer | Preserves genetic material at ambient temperature during field transport. | Must be validated for the target taxa; nuclease-free. |

| Certified Reference Standards (Natural Products) | For metabolomic quantification and instrument calibration. | Purity >98%; sourced from reputable collections (e.g., NIST, Sigma). |

| Environmental DNA (eDNA) Extraction Kits | Isolates trace DNA from soil/water for biodiversity assessment. | Optimized for inhibitor-rich samples; includes extraction controls. |

| Stable Isotope-Labeled Pharmaceutical Analogs | Tracks environmental fate and transformation pathways in microcosm studies. | Isotopic purity >99%; custom synthesized for novel compounds. |

| Standardized Test Organisms (Daphnia magna, Pseudokirchneriella subcapitata) | For consistent, reproducible ecotoxicity testing. | Cultured under ISO guidelines; age-synchronized for tests. |

| Taxonomic Voucher Specimen Preservation Materials | Creates permanent physical record of the studied organism. | Archival-quality paper, inert gases, or ethanol concentrations per taxon protocols. |

Within the broader thesis of data quality assurance for ecological monitoring research, defining and rigorously assessing the core data quality dimensions is foundational. Ecological data underpins critical decisions in conservation, species management, and environmental policy. For researchers and drug development professionals (the latter often reliant on ecological data for biodiscovery and environmental impact assessments), understanding these dimensions in a practical, field-based context is essential for ensuring the reliability and usability of information.

Core Dimensions of Data Quality: Definitions & Ecological Examples

| Dimension | Definition | Ecological Context Example | Potential Impact of Poor Quality |

|---|---|---|---|

| Accuracy | The degree to which data correctly describes the "true" value or state of the measured phenomenon. | Measuring the population count of an endangered bird species. An accurate count reflects the actual number present. | Over/underestimation of population viability, leading to flawed conservation strategies. |

| Precision | The closeness of repeated measurements to each other (repeatability/reproducibility). | Using a drone with a thermal camera to count a seal colony multiple times; a precise method yields similar counts each flight. | High variability masks true trends, reducing statistical power to detect significant changes. |

| Completeness | The extent to which expected data is present without gaps. | A multi-year dataset on river water pH, temperature, and pollutant levels with no missing monthly samples. | Missing data points can bias analysis, invalidate time-series models, and hide causal relationships. |

| Consistency | The absence of contradictions within a dataset or when compared with other datasets. | Species taxonomy is applied uniformly (e.g., Canis lupus vs. "gray wolf") across all entries and related databases. | Inability to aggregate or compare data across studies, leading to integration errors. |

| Timeliness | The degree to which data is current and available within a useful time frame. | Real-time transmission of acoustic data from underwater hydrophones detecting illegal fishing activity. | Delayed data is useless for rapid-response interventions (e.g., poaching, oil spills). |

Experimental Protocols for Assessing Data Quality Dimensions

The following methodologies are adapted from current ecological research practices.

Protocol for Assessing Accuracy and Precision in Biometric Data

Aim: To quantify the accuracy and precision of a novel, non-invasive body length measurement technique for terrestrial mammals (e.g., via camera traps with laser scalers) against the traditional manual capture-and-measure method (considered the "gold standard").

- Site Selection: Choose a controlled setting (e.g., wildlife sanctuary) with a known population of a target species (e.g., white-tailed deer).

- Gold Standard Data Collection: Safely capture and manually measure the body length of n=30 individual animals using standardized zoometric techniques. Record measurements M_manual.

- Test Method Data Collection: For the same n=30 individuals, obtain camera trap images with laser scalers from a fixed distance and angle. Three independent analysts measure body length from the images using digital caliper software. Each analyst takes three measurements per individual.

- Data Analysis:

  - Accuracy: Calculate the mean difference (bias) between the average laser-derived measurement per individual and the manual measurement. Perform a paired t-test or Bland-Altman analysis.

  - Precision: Calculate the within-analyst coefficient of variation (CV) for the three repeated image measurements and the between-analyst CV for their mean measurements.
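
The bias, Bland-Altman limits of agreement, and CV calculations can be sketched with the Python standard library. The measurement values below are invented purely to show the arithmetic; they are not data from any study, and the function names are illustrative.

```python
import statistics as st


def bias_and_limits(test, reference):
    """Mean difference (bias) and Bland-Altman 95% limits of agreement."""
    diffs = [t - r for t, r in zip(test, reference)]
    bias = st.mean(diffs)
    sd = st.stdev(diffs)  # sample standard deviation of the paired differences
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)


def cv_percent(values):
    """Coefficient of variation as a percentage of the mean."""
    return 100.0 * st.stdev(values) / st.mean(values)


# Invented body lengths (mm) for four animals: manual vs. image-derived means.
manual_mm = [150.0, 162.0, 171.0, 158.0]
laser_mm = [151.0, 161.0, 173.0, 158.0]
bias, loa = bias_and_limits(laser_mm, manual_mm)

# Within-analyst precision: one analyst's three repeated measurements of one animal.
within_cv = cv_percent([99.0, 100.0, 101.0])
# Between-analyst precision: the three analysts' mean measurements of that animal.
between_cv = cv_percent([100.0, 102.0, 98.0])

print(bias, loa, within_cv, between_cv)
```

Reporting the limits of agreement alongside the bias matters because a method can show near-zero average bias while still disagreeing substantially on individual animals.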

Protocol for Assessing Completeness and Consistency in Biodiversity Inventories

Aim: To audit the completeness and consistency of a long-term arthropod pitfall trap dataset.

- Metadata Audit: Compile all field logbooks, lab spreadsheets, and database exports for a defined period (e.g., 2015-2025).

- Completeness Check:

  - Create a matrix of expected data: Sampling Dates (rows) x Variables (columns: Species ID, Count, Sex, etc.).

  - Systematically flag missing cells (null values). Calculate the percentage of missing data per variable and per sampling year.

  - Trace missing data back to source (field collection failure, lab processing backlog, data entry omission) using logs.

- Consistency Check:

  - Taxonomic Consistency: Extract all species binomials. Compare against a current authoritative taxonomy list (e.g., GBIF backbone). Flag synonyms, deprecated names, and spelling variants.

  - Unit Consistency: Verify uniform use of measurement units (e.g., all lengths in mm, not cm).

  - Temporal Consistency: Ensure date formats are uniform and timezone is documented for time-sensitive samples.
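
A small audit script makes these completeness and consistency checks repeatable. The sketch below uses plain Python on a list of row dictionaries; the column names, the four example rows, and the `accepted_names` set (standing in for an authoritative taxonomy list) are all hypothetical.

```python
def percent_missing(rows, variables):
    """Percentage of missing (None or empty-string) cells per variable."""
    total = len(rows)
    return {
        var: 100.0 * sum(1 for r in rows if r.get(var) in (None, "")) / total
        for var in variables
    }


def flag_unaccepted_names(rows, accepted_names):
    """Species names present in the data but absent from the accepted list."""
    return sorted(
        {r["species"] for r in rows if r.get("species") and r["species"] not in accepted_names}
    )


def flag_units(rows, expected_unit="mm"):
    """Indices of rows whose length unit differs from the expected unit."""
    return [i for i, r in enumerate(rows) if r.get("length_unit") != expected_unit]


# Hypothetical pitfall-trap rows containing one gap per variable,
# one spelling variant, and one unit mismatch.
rows = [
    {"date": "2015-06-01", "species": "Carabus nemoralis", "count": 4, "length_unit": "mm"},
    {"date": "2015-06-15", "species": "Carabus nemorallis", "count": None, "length_unit": "mm"},
    {"date": "2015-07-01", "species": "Pterostichus melanarius", "count": 2, "length_unit": "cm"},
    {"date": "2015-07-15", "species": "", "count": 1, "length_unit": "mm"},
]
accepted_names = {"Carabus nemoralis", "Pterostichus melanarius"}

missing = percent_missing(rows, ["species", "count"])
bad_names = flag_unaccepted_names(rows, accepted_names)
bad_units = flag_units(rows)
print(missing, bad_names, bad_units)
```

The flagged names and row indices are only the starting point: each still has to be traced back to its source using the logs, as the protocol describes.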

Visualizing the Data Quality Assurance Workflow in Ecological Monitoring

Diagram Title: Ecological Data QA Workflow from Collection to Curation

Diagram Title: Core Dimensions of Ecological Data Quality

The Scientist's Toolkit: Research Reagent Solutions for Ecological QA

| Item/Category | Function in Ecological Monitoring & QA |

|---|---|

| Calibrated Standard Reference Materials | Used to calibrate field instruments (e.g., pH meters, GPS units, gas analyzers) to ensure Accuracy. Examples: NIST-traceable pH buffers, GPS base stations. |

| Automated Data Loggers with Redundant Sensors | Deployed to collect high-frequency, synchronized environmental data (temp, humidity, pressure), improving Precision and Completeness by reducing manual error and gaps. |

| DNA Barcoding Kits & Standardized Primers | Provide a consistent molecular method for species identification, reducing taxonomic ambiguity compared to morphological identification alone. |

| Field Data Collection Apps (e.g., ODK, Survey123) | Enforce data structure, prevent invalid entries via dropdowns, and enable real-time geotagging and upload, directly enhancing Consistency, Completeness, and Timeliness. |

| Controlled Vocabularies & Metadata Schemas (EML, Darwin Core) | Standardized templates for describing data, ensuring Consistency across projects and enabling interoperability with global repositories like GBIF or EDI. |

| QA/QC Software Scripts (R: assertr, pointblank; Python: pandas-profiling, great_expectations) | Automate checks for outliers, missing values, and logical contradictions, systematically quantifying Accuracy, Completeness, and Consistency. |
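
To show the kind of rule such QA/QC scripts encode, here is a minimal standard-library sketch of an outlier screen and a set of logical-contradiction rules. It deliberately does not use the packages named in the table, so it says nothing about their APIs; the rule names, field names, and example readings are hypothetical.

```python
import statistics as st


def iqr_outliers(values, k=1.5):
    """Values lying more than k interquartile ranges outside the quartiles."""
    q1, _, q3 = st.quantiles(values, n=4)
    iqr = q3 - q1
    lo, hi = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lo or v > hi]


# Each rule returns True when the record is logically acceptable.
RULES = {
    "ph_in_0_14": lambda r: 0.0 <= r["ph"] <= 14.0,
    "do_non_negative": lambda r: r["do_mg_l"] >= 0.0,
    "juveniles_not_above_total": lambda r: r["juvenile_count"] <= r["total_count"],
}


def failed_rules(record, rules=RULES):
    """Names of the rules a single record violates."""
    return [name for name, rule in rules.items() if not rule(record)]


# Hypothetical pH series with one implausible spike.
ph_series = [7.1, 7.2, 7.0, 7.3, 7.2, 12.9, 7.1]
outliers = iqr_outliers(ph_series)

# Hypothetical record in which the juvenile count exceeds the total count.
record = {"ph": 7.2, "do_mg_l": 8.1, "juvenile_count": 12, "total_count": 9}
violations = failed_rules(record)
print(outliers, violations)
```

An outlier flag is a prompt for review, not grounds for deletion: a flagged value may be a genuine ecological event rather than an error.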

Within ecological monitoring and drug development research, ensuring data quality, integrity, and reusability is paramount. This guide examines three critical frameworks—Good Laboratory Practice (GLP), the FAIR Guiding Principles (Findable, Accessible, Interoperable, Reusable), and the Ecological Metadata Language (EML)—as foundational pillars for data quality assurance. These standards govern different stages of the research data lifecycle, from experimental execution to data sharing and preservation.

Good Laboratory Practice (GLP)

GLP is a formal, legally defined quality system covering the organizational process and conditions under which non-clinical health and environmental safety studies are planned, performed, monitored, recorded, reported, and archived. It is mandated for drug development and chemical safety assessments.

Core Principles & Experimental Protocols

GLP ensures the reliability and integrity of test data. Key experimental protocols governed by GLP include:

- Toxicology Studies: Detailed methodology for repeated-dose toxicity testing in rodents.

  - Test Article Characterization: Document source, batch, purity, and stability.

  - Animal Husbandry: Standardized housing (temperature, humidity, light cycle), diet, and water.

  - Dose Formulation & Administration: Prepare test article in vehicle (e.g., carboxymethyl cellulose). Conduct dose-range finding study. Randomly assign animals to control, vehicle control, and three dose groups (e.g., n=10/sex/group). Administer daily via oral gavage for 28 days.

  - In-life Observations: Record clinical signs, body weight, and food consumption daily/weekly.

  - Terminal Procedures: At study end, collect blood for hematology/clinical chemistry, perform necropsy, and preserve organs in formalin for histopathological examination by a board-certified pathologist.

  - Data Recording: All raw data entered in indelible ink, dated, and signed. Any changes must be crossed out with reason given, initialed, and dated.

- Ecotoxicology Studies: Methodology for an aquatic toxicity test (e.g., Daphnia magna reproduction test).

  - Test System Preparation: Cultivate Daphnia under standardized conditions.

  - Exposure System: Prepare five concentrations of test substance and a control in reconstituted water. Use 10 beakers per concentration, each with one daphnid.

  - Exposure & Monitoring: Renew test solutions daily. Feed organisms a defined algae diet. Monitor parent survival and offspring production daily for 21 days.

  - Endpoint Calculation: Calculate EC50 for reproduction inhibition.
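
For orientation, the EC50 endpoint can be approximated by interpolating on a log-concentration scale between the two test concentrations that bracket 50% inhibition. This is a simplified sketch under that assumption; a regulatory analysis would normally fit a full concentration-response model with confidence limits. The offspring counts and concentrations below are invented.

```python
import math


def percent_inhibition(control_mean, treatment_mean):
    """Reduction in mean offspring per parent relative to the control, in percent."""
    return 100.0 * (control_mean - treatment_mean) / control_mean


def ec50_log_interpolation(concs, inhibitions):
    """Interpolate the 50% effect concentration on a log10 concentration scale."""
    pairs = sorted(zip(concs, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(pairs, pairs[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ec50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    raise ValueError("50% inhibition is not bracketed by the tested concentrations")


# Invented example: five concentrations (mg/L) and mean offspring per parent.
control_mean = 80.0
concs = [0.1, 0.32, 1.0, 3.2, 10.0]
offspring_means = [78.0, 70.0, 52.0, 28.0, 6.0]

inhibitions = [percent_inhibition(control_mean, m) for m in offspring_means]
ec50 = ec50_log_interpolation(concs, inhibitions)
print(inhibitions, round(ec50, 3))
```

Raising an error when 50% inhibition is not bracketed is intentional: extrapolating beyond the tested range would produce an EC50 the data cannot support.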

Diagram: GLP Study Execution Workflow

Table 1: Key GLP Requirements for Data Integrity

| Requirement | Description | Typical Documentation |

|---|---|---|

| Study Director | Single point of control for the entire study. | Signed Study Plan appointment. |

| Quality Assurance Unit | Independent audit/monitoring of the study. | QA inspection reports, signed QA statement in final report. |

| Facilities & Equipment | Adequate size, design, and maintenance. Calibrated apparatus. | SOPs, calibration logs, maintenance records. |

| Standard Operating Procedures (SOPs) | Documented procedures for all operational aspects. | Library of approved SOPs, training records. |

| Final Report | Complete, accurate description of methods and results. | Report signed by Study Director, stating GLP compliance. |

| Archival | Secure storage of study plan, raw data, reports, and specimens. | Archive index, limited access log. |

FAIR Guiding Principles

FAIR provides a framework to enhance the value of digital research assets by making them machine-actionable and reusable by humans.

Detailed Methodology for Implementing FAIR in Ecological Research

- Findable:

  - Protocol: Assign a globally unique and persistent identifier (e.g., DOI) to the dataset. Use a public repository (e.g., Dryad, Zenodo). Describe data with rich, searchable metadata (e.g., using EML schema).

- Accessible:

  - Protocol: Deposit data in a trusted repository with standard, open communication protocols (e.g., HTTPS). Metadata should always remain accessible, even if the data is under embargo.

- Interoperable:

  - Protocol: Use formal, accessible, and broadly applicable knowledge representation languages (e.g., RDF, XML). Use controlled vocabularies and ontologies (e.g., ENVO for environments, OBO Foundry ontologies).

- Reusable:

  - Protocol: Provide detailed, domain-relevant provenance (methods, instruments, software). Release data with a clear, machine-readable license (e.g., CC-BY). Meet relevant community standards.
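
A pre-deposit checklist can catch obvious FAIR gaps before a dataset reaches a repository. The sketch below is one possible self-check, not an official FAIR assessment: the metadata keys, the placeholder DOI, and the example URL are hypothetical, and passing these checks does not by itself make a dataset FAIR.

```python
import re

# Loose pattern for the general shape of a DOI (prefix "10.", registrant code, suffix).
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def fair_gaps(meta):
    """Return missing items grouped by FAIR principle (empty dict means no gaps found)."""
    gaps = {"findable": [], "accessible": [], "interoperable": [], "reusable": []}
    if not DOI_PATTERN.match(meta.get("identifier", "")):
        gaps["findable"].append("persistent identifier (e.g., DOI)")
    if not meta.get("keywords"):
        gaps["findable"].append("searchable keywords")
    if not str(meta.get("access_url", "")).startswith("https://"):
        gaps["accessible"].append("HTTPS access URL")
    if not meta.get("vocabularies"):
        gaps["interoperable"].append("controlled vocabulary or ontology terms")
    if not meta.get("license"):
        gaps["reusable"].append("machine-readable license")
    if not meta.get("methods"):
        gaps["reusable"].append("provenance / methods description")
    return {principle: items for principle, items in gaps.items() if items}


# Hypothetical metadata record that lacks a license and ontology terms.
metadata = {
    "identifier": "10.1234/placeholder",
    "keywords": ["macroinvertebrates", "stream health"],
    "access_url": "https://example.org/dataset",
    "vocabularies": [],
    "license": "",
    "methods": "Kick-net sampling per field SOP.",
}
gaps = fair_gaps(metadata)
print(gaps)
```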

Diagram: FAIR Data Stewardship Cycle

Ecological Metadata Language (EML)

EML is a metadata specification developed by the ecology community and implemented as XML schemas for documenting ecological datasets.

Core Components and Implementation Protocol

Protocol for Creating EML Metadata:

- Identify Modules: Determine needed modules (dataset, project, personnel, methods, data table, spatial coverage, temporal coverage).

- Compile Information: Gather all relevant project, people, geographic, temporal, and methodological details.

- Use Tools: Generate EML using tools like the EML package in R, pymec in Python, or Morpho data management software.

- Validate: Validate the generated XML against the EML schema using parser libraries or the PASTA+ validator.

- Publish: Upload the EML record alongside the data to a repository like the Environmental Data Initiative (EDI) or DataONE member node.
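
To make the structure of an EML record concrete, the sketch below builds a skeleton document with Python's standard `xml.etree.ElementTree` rather than any of the tools named above. The element names and namespace follow my reading of EML 2.2.0, and the package ID, system name, title, and dates are placeholders. The output has not been validated here, so treat it as a starting point to run through a schema validator, not as a guaranteed-valid record.

```python
import xml.etree.ElementTree as ET

# Namespace assumed for EML 2.2.0; confirm against the schema version you target.
EML_NS = "https://eml.ecoinformatics.org/eml-2.2.0"
ET.register_namespace("eml", EML_NS)


def build_eml(package_id, title, surname, begin_date, end_date):
    """Build a minimal EML skeleton: title, creator, temporal coverage, contact."""
    root = ET.Element(f"{{{EML_NS}}}eml", {"packageId": package_id, "system": "example"})
    dataset = ET.SubElement(root, "dataset")
    ET.SubElement(dataset, "title").text = title

    creator = ET.SubElement(dataset, "creator")
    ET.SubElement(ET.SubElement(creator, "individualName"), "surName").text = surname

    coverage = ET.SubElement(dataset, "coverage")
    dates = ET.SubElement(ET.SubElement(coverage, "temporalCoverage"), "rangeOfDates")
    ET.SubElement(ET.SubElement(dates, "beginDate"), "calendarDate").text = begin_date
    ET.SubElement(ET.SubElement(dates, "endDate"), "calendarDate").text = end_date

    contact = ET.SubElement(dataset, "contact")
    ET.SubElement(ET.SubElement(contact, "individualName"), "surName").text = surname

    return ET.tostring(root, encoding="unicode")


# Placeholder values for illustration only.
eml_xml = build_eml(
    package_id="example.1.1",
    title="Placeholder pitfall trap dataset",
    surname="Placeholder",
    begin_date="2015-01-01",
    end_date="2025-12-31",
)
print(eml_xml)
```

A real record would add the remaining modules from the first step (project, methods, data table, spatial coverage) before the validation step.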

Diagram: EML Modular Structure

Table 2: Comparison of GLP, FAIR, and EML

| Aspect | Good Laboratory Practice (GLP) | FAIR Guiding Principles | Ecological Metadata Language (EML) |

|---|---|---|---|

| Primary Scope | Regulatory non-clinical safety study conduct. | Stewardship of all digital research objects (data, software). | Description of ecological/environmental datasets. |

| Governance | Legal regulation (OECD, FDA, EPA). | Community-developed guiding principles. | Community-developed standard schema. |

| Key Focus | Data integrity, traceability, and quality assurance during research execution. | Data discovery, machine-actionability, and reuse post-research. | Structured, detailed metadata to enable data understanding. |

| Implementation | Through SOPs, QA audits, and detailed protocol adherence. | Through repository policies, identifier systems, and use of semantic tools. | Through XML documents following specific schemas. |

| Typical Phase | Active experimental/data generation phase. | Data publication, sharing, and preservation phase. | Data packaging and documentation phase (pre-publication). |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for Ecological Monitoring & Toxicology

| Item | Function in Research |

|---|---|

| Standard Reference Toxicants (e.g., KCl, Sodium Lauryl Sulfate) | Used in ecotoxicology bioassays (e.g., Daphnia, algal tests) to validate organism health and test system sensitivity. |

| EPA/ISO Standard Synthetic Freshwater & Marine Media | Provides consistent, defined water chemistry for culturing test organisms and conducting aquatic toxicity tests. |

| Certified Reference Materials (CRMs) for Environmental Matrices | Soil, sediment, or tissue samples with known contaminant concentrations for quality control/assurance in analytical chemistry. |

| Lyophilized Control Sera & Calibrators | Essential for ensuring accuracy and precision in clinical chemistry analyzers used in GLP toxicology studies. |

| Fixed-Stain Cell Preparations (e.g., for Hematology Analyzers) | Used for quality control and calibration of hematology instruments analyzing blood from test animals. |

| Formalin, Paraffin, Histology Stains (H&E) | Standard reagents for tissue fixation, processing, and staining for histopathological evaluation in GLP studies. |

| Data Loggers (Temperature, Humidity, Light) | Critical for GLP-compliant environmental monitoring in animal rooms, incubators, and test chambers. |

| Calibrated Pipettes & Analytical Balances | Foundation for accurate measurement of test substances, doses, and experimental materials. Regular calibration is a GLP mandate. |

GLP, FAIR, and EML are complementary frameworks essential for end-to-end data quality assurance. GLP ensures data integrity at the point of origin in regulated research. EML provides the structured, disciplinary language to describe complex ecological data, making it understandable. The FAIR principles then leverage this foundation to maximize data discovery and reuse across the scientific community. Together, they form a robust infrastructure for producing trustworthy, sustainable, and impactful scientific evidence in both drug development and ecological monitoring.

Within the broader topic of data quality assurance in ecological monitoring research, the data lifecycle provides the structural framework for ensuring the integrity, traceability, and fitness-for-purpose of ecological data. For researchers, scientists, and drug development professionals, particularly in environmental impact assessments for regulatory submissions, a rigorous, documented lifecycle is non-negotiable. This guide details the technical phases, protocols, and quality gates that transform raw field observations into defensible, regulatory-ready evidence.

The Data Lifecycle: Phases and Quality Assurance Gates

The lifecycle is a sequential yet iterative process, with each phase generating specific deliverables and requiring explicit quality checks. The following table summarizes the core phases, primary actions, and associated Quality Assurance/Quality Control (QA/QC) measures.

Table 1: Phases of the Ecological Data Lifecycle and Integrated QA/QC Measures

| Lifecycle Phase | Primary Actions & Outputs | Key QA/QC Measures & Documentation |

|---|---|---|

| 1. Planning & Design | Define study objectives, endpoints, and statistical power. Create Sampling and Analysis Plan (SAP). Select validated methods. | Protocol peer review. Statistical power analysis. Ethical review (if applicable). Pre-defined acceptance criteria for QC samples. |

| 2. Field Collection & Acquisition | In-situ measurement, specimen/sample collection, sensor deployment. Output: Raw data logs, physical samples, GPS waypoints. | Chain of Custody (CoC) forms. Field Standard Operating Procedures (SOPs). Calibration logs for instruments. Field duplicate and blank samples. |

| 3. Data Curation & Processing | Data transcription, digitization, unit conversion, georeferencing. Output: Cleaned, formatted digital datasets. | Double-data entry verification. Metadata annotation using standards (e.g., EML). Automated range and logic checks. Data curation logs. |

| 4. Analysis & Modeling | Statistical testing, trend analysis, spatial modeling, indicator calculation. Output: Analysis results, figures, statistical summaries. | Use of version-controlled scripts (e.g., R, Python). Benchmarking with known datasets. Sensitivity analysis. Peer review of code and methodology. |

| 5. Reporting & Visualization | Synthesis of findings into reports, dashboards, and visualizations. Output: Draft and final reports, interactive data products. | Consistency checks between text, tables, and figures. Adherence to reporting guidelines (e.g., STARD for diagnostics). Accessibility review of visualizations. |

| 6. Archival & Submission | Preparation of regulatory submission packages or public repository deposits. Output: Complete data packages, submitted dossiers. | Compliance with repository schema (e.g., DEB, NEON). Completeness check against SAP. Final QA audit before submission. |

Detailed Experimental Protocols for Key Monitoring Activities

Protocol for Aquatic Macroinvertebrate Community Sampling (Stream Health Assessment)

- Objective: To collect a quantitative sample of benthic macroinvertebrates for assessing biological water quality and biodiversity.

- Materials: D-frame kick net (500µm mesh), waders, sample jars (1L), ethanol (95%), labels, field data sheet, GPS, calibrated water quality meter.

- Methodology:

- Site Selection: Follow SAP-defined transects. Record GPS coordinates and general habitat descriptors.

- Sample Collection: For a single composite sample, firmly place the net on the stream bed. Disturb the substrate immediately upstream of the net over a 0.5m x 0.5m area for 3 minutes via kicking and stone-rubbing.

- Processing: Transfer contents of net into a jar. Rinse net thoroughly into jar. Preserve immediately with 95% ethanol. Affix label with SiteID, Date, Time, Collector initials inside jar. Create external label.

- QC Samples: Collect a field duplicate sample at 10% of sites. Collect a field blank (jar with preservative only) per sampling day.

- Documentation: Complete CoC form and field sheet with all metadata (flow, weather, anomalies). Package samples for transport.
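
The QC step implies two small computations: choosing which sites receive a field duplicate, and comparing each duplicate with its primary sample. The sketch below uses a seeded random draw for the first and relative percent difference (RPD) for the second. RPD is a common duplicate metric that I am assuming here; the protocol itself does not name one. The site IDs, counts, and seed are hypothetical.

```python
import math
import random


def select_duplicate_sites(site_ids, percent=10, seed=0):
    """Reproducibly pick at least `percent`% of sites (minimum one) for duplicates."""
    k = max(1, math.ceil(len(site_ids) * percent / 100))
    return sorted(random.Random(seed).sample(list(site_ids), k))


def relative_percent_difference(primary, duplicate):
    """Absolute difference between paired results as a percentage of their mean."""
    mean = (primary + duplicate) / 2
    if mean == 0:
        return 0.0
    return 100.0 * abs(primary - duplicate) / mean


# Hypothetical site list and one primary/duplicate pair of total organism counts.
sites = [f"S{i:02d}" for i in range(1, 21)]
duplicate_sites = select_duplicate_sites(sites, percent=10, seed=42)
rpd = relative_percent_difference(primary=120, duplicate=100)
print(duplicate_sites, round(rpd, 1))
```

Fixing the seed and recording it in the Sampling and Analysis Plan lets an auditor reproduce exactly which sites were designated for duplicates.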

Protocol for Vegetation Quadrat Survey (Plant Community Composition)

- Objective: To obtain species-level percent cover and frequency data within a defined plot.

- Materials: 1m x 1m quadrat frame, field guide, handheld data logger (or datasheet), clinometer, soil probe.

- Methodology:

- Plot Establishment: Randomly locate plot center per SAP. Use compass to orient quadrat.

- Species Identification & Cover Estimation: Visually estimate the percent cover of each vascular plant species within the frame to the nearest 1%. For cover <1%, record as trace. Include separate estimates for bare ground, litter, and rock.

- Data Recording: Record species by scientific name. Use pre-loaded species lists in digital logger to minimize error. Record observer name and estimation confidence.

- QA/QC: A second observer independently estimates cover at 5% of plots to calculate inter-observer reliability. Photograph each quadrat from a consistent height and angle.
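
Inter-observer reliability for cover estimates can be summarized in several ways. The sketch below computes three simple descriptors for one quadrat: mean absolute difference in percent cover, the share of categories on which the observers agree within a tolerance, and the categories recorded by only one observer. The 5-point tolerance and the cover values are assumptions for illustration, and a formal study might prefer an agreement statistic such as an intraclass correlation.

```python
def inter_observer_summary(obs_a, obs_b, tolerance=5.0):
    """Compare two observers' percent-cover estimates for the same quadrat."""
    categories = sorted(set(obs_a) | set(obs_b))
    # A category missing from one observer's record is treated as 0% cover.
    diffs = [abs(obs_a.get(c, 0.0) - obs_b.get(c, 0.0)) for c in categories]
    return {
        "mean_abs_diff": sum(diffs) / len(diffs),
        "pct_within_tolerance": 100.0 * sum(d <= tolerance for d in diffs) / len(diffs),
        "recorded_by_one_observer": sorted(set(obs_a) ^ set(obs_b)),
    }


# Hypothetical cover estimates (%) from two observers at one quadrat.
observer_a = {"Festuca rubra": 40.0, "Trifolium repens": 15.0, "bare ground": 10.0}
observer_b = {
    "Festuca rubra": 35.0,
    "Trifolium repens": 25.0,
    "bare ground": 10.0,
    "Plantago lanceolata": 2.0,
}
summary = inter_observer_summary(observer_a, observer_b)
print(summary)
```

Species seen by only one observer are reported separately because they reflect detection or identification differences rather than estimation differences.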

Protocol for Continuous Sensor Deployment (Water Quality Parameters)

- Objective: To collect high-frequency time-series data for parameters like dissolved oxygen (DO), pH, temperature, and conductivity.

- Materials: Multi-parameter sonde, calibration solutions, deployment cage/cable, data buoy or shore-based logger, batteries.

- Methodology:

- Pre-Deployment Calibration: Calibrate DO sensor using zero-oxygen solution and water-saturated air per manufacturer SOP. Calibrate pH sensor using a 3-point calibration with pH 4.01, 7.00, and 10.01 buffers. Calibrate conductivity sensor with a standard solution.

- Deployment: Secure sonde in cage at mid-depth. Ensure proper orientation for flow. Securely attach to fixed anchor and data logger. Record deployment time, location (GPS), and sensor serial numbers.

- Post-Retrieval Validation: Immediately upon retrieval, check sensor readings against a freshly calibrated handheld meter in ambient water. Perform a post-deployment drift check in calibration standards.

- Data Handling: Download data, noting retrieval time. Flag periods of potential biofouling, low battery, or sensor burial.
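
The post-retrieval drift check can be turned into a simple calculation. The sketch below compares a sensor reading against a calibration standard and then applies a linear drift correction across the deployment. The linear model (zero drift at deployment, full drift at retrieval) is an assumption that will not suit abrupt fouling events, and the tolerance and readings are hypothetical.

```python
def drift_check(reading, standard, tolerance):
    """Error of a post-deployment reading in a calibration standard."""
    error = reading - standard
    return {"error": error, "within_tolerance": abs(error) <= tolerance}


def linear_drift_correction(values, times, total_drift):
    """Subtract drift assumed to grow linearly from 0 at times[0] to total_drift at times[-1]."""
    t0, t1 = times[0], times[-1]
    return [v - total_drift * (t - t0) / (t1 - t0) for v, t in zip(values, times)]


# Hypothetical: the sonde reads 7.12 in pH 7.00 buffer after a 30-day deployment.
check = drift_check(reading=7.12, standard=7.00, tolerance=0.2)

# Hypothetical pH readings at days 0, 15, and 30 in water whose true pH stayed at 7.00.
times_d = [0.0, 15.0, 30.0]
raw_ph = [7.00, 7.06, 7.12]
corrected_ph = linear_drift_correction(raw_ph, times_d, total_drift=check["error"])
print(check, [round(v, 2) for v in corrected_ph])
```

Storing the raw series, the measured drift, and the corrected series together keeps the correction traceable and reversible.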

Visualization of Workflows and Relationships

Diagram Title: Ecological Data Lifecycle with QA Gates

Diagram Title: Sample Flow from Field to Lab with QC

The Scientist's Toolkit: Essential Research Reagent Solutions & Materials

Table 2: Key Reagents and Materials for Ecological Monitoring

| Item | Primary Function & Rationale |

|---|---|

| 95% Ethanol (with 5% glycerol) | Standard preservative for benthic macroinvertebrate and tissue samples. Denatures proteins, preventing decomposition; glycerol prevents brittleness. |

| RNAlater Stabilization Solution | Preserves the RNA integrity in tissue samples collected for molecular analysis (e.g., eDNA, transcriptomics), enabling lab-based genetic studies. |

| Buffer Solutions (pH 4.01, 7.00, 10.01) | Certified calibration standards for pH meters and multi-parameter sondes. Essential for maintaining measurement accuracy and NIST-traceability. |

| Potassium Iodide (KI) / Sodium Thiosulfate | Used in Winkler titration for dissolved oxygen analysis, serving as a primary method to validate and calibrate optical or electrochemical DO sensors. |

| Formalin (Buffered, 10%) | Traditional fixative for plankton and ichthyoplankton samples. Provides excellent morphological preservation (requires careful health and safety handling). |

| Deionized/Distilled Water (Certified) | Used for preparing blank samples, rinsing equipment, and making standard solutions. Critical for identifying and minimizing background contamination. |

| Certified Reference Materials (CRMs) | For soil, water, or tissue analysis. Samples with known concentrations of analytes (e.g., metals, nutrients) used to validate analytical instrument accuracy and recovery rates. |

| Silica Gel Desiccant | For preserving plant voucher specimens and soil samples intended for molecular work by rapidly removing moisture and halting microbial activity. |

| GPS Unit (Survey-Grade) | Provides precise geospatial coordinates for sample locations and plot centers, ensuring spatial accuracy and repeatability, crucial for temporal studies. |

| Calibrated Data Logger | The core component for recording continuous measurements from environmental sensors. Requires regular calibration against primary standards. |

Within ecological monitoring research for drug development, Data Quality Assurance (DQA) is the cornerstone of credible environmental impact assessments, regulatory submissions, and sustainability reporting. Effective DQA must align the divergent priorities of three core stakeholder groups: Scientists (requiring precision, accuracy, and fitness-for-purpose for ecological inference), Regulators (demanding auditable traceability, strict protocol adherence, and complete transparency), and Corporate Sustainability Officers (needing standardized, reportable metrics for ESG (Environmental, Social, and Governance) disclosures and license-to-operate). This guide details technical protocols and frameworks to harmonize these needs.

Core DQA Requirements Across Stakeholder Groups

Table 1: Primary DQA Requirements by Stakeholder

| Stakeholder | Primary DQA Need | Key Metric Examples | Data Output Criticality |

|---|---|---|---|

| Scientists/Researchers | Analytical precision, methodological rigor, contextual metadata. | Limit of Detection (LOD), coefficient of variation (CV), spatial GPS accuracy. | High; enables robust statistical analysis and publication. |

| Regulators (e.g., FDA, EMA, EPA) | ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, + Complete, Consistent, Enduring, Available). | Audit trail completeness, chain of custody documentation, % of data points meeting pre-defined QC criteria. | Absolute; required for Investigational New Drug (IND) or New Drug Application (NDA) environmental modules. |

| Corporate Sustainability | Standardization, aggregation, interoperability with reporting frameworks. | GHG Protocol alignment, WEF Stakeholder Capitalism Metrics, TNFD (Taskforce on Nature-related Financial Disclosures) readiness. | High; ensures compliance with investor and disclosure mandates (e.g., CSRD, SEC). |

Experimental Protocols for Aligned DQA

Protocol: Integrated Field Sampling & Chain of Custody (CoC)

Objective: To collect ecological samples (e.g., water, soil, biota) with quality controls that satisfy scientific, regulatory, and auditability needs.

Materials: Calibrated GPS, pre-labeled sample containers (lot-traceable), inert sampling equipment, digital field logbook/tablet, barcode/RFID tags, tamper-evident seals, certified reference materials (CRMs) for matrix spikes.

Procedure:

- Pre-Sampling:

- Calibrate all field instruments (e.g., multiparameter sondes, GPS) using NIST-traceable standards. Document calibration certificates in metadata.

- Deploy field blanks and trip spikes (CRM) at a rate of 5% per sampling batch.

- Sampling:

- Record all sample coordinates, time, and environmental conditions (pH, temp, DO) digitally. Attach barcode to sample container immediately.

- Take triplicate samples at 10% of sampling sites for intra-site variability assessment.

- Post-Sampling:

- Apply tamper-evident seals. Log sample transfer to courier in digital CoC system (timestamp, custodian name).

- Ship with temperature loggers; data from loggers integrated into master dataset.
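The QC rates in this protocol (field blanks and trip spikes at 5% per batch, triplicates at 10% of sites) can be turned into concrete counts before a field trip. The following is a minimal sketch, not part of the protocol itself: the function names are hypothetical, and rounding up with a minimum of one QC item per batch is an assumption made here so the stated rate is never undershot.

```python
import math
import random


def qc_count(n_items: int, rate: float) -> int:
    """Number of QC items needed so that at least `rate` of n_items is covered.

    Rounds up and returns at least 1 for any non-empty batch (assumption).
    """
    if n_items <= 0:
        return 0
    # The small epsilon guards against floating-point noise such as 2.0000000001.
    return max(1, math.ceil(n_items * rate - 1e-9))


def pick_triplicate_sites(site_ids, rate=0.10, seed=None):
    """Randomly choose the sites that receive triplicate samples."""
    sites = list(site_ids)
    rng = random.Random(seed)  # a fixed seed makes the selection auditable
    return sorted(rng.sample(sites, qc_count(len(sites), rate)))


if __name__ == "__main__":
    print("Field blanks for a 40-sample batch:", qc_count(40, 0.05))
    print("Triplicate sites:", pick_triplicate_sites(range(1, 31), seed=7))
```

Recording the seed alongside the site list lets an auditor reproduce the selection later.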

Protocol: Laboratory Data Acquisition & QC Integration

Objective: To generate analytical data with embedded QC that supports statistical analysis and regulatory audit.

Materials: Laboratory Information Management System (LIMS), CRMs, internal standards, QC check samples.

Procedure:

- LIMS Integration: Upon receipt, scan sample barcode into LIMS, initiating a pre-defined analytical workflow. The workflow automatically schedules necessary QC samples (method blanks, continuing calibration verification, laboratory control samples, duplicates).

- Analysis: For mass spectrometry-based analysis (e.g., LC-MS/MS for pharmaceutical residues):

- The injection sequence must follow: Initial Calibration → Method Blank → CCV → samples, with QC samples interspersed every 10 samples.

- Internal standards are added to every sample to correct for matrix effects.

- QC Acceptance Criteria: Pre-set rules in LIMS (e.g., CCV must be within ±15% of true value; sample duplicate RPD ≤20%). Any failure triggers automated flag and defined corrective action.
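The two example acceptance rules above (CCV within ±15% of the true value, duplicate RPD ≤20%) reduce to short calculations. This sketch shows one way such rules might be expressed; the function names are hypothetical and do not correspond to any particular LIMS API.

```python
def percent_recovery(measured: float, true_value: float) -> float:
    """Measured concentration as a percentage of the known (true) value."""
    return 100.0 * measured / true_value


def ccv_passes(measured: float, true_value: float, tolerance_pct: float = 15.0) -> bool:
    """Continuing calibration verification: recovery must be within 100 ± tolerance."""
    return abs(percent_recovery(measured, true_value) - 100.0) <= tolerance_pct


def relative_percent_difference(a: float, b: float) -> float:
    """RPD between a sample and its duplicate: |a - b| / mean(a, b) * 100."""
    mean = (a + b) / 2.0
    if mean == 0:
        return 0.0
    return 100.0 * abs(a - b) / mean


def duplicate_passes(a: float, b: float, max_rpd: float = 20.0) -> bool:
    """Duplicate pair is acceptable when RPD does not exceed the limit."""
    return relative_percent_difference(a, b) <= max_rpd


if __name__ == "__main__":
    print(ccv_passes(10.9, 10.0))        # 109% recovery
    print(duplicate_passes(10.0, 13.0))  # RPD of about 26%
```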

Visualization: Aligned DQA Workflow

Diagram Title: Integrated DQA Workflow for Multi-Stakeholder Alignment

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Materials for Ecotoxicological DQA

| Item | Function in DQA | Relevance to Stakeholder Alignment |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides traceable calibration and verifies method accuracy. Fundamental for quantifying uncertainty. | Scientist: Ensures data accuracy. Regulator: Mandatory for GLP compliance. Sustainability: Underpins credible claims. |

| Stable Isotope-Labeled Internal Standards | Compensates for matrix effects and analyte loss during sample prep in LC-MS/MS. Improves precision. | Scientist: Critical for precise quantification in complex matrices. Regulator: Expected for high-quality bioanalytical data. |

| Performance Evaluation (PE) Samples | Blind samples of known concentration provided by external proficiency schemes (e.g., EQuAS). Tests lab competency. | Scientist: Benchmarks lab performance. Regulator: Independent proof of data reliability. |

| DNA/RNA Preservation Reagents (e.g., RNAlater) | Stabilizes genetic material from environmental samples for metabarcoding studies. Preserves sample integrity. | Scientist: Enables high-integrity genomic data for biodiversity assessment. Sustainability: Key for TNFD-related genetic indicator data. |

| Chain of Custody Kits (Barcodes, Seals, Logs) | Ensures sample identity and integrity from collection to analysis. Creates auditable trail. | Regulator: Core ALCOA+ requirement. All: Prevents data integrity failures. |

From Theory to Field: Practical DQA Methodologies for Ecological Monitoring Programs

Data Quality Assurance (DQA) is a systematic process to ensure data are fit for their intended use, encompassing planning, implementation, and assessment. Within ecological monitoring and drug development, DQA begins at the foundational stages of experimental and sampling design. This guide outlines technical strategies to embed quality a priori, preventing costly errors and irreproducible results.

Foundational DQA Principles in Design

Key Concepts

- Accuracy vs. Precision: Accuracy (closeness to true value) is prioritized in calibration and reference standards. Precision (repeatability) is addressed through replication.

- Bias Minimization: Systematic error is controlled via randomization, blinding, and appropriate controls.

- Representativeness: The sample must accurately reflect the target population (e.g., ecosystem, patient cohort).

Quantitative Design Targets

Establishing quantitative targets for data quality before data collection is critical.

Table 1: Common Data Quality Objectives (DQOs) in Design

| DQA Metric | Target in Ecological Monitoring | Target in Pre-Clinical Drug Development | Primary Design Control Mechanism |

|---|---|---|---|

| Measurement Uncertainty | ≤ 20% RSD for key analytes (e.g., nutrient concentrations) | ≤ 15% RSD for pharmacokinetic (PK) assays | Instrument calibration, replication level |

| Limit of Detection (LOD) | Sufficient to detect pollutants at 1/10th regulatory limit | Sufficient to quantify drug at 1/5th of C~min~ | Assay optimization, sample prep method |

| Statistical Power (1-β) | ≥ 0.80 to detect a 30% population change | ≥ 0.90 to detect a 25% treatment effect | Sample size calculation, effect size estimation |

| Type I Error Rate (α) | 0.05 | 0.05 (or adjusted for multiplicity) | Statistical hypothesis framework |

| Sample Contamination Risk | < 5% probability | < 1% probability (e.g., cross-contamination) | Field/lab protocol, physical separation |
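The power and error-rate targets in the table feed directly into a sample size calculation. As a rough sketch, assuming a two-sided comparison of two group means, a normal approximation, and variability expressed as a coefficient of variation (the CV of 0.5 below is a hypothetical value, not one given in this guide):

```python
import math
from statistics import NormalDist


def samples_per_group(relative_effect: float, cv: float,
                      power: float = 0.80, alpha: float = 0.05) -> int:
    """Approximate n per group to detect a relative change in the mean.

    Uses n = 2 * ((z_{1-alpha/2} + z_{power}) * CV / effect)^2, rounded up.
    This is a planning approximation, not a substitute for a full power analysis.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) * cv / relative_effect) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Ecological target: power 0.80 to detect a 30% change.
    print(samples_per_group(0.30, cv=0.5, power=0.80))
    # Pre-clinical target: power 0.90 to detect a 25% effect.
    print(samples_per_group(0.25, cv=0.5, power=0.90))
```

Because the required n scales with the square of CV divided by effect size, a pilot estimate of variability is usually the most influential input.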

Experimental Design Protocols for DQA

Protocol: Randomized Block Design for Field Ecology

Objective: Control for spatial gradient (e.g., soil moisture, altitude) bias when testing a treatment effect.

- Define Blocking Factor: Identify major environmental gradient. Divide study area into homogeneous blocks along this gradient.

- Randomization within Block: Randomly assign all treatment levels (e.g., fertilizer types, disturbance regimes) to plots within each block.

- Replication: Each treatment must appear once per block. Multiple blocks constitute replication.

- Analysis: Use ANOVA with Block as a random effect to partition variance and increase test sensitivity.
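The randomization step of this design can be scripted so that the plot layout is reproducible and documented. A minimal sketch, with hypothetical block and treatment labels:

```python
import random


def randomized_block_layout(blocks, treatments, seed=None):
    """Assign every treatment once per block, in an independent random order.

    Returns a dict mapping block -> list of treatments by plot position.
    """
    rng = random.Random(seed)  # record the seed in the study metadata
    layout = {}
    for block in blocks:
        order = list(treatments)
        rng.shuffle(order)  # a fresh shuffle for each block
        layout[block] = order
    return layout


if __name__ == "__main__":
    plan = randomized_block_layout(
        blocks=["low_elevation", "mid_elevation", "high_elevation"],
        treatments=["control", "fertilizer_A", "fertilizer_B"],
        seed=2024,
    )
    for block, order in plan.items():
        print(block, order)
```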

Protocol: Blinded, Placebo-Controlled Dose-Response Study

Objective: Unbiased assessment of compound efficacy and toxicity.

- Randomization: Animals or subjects are randomly assigned to treatment groups (vehicle, low/med/high dose).

- Blinding (Masking): Technicians administering treatments and assessing outcomes are unaware of group assignments. A third party holds the key.

- Control Groups: Include a vehicle control (placebo) and, if relevant, a positive control (known active compound).

- Dosing: Use a standardized volume/weight-based protocol. Document preparation chain-of-custody.

- Endpoint Measurement: Define primary endpoints a priori. Use validated assays with established SOPs.

Sampling Design Protocols for DQA

Protocol: Stratified Random Sampling for Ecosystem Assessment

Objective: Ensure all subpopulations (strata) of interest are adequately represented.

- Define Strata: Map distinct habitat types (e.g., forest, wetland, grassland) using GIS.

- Allocate Effort: Determine sample allocation (proportional to stratum area or variance).

- Random Site Selection: Within each stratum, generate random GPS coordinates for sampling points.

- Field Execution: Navigate to coordinates. If a point is inaccessible, select the nearest feasible location and document reason.
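The allocation and site-selection steps can be sketched as follows. This example assumes proportional-to-area allocation and, as a simplification, draws points from a rectangular bounding box rather than from the true stratum polygon (a real workflow would clip candidates to the GIS polygon). All names and values are hypothetical.

```python
import random


def proportional_allocation(stratum_areas, total_samples):
    """Split total_samples across strata in proportion to area.

    Uses the largest-remainder method so the counts sum exactly to total_samples.
    """
    total_area = sum(stratum_areas.values())
    exact = {s: total_samples * a / total_area for s, a in stratum_areas.items()}
    alloc = {s: int(x) for s, x in exact.items()}
    leftover = total_samples - sum(alloc.values())
    by_remainder = sorted(exact, key=lambda s: exact[s] - alloc[s], reverse=True)
    for s in by_remainder[:leftover]:
        alloc[s] += 1
    return alloc


def random_points_in_bbox(n, min_lon, min_lat, max_lon, max_lat, seed=None):
    """Draw n (lon, lat) candidate points uniformly within a bounding box."""
    rng = random.Random(seed)
    return [(rng.uniform(min_lon, max_lon), rng.uniform(min_lat, max_lat))
            for _ in range(n)]


if __name__ == "__main__":
    areas_ha = {"forest": 50, "wetland": 30, "grassland": 20}
    print(proportional_allocation(areas_ha, 20))
    print(random_points_in_bbox(3, -1.0, 50.0, -0.5, 50.5, seed=11))
```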

Protocol: Longitudinal Bio-sampling for PK/PD Studies

Objective: Generate high-quality time-series data for pharmacokinetic (PK) and pharmacodynamic (PD) modeling.

- Time Point Selection: Based on pilot data or literature, select points to capture absorption, distribution, metabolism, and excretion phases.

- Sample Volume & Matrix: Define max allowable blood volume per subject/time period. Specify matrix (plasma, serum, tissue) and anti-coagulant.

- Standardized Processing: Implement strict, time-critical processing SOPs (e.g., centrifuge within 30 min at 4°C).

- Chain of Custody: Log sample from collection, through processing, storage (-80°C), to analysis. Track freeze-thaw cycles.

Visualizing DQA Integration in Study Design

Diagram 1: DQA in the Study Design Workflow

The Scientist's Toolkit: Key Research Reagent & Material Solutions

Table 2: Essential Research Reagents & Materials for DQA-Centric Studies

| Item Category | Specific Example(s) | Primary Function in DQA |

|---|---|---|

| Certified Reference Materials (CRMs) | NIST Standard Reference Materials (SRMs), Certified analyte standards. | Calibration and verification of instrument accuracy; trueness checks. |

| Internal Standards (IS) | Stable isotope-labeled analogs (e.g., ¹³C, ²H) for LC-MS/MS; foreign proteins for ELISA. | Correct for variability in sample preparation and instrumental analysis; improves precision. |

| Quality Control (QC) Samples | Pooled biological QC (study-specific), commercial QC sera, fortified field blanks. | Monitor assay stability and precision across batches; detect drift. |

| Environmental Samplers | Passive samplers (POCIS, SPMDs), automated water samplers (ISCO). | Provide time-integrated samples, reduce temporal variability, standardize collection. |

| Barcode/LIMS System | Pre-printed barcoded tubes, Laboratory Information Management System (LIMS). | Ensures sample traceability, prevents misidentification, automates data logging. |

| Validated Assay Kits | FDA-cleared ELISA kits, qPCR kits with MIQE compliance. | Provide predefined performance characteristics (LOD, LOQ, range), reducing validation burden. |

| Blinding Supplies | Opaque capsules for diet dosing, coded vehicle solutions. | Enables proper masking to minimize observer bias in treatment studies. |

In ecological monitoring and drug development research, data integrity is paramount. Standard Operating Procedures (SOPs) are the foundational framework that ensures the precision, accuracy, reproducibility, and traceability of data from collection to analysis. They are the critical link between field observations or lab bench work and high-quality, defensible scientific conclusions. This guide details the creation and enforcement of SOPs as a core component of a data quality assurance (QA) system, mitigating variability and error introduced by human, environmental, and instrumental factors.

The Imperative for SOPs: A Quantitative View

The impact of procedural standardization on data quality is measurable. The following table summarizes key findings from recent studies on error reduction and efficiency gains.

Table 1: Impact of SOP Implementation on Research Data Quality and Operational Efficiency

| Metric Category | Scenario Without SOPs | Scenario With SOPs | % Improvement / Reduction | Source Context |

|---|---|---|---|---|

| Data Entry Error Rate | 4.2% manual transcription | 0.8% using SOP-mandated double-entry | ~81% reduction | Clinical sample logging (2023 audit) |

| Inter-operator Variability | 22% CV in cell counting | 7% CV with calibrated SOP | ~68% reduction | In vitro bioassay (2024 study) |

| Sample Processing Time | 45 ± 12 minutes per sample | 28 ± 3 minutes per sample | ~38% time reduction | Field soil core processing (2023) |

| Protocol Deviation Rate | 31% of assays | 6% of assays | ~81% reduction | High-throughput screening lab (2024) |

| Equipment Calibration Drift | Detected in 15% of monthly checks | Detected in 4% of checks with SOP schedule | ~73% reduction | Environmental sensor network (2023) |

SOP Creation: A Stepwise Methodology

Phase 1: Development and Documentation

- Identify Critical Process: Map the research workflow. Priority goes to processes prone to high variability, safety risks, or those directly generating primary data (e.g., soil sampling, ELISA, RNA extraction).

- Assemble a Drafting Team: Include a lead scientist, a senior technician, and a quality assurance officer. Incorporate end-user perspective.

- Define Scope and Objectives: Clearly state the SOP's purpose, applicable range, and personnel roles.

- Document the Procedure Sequentially:

- Materials & Reagents: Detailed specifications (see Scientist's Toolkit).

- Preparatory Steps: Calibration, safety precautions, reagent preparation.

- Step-by-Step Instructions: Use active voice, imperative mood ("Label the tube," "Record the time"). Specify tolerances (e.g., "Incubate for 30 min ± 2 min").

- Data Recording: Mandate what, where, and how to record. Use controlled forms or electronic capture.

- Troubleshooting & Acceptance Criteria: List common issues and solutions. Define pass/fail criteria for the step or output.

- Review and Validate: The team performs the SOP as written in a pilot study. Measure outputs for consistency against predefined QA criteria.

- Approve and Version Control: Obtain formal approval. Assign a unique ID and version number. Establish a master list to retire outdated versions.

Phase 2: Core Experimental Protocol Example: Total Phosphorus Analysis in Water Samples (EPA Method 365.1+)

- Objective: Quantify total phosphorus (TP) in freshwater samples colorimetrically after persulfate digestion.

- Principle: All phosphorus forms are oxidized to orthophosphate, which reacts with ammonium molybdate and antimony potassium tartrate to form a phosphoantimonylmolybdate complex, reduced to a blue complex by ascorbic acid, measurable at 880 nm.

- Detailed Methodology:

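- Sample Preservation: Field SOP mandates acidification of the unfiltered sample to pH <2 with H2SO4 and storage at 4°C. Filter (0.45 µm) only when dissolved phosphorus fractions are the target, since filtration removes particulate phosphorus.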

- Digestion: a. Pipette 25.0 mL of well-mixed sample into a pre-labeled 50 mL glass digestion tube. b. Add 4.0 mL of acidified ammonium persulfate solution (prepared weekly). c. Autoclave at 121°C, 15 psi, for 30 minutes. Cool to room temperature.

- Color Development: a. Neutralize digestate to pH 5-7 with 1N NaOH, using phenol red indicator. b. Transfer 25.0 mL to a clean cuvette. c. Add 2.0 mL of combined reagent (ammonium molybdate, antimony potassium tartrate, sulfuric acid, ascorbic acid). Cap and mix thoroughly. d. Let stand for exactly 15 minutes at 20-25°C.

- Analysis: a. Zero spectrophotometer with a reagent blank. b. Measure absorbance of standards and samples at 880 nm. c. Calculate concentration from a 5-point calibration curve (0-100 µg P/L), r² ≥ 0.995 required.
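The final calculation step, fitting the 5-point calibration curve and enforcing r² ≥ 0.995 before any sample is quantified, can be sketched in a few lines. The absorbance values below are invented for illustration, and the function names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r_squared)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


def concentration(absorbance, slope, intercept):
    """Invert the calibration line: concentration in the units of the standards."""
    return (absorbance - intercept) / slope


if __name__ == "__main__":
    standards_ug_l = [0, 25, 50, 75, 100]              # µg P/L
    absorbances = [0.003, 0.151, 0.304, 0.449, 0.603]  # illustrative readings
    slope, intercept, r2 = fit_line(standards_ug_l, absorbances)
    if r2 < 0.995:
        raise ValueError(f"Calibration rejected: r^2 = {r2:.4f}")
    print(f"Sample at A = 0.210 is about {concentration(0.210, slope, intercept):.1f} µg P/L")
```

Raising an error when the curve fails, rather than only logging a warning, keeps out-of-specification calibrations from silently producing results.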

Visualization of SOP-Driven Workflows

Diagram 1: SOP-Integrated Research Data Pipeline

Diagram 2: SOP Enforcement & Continuous Improvement Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Field and Lab SOPs in Ecological & Pharmaceutical Research

| Item Category | Specific Example / Product | Primary Function in QA Context | Critical SOP Specification |

|---|---|---|---|

| Sample Stabilizer | RNAlater, Sulfuric Acid (for TP) | Preserves molecular integrity or chemical state from field to lab. Prevents analyte degradation. | Volume:sample ratio, temperature, maximum hold time. |

| Calibration Standards | NIST-traceable CRM for metals, Pharmacopeia APIs | Provides metrological traceability. Ensures accuracy and allows comparability across labs/studies. | Source, certification, preparation method, storage, expiration. |

| Enzymatic Assay Master Mix | Taq Polymerase Master Mix, Luciferase Assay System | Reduces pipetting variability and contamination risk in high-sensitivity assays (e.g., qPCR, reporter assays). | Thawing protocol, mixing method, aliquot size, freeze-thaw cycles. |

| Reference Biologicals | Cell Line with STR Profiling, Certified Reference Soil | Controls for biological response variability and matrix effects. Essential for inter-assay reproducibility. | Passage number, cultivation conditions, authentication schedule. |

| Data Integrity Tools | Electronic Lab Notebook (ELN), Barcode Labels & Scanner | Ensures attribution, timeliness, legibility, and traceability of original observations (ALCOA+ principles). | User authentication, audit trail, barcode format, scan verification step. |

SOP Enforcement and Auditing

Creation is futile without enforcement. A robust system includes:

- Training and Certification: Mandatory training on each SOP with documented competency assessment (e.g., a supervised demonstration).

- Regular Audits: Internal "spot-check" audits compare practice against the written SOP. Findings are tracked via a Corrective and Preventive Action (CAPA) system (see Diagram 2).

- Data Review: Supervisors review raw data notebooks or electronic files for compliance with recording SOPs.

- Culture of Quality: Leadership must champion SOP adherence as a non-negotiable element of scientific rigor, not bureaucratic overhead.

SOPs are the indispensable backbone supporting the entire edifice of data quality assurance in ecological monitoring and drug development. They transform best intentions into executable, consistent, and auditable actions. By investing in their meticulous creation, rigorous enforcement, and continual refinement, research teams convert operational discipline into the highest currency of science: trustworthy, high-quality data.

Instrument Calibration, Maintenance, and Verification Protocols for Reliable Measurements

Reliable measurements form the bedrock of high-quality ecological data, which in turn underpins robust environmental research, impact assessments, and pharmaceutical development reliant on natural products. This guide details the rigorous protocols necessary for ensuring instrumental data integrity, directly supporting the thesis that comprehensive data quality assurance is non-negotiable in ecological monitoring research. The principles herein are critical for researchers and scientists generating data for regulatory submission or foundational discovery.

Foundational Concepts: Calibration, Verification, and Maintenance

- Calibration: The process of comparing instrument readings to a known, traceable standard and adjusting the instrument to minimize measurement error. Establishes a quantitative relationship between the instrument's signal and the analyte concentration.

- Verification/Performance Qualification (PQ): The act of confirming that a previously calibrated instrument performs within specified tolerance limits for its intended application, using a second, independent standard.

- Maintenance: Scheduled activities (preventive) and unscheduled repairs (corrective) aimed at keeping equipment in optimal working condition and preventing drift or failure.

Core Protocols for Key Instrument Categories

Spectrophotometers (UV-Vis, Fluorescence)

Calibration Protocol (Wavelength Accuracy):

- Use a holmium oxide or didymium glass filter as a certified reference material (CRM).

- Scan the absorption peaks across the operational range (e.g., 279.4 nm, 360.9 nm, 536.2 nm for holmium oxide).

- Record the measured peak wavelengths.

- Calculate the deviation from the certified values. Adjust the instrument's alignment if deviations exceed manufacturer specifications (typically ±0.5 nm for UV-Vis).
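The deviation check in the last step is a simple comparison against the certified peak positions. In this sketch the certified values are the example holmium oxide peaks listed above, the measured values are hypothetical, and ±0.5 nm stands in for the manufacturer specification.

```python
def wavelength_deviations(certified_nm, measured_nm):
    """Signed deviation (measured minus certified) for each reference peak."""
    return [m - c for c, m in zip(certified_nm, measured_nm)]


def needs_adjustment(certified_nm, measured_nm, tolerance_nm=0.5):
    """True when any peak deviates from its certified value by more than the tolerance."""
    return any(abs(d) > tolerance_nm
               for d in wavelength_deviations(certified_nm, measured_nm))


if __name__ == "__main__":
    certified = [279.4, 360.9, 536.2]
    measured = [279.6, 360.7, 536.3]  # hypothetical scan results
    for c, d in zip(certified, wavelength_deviations(certified, measured)):
        print(f"{c} nm peak: deviation {d:+.2f} nm")
    print("Adjustment needed:", needs_adjustment(certified, measured))
```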

Verification Protocol (Photometric Accuracy):

- Prepare a series of potassium dichromate (K₂Cr₂O₇) solutions in 0.005 M H₂SO₄ at known concentrations (e.g., 30, 60, 90 mg/L).

- Measure absorbance at 235, 257, 313, and 350 nm.

- Compare measured absorbance values against established reference values (e.g., NIST standards). Acceptance criteria are typically within ±0.01 A.

Maintenance Checklist:

- Daily: Check and top up source lamp coolant if required; visually inspect for source drift.

- Monthly: Clean exterior optics and cuvette holders; run automatic diagnostic tests.

- Annually: Professional service for internal optics cleaning, source replacement, and comprehensive performance validation.

Chromatography Systems (HPLC, GC)

Calibration Protocol (Flow Rate Accuracy - HPLC):

- Disconnect the column and connect the outlet to a calibrated volumetric flask.

- Set the pump to a specific flow rate (e.g., 1.0 mL/min) with mobile phase (e.g., water).

- Collect eluent for a measured time (e.g., 10 minutes).

- Weigh the collected eluent and convert to volume using the density.

- Calculate the actual flow rate: Volume (mL) / Time (min). Adjust pump calibration if outside ±1% of set point.
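The gravimetric flow check amounts to a mass-to-volume conversion followed by a tolerance test. A short sketch follows; the collected mass is hypothetical, and 0.9982 g/mL is the density of water near 20 °C, which should be replaced with the value for the actual mobile phase and temperature.

```python
def flow_rate_ml_per_min(mass_g: float, density_g_per_ml: float, minutes: float) -> float:
    """Actual flow rate from the collected eluent mass, its density, and collection time."""
    volume_ml = mass_g / density_g_per_ml
    return volume_ml / minutes


def within_tolerance(actual: float, set_point: float, tolerance_pct: float = 1.0) -> bool:
    """True when the actual flow is within ±tolerance_pct of the set point."""
    return abs(actual - set_point) / set_point * 100.0 <= tolerance_pct


if __name__ == "__main__":
    flow = flow_rate_ml_per_min(mass_g=9.95, density_g_per_ml=0.9982, minutes=10)
    print(f"Measured flow: {flow:.4f} mL/min")
    print("Within ±1% of 1.0 mL/min:", within_tolerance(flow, 1.0))
```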

Verification Protocol (Retention Time Precision):

- Inject a standard mixture of analytes relevant to your assays (e.g., caffeine, phenol for HPLC; n-alkanes for GC) at least five times.

- Measure the retention time for each peak.

- Calculate the %RSD of the retention times. Acceptance criteria: %RSD < 0.5% for most applications.
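The %RSD criterion can be computed with the standard library alone. The retention times below are hypothetical; the five-injection minimum and the 0.5% limit come from the protocol above.

```python
import statistics


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) as a percentage."""
    if len(values) < 2:
        raise ValueError("At least two values are required")
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def retention_time_precise(times_min, max_rsd=0.5, min_injections=5):
    """True when enough replicate injections were made and %RSD is under the limit."""
    return len(times_min) >= min_injections and percent_rsd(times_min) < max_rsd


if __name__ == "__main__":
    caffeine_rt = [5.02, 5.03, 5.01, 5.02, 5.02]  # minutes, five replicate injections
    print(f"%RSD = {percent_rsd(caffeine_rt):.3f}")
    print("Pass:", retention_time_precise(caffeine_rt))
```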

Maintenance Checklist:

- Pre-run: Purge lines, check for leaks, monitor system pressure.

- Weekly: Clean or replace inlet filters, flush columns with appropriate storage solvent.

- Quarterly: Replace pump seals, clean or replace autosampler syringe, bake-out GC inlet liner.

Environmental Sensors (pH, Conductivity, Dissolved Oxygen)

Calibration Protocol (pH Meter):

- Use at least two NIST-traceable pH buffer solutions bracketing your expected sample range (e.g., pH 4.01, 7.00, 10.01).

- Rinse electrode with deionized water, blot dry.

- Immerse in first buffer, allow reading to stabilize, and calibrate ("Cal" point).

- Repeat for second (and third) buffer(s) ("Slope" adjustment).

- The instrument calculates the slope (should be 95-105%) and offset.
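The slope percentage reported by the meter compares the electrode's measured response (mV per pH unit) with the ideal Nernstian response, about 59.16 mV/pH at 25 °C. The sketch below reproduces that calculation for a two-buffer calibration; the millivolt readings are hypothetical, and real meters may apply additional temperature compensation.

```python
import math

GAS_CONSTANT = 8.314462618   # J/(mol*K)
FARADAY = 96485.33212        # C/mol


def nernst_slope_mv(temp_c: float = 25.0) -> float:
    """Ideal electrode response in mV per pH unit at the given temperature."""
    return 1000.0 * GAS_CONSTANT * (temp_c + 273.15) * math.log(10) / FARADAY


def slope_percent(mv_1, ph_1, mv_2, ph_2, temp_c=25.0):
    """Measured slope between two buffers as a percentage of the ideal slope."""
    measured = (mv_2 - mv_1) / (ph_2 - ph_1)
    return 100.0 * abs(measured) / nernst_slope_mv(temp_c)


if __name__ == "__main__":
    pct = slope_percent(mv_1=172.0, ph_1=4.01, mv_2=-2.0, ph_2=7.00)
    print(f"Slope: {pct:.1f}% of ideal")
    print("Acceptable (95-105%):", 95.0 <= pct <= 105.0)
```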

Verification Protocol (Post-Calibration Check):

- After calibration, immediately measure a third, different pH buffer (e.g., after calibrating with 4.01 & 7.00, measure pH 10.01).

- The measured value must be within ±0.05 pH units of the buffer's certified value.

Maintenance Checklist:

- Before/After each use: Rinse electrode with appropriate solution (DI water for pH, sample for DO), then store in the manufacturer-recommended storage solution.

- Monthly: Check and refill reference electrode electrolyte (if refillable); clean sensing membrane with recommended cleaner (e.g., 0.1 M HCl for protein fouling).

- Annually: Replace electrode if response is slow, slope is degraded, or verification fails.

Table 1: Typical Calibration Tolerances and Frequencies for Common Instruments

| Instrument | Calibration Parameter | Typical Tolerance | Recommended Frequency |

|---|---|---|---|

| Analytical Balance | Mass (Linearity) | ±0.1 mg (for 100g load) | Daily (with check weights) |

| UV-Vis Spectrophotometer | Wavelength Accuracy | ±0.5 nm | Quarterly |

| UV-Vis Spectrophotometer | Photometric Accuracy | ±0.01 A | Quarterly |

| pH Meter | Electrode Slope | 95-105% | Before each use |

| HPLC Pump | Flow Rate Accuracy | ±1% of set point | Quarterly |

| GC-MS | Mass Accuracy (Tuning) | ±0.1 amu | Daily/Weekly |

| Dissolved Oxygen Probe | Reading at 100% Saturation | ±1% of reading or ±0.1 mg/L | Before each use (1-pt cal) |

Table 2: Common Verification Standards and Their Applications

| Instrument Category | Verification Standard | Parameter Verified | Typical Target Value |

|---|---|---|---|

| Spectroscopy | Potassium Dichromate (NIST SRM 935a) | UV-Vis Absorbance | Certified A at specific λ |

| Spectroscopy | Strontium Chloride Solution | AAS/ICP Emission Intensity | Consistent Intensity |

| Chromatography | Caffeine/Phenol/Uracil Mix | HPLC System Suitability | Retention Time, Plate Count |

| Chromatography | n-Alkane Mix (C8-C20) | GC Retention Index | Linear RI progression |

| Environmental | Certified Conductivity Standard | Conductivity Meter | 84 µS/cm, 1413 µS/cm, etc. |

| Environmental | Zero Gas (N₂) & Span Gas (CO₂ in N₂) | Infrared Gas Analyzer | 0 ppm & known ppm value |

Integrated Quality Assurance Workflow

Diagram Title: Instrument Lifecycle QA Workflow

Diagram Title: Calibration vs. Verification Signal Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Calibration and Verification

| Item Name | Function & Rationale | Example/Notes |

|---|---|---|

| NIST-Traceable Buffer Solutions | Provide known pH values for calibrating and verifying pH meters. Essential for electrochemical accuracy. | pH 4.01, 7.00, 10.01. Must be fresh and uncontaminated. |

| Certified Reference Materials (CRMs) | Substances with one or more certified property values (e.g., concentration, absorbance). Used for ultimate method validation. | NIST SRM 1640a (Trace Elements in Water), ERM-CD201 (PAHs in soil). |

| Holmium Oxide Filter | A solid glass filter with sharp, known absorption peaks. Used for verifying wavelength accuracy of spectrophotometers. | Peak tolerances are typically ±0.5 nm for UV-Vis, stricter for fluorescence. |

| Potassium Dichromate (Acidic) | A stable, reliable standard for verifying photometric accuracy and linearity of UV-Vis spectrophotometers. | Prepared in 0.005 M H₂SO₄; known absorbance at specific wavelengths. |

| Chromatography System Suitability Mix | A mixture of compounds to test HPLC/GC system performance (retention time, resolution, peak shape, sensitivity). | Often includes uracil (for column void volume), caffeine, phenol, etc. |

| Conductivity Standard Solutions | KCl solutions with certified conductivity values at specified temperatures. Used to calibrate conductivity/TDS meters. | Common values: 84 µS/cm, 1413 µS/cm, 12.88 mS/cm. |

| Zero/Span Gases | Certified gas mixtures for calibrating and verifying gas analyzers (e.g., for CO2, CH4, N2O flux measurements). | "Zero" is pure N₂; "Span" is a known concentration of analyte in N₂. |

| Class E1 or E2 Calibration Weights | Mass standards of known, traceable mass for calibrating and checking analytical and microbalances. | Set should cover instrument's weighing range. Handle with gloves and forceps. |

Within ecological monitoring and drug development research, the integrity of scientific conclusions hinges on the quality of the underlying data. Robust data management—encompassing digital capture, secure storage, and version control—forms the foundational pillar of data quality assurance. This guide details technical best practices to ensure data remains accurate, traceable, and reproducible throughout the research lifecycle.

Digital Capture: Standardizing Data at the Source

Digital capture refers to the initial creation of machine-readable data, a critical point where errors can be introduced.

Best Practices:

- Use Structured Formats: Capture data directly into structured formats (e.g., CSV, HDF5) over unstructured notes. For field ecology, utilize mobile data collection apps with pre-defined schemas.

- Automated Sensor Data Ingestion: Implement pipelines that automatically ingest data from environmental sensors (e.g., water quality sondes, camera traps) with timestamp and calibration metadata.

- Metadata Capture: Adopt standards like Ecological Metadata Language (EML) to document the who, what, when, where, why, and how at the point of capture.

Table 1: Comparison of Digital Capture Methods in Ecological Research

| Method | Typical Format | Advantages | Risk to Data Quality |

|---|---|---|---|

| Manual Field Log | Paper Notebook | High flexibility, works offline | Transcription errors, physical degradation |

| Mobile Data App | Structured SQLite/CSV | Enforced validation, GPS tagging | Device failure, battery life |

| Automated Sensor | Binary/JSON stream | High temporal resolution, continuous | Data gaps from transmission failure |

| Lab Instrument Output | Proprietary + CSV | High precision, integrated metrics | Vendor lock-in, opaque formatting |

Experimental Protocol: High-Frequency Sensor Data Capture

Objective: To continuously monitor dissolved oxygen (DO) in a wetland ecosystem.

- Calibration: Pre-deploy and post-deploy calibration of YSI EXO2 sonde using certified standards.

- Deployment: Secure sonde at a fixed depth. Configure to log DO, temperature, and pressure at 15-minute intervals.

- Ingestion: Use a Raspberry Pi-based gateway with cellular modem to transmit data nightly via SFTP to a central server.

- Validation: Run automated script to flag values outside biologically plausible ranges (e.g., DO > 20 mg/L) for manual review.
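The validation step can be as simple as a range filter that sets suspect records aside for manual review without deleting them. In this sketch the record layout and field name are hypothetical; the 20 mg/L upper bound is the example threshold from the protocol, and the lower bound of 0 mg/L is an added assumption.

```python
def flag_out_of_range(records, field="do_mg_l", low=0.0, high=20.0):
    """Return the records whose value is missing or outside [low, high].

    Flagged records are kept for manual review, never dropped automatically.
    """
    flagged = []
    for record in records:
        value = record.get(field)
        # NaN also fails the chained comparison, so it is flagged as well.
        if value is None or not (low <= value <= high):
            flagged.append(record)
    return flagged


if __name__ == "__main__":
    readings = [
        {"timestamp": "2024-05-01T00:00", "do_mg_l": 8.2},
        {"timestamp": "2024-05-01T00:15", "do_mg_l": 23.5},  # implausibly high
        {"timestamp": "2024-05-01T00:30", "do_mg_l": None},  # transmission gap
    ]
    for record in flag_out_of_range(readings):
        print("Review:", record)
```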

Secure Storage: Ensuring Data Integrity & Accessibility

Secure storage protects data from loss, corruption, and unauthorized access while maintaining availability for analysis.

Best Practices:

- 3-2-1 Backup Rule: Maintain 3 copies of data, on 2 different media, with 1 copy offsite (e.g., local server, external drive, and encrypted cloud storage).

- Immutable Archiving: For raw data, use Write-Once-Read-Many (WORM) storage or create checksum-verified archival packages (e.g., using BagIt specification).

- Access Control: Implement role-based access control (RBAC) and enforce the principle of least privilege.
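Checksum verification, mentioned above for archival packages, can be illustrated with a plain SHA-256 manifest. This is a simplified stand-in for the idea and not an implementation of the BagIt specification; the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """SHA-256 hex digest of a file, read in chunks to handle large files."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its SHA-256 digest."""
    root = Path(root)
    return {p.relative_to(root).as_posix(): sha256_of(p)
            for p in sorted(root.rglob("*")) if p.is_file()}


def verify_manifest(root, manifest):
    """Return the files that are missing or whose content no longer matches."""
    current = build_manifest(root)
    return sorted(name for name, digest in manifest.items()
                  if current.get(name) != digest)
```

A manifest built at archive time and re-verified on a schedule gives early warning of silent corruption in any of the backup copies.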

Table 2: Storage Mediums for Research Data Lifecycle

| Storage Tier | Recommended Use | Example Solutions | Security Consideration |

|---|---|---|---|

| Active Working | Current analysis, collaboration | Network-Attached Storage (NAS), Cloud Buckets (S3) | End-to-end encryption, strict ACLs |

| Short-Term Backup | Recent version recovery | Local external drives, institution's backup server | Encryption at rest, regular integrity checks |

| Long-Term Archive | Raw/final published data | Tape libraries, Glacier/Archive Cloud, Dataverse | Geographic redundancy, format migration plan |

Version Control: Tracking Change and Enabling Reproducibility

Version control systems (VCS) are not just for code; they are essential for tracking changes to datasets, scripts, and documentation.

Best Practices:

- Git for Scripts and Documentation: Use Git (with platforms like GitHub or GitLab) for all analysis code, lab protocols, and manuscripts. Commit with descriptive messages.

- Git-LFS or DVC for Data: For versioning large datasets, use Git Large File Storage (LFS) or Data Version Control (DVC), which store data in a remote repository while keeping track of file hashes in Git.

- Provenance Logging: Maintain a machine-readable log (e.g., a JSON file) that links specific data versions to the code and parameters used to process them.
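A provenance entry of the kind described in the last item might look like the sketch below. The record fields are an assumed layout rather than a standard schema, and the code version string would normally be the Git commit hash of the analysis script.

```python
import hashlib
import json
from datetime import datetime, timezone


def provenance_record(data_bytes: bytes, code_version: str, parameters: dict) -> dict:
    """Link a specific data version to the code and parameters that processed it."""
    return {
        "data_sha256": hashlib.sha256(data_bytes).hexdigest(),
        "code_version": code_version,
        "parameters": parameters,
        "recorded_utc": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    raw = b"site,species,count\nA,Rana temporaria,12\n"
    record = provenance_record(raw, code_version="<git commit hash>",
                               parameters={"min_count": 5})
    # One JSON object per line keeps the log append-only and easy to diff.
    print(json.dumps(record, sort_keys=True))
```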

Experimental Protocol: Versioned Analysis Workflow

Objective: Process and analyze species abundance data from quarterly surveys.

- Initialize: Create a Git repository with `analysis_script.R`, `README.md`, and a `.gitattributes` file to manage data with Git-LFS.

- Track Data: Place raw `Q1_survey.csv` in the directory. Track with `git lfs track "*.csv"` and commit.

- Process: Run the script to clean the data, outputting `Q1_survey_cleaned.csv`. Commit both script and output.

- Iterate: For Q2 data, create a new branch (`feature/Q2-analysis`). Update the script if needed, process the new data, and merge back to the main branch.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust Data Management

| Tool / Reagent | Category | Function in Data Management |

|---|---|---|

| Open Data Kit (ODK) | Digital Capture | Toolkit for building mobile field data collection forms. |

| RStudio / JupyterLab | Analysis & Documentation | Integrated development environments that combine code, output, and narrative. |

| Git & GitHub/GitLab | Version Control | Distributed system for tracking changes and collaborative development. |

| Data Version Control (DVC) | Version Control | Open-source VCS specifically designed for large datasets and ML projects. |

| BagIt Packaging Tool | Secure Storage | Creates standardized, checksum-verified "bags" for data archiving and transfer. |

| Sensaline Logger | Digital Capture | Example of a robust, field-deployable environmental data logger. |

| Cryptomator | Secure Storage | Provides client-side encryption for cloud storage buckets. |

| Digital Object Identifier | Publishing | Persistent identifier for published datasets, ensuring permanent citability. |

Visualizing the Data Management Workflow

Diagram 1: Data quality assurance lifecycle in research

Diagram 2: Hybrid secure storage architecture for research data

Implementing rigorous practices in digital capture, secure storage, and version control is non-negotiable for ensuring data quality in high-stakes fields like ecological monitoring and drug development. This framework not only safeguards against data loss and corruption but also creates a transparent, auditable chain of custody from observation to publication. By integrating these best practices into the research workflow, scientists and researchers build a foundation of trust in their data, enabling reproducible and impactful science.

In ecological monitoring and environmental drug discovery, data forms the empirical bedrock for modeling ecosystem health, tracking biodiversity, and identifying bioactive compounds. Assuring the quality of this data—from field sensor readings to specimen metadata—is therefore non-negotiable. This guide details a three-tiered technical framework for data quality assurance (DQA), integrating sequential checks at collection, post-collection, and processing stages to produce research-ready datasets.

Tier 1: Real-time Field Audits

Real-time audits are proactive checks performed during data acquisition to prevent error propagation.

Experimental Protocol: In-situ Sensor Calibration & Cross-Verification

- Objective: To validate readings from primary environmental sensors (e.g., for pH, dissolved oxygen, temperature) against a calibrated standard in real-time.

- Methodology:

- At a predetermined frequency (e.g., every 10 sampling points or at start/end of each field day), deploy a set of certified, portable reference instruments.

- Simultaneously measure the target parameter with both the primary monitoring sensor and the reference instrument.

- Record the GPS coordinates, timestamp, and values from both devices.

- Calculate the immediate discrepancy. If the delta exceeds a pre-defined tolerance (see Table 1), halt sampling, troubleshoot (e.g., clean sensor, recalibrate), and flag the preceding batch of data since the last successful audit.

Data Presentation: Field Audit Tolerance Benchmarks

Table 1: Example tolerance thresholds for common ecological monitoring parameters.

| Parameter | Typical Sensor Type | Acceptable Real-time Delta (Audit vs. Field Sensor) | Common Source of Field Error |

|---|---|---|---|

| Water Temperature | Thermistor | ±0.2 °C | Sensor drift, biofouling |

| pH | Glass Electrode | ±0.3 pH units | Clogged junction, dried gel |

| Dissolved Oxygen | Optical/Clark Cell | ±0.5 mg/L | Membrane damage, stirring failure |

| Soil Moisture | TDR/Capacitance | ±3% VWC | Poor soil-sensor contact |
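The audit decision in the protocol above can be sketched as follows, using the tolerances from Table 1. The parameter keys, record layout, and example readings are hypothetical; the sketch only illustrates comparing the delta to a tolerance and flagging the batch collected since the last successful audit.

```python
# Tolerances copied from Table 1; the dictionary keys are hypothetical names.
TOLERANCES = {
    "water_temp_c": 0.2,
    "ph": 0.3,
    "dissolved_oxygen_mg_l": 0.5,
    "soil_moisture_vwc_pct": 3.0,
}


def audit_reading(parameter: str, field_value: float, reference_value: float) -> dict:
    """Compare a field sensor reading with the reference instrument."""
    delta = abs(field_value - reference_value)
    return {
        "parameter": parameter,
        "delta": round(delta, 3),
        "passed": delta <= TOLERANCES[parameter],
    }


def flag_since_last_pass(records: list[dict]) -> list:
    """Return IDs of records collected after the last passing audit,
    up to and including the record where an audit failed."""
    flagged, pending = [], []
    for rec in records:
        pending.append(rec["id"])
        outcome = rec.get("audit_passed")   # None when no audit was run here
        if outcome is True:
            pending = []                    # batch is trusted, start a new one
        elif outcome is False:
            flagged.extend(pending)         # whole batch becomes suspect
            pending = []
    return flagged


# Made-up readings: pH drifts by 0.4 units, temperature by 0.1 °C.
ph_audit = audit_reading("ph", field_value=7.9, reference_value=7.5)
temp_audit = audit_reading("water_temp_c", field_value=18.1, reference_value=18.0)

sampling_log = [
    {"id": 1, "audit_passed": True},
    {"id": 2},
    {"id": 3},
    {"id": 4, "audit_passed": ph_audit["passed"]},
    {"id": 5},
]
suspect_ids = flag_since_last_pass(sampling_log)
```

Flagging rather than deleting keeps the suspect batch available for later review, for example if recalibration shows the drift began only at the final sampling point.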

Visualization: Real-time Field Audit Workflow

Title: Real-time Field Audit & Correction Loop

Tier 2: Post-collection Reviews

This tier involves structured verification of data completeness and consistency immediately after fieldwork, before analysis.

Experimental Protocol: Sample Chain-of-Custody (CoC) and Metadata Reconciliation

- Objective: To ensure physical samples (e.g., water, soil, plant extracts) are perfectly matched to their digital metadata and have an unbroken custody trail.

- Methodology:

- Post-fieldwork, generate a manifest from the electronic field notebook (e.g., sample IDs, location, time, collector).

- Physically line up all collected samples in the lab.

- Perform a barcode/QR scan of each physical vial and reconcile against the digital manifest. Check for missing samples or IDs not in the manifest.

- Verify that all required metadata fields (e.g., habitat description, photographic log ID) are populated for each sample.

- Document any discrepancies in a Post-Collection Anomaly Log, which must be resolved before data cleaning.
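The reconciliation step lends itself to simple set comparisons. The sketch below assumes the manifest is available as a mapping from sample ID to metadata and the scans as a list of IDs; the required-field names and the sample data are hypothetical.

```python
# Hypothetical set of metadata fields that must be populated for every sample.
REQUIRED_FIELDS = ("location", "time", "collector",
                   "habitat_description", "photo_log_id")


def reconcile(manifest: dict[str, dict], scanned_ids: list[str]) -> dict[str, list[str]]:
    """Compare scanned sample IDs with the digital manifest."""
    manifest_ids = set(manifest)
    scanned = set(scanned_ids)
    incomplete = sorted(
        sample_id
        for sample_id, meta in manifest.items()
        if any(not meta.get(field) for field in REQUIRED_FIELDS)
    )
    return {
        "missing_physical_sample": sorted(manifest_ids - scanned),
        "not_in_manifest": sorted(scanned - manifest_ids),
        "incomplete_metadata": incomplete,
    }


def example_meta(**overrides) -> dict:
    """Build a fully populated, made-up metadata record."""
    meta = {
        "location": "Plot 7",
        "time": "2024-03-02T09:15",
        "collector": "Collector A",
        "habitat_description": "riparian",
        "photo_log_id": "IMG-0042",
    }
    meta.update(overrides)
    return meta


manifest = {
    "S-001": example_meta(),
    "S-002": example_meta(photo_log_id=""),   # required field left empty
    "S-003": example_meta(),                  # vial never scanned
}
anomalies = reconcile(manifest, scanned_ids=["S-001", "S-002", "S-004"])
```

Each non-empty list in `anomalies` corresponds to an entry class for the Post-Collection Anomaly Log.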

The Scientist's Toolkit: Research Reagent Solutions for Field & Lab QA

Table 2: Essential materials for ecological monitoring quality assurance.

| Item | Function in QA Process |

|---|---|

| NIST-Traceable Calibration Standards (e.g., pH buffers, conductivity solutions) | Provide authoritative reference points for sensor calibration during Tier 1 audits. |

| Blank & Spiked Field Samples | Transported to site; used to check for sample contamination (blank) and analyte recovery (spiked) in complex matrices. |

| Stable Isotope-Labeled Internal Standards (for metabolomics/proteomics) | Added immediately upon sample collection to correct for losses during later processing (Tier 3). |

| Electronic Field Notebook (EFN) with GPS/Time Sync | Ensures immutable, timestamped, geotagged data logging, critical for Tier 2 CoC review. |

| Lyophilizer (Freeze-Dryer) | Standardizes preservation of biological samples (soil, tissue) for downstream chemical analysis, minimizing degradation bias. |

Tier 3: Data Cleaning Workflows

A systematic, rule-based, and documented process to transform raw, validated data into an analysis-ready dataset.

Experimental Protocol: Automated Anomaly Detection & Imputation Reporting

- Objective: To programmatically identify statistical outliers, handle missing data, and enforce consistency, while creating a full audit trail of all changes.

- Methodology:

- Rule-Based Flagging: Apply logical rules (e.g., `soil_moisture_pct > 100 → FLAG`) and statistical thresholds (e.g., Median Absolute Deviation for outliers).

- Contextual Validation: Cross-check related variables (e.g., if `species_name` is "Rainforest Tree," then `habitat_type` must not be "Alpine Tundra").

- Controlled Imputation: For missing or flagged data, apply pre-defined methods (e.g., k-nearest neighbors for spatial data, carry-forward for temporal logs). Crucially, never impute without creating an `imputation_flag` column.

- Versioned Scripts: Execute all cleaning steps via version-controlled scripts (e.g., R/Python) that output a cleaned dataset and a changelog detailing every alteration.
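A minimal sketch of the flagging and imputation steps is given below. It assumes a single temporal series of soil moisture readings, uses the common modified z-score form of the Median Absolute Deviation test (constant 0.6745, cutoff 3.5), and applies carry-forward imputation. The readings are made up, and the cutoff is a convention rather than a value prescribed by this guide.

```python
import statistics


def mad_outlier_flags(values, threshold=3.5):
    """Flag values whose modified z-score (based on MAD) exceeds the threshold."""
    present = [v for v in values if v is not None]
    median = statistics.median(present)
    mad = statistics.median([abs(v - median) for v in present])
    if mad == 0:
        return [False] * len(values)   # no spread, so nothing can be scored
    # 0.6745 rescales MAD so the score is comparable to a z-score
    # when the data are approximately normal.
    return [
        v is not None and abs(0.6745 * (v - median) / mad) > threshold
        for v in values
    ]


def carry_forward(values, flags):
    """Replace missing or flagged values with the last valid value.
    Returns the cleaned series and a parallel imputation_flag series."""
    cleaned, imputation_flag = [], []
    last_good = None
    for value, is_flagged in zip(values, flags):
        if value is None or is_flagged:
            cleaned.append(last_good)            # stays None if nothing to carry
            imputation_flag.append(last_good is not None)
        else:
            cleaned.append(value)
            imputation_flag.append(False)
            last_good = value
    return cleaned, imputation_flag


# Made-up readings: one gap and one physically impossible value.
soil_moisture_pct = [21.0, 22.5, None, 23.0, 140.0, 22.0]

rule_flags = [v is not None and v > 100 for v in soil_moisture_pct]
stat_flags = mad_outlier_flags(soil_moisture_pct)
flags = [r or s for r, s in zip(rule_flags, stat_flags)]

cleaned, imputation_flag = carry_forward(soil_moisture_pct, flags)
```

Keeping `imputation_flag` alongside the cleaned values lets downstream analyses exclude or down-weight imputed points instead of treating them as observations.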

Visualization: Tiered Data Quality Assurance Pipeline

Title: Three-Tier Ecological Data QA Pipeline

Data Presentation: Common Data Cleaning Rules & Actions

Table 3: Examples of structured cleaning rules for ecological data.

| Rule Type | Example Rule | Action Taken | Audit Log Entry |

|---|---|---|---|

| Logical | `if (depth_m > 0) & (light_intensity > surface_light)` | Flag as `ILLOGICAL_LIGHT` | Row ID [X]: Light > surface at depth. Set to NA. |

| Domain | `air_temp_c` not between -40 and 50 | Set to NA | Row ID [Y]: Temp -45°C out of range. |

| Missingness | `missing(sample_volume)` | Impute via `median(plot_samples)` | Row ID [Z]: Volume missing. Imputed with median 15.2ml. |

| Temporal | `sample_time` before `collection_trip_start` | Flag as `TIME_ANOMALY` | Row ID [A]: Sample time precedes trip start. Time column set to NA. |
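To show how a rule, its action, and its audit log entry fit together, the sketch below applies the Domain and Temporal rules from Table 3 and records every alteration. The row layout, log fields, and dates are assumptions for illustration; the raw rows are left untouched so the original values remain recoverable.

```python
from datetime import datetime


def apply_rules(rows, trip_start):
    """Return (cleaned_rows, audit_log); the input rows are not modified."""
    cleaned, audit_log = [], []
    for row in rows:
        new_row = dict(row)

        # Domain rule: air temperature must lie between -40 and 50 °C.
        temp = new_row.get("air_temp_c")
        if temp is not None and not (-40 <= temp <= 50):
            audit_log.append({
                "row_id": row["row_id"], "rule": "DOMAIN",
                "column": "air_temp_c", "old_value": temp, "new_value": None,
            })
            new_row["air_temp_c"] = None

        # Temporal rule: a sample cannot predate the start of the trip.
        sample_time = new_row.get("sample_time")
        if sample_time is not None and sample_time < trip_start:
            audit_log.append({
                "row_id": row["row_id"], "rule": "TIME_ANOMALY",
                "column": "sample_time",
                "old_value": sample_time.isoformat(), "new_value": None,
            })
            new_row["sample_time"] = None

        cleaned.append(new_row)
    return cleaned, audit_log


# Made-up rows and trip start date.
raw_rows = [
    {"row_id": "Y", "air_temp_c": -45.0, "sample_time": datetime(2024, 3, 2, 9, 0)},
    {"row_id": "A", "air_temp_c": 12.5, "sample_time": datetime(2024, 2, 28, 9, 0)},
]
cleaned_rows, audit_log = apply_rules(raw_rows, trip_start=datetime(2024, 3, 1))
```

Because every change carries the row ID, the rule name, and the old value, the audit log alone is enough to reverse any alteration that later proves unjustified.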

This tiered framework—spanning from preventative field audits to rigorous post-collection reviews and transparent, scripted cleaning—creates a robust defense against data corruption. For ecological monitoring and drug discovery research, it ensures that downstream models, biodiversity assessments, and compound identifications are built upon a foundation of verifiably high-quality data, directly supporting reproducible and impactful science.

Diagnosing and Solving Common Data Quality Pitfalls in Ecological Studies

As part of a broader thesis introducing data quality assurance in ecological monitoring research, this technical guide details the critical symptoms, or "red flags," that indicate compromised data quality in ecological datasets. For researchers, scientists, and drug development professionals who use ecological data for biodiscovery or environmental baselining, recognizing these symptoms is the essential first step in implementing robust quality assurance protocols.

Core Symptoms and Quantitative Indicators

Poor data quality manifests through specific, measurable symptoms. The following table summarizes the primary red flags, their common causes, and potential impacts on analysis.

Table 1: Core Symptoms of Poor Data Quality in Ecological Datasets

| Symptom Category | Specific Red Flags | Common Causes | Impact on Analysis |

|---|---|---|---|

| Completeness | High percentage of missing values (>5-10%); Systematic absence of data from specific sites, times, or taxa. | Sensor failure, sampler error, inconsistent recording protocols. | Introduces bias, reduces statistical power, compromises model training. |

| Consistency & Standardization | Inconsistent taxonomic nomenclature; Mixed units (e.g., ppm vs. ppb); Varied date formats. | Multi-investigator projects, legacy data integration, lack of controlled vocabularies. | Hampers data integration and aggregation, leads to erroneous calculations. |

| Accuracy & Precision | Values outside plausible biological/physicochemical ranges (e.g., negative abundance, pH>14); High variance in replicate samples. | Calibration drift, misidentification, contamination, low instrument precision. | Produces invalid conclusions, obscures true ecological signals. |

| Temporality | Illogical time sequences; Unmarked timezone differences; Inappropriate temporal granularity for the process studied. | Logger clock errors, improper metadata recording. | Renders time-series analysis invalid, confuses cause-effect relationships. |

| Spatial Integrity | Coordinates in incorrect location (e.g., ocean for forest plot); Imprecise or inaccurate georeferencing; Mismatched coordinate reference systems. | GPS error, transcription mistakes, missing projection metadata. | Invalidates spatial models and GIS-based analyses, corrupts habitat mapping. |
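The Completeness row can be turned into a quick screening check. The sketch below computes the fraction of missing values per column and looks for columns that are entirely absent within one group, which hints at systematic rather than random gaps. The 10% threshold follows the upper end of the range in Table 1; the column names and rows are made up.

```python
def missing_fraction(rows, columns):
    """Fraction of rows in which each column is absent, None, or empty."""
    if not rows:
        return {col: 0.0 for col in columns}
    return {
        col: sum(1 for row in rows if row.get(col) in (None, "")) / len(rows)
        for col in columns
    }


def completeness_report(rows, columns, group_key, threshold=0.10):
    """Flag columns above the missingness threshold and groups with no data at all."""
    overall = missing_fraction(rows, columns)

    groups = {}
    for row in rows:
        groups.setdefault(row[group_key], []).append(row)
    by_group = {g: missing_fraction(members, columns) for g, members in groups.items()}

    return {
        "overall": overall,
        "columns_over_threshold": sorted(
            col for col, frac in overall.items() if frac > threshold
        ),
        # A column missing for every record of a group suggests systematic absence.
        "systematic_gaps": sorted(
            (group, col)
            for group, fractions in by_group.items()
            for col, frac in fractions.items()
            if frac == 1.0
        ),
    }


# Made-up survey rows: site B never recorded pH.
survey_rows = [
    {"site": "A", "ph": 7.1, "do_mg_l": 8.2},
    {"site": "A", "ph": 7.3, "do_mg_l": 8.0},
    {"site": "B", "ph": None, "do_mg_l": 7.9},
    {"site": "B", "ph": None, "do_mg_l": 8.1},
]
report = completeness_report(survey_rows, ["ph", "do_mg_l"], group_key="site")
```

Separating overall missingness from group-level gaps matters because the two call for different responses: random gaps may be imputed, whereas a site with no pH data at all points to a sensor or protocol failure.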

Methodologies for Detection and Validation

Protocol for Automated Range and Plausibility Checks

- Objective: To programmatically identify values that fall outside scientifically acceptable limits.

- Procedure:

- Define Acceptable Ranges: For each parameter (e.g., dissolved oxygen, species count), establish minimum and maximum plausible values based on literature and expert knowledge.

- Implement Script: Use R or Python to flag records violating these thresholds.

- Review & Action: Manually review flagged records to determine if they are errors (requiring correction/removal) or rare, valid outliers.
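A range check of this kind might look like the following Python sketch. The two ranges reflect the implausible values named in Table 1 (pH above 14, negative abundance); the column names and example records are hypothetical, and real limits should come from the literature for each parameter.

```python
# Plausible ranges as (minimum, maximum); None means no bound is assumed.
PLAUSIBLE_RANGES = {
    "ph": (0.0, 14.0),
    "abundance": (0, None),
}


def range_flags(rows):
    """Return one entry per value that falls outside its plausible range.
    Nothing is modified; the output is a worklist for manual review."""
    flagged = []
    for index, row in enumerate(rows):
        for column, (low, high) in PLAUSIBLE_RANGES.items():
            value = row.get(column)
            if value is None:
                continue   # missing values are a completeness issue, not a range issue
            too_low = low is not None and value < low
            too_high = high is not None and value > high
            if too_low or too_high:
                flagged.append({"row": index, "column": column, "value": value})
    return flagged


# Made-up records containing two implausible values.
records = [
    {"ph": 7.2, "abundance": 14},
    {"ph": 14.6, "abundance": 3},
    {"ph": 6.8, "abundance": -2},
    {"ph": None, "abundance": 0},
]
to_review = range_flags(records)
```

The function only reports violations. Deciding whether a flagged value is an error or a rare valid observation stays with the reviewer, as the final step of the procedure requires.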

Protocol for Taxonomic Name Standardization and Resolution

- Objective: To ensure consistency in species identification across a dataset.

- Procedure:

- Compile Raw Names: Aggregate all species binomials and common names from the dataset.

- Resolve via Authority: Use an API-driven tool (e.g., `taxize` R package, GBIF Name Parser) to match raw names to a canonical taxonomic backbone (e.g., World Register of Marine Species, ITIS).

- Flag Discrepancies: Document unresolved names for expert review. Replace all raw names with accepted canonical names and unique taxon IDs.

- Report: Generate a report listing synonym resolutions and unresolved cases.
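The resolve-and-report logic can be sketched without network access by letting a small local lookup table stand in for the authority service. This is an assumption made to keep the example self-contained: in practice the lookup would be a call to one of the tools named above. The table entries are illustrative and the taxon IDs are invented.

```python
# Hypothetical stand-in for an authority lookup: normalized raw name ->
# (accepted canonical name, taxon ID). The IDs are invented for this example.
AUTHORITY = {
    "quercus robur": ("Quercus robur", "TAXON-0001"),
    "quercus pedunculata": ("Quercus robur", "TAXON-0001"),   # synonym
    "english oak": ("Quercus robur", "TAXON-0001"),           # common name
}


def normalize(name: str) -> str:
    """Collapse whitespace and lowercase so trivial variants match."""
    return " ".join(name.split()).lower()


def resolve_names(raw_names):
    """Split raw names into resolved (with canonical name and ID) and unresolved."""
    resolved, unresolved = {}, []
    for raw in raw_names:
        match = AUTHORITY.get(normalize(raw))
        if match is None:
            unresolved.append(raw)   # left for expert review, never guessed
        else:
            resolved[raw] = {"canonical_name": match[0], "taxon_id": match[1]}
    return resolved, unresolved


raw_names = ["Quercus  robur", "quercus pedunculata", "English oak", "Qercus robur"]
resolved, unresolved = resolve_names(raw_names)
```

Keeping the raw name as the key of `resolved` preserves the mapping from what was recorded to what was accepted, which is the content of the synonym-resolution report.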

Visualizing Data Quality Assessment Workflows

The logical flow for systematic data quality screening is outlined below.

Data Quality Assessment Screening Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Field and Lab Quality Control

| Item | Function in Quality Assurance |

|---|---|