From Noise to Knowledge: Advanced Strategies for Quantifying Uncertainty in Citizen Science for Biomedical Research

Abstract

Citizen science offers unprecedented data collection potential for environmental epidemiology, drug safety monitoring, and public health surveillance. However, inherent data variability introduces significant uncertainty, limiting its adoption in rigorous biomedical research. This article provides a comprehensive framework for researchers and drug development professionals to quantify, analyze, and mitigate this uncertainty. We explore foundational sources of error in citizen-generated data, detail statistical and machine learning methodologies for uncertainty quantification (UQ), present troubleshooting strategies for common data quality issues, and validate these approaches through comparative case studies in clinical and environmental health contexts. Our aim is to equip scientists with the tools needed to transform noisy, crowd-sourced observations into robust, actionable evidence for research and development.

Understanding the Landscape: Core Sources and Types of Uncertainty in Citizen Science Data

Technical Support Center

Q1: We are observing bird species counts. Our volunteers have varying skill levels. How do we quantify the uncertainty introduced by misidentification?

A: This is a classic source of epistemic uncertainty (reducible through improved knowledge). Implement a Sub-Sampling Validation Protocol.

Experimental Protocol: Expert Validation Sub-Sampling

- Random Stratified Sampling: From the full volunteer dataset, randomly select 10-20% of observations, stratified by volunteer ID and reported species rarity.

- Expert Review: A panel of domain experts (ornithologists) independently reviews these selected observations, using the original location/time data and, if available, volunteer-submitted photos/audio.

- Confusion Matrix Analysis: Cross-tabulate the species reported by volunteers against the expert-validated species (ground truth), both overall and per volunteer.

- Uncertainty Quantification: Calculate metrics per volunteer and per species (see table below).

Table 1: Metrics for Quantifying Misidentification Uncertainty

| Metric | Formula | Interpretation |
|---|---|---|
| Observer-specific Accuracy | (Correct IDs by Observer / Total IDs by Observer) | Measures individual volunteer reliability. |
| Species-specific Mis-ID Rate | (Incorrect IDs of Species X / Total Reported IDs of Species X) | Highlights commonly confused species. |
| Epistemic Uncertainty Score (EUS) | 1 - (Weighted Average Accuracy across all volunteers) | A single scalar (0-1) representing reducible uncertainty in the dataset. |
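The metrics in Table 1 can be computed directly from the expert-validated sub-sample. The sketch below is a minimal illustration; the record layout and function name are hypothetical, and it assumes the EUS weights are each volunteer's number of validated records (the protocol does not specify the weights), in which case EUS reduces to one minus the overall accuracy of the sub-sample.

```python
from collections import Counter

def misid_metrics(records):
    """records: (observer_id, reported_species, expert_species) tuples from the
    expert-validated sub-sample."""
    confusion = Counter()              # (expert_species, reported_species) -> count
    correct, total = Counter(), Counter()
    reported, wrong = Counter(), Counter()
    for observer, reported_sp, expert_sp in records:
        confusion[(expert_sp, reported_sp)] += 1
        total[observer] += 1
        reported[reported_sp] += 1
        if reported_sp == expert_sp:
            correct[observer] += 1
        else:
            wrong[reported_sp] += 1
    observer_accuracy = {o: correct[o] / n for o, n in total.items()}
    species_misid_rate = {s: wrong[s] / n for s, n in reported.items()}
    # Assumption: weight each observer by their number of validated records
    n_all = sum(total.values())
    weighted_accuracy = sum(observer_accuracy[o] * n for o, n in total.items()) / n_all
    eus = 1 - weighted_accuracy
    return confusion, observer_accuracy, species_misid_rate, eus
```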

Q2: Our environmental sensor data from volunteers shows inherent randomness in measurements, even at the same location. How do we separate this from observer bias?

A: You are describing aleatory uncertainty (inherent variability). Differentiate it from epistemic bias using a Controlled Replication Experiment.

Experimental Protocol: Paired Sensor Deployment

- Deployment: At a subset of fixed monitoring sites, deploy a calibrated, research-grade sensor (the "gold standard") alongside the volunteer-maintained sensor.

- Synchronous Data Collection: Collect simultaneous, time-series measurements (e.g., hourly pH, temperature, PM2.5) from both sensors over an extended period (e.g., 4 weeks).

- Data Partitioning:

  - Aleatory Variability: Analyze the distribution (mean, variance, range) of the research-grade sensor's readings. This approximates the true environmental variability, plus the reference instrument's own (small) measurement noise.

  - Epistemic Bias: Analyze the systematic difference (mean error, drift over time) between the volunteer sensor and the research-grade sensor readings.

Table 2: Separating Aleatory and Epistemic Uncertainty in Sensor Data

| Data Source | Statistical Analysis | Uncertainty Type Inferred |
|---|---|---|
| Research-Grade Sensor | Standard Deviation, Distribution Fitting (e.g., Normal, Weibull) | Aleatory: Inherent environmental variability. |
| Difference (Volunteer - Research) | Mean Error (Bias), Root Mean Square Error (RMSE), Time-series Drift Analysis | Epistemic: Systematic error due to sensor quality, placement, or maintenance. |

Q3: How can we model the combined effect of both uncertainty types to report a reliable confidence interval for a population trend (e.g., decline in a species)?

A: Employ a Bayesian Hierarchical Model (BHM) that explicitly includes parameters for both uncertainty types.

Experimental Protocol: Integrated Uncertainty Modeling

- Model Structure:

  - Observation Layer: $\text{Reported\_Count} \sim \text{Poisson}\left(\lambda \cdot \exp(\varepsilon_{\text{observer}} + \varepsilon_{\text{species}})\right)$, where $\varepsilon_{\text{observer}}$ is a random effect for volunteer skill (epistemic) and $\varepsilon_{\text{species}}$ is a random effect for species detectability (an aleatory/epistemic mix).

  - Process Layer: $\lambda \sim f(\text{Environmental Covariates}, \text{Temporal Trend})$, the "true" ecological process.

  - Parameter Layer: Priors for all uncertainty parameters.

- Inference: Use Markov Chain Monte Carlo (MCMC) sampling to estimate the posterior distributions of all parameters, including the temporal trend.

- Output: The trend is estimated with a 95% Credible Interval that inherently propagates both the aleatory variability in counts and the epistemic uncertainty in observer skill.
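Before fitting, it can help to simulate fake data from the model and check that the MCMC fit recovers the known trend. The sketch below draws counts from the observation and process layers only (it performs no inference); it assumes a single species, so the species effect is absorbed into the baseline rate, and all parameter values and names are illustrative, not recommendations.

```python
import math
import random
import statistics

def poisson_draw(lam, rng):
    """Knuth's multiplication method; adequate for the modest rates used here."""
    threshold = math.exp(-lam)
    k, p = 0, rng.random()
    while p > threshold:
        k += 1
        p *= rng.random()
    return k

def simulate_counts(n_years=10, n_observers=30, visits_per_year=20,
                    baseline=20.0, trend=-0.05, sd_observer=0.4, seed=1):
    """Fake (year, observer, count) data for a single species."""
    rng = random.Random(seed)
    # Observer random effects (volunteer skill), drawn once per volunteer
    eps_observer = [rng.gauss(0.0, sd_observer) for _ in range(n_observers)]
    data = []
    for year in range(n_years):
        lam = baseline * math.exp(trend * year)        # process layer: true log-linear trend
        for _ in range(visits_per_year):
            obs = rng.randrange(n_observers)
            rate = lam * math.exp(eps_observer[obs])   # observation layer
            data.append((year, obs, poisson_draw(rate, rng)))
    return data

data = simulate_counts()
counts = [c for _, _, c in data]
# Observer effects make the counts overdispersed relative to a plain Poisson
print(statistics.fmean(counts), statistics.variance(counts))
```

Fitting the full model to such data and checking that the 95% credible interval for the trend covers the simulated value (-0.05 here) is a quick test that the model and sampler are behaving.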

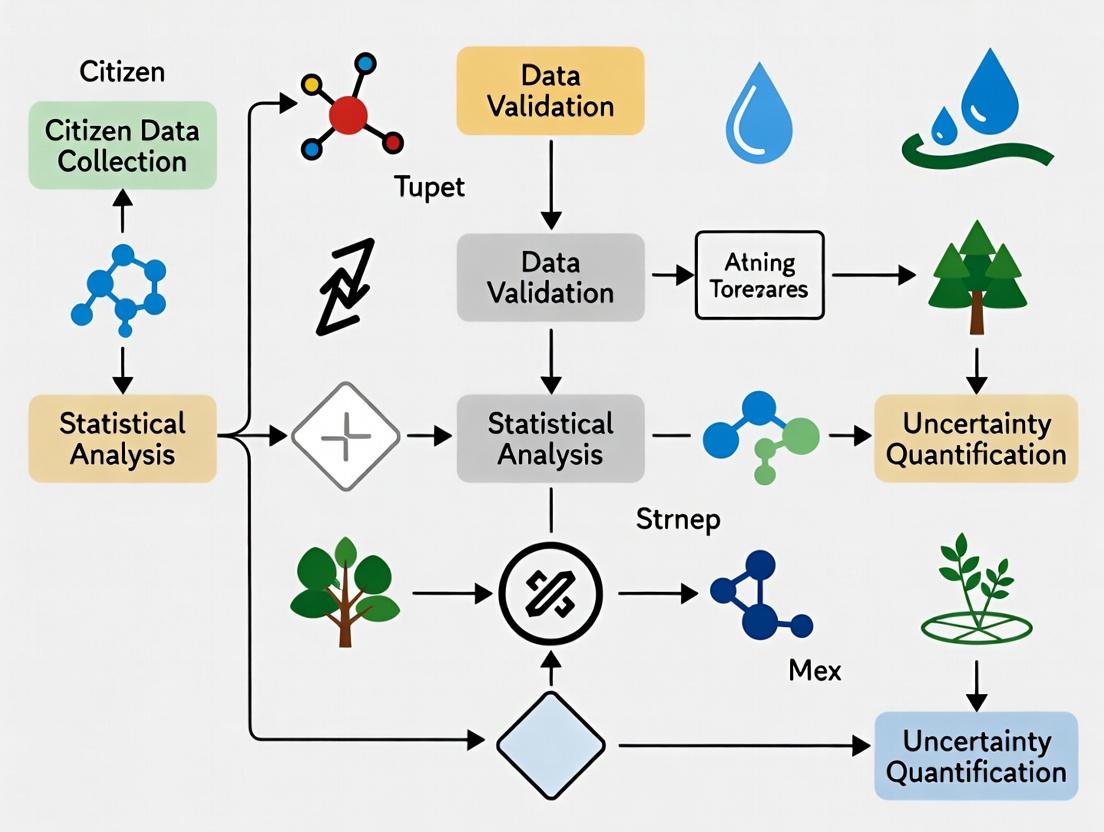

Diagram 1: Bayesian integration of uncertainty types

Diagram 2: Protocol for quantifying combined uncertainty

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for Uncertainty Quantification in Citizen Science

| Item / Solution | Function in Uncertainty Research |
|---|---|
| Reference Data Sets (Gold Standard) | Provides ground truth for calibrating volunteer observations and partitioning error (e.g., expert-validated species lists, calibrated sensor readings). |
| Statistical Software (R/Stan, PyMC3) | Enables implementation of advanced statistical models (BHMs, latent variable models) to separate and propagate uncertainty. |
| Inter-Rater Reliability (IRR) Packages | Calculates Cohen's Kappa, Fleiss' Kappa, or Intraclass Correlation Coefficients to quantify consensus and systematic disagreement among volunteers. |
| Spatial Cross-Validation Scripts | Assesses model performance and uncertainty on spatially held-out data, critical for geographic analyses. |
| Data Anonymization & Ethics Protocols | Ensures volunteer privacy while allowing for analysis of observer-specific error parameters, a key ethical consideration. |
| Uncertainty Visualization Libraries (ggplot2, matplotlib) | Creates clear visualizations of confidence/credible intervals, prediction ribbons, and error distributions for communicating results. |

Technical Support Center: Troubleshooting & FAQs

This support center provides targeted guidance for mitigating major uncertainty sources within citizen science data collection. The following FAQs and guides are framed as strategies for quantifying and reducing uncertainty in research.

FAQ & Troubleshooting Guide

Q1: How can we statistically differentiate true biological signal from noise introduced by high participant variability in techniques (e.g., pipetting, sample collection)? A: Implement a tiered calibration protocol. Distribute standardized control kits to a random subset of participants (e.g., 10%). Analyze the coefficient of variation (CV) in their control assay results versus lab-professional CVs.

| Metric | Citizen Scientist Group (n=50) | Lab Professional Group (n=10) | Acceptable Threshold |
|---|---|---|---|
| Mean Value (Control Assay) | 22.5 AU | 24.1 AU | Within 15% of lab mean |
| Standard Deviation | 3.8 AU | 0.9 AU | N/A |
| Coefficient of Variation (CV) | 16.9% | 3.7% | <20% |

Protocol: Control Kit Distribution

- Prepare identical control samples with known analyte concentrations.

- Ship to a randomly selected participant subgroup alongside their study kits.

- Participants process the control identically to their study samples.

- Use returned control data to calculate participant-specific correction factors or establish inclusion/exclusion criteria based on CV.
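The CV and acceptance checks in the table above, plus a participant-specific correction factor, can be sketched as follows. This assumes a multiplicative correction (known control value divided by the participant's mean control reading), which is one possible choice; the threshold defaults mirror the table, and the function names are hypothetical.

```python
import statistics

def cv_percent(values):
    """Coefficient of variation, in percent."""
    return 100 * statistics.stdev(values) / statistics.fmean(values)

def control_kit_summary(participant_controls, known_value, lab_mean,
                        cv_limit=20.0, mean_tolerance=0.15):
    """participant_controls: {participant_id: [replicate control readings]}."""
    all_values = [v for reps in participant_controls.values() for v in reps]
    group_mean = statistics.fmean(all_values)
    group_cv = cv_percent(all_values)
    # Acceptance: CV below the limit and group mean within 15% of the lab mean
    accept = (group_cv < cv_limit
              and abs(group_mean - lab_mean) <= mean_tolerance * lab_mean)
    # Assumed multiplicative correction: known value / participant's mean control reading
    factors = {pid: known_value / statistics.fmean(reps)
               for pid, reps in participant_controls.items()}
    return group_mean, group_cv, accept, factors
```

With the table's summary figures, 3.8 / 22.5 gives a CV of about 16.9% for participants versus 0.9 / 24.1, about 3.7%, for lab professionals.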

Q2: Our image-based data (e.g., plant phenotyping, cell counting) shows inconsistency. How do we diagnose and correct for technological bias from different smartphone cameras? A: Conduct a Device Profiling Experiment. The core uncertainty is variation in sensor output across device models.

| Device Model | Color Accuracy (ΔE vs. Standard) | Resolution (Megapixels) | Measured Value Variance |
|---|---|---|---|
| Smartphone A | 3.2 | 12 | ±12% |
| Smartphone B | 8.7 | 48 | ±18% |
| Laboratory Scanner | 1.1 | 24 | ±2% |

Protocol: Device Profiling for Image Analysis

- Create a Reference Color Card with known RGB/HSV values.

- Image Capture: Participants photograph the reference card under a provided lighting condition (e.g., a white LED) alongside their sample.

- Data Processing: Use the reference card in each image to calibrate color values and scale. Apply device-specific correction algorithms derived from the profiling study.

Q3: How can we objectively measure and reduce uncertainty arising from ambiguous written protocols? A: Perform a Protocol Interpretation Audit that tabulates, for each critical step, how often participants in each experience group deviate from the intended action.

Protocol: Auditing Protocol Ambiguity

- Recruit a test cohort of novice and experienced participants.

- Record them following the protocol without assistance.

- Code deviations for each critical step.

- Quantify Ambiguity Score: (Number of participants deviating / Total participants) per step.

| Protocol Step | Deviation Rate (Novice) | Deviation Rate (Experienced) | Recommended Mitigation |
|---|---|---|---|
| "Add a small amount of buffer" | 95% | 40% | Specify "Add 100 µL buffer" |
| "Incubate until color changes" | 80% | 25% | Specify "Incubate for 10 minutes at 20-25°C" |
| "Shake vigorously" | 70% | 15% | Provide video demo; specify "shake for 30 seconds, 3 times". |
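The Ambiguity Score is a simple proportion per step. A minimal sketch that also ranks steps for rewriting; the 0.2 rewrite threshold and the names are hypothetical choices, not part of the protocol:

```python
def ambiguity_scores(coded_deviations, threshold=0.2):
    """coded_deviations: {step: [True if the participant deviated, else False, ...]}."""
    scores = {step: sum(flags) / len(flags) for step, flags in coded_deviations.items()}
    # Steps above the threshold, most ambiguous first
    flagged = sorted((s for s, r in scores.items() if r > threshold),
                     key=lambda s: -scores[s])
    return scores, flagged
```

Running it separately for the novice and experienced cohorts reproduces the two deviation-rate columns of the table.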

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Uncertainty Quantification |
|---|---|
| Certified Reference Material (CRM) | Provides ground truth for calibrating measurements across all participants. Essential for quantifying total method bias. |
| Fluorescent Bead Standard (e.g., for flow cytometry) | Used to calibrate instrument sensitivity and align detection thresholds across different technological platforms. |
| Synthetic Control Sample (Positive/Negative) | Shipped alongside participant kits to monitor variability in protocol execution and sample stability. |
| Calibrated Color Reference Card | Mitigates technological bias in image-based data by allowing post-hoc color and scale correction. |
| Digital Step-by-Step Protocol (with video) | Reduces protocol ambiguity. Embedded quizzes can assess participant comprehension before data collection. |

Visualizations

Title: Participant Data Validation Workflow

Title: Protocol Ambiguity Audit Cycle

Title: Technological Bias Diagnosis and Correction

Troubleshooting Guides & FAQs

FAQ 1: How can I quantify uncertainty in self-reported symptom data from a mobile app study?

- Answer: Uncertainty arises from recall bias, subjective interpretation, and inconsistent reporting. Implement a multi-modal calibration protocol. Require users to complete a standardized, validated questionnaire (e.g., PROMIS short form) at study entry and exit. Use these anchored responses to model and correct the uncertainty in daily free-form self-reports. Introduce daily confidence prompts (e.g., "How sure are you about this rating?"). Apply statistical models like Bayesian hierarchical models that treat each user's reporting bias as a latent variable to be inferred from the anchored data.
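A full Bayesian hierarchical model is best fitted in Stan or PyMC, but the partial-pooling idea behind it can be sketched with a normal-normal empirical-Bayes approximation. This assumes daily reports and anchored questionnaire scores have been mapped to a common scale and that each user's reporting bias is a constant offset; both are simplifying assumptions, and the function name is hypothetical.

```python
import statistics

def shrink_user_bias(diffs_by_user):
    """diffs_by_user: {user: [daily_report - anchored_score, ...]} on days where both exist.
    Returns a partially pooled bias estimate per user and the population mean bias."""
    user_means = {u: statistics.fmean(d) for u, d in diffs_by_user.items()}
    # Pooled within-user (reporting-noise) variance
    ss, dof = 0.0, 0
    for d in diffs_by_user.values():
        if len(d) >= 2:
            m = statistics.fmean(d)
            ss += sum((x - m) ** 2 for x in d)
            dof += len(d) - 1
    sigma2 = ss / dof if dof else 1.0              # fallback value is arbitrary
    mu = statistics.fmean(user_means.values())     # population-level bias
    # Method-of-moments between-user variance, floored to stay positive
    sampling_var = statistics.fmean(sigma2 / len(d) for d in diffs_by_user.values())
    tau2 = max(statistics.pvariance(list(user_means.values())) - sampling_var, 1e-6)
    shrunk = {}
    for u, d in diffs_by_user.items():
        w = tau2 / (tau2 + sigma2 / len(d))         # more anchor days -> more weight on own mean
        shrunk[u] = w * user_means[u] + (1 - w) * mu
    return shrunk, mu
```

A user's corrected daily rating is then the raw rating minus their shrunken bias; users with few anchor days are pulled more strongly toward the population mean bias.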

FAQ 2: My environmental sensor (e.g., air quality monitor) data shows high variability between co-located citizen science devices. How do I resolve this?

- Answer: This indicates measurement uncertainty due to sensor drift, calibration error, or placement. Execute a 3-Step Calibration and Validation Workflow:

- Co-location Calibration: Deploy all citizen sensors alongside a reference-grade instrument for a minimum 2-week period.

- Linear Correction Modeling: For each sensor, generate a per-sensor correction algorithm (offset and gain) based on the reference data.

- Periodic Validation: Mandate quarterly 48-hour re-co-location checks to monitor for sensor degradation. Data from sensors that fail validation (e.g., R² < 0.7 against reference post-correction) should be flagged or weighted lower in aggregate analyses.

FAQ 3: What methods can I use to combine uncertain data from diverse citizen science sources (e.g., symptoms + sensor data) for analysis?

- Answer: Utilize an Uncertainty-Aware Data Fusion Framework. Do not average raw data. First, assign a quantitative uncertainty score to each data point (e.g., based on the FAQs above). Then, use probabilistic models or machine learning algorithms that can ingest both the data value and its associated uncertainty. For instance, use Gaussian Process Regression where the noise parameter for each data point is individually set based on its source-specific uncertainty estimate. This down-weights high-uncertainty inputs in the final model.
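A minimal sketch of Gaussian Process regression with a separate noise variance per observation, written in plain Python for a handful of points. The RBF kernel, its hyperparameters, and the zero prior mean are assumptions (in practice, center the data or add a mean function, use a GP library, and fit the hyperparameters); the function names are hypothetical.

```python
import math

def rbf(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential (RBF) covariance between two scalar inputs."""
    return signal_var * math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small dense A)."""
    n = len(A)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def gp_predict(x_train, y_train, noise_var, x_new, length_scale=1.0, signal_var=1.0):
    """Posterior mean and variance at x_new; noise_var[i] is point i's own noise variance."""
    n = len(x_train)
    K = [[rbf(x_train[i], x_train[j], length_scale, signal_var)
          + (noise_var[i] if i == j else 0.0) for j in range(n)] for i in range(n)]
    alpha = solve(K, y_train)                  # (K + diag(noise))^-1 y
    k_star = [rbf(x_new, xi, length_scale, signal_var) for xi in x_train]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var = rbf(x_new, x_new, length_scale, signal_var) - sum(k * w for k, w in zip(k_star, v))
    return mean, var
```

Raising a point's noise variance shrinks its influence on the posterior mean, which is the down-weighting of high-uncertainty inputs described above.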

Experimental Protocol: Calibration of Citizen Science Environmental Sensors

Objective: To quantify and correct for systematic measurement error in low-cost air particulate matter (PM2.5) sensors.

Materials: 10+ citizen science sensor units (e.g., PurpleAir PA-II), one reference-grade federal equivalent method (FEM) monitor (e.g., BAM-1020), a secure outdoor mounting fixture, a stable power supply, and data logging infrastructure.

Methodology:

- Site Selection: Choose an outdoor location representative of general monitoring conditions, sheltered from direct rainfall.

- Co-location: Mount all test sensors within a 1-meter radius of the FEM monitor's inlet. Ensure inlets are at the same height (±0.25 m).

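- Data Collection: Collect simultaneous PM2.5 measurements for a minimum of 14 consecutive days to capture a range of environmental conditions. Log the low-cost sensors at high frequency (e.g., 1-minute intervals) and average them to the reference monitor's reporting interval (hourly for a BAM-1020) before comparison.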

- Correction Model Development: For each sensor, align time series with the reference data. Fit a linear model (Reference = α + β * Sensor_Reading) using robust regression. Calculate performance metrics (R², RMSE).

- Application: Apply the derived α and β coefficients to all future field data from that specific sensor unit. Flag data if the sensor's internal diagnostic signals (e.g., particle count) indicate malfunction.
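The protocol asks for robust regression without naming a method; the sketch below uses the Theil-Sen estimator as one option (Huber regression is another). It also computes R² against the 1:1 line: a squared Pearson correlation is unchanged by any linear correction, so if R² improves after a purely linear correction, as in Table 1, it is presumably measured against the identity line. The function names are hypothetical.

```python
import itertools
import math
import statistics

def theil_sen(sensor, reference):
    """Robust fit of reference = alpha + beta * sensor (median of pairwise slopes)."""
    slopes = [(y2 - y1) / (x2 - x1)
              for (x1, y1), (x2, y2) in itertools.combinations(zip(sensor, reference), 2)
              if x2 != x1]
    beta = statistics.median(slopes)
    alpha = statistics.median(y - beta * x for x, y in zip(sensor, reference))
    return alpha, beta

def metrics_vs_reference(estimate, reference):
    """R² against the 1:1 line, RMSE, and MAE of (corrected) estimates vs. the reference."""
    resid = [r - e for e, r in zip(estimate, reference)]
    mean_ref = statistics.fmean(reference)
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    return {"r2_1to1": 1 - sum(d * d for d in resid) / ss_tot,
            "rmse": math.sqrt(statistics.fmean(d * d for d in resid)),
            "mae": statistics.fmean(abs(d) for d in resid)}
```

Because it takes medians, a single large outlier in the co-location data barely moves the Theil-Sen line, whereas it can pull an ordinary least-squares fit substantially.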

Quantitative Data Summary: Example Sensor Co-location Study

Table 1: Performance Metrics of Low-Cost PM2.5 Sensors vs. Reference Monitor (14-Day Co-location)

| Sensor Unit ID | Raw Data R² | Raw Data Slope (β) | Corrected Data R² | Corrected RMSE (μg/m³) | Mean Absolute Error, Post-Correction (μg/m³) |
|---|---|---|---|---|---|
| CSPA01 | 0.65 | 1.32 | 0.92 | 1.8 | 1.4 |
| CSPA02 | 0.72 | 0.89 | 0.94 | 1.5 | 1.1 |
| CSPA03 | 0.58 | 1.51 | 0.88 | 2.3 | 1.9 |
| Reference FEM | 1.00 | 1.00 | 1.00 | 0.0 | 0.0 |

Table 2: Sources and Mitigation Strategies for Uncertainty in Self-Reported Symptoms

| Uncertainty Source | Impact on Data | Recommended Mitigation Strategy |
|---|---|---|
| Subjective Scale Interpretation | High inter-user variability | Anchor to validated instruments; use visual analog scales with clear descriptors. |
| Recall Bias | Data inaccuracy, regression to mean | Use ecological momentary assessment (EMA) via smartphone prompts, not end-of-day recall. |
| Participant Drop-out (Attrition) | Selection bias, incomplete longitudinal data | Implement gamification, regular feedback, and low-burden reporting design. |
| Contextual Missingness | Gaps in data timeline | Use gentle push notifications and allow "skip with reason" options to distinguish from non-compliance. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Uncertainty Quantification in Biomedical Citizen Science

| Item | Function & Relevance to Uncertainty |
|---|---|
| Reference-Grade Environmental Monitor (e.g., Met One Instruments BAM-1020 for PM) | Provides "gold standard" measurement for calibrating lower-cost, higher-uncertainty citizen science sensors. Essential for deriving correction factors. |
| Validated Clinical Questionnaires (e.g., NIH PROMIS, PHQ-9) | Provides a psychometrically robust anchor for uncertain, free-form self-reports. Allows quantification of reporting bias. |
| Data Anonymization & Secure Transfer Platform (e.g., REDCap, MyCap) | Mitigates uncertainty introduced by data loss, corruption, or privacy breaches. Ensures reliable, traceable data flow. |
| Calibration Gas/Source (for gas sensors) or Calibration Filter (for particulate sensors) | Allows for periodic zero/span checks of environmental sensors in the field, quantifying and correcting for drift over time. |
| Bayesian Statistical Software (e.g., Stan, PyMC3) | Enables the implementation of hierarchical models that explicitly incorporate data uncertainty estimates from multiple sources into the final analysis. |

Visualizations

Workflow for Symptom Data Uncertainty Quantification

Environmental Sensor Calibration & Validation Workflow

The Impact of Unquantified Uncertainty on Downstream Analysis and Model Validity

Technical Support Center

FAQs & Troubleshooting Guides

Q1: Our predictive model, trained on citizen science-classified images, performs well in validation but fails in clinical trial biomarker analysis. What could be wrong? A: This is a classic symptom of unquantified uncertainty. Citizen science data often has heterogeneous error rates. If you only use raw labels (e.g., "cancerous" vs. "non-cancerous") without quantifying the confidence or inter-annotator disagreement, your model may learn spurious correlations. For example, a specific but subtle imaging artifact common in the citizen science platform may be consistently mislabeled; your model learns this artifact as the true signal. In downstream clinical data devoid of this artifact, the model fails.

- Protocol for Diagnosing the Issue: Conduct an Uncertainty Audit.

- Re-annotation Sample: Randomly sample 500 data points from your training set.

- Expert Review: Have a domain expert re-annotate this sample.

- Discrepancy Analysis: Compare expert labels with aggregated citizen science labels. Calculate a confusion matrix and per-class uncertainty metrics (see Table 1).

- Error Correlation: Test if discrepancies correlate with specific metadata (e.g., image source, time of collection, annotator cohort).
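The error-correlation step can be sketched as a comparison of expert/crowd disagreement rates between two metadata groups (e.g., two image sources), with a Pearson chi-square test of independence. This sketch assumes exactly two groups and applies no continuity correction; for more groups or small counts, a library routine such as `scipy.stats.chi2_contingency` is more appropriate. The function name is hypothetical.

```python
import math

def disagreement_by_group(rows):
    """rows: (group, expert_label, crowd_label) triples from the re-annotated sample.
    Returns per-group disagreement rates and, for exactly two groups, the Pearson
    chi-square statistic (1 degree of freedom) with its p-value."""
    counts = {}
    for group, expert, crowd in rows:
        agree, disagree = counts.get(group, (0, 0))
        if expert == crowd:
            agree += 1
        else:
            disagree += 1
        counts[group] = (agree, disagree)
    rates = {g: d / (a + d) for g, (a, d) in counts.items()}
    if len(counts) != 2:
        return rates, None
    (a1, d1), (a2, d2) = counts.values()
    n = a1 + d1 + a2 + d2
    cells = [(a1, a1 + d1, a1 + a2), (d1, a1 + d1, d1 + d2),
             (a2, a2 + d2, a1 + a2), (d2, a2 + d2, d1 + d2)]
    chi2 = 0.0
    for observed, row_total, col_total in cells:
        expected = row_total * col_total / n
        if expected == 0:
            return rates, None          # test undefined (e.g., no disagreements at all)
        chi2 += (observed - expected) ** 2 / expected
    # Survival function of a chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2))
    return rates, (chi2, math.erfc(math.sqrt(chi2 / 2)))
```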

Q2: How do we quantify uncertainty when aggregating multiple citizen scientist labels per data point? A: Move beyond simple majority voting. Implement probabilistic aggregation methods that quantify uncertainty.

- Protocol: Bayesian Label Aggregation with Dawid-Skene Model.

- Input: Labels from N citizen scientists on M items, across K classes.

- Model Parameters: Estimate for each annotator j a confusion matrix π⁽ʲ⁾, representing their probability of labeling true class k as class l. Estimate the prior probability p of each true class.

- Inference: Use Expectation-Maximization (EM) or Markov Chain Monte Carlo (MCMC) to infer:

- The posterior distribution over the true label for each item.

- The estimated annotator skill matrices.

- Output: For each data point i, you receive a probability vector over possible classes (e.g., [0.85, 0.10, 0.05]) instead of a single label. The entropy of this vector is a direct measure of classification uncertainty.
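A compact, unoptimized sketch of the EM variant of the Dawid-Skene model in plain Python, with a small smoothing constant (an assumption) to avoid zero probabilities. It returns a posterior probability vector per item, whose entropy serves as the per-item uncertainty described above; the function names are hypothetical.

```python
import math
from collections import defaultdict

def dawid_skene(labels, n_classes, n_iter=50, smoothing=0.01):
    """labels: (item, annotator, label) triples with labels in range(n_classes).
    Returns item posteriors, per-annotator confusion matrices pi[a][k][l], class priors."""
    by_item = defaultdict(list)
    for item, annotator, label in labels:
        by_item[item].append((annotator, label))
    annotators = {a for _, a, _ in labels}
    # Initialize each item's posterior with its vote proportions (soft majority vote)
    post = {}
    for item, votes in by_item.items():
        counts = [0.0] * n_classes
        for _, label in votes:
            counts[label] += 1.0
        post[item] = [c / len(votes) for c in counts]
    for _ in range(n_iter):
        # M-step: class priors and confusion matrices (row k = true class k)
        prior = [(sum(p[k] for p in post.values()) + smoothing)
                 / (len(post) + n_classes * smoothing) for k in range(n_classes)]
        pi = {a: [[smoothing] * n_classes for _ in range(n_classes)] for a in annotators}
        for item, votes in by_item.items():
            for annotator, label in votes:
                for k in range(n_classes):
                    pi[annotator][k][label] += post[item][k]
        for rows in pi.values():
            for k, row in enumerate(rows):
                total = sum(row)
                rows[k] = [v / total for v in row]
        # E-step: posterior over each item's true class, computed in log space
        for item, votes in by_item.items():
            logp = [math.log(prior[k]) for k in range(n_classes)]
            for annotator, label in votes:
                for k in range(n_classes):
                    logp[k] += math.log(pi[annotator][k][label])
            top = max(logp)
            w = [math.exp(v - top) for v in logp]
            s = sum(w)
            post[item] = [v / s for v in w]
    return post, pi, prior

def entropy_bits(p):
    """Shannon entropy (bits) of a probability vector."""
    return -sum(q * math.log2(q) for q in p if q > 0)
```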

Q3: Our regression model for environmental sensor data shows high predictive variance. How can we distinguish between natural variability and measurement uncertainty? A: You must model both aleatoric (inherent noise) and epistemic (model ignorance) uncertainty. Citizen science sensor data is prone to epistemic uncertainty due to uncalibrated devices.

- Protocol: Implementing a Bayesian Neural Network (BNN) for Sensor Data.

- Model Architecture: Replace deterministic weights in a neural network with probability distributions (e.g., Gaussian distributions).

- Training: Use variational inference to learn the parameters (mean and variance) of these weight distributions.

- Prediction: At inference, sample from the weight distributions multiple times to generate a distribution of predictions for a single input.

- Decomposition: The mean of the prediction distribution is your final prediction. The variance can be decomposed:

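  - Aleatoric Uncertainty: Estimated as the mean of the predicted noise variances across samples (inherent noise). This requires the network to output a noise variance for each input in addition to its mean prediction.

  - Epistemic Uncertainty: Calculated as the variance of the predicted means across samples (model uncertainty). High epistemic uncertainty indicates that the model is unfamiliar with the input data domain, which is a key risk with citizen science data.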
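The decomposition is an application of the law of total variance. The sketch assumes the BNN (e.g., built with TensorFlow Probability or Pyro) has already been run several times on one input, each pass returning a predicted mean and a predicted noise variance; the network itself is not shown, and the function name is hypothetical.

```python
import statistics

def decompose_predictive_uncertainty(samples):
    """samples: (predicted_mean, predicted_noise_variance) pairs, one per stochastic
    forward pass (one draw of the weights) for a single input."""
    means = [m for m, _ in samples]
    noise_vars = [v for _, v in samples]
    prediction = statistics.fmean(means)
    aleatoric = statistics.fmean(noise_vars)   # E[sigma^2(x)] over weight draws
    epistemic = statistics.pvariance(means)    # Var[mu(x)] over weight draws
    return prediction, aleatoric, epistemic, aleatoric + epistemic
```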

Quantitative Data Summary

Table 1: Impact of Uncertainty Quantification on Model Performance

| Scenario | Aggregation Method | Uncertainty Metric Used? | Downstream Model Performance (Clinical Accuracy or R²) | Downstream Model AUC-ROC |
|---|---|---|---|---|
| Citizen Science Image Labels (Skin Lesions) | Simple Majority Vote | No | 67.2% (±3.1) | 0.71 |
| Citizen Science Image Labels (Skin Lesions) | Bayesian Aggregation | Yes (Label Entropy) | 82.5% (±2.4) | 0.89 |
| Crowdsourced Sensor (Air Quality) Data | Mean Imputation | No | R² = 0.45 | N/A |
| Crowdsourced Sensor (Air Quality) Data | Probabilistic Model (BNN) | Yes (Epistemic Variance) | R² = 0.68 | N/A |

Table 2: Common Sources of Uncertainty in Citizen Science Data

| Source Type | Example | Primary Uncertainty Class | Recommended Quantification Method |
|---|---|---|---|
| Labeler Expertise | Species identification, pathology marking | Aleatoric & Epistemic | Dawid-Skene model, inter-annotator agreement (Fleiss' Kappa) |
| Device Heterogeneity | Smartphone sensors, DIY kits | Epistemic | Bayesian calibration, hierarchical modeling |
| Protocol Adherence | Non-standard sample collection | Epistemic | Metadata-based propensity scoring, latent variable models |
| Spatial/Temporal Bias | Uneven geographic coverage | Epistemic | Spatial Gaussian Processes, bias-aware sampling weights |

Visualizations

Uncertainty-Aware Data Processing Workflow

Impact Pathway of Unquantified Uncertainty

The Scientist's Toolkit: Research Reagent Solutions

| Item/Resource | Function in Uncertainty Quantification |
|---|---|
| PyStan / PyMC3 | Probabilistic programming frameworks for implementing custom Bayesian aggregation models (e.g., Dawid-Skene) and hierarchical models to account for annotator and device variability. |
| Ubiquity | An open-source toolkit for quantifying uncertainty in crowdsourced data, providing pre-built models for label aggregation and quality control. |
| TensorFlow Probability / Pyro | Libraries for building and training Bayesian Neural Networks (BNNs) to model aleatoric and epistemic uncertainty in regression and classification tasks. |
| Expert-Annotated Gold Standard Set | A small, high-quality dataset validated by domain experts. Critical for calibrating citizen science data, evaluating aggregators, and measuring ultimate model validity. |
| Spatial Analysis Software (e.g., GRASS, QGIS) | Used to model and quantify spatial autocorrelation and sampling bias uncertainty in geographically-tagged citizen observations. |
| Inter-Annotator Agreement Metrics (Fleiss' Kappa, Krippendorff's Alpha) | Statistical measures to quantify the consensus level among citizen scientists, providing a baseline uncertainty score for label sets. |
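Fleiss' kappa can be computed from a table of category counts per item. A minimal sketch, assuming every item was rated by the same number of volunteers (a requirement of the standard formula); the function name is hypothetical:

```python
def fleiss_kappa(ratings):
    """ratings: one row per item; row[j] = number of raters assigning category j.
    Every row must sum to the same number of raters."""
    n_items = len(ratings)
    n_raters = sum(ratings[0])
    n_categories = len(ratings[0])
    # Overall proportion of assignments to each category
    p_j = [sum(row[j] for row in ratings) / (n_items * n_raters) for j in range(n_categories)]
    # Per-item observed agreement among rater pairs
    P_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1)) for row in ratings]
    P_bar = sum(P_i) / n_items
    P_e = sum(p * p for p in p_j)          # agreement expected by chance
    return (P_bar - P_e) / (1 - P_e)
```

A value of 1 indicates perfect agreement; values near 0 indicate agreement no better than chance.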

Technical Support Center: Troubleshooting & FAQs

This technical support center addresses common data quality issues encountered during citizen science research projects, framed within the broader thesis on strategies for quantifying uncertainty in citizen science data. The guides and FAQs below provide structured methodologies for diagnosing and resolving problems from data collection through curation.

FAQs: Common Data Collection & Curation Issues

Q1: During environmental sensor deployment, we observe sporadic, implausible spike readings in temperature data. How should we categorize and address this?

A: This is a Sensor Malfunction/Anomaly issue during the collection phase. Follow this protocol:

- Isolate: Flag all data points where the change from the preceding value exceeds a threshold (e.g., >5°C per minute).

- Contextual Validation: Cross-reference with co-located sensors or nearby official weather stations for the same timestamp.

- Categorize: Classify a spike as "Hardware Error" if co-located sensors or nearby official stations show no corresponding change at that timestamp. Apply a smoothing algorithm (e.g., a median filter over a 5-minute window) only if the error is random and isolated, and document this curation step explicitly.
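The isolation and smoothing steps can be sketched as follows, assuming timestamps in minutes and roughly 1-minute sampling, so that a 5-sample centered median spans about 5 minutes. Note that the rate-of-change rule, as stated, flags both the jump into a spike and the return to baseline, which is one reason the contextual-validation step matters. The function names are hypothetical.

```python
import statistics

def flag_rate_of_change(times_min, temps_c, max_rate=5.0):
    """True where |change from the preceding reading| / elapsed minutes > max_rate (°C/min)."""
    flags = [False]                          # the first reading has no predecessor
    for i in range(1, len(temps_c)):
        rate = abs(temps_c[i] - temps_c[i - 1]) / (times_min[i] - times_min[i - 1])
        flags.append(rate > max_rate)
    return flags

def median_filter(values, window=5):
    """Centered running median; the window shrinks at the series edges."""
    half = window // 2
    return [statistics.median(values[max(0, i - half): i + half + 1])
            for i in range(len(values))]
```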

Q2: In a species identification app, multiple volunteers submit conflicting species labels for the same image. How do we quantify uncertainty in this curated dataset?

A: This is a Crowdsourcing Consensus & Expert Deviation issue. Implement a weighted voting protocol:

- Assign Weight: Calculate a contributor weight (W_i) based on their historical agreement with expert-validated gold-standard images.

- Aggregate: For each image $j$, calculate the Uncertainty Score $U_j$ using Shannon entropy: $U_j = -\sum_k p_k \log_2 p_k$, where $p_k$ is the proportion of weighted votes for species $k$.

- Threshold: Images with $U_j$ above a set threshold (e.g., 0.8) require expert review.
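A minimal sketch of the weighted entropy score, assuming the weights W_i are already available for every volunteer who labeled the image; the function names are hypothetical. The maximum possible entropy grows with the number of candidate species (1 bit for two, about 1.58 bits for three), so a fixed threshold such as 0.8 flags proportionally more images when many species are in contention.

```python
import math
from collections import defaultdict

def weighted_vote_entropy(votes, weights):
    """votes: [(volunteer_id, species), ...] for one image; weights: {volunteer_id: W_i}."""
    totals = defaultdict(float)
    for volunteer, species in votes:
        totals[species] += weights[volunteer]
    grand = sum(totals.values())
    p = [w / grand for w in totals.values() if w > 0]
    return -sum(pk * math.log2(pk) for pk in p)

def needs_expert_review(votes, weights, threshold=0.8):
    return weighted_vote_entropy(votes, weights) > threshold
```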

Q3: Data from different volunteer groups use inconsistent units (e.g., miles vs. kilometers) or coordinate reference systems. How do we resolve this in curation?

A: This is a Metadata & Standardization issue. Enforce a transformation workflow:

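- Audit: Run a script that compares each group's value distributions with those of other groups for comparable observations. Range checks alone are weak evidence, because the same physical distances expressed in different units overlap heavily (0.1-10 km corresponds to roughly 0.06-6.2 miles); a consistent ratio of about 0.62 between one group's values and the others' is a stronger sign that the group reports miles.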

- Standardize: Apply a unit conversion function to a canonical unit (SI). Flag all converted records.

- Document: In the curated dataset's metadata, list the "Transformation_Applied" for each affected field.
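A sketch of the audit and standardization steps for distances, assuming each record carries a declared unit and that groups can be compared on comparable routes. The 10% tolerance, field names, and function names are hypothetical.

```python
import statistics

KM_PER_MILE = 1.609344

def suspect_miles(group_values, reference_values, tolerance=0.1):
    """Heuristic: a group whose median distance is about 1/1.609 of a reference
    group's median (for comparable routes) is probably reporting miles."""
    ratio = statistics.median(group_values) / statistics.median(reference_values)
    expected = 1 / KM_PER_MILE
    return abs(ratio - expected) <= tolerance * expected

def standardize_distance(record):
    """Return a copy in kilometres and record the conversion in Transformation_Applied."""
    out = dict(record)
    if record["unit"] == "mi":
        out["distance"] = record["distance"] * KM_PER_MILE
        out["unit"] = "km"
        out["Transformation_Applied"] = "mi->km (x1.609344)"
    else:
        out["Transformation_Applied"] = "none"
    return out
```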

The table below summarizes key metrics for quantifying data quality issues discussed in the FAQs.

Table 1: Metrics for Quantifying Data Uncertainty in Citizen Science

| Issue Category | Primary Metric | Calculation Formula | Interpretation | Target Threshold |
|---|---|---|---|---|
| Sensor Anomaly | Spike Deviation Index | (max_value - median(window)) / std_dev(window) | Values > 3 indicate high probability of artifact. | Index ≤ 3.0 |
| Label Disagreement | Shannon Entropy (H) | H = -∑ (p_i * log2(p_i)) | H = 0: perfect agreement; H increases with disagreement. | Flag for review if H > 0.8 |
| Contributor Reliability | Expert Agreement Score (EAS) | (Correct Gold-Standard IDs) / (Total Gold-Standard Assignments) | 0-1 scale; a higher score indicates a more reliable contributor. | Weight data where EAS ≥ 0.7 |
| Spatial Precision | Coordinate Error Radius (CER) | 95% confidence radius from known control points | Smaller radius indicates higher spatial data quality. | CER ≤ 10 meters for most ecological studies |
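Two of the Table 1 metrics can be sketched as follows. The SDI uses the population standard deviation of the window, so the window must be long enough for a lone spike to score above 3: a single spike in a window of n readings whose other values are equal scores n/√(n-1), which is only 2.5 for n = 5 but exceeds 3 from n = 8. The CER is computed here as the empirical 95th percentile (nearest-rank) of haversine distances to known control points, which is one reasonable reading of "95% confidence radius". Function names are hypothetical.

```python
import math
import statistics

def spike_deviation_index(window):
    """(max_value - median(window)) / population std_dev(window)."""
    return (max(window) - statistics.median(window)) / statistics.pstdev(window)

def haversine_m(lat1, lon1, lat2, lon2, radius_m=6_371_000):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius_m * math.asin(math.sqrt(a))

def coordinate_error_radius(recorded, truth, coverage=0.95):
    """Nearest-rank percentile of distances between recorded and known (lat, lon) points."""
    d = sorted(haversine_m(*r, *t) for r, t in zip(recorded, truth))
    idx = min(len(d) - 1, math.ceil(coverage * len(d)) - 1)
    return d[idx]
```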

Detailed Experimental Protocols

Protocol 1: Quantifying Label Uncertainty via Contributor Weighting

Objective: To produce a species identification dataset with quantified uncertainty per record.

- Gold Standard Set: Curate 100 images with expert-verified species labels.

- Volunteer Phase: Deploy gold standard images randomly within a larger batch to volunteers. Record all labels.

- Weight Calculation: For each volunteer $v$, calculate the EAS (see Table 1) based on their performance on gold standards.

- Consensus Labeling: For each image in the full set, aggregate all volunteer labels, weighting each vote by the contributor's EAS.

- Uncertainty Assignment: Compute the Shannon entropy ($U_j$) of the weighted vote distribution for each image. Attach $U_j$ as a metadata field.
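A minimal sketch of the weight-calculation and consensus-labeling steps, assuming volunteer responses are available as (volunteer, image, species) triples. Volunteers who answered no gold-standard images get weight 0 here, which is a hypothetical policy; the Shannon entropy of each image's weighted votes can then be attached as U_j.

```python
from collections import defaultdict

def expert_agreement_scores(gold_labels, responses):
    """gold_labels: {image_id: expert_species}; responses: [(volunteer, image_id, species)]."""
    correct, total = defaultdict(int), defaultdict(int)
    for volunteer, image, species in responses:
        if image in gold_labels:
            total[volunteer] += 1
            correct[volunteer] += species == gold_labels[image]
    return {v: correct[v] / total[v] for v in total}

def weighted_consensus(responses, eas, gold_labels):
    """EAS-weighted majority label for each non-gold image."""
    scores = defaultdict(lambda: defaultdict(float))
    for volunteer, image, species in responses:
        if image not in gold_labels:
            scores[image][species] += eas.get(volunteer, 0.0)  # unknown volunteers: weight 0
    return {image: max(votes, key=votes.get) for image, votes in scores.items()}
```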

Protocol 2: Calibration and Anomaly Detection for Low-Cost Sensor Arrays

Objective: To detect and tag anomalous readings from field-deployed sensors.

- Co-Location: Deploy test citizen science sensors alongside a research-grade reference instrument for a 7-day calibration period.

- Linear Regression: For each sensor, derive calibration coefficients (offset, gain) against the reference data.

- Deployment & Collection: Deploy sensors. Collect time-series data.

- Anomaly Detection: Apply a rolling median filter (window = 5 samples). Flag any point where |raw_value - median| > 5 × std_dev, where std_dev is a noise scale estimated from clean data (e.g., the calibrated residuals from the co-location period) rather than from the 5-sample window itself. Within a 5-sample window, no point can deviate from the window median by more than about 3 of that window's own standard deviations, so a window-based 5 × std_dev rule would never fire.

- Curation Action: Replace flagged points with NULL in the curated dataset and populate a "quality_flag" column with the reason.
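A minimal sketch of the anomaly-detection and curation steps. The noise scale should ideally come from clean data such as the calibrated co-location residuals; when it is not supplied, the sketch falls back to 1.4826 × the median absolute deviation (MAD) of the series, a robust stand-in for the standard deviation under approximate normality. Flagged values become None (NULL) with a reason in quality_flag; the function name is hypothetical.

```python
import statistics

def flag_anomalies(values, window=5, k=5.0, scale=None):
    """Flag points far from their rolling median; return curated values and quality flags."""
    if scale is None:
        med = statistics.median(values)
        scale = 1.4826 * statistics.median(abs(v - med) for v in values)
    half = window // 2
    curated, quality_flag = [], []
    for i, v in enumerate(values):
        local = statistics.median(values[max(0, i - half): i + half + 1])
        if abs(v - local) > k * scale:
            curated.append(None)                     # stored as NULL in the curated dataset
            quality_flag.append(f"|x - rolling median| > {k} x scale")
        else:
            curated.append(v)
            quality_flag.append("")
    return curated, quality_flag
```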

Visualization: Data Quality Workflow

Diagram Title: Citizen Science Data Quality Assurance Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Citizen Science Data Quality Research

| Item / Solution | Function in Data Quality Research | Example Use Case |
|---|---|---|
| Gold Standard Datasets | Provides ground truth for calibrating instruments and calculating contributor reliability scores (EAS). | Protocol 1: Benchmarking volunteer species identification performance. |
| Research-Grade Sensor | Serves as a reference instrument for calibrating lower-cost citizen science sensor arrays. | Protocol 2: Deriving calibration coefficients for temperature sensors. |
| Consensus Algorithms (e.g., Dawid-Skene) | Statistical models to infer true labels from multiple, noisy volunteer classifications and estimate individual error rates. | FAQ Q2: Resolving conflicting species labels and quantifying per-image uncertainty. |
| Data Anomaly Detection Libraries (e.g., PyOD, ELKI) | Provide implemented algorithms (IQR, clustering-based) for automated detection of outliers in numerical sensor streams. | FAQ Q1: Identifying implausible spike readings in collected time-series data. |
| Controlled Vocabulary & Ontology Tools | Standardizes free-text metadata and observational categories to resolve inconsistencies during data curation. | FAQ Q3: Harmonizing species names or measurement types across different projects. |

A Practical Toolkit: Statistical and Computational Methods for Uncertainty Quantification

Frequently Asked Questions (FAQs)

Q1: In my citizen science drug response experiment, some participants consistently mislabel control samples. How can the Bayesian hierarchical model down-weight their influence without fully excluding their data?

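A1: The model places a hierarchical prior on participant reliability parameters (e.g., theta_i ~ Normal(mu_tau, sigma_tau)). Participants who consistently fail gold-standard questions receive posteriors for theta_i concentrated at low reliability, while participants with few gold-standard answers receive wide posteriors that are shrunk toward mu_tau. Because the observation model uses theta_i to link each participant's labels to the latent true values, labels from low-reliability participants carry little information about those values and contribute correspondingly less to the pooled population-level estimate during Markov Chain Monte Carlo (MCMC) sampling. Their data are thus down-weighted within the integrated analysis rather than excluded.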
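The down-weighting can be illustrated for a single binary label: under symmetric errors and conditionally independent participants, each vote shifts the log-odds of the true label by log(θ/(1-θ)), where θ is that participant's probability of answering correctly. If theta_i is modeled on the log-odds scale, this weight is theta_i itself. A minimal sketch with hypothetical names:

```python
import math

def posterior_positive(votes, reliability, prior=0.5):
    """votes: {participant: 1 or 0}; reliability: {participant: P(label correct)}.
    Assumes symmetric errors and conditionally independent participants."""
    log_odds = math.log(prior / (1 - prior))
    for participant, vote in votes.items():
        theta = reliability[participant]
        weight = math.log(theta / (1 - theta))   # close to 0 when theta is near 0.5
        log_odds += weight if vote == 1 else -weight
    return 1 / (1 + math.exp(-log_odds))
```

A participant with θ = 0.5 leaves the posterior unchanged, which is the limiting case of down-weighting without exclusion.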

Q2: When fitting the model with Stan, I encounter divergent transitions after the warm-up phase. What are the primary troubleshooting steps?

A2: Divergent transitions often indicate issues with the posterior geometry. Follow these steps:

- Increase adapt_delta: Gradually increase this parameter (e.g., from the default 0.8 to 0.95 or 0.99) to force a smaller step size that can navigate regions of high curvature.

- Re-parameterize: For hierarchical models, use non-centered parameterizations (e.g., theta_i_raw ~ normal(0, 1); theta_i = mu_tau + sigma_tau * theta_i_raw).

- Re-scale and Center Predictors: Standardize continuous covariates to have mean 0 and standard deviation 1.

- Simplify the Model: Temporarily reduce the model complexity to identify the problematic component.

Q3: How do I select an appropriate prior for the population-level reliability parameter (mu_tau) when prior literature is scarce?

A3: In the absence of strong prior information, use weakly informative priors that regularize estimates to plausible ranges. For a reliability probability (bounded between 0 and 1), a Beta(2, 2) prior is a mild regularization toward 0.5. For a reliability parameter on the log-odds scale, a Normal(0, 1.5) prior is typically weakly informative. Always conduct prior predictive checks to simulate data from your chosen priors and assess if the generated data is plausible.
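A prior predictive check can be as simple as transforming prior draws to the probability scale and asking how much mass lands in implausible regions. This sketch compares the two weakly informative priors mentioned above; the function name and the 0.95 threshold are illustrative choices.

```python
import numpy as np


def implied_accuracy_tail(n_draws=100_000, threshold=0.95, seed=0):
    """Share of prior mass on near-perfect reliability under two priors.

    Normal(0, 1.5) on the log-odds scale is mapped to probabilities with the
    inverse logit; Beta(2, 2) is already on the probability scale.
    """
    rng = np.random.default_rng(seed)
    logit_draws = rng.normal(0.0, 1.5, size=n_draws)
    p_from_logit = 1.0 / (1.0 + np.exp(-logit_draws))  # inverse logit
    p_from_beta = rng.beta(2.0, 2.0, size=n_draws)
    return {
        "normal_logit": float(np.mean(p_from_logit > threshold)),
        "beta_2_2": float(np.mean(p_from_beta > threshold)),
    }
```

Both priors place little mass above 0.95 (roughly 2.5% and 0.7%, respectively); plotting the full histograms of the transformed draws is the natural next step.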

Q4: My model integrates data from multiple citizen science platforms. How can I account for systematic biases unique to each platform?

A4: Introduce an additional hierarchical level (platform-level effects) into your model. Each participant i on platform j has reliability theta_ij ~ Normal(alpha_j, sigma_theta), where alpha_j is the mean reliability on platform j. The platform means are in turn drawn from a hyperprior: alpha_j ~ Normal(mu_alpha, sigma_alpha). This structure lets the model separate platform-specific biases while still estimating an overall reliability (mu_alpha) across all data sources.

Q5: How can I quantitatively compare the performance of a model that integrates reliability versus a simple pooled model? A5: Use the Widely Applicable Information Criterion (WAIC) or Leave-One-Out Cross-Validation (LOO-CV), both of which estimate out-of-sample predictive accuracy. If the reliability-integrated model predicts better, it will show a lower WAIC/LOOIC on the deviance scale (equivalently, a higher expected log predictive density, ELPD). Additionally, compare the posterior predictive distributions against observed data; the better model's predictions will more closely match the observed distributions, especially for key subgroups.
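For intuition about what WAIC measures, here is a minimal NumPy version computed from a pointwise log-likelihood matrix (posterior draws by observations). In practice, ArviZ (`arviz.waic`, `arviz.loo`, `arviz.compare`) or the R loo package should be preferred; this sketch only illustrates the formula.

```python
import numpy as np


def waic(log_lik):
    """WAIC on the deviance scale from a pointwise log-likelihood matrix.

    log_lik: shape (n_draws, n_observations), entry [s, i] = log p(y_i | theta^(s)).
    Returns (waic, elpd_waic, p_waic); lower WAIC (higher elpd) is better.
    """
    log_lik = np.asarray(log_lik, dtype=float)
    n_draws = log_lik.shape[0]
    # lppd_i = log(mean_s exp(log_lik[s, i])), computed stably
    max_ll = log_lik.max(axis=0)
    lppd = max_ll + np.log(np.exp(log_lik - max_ll).sum(axis=0)) - np.log(n_draws)
    # Effective number of parameters: posterior variance of each log-likelihood
    p_waic = log_lik.var(axis=0, ddof=1)
    elpd = lppd - p_waic
    return -2.0 * elpd.sum(), elpd.sum(), p_waic.sum()
```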

Troubleshooting Guides

Issue: Poor MCMC Mixing and High Rhat Values

Symptoms: High Rhat values (>1.01), low effective sample size (n_eff), and trace plots showing chains that fail to explore the same posterior space.

| Step | Action | Details | Expected Outcome |
|---|---|---|---|
| 1 | Increase Iterations | Double iter and warmup in the sampling command. | More samples and better convergence diagnostics. |
| 2 | Reparameterize Hierarchical Prior | Implement a non-centered parameterization for theta_i. | Improved chain mixing for participant-level parameters. |
| 3 | Simplify Model | Fit a model with fewer participant subgroups or covariates. | Identifies whether complexity is the root cause. |
| 4 | Check for Identifiability | Ensure model parameters are not perfectly collinear. | Rhat values decrease toward 1.0. |
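To see what the Rhat diagnostic in this table measures, the sketch below computes the classic split-R-hat for a single parameter from an array of chains. It is illustrative only; ArviZ and Stan report a rank-normalized version that is more robust to heavy tails.

```python
import numpy as np


def split_rhat(chains):
    """Classic split-R-hat for one scalar parameter.

    chains: shape (n_chains, n_draws) of post-warm-up draws. Each chain is
    split in half, so trends within a chain also inflate R-hat.
    """
    chains = np.asarray(chains, dtype=float)
    half = chains.shape[1] // 2
    # Split each chain into two halves (a trailing draw is dropped if odd)
    split = np.concatenate([chains[:, :half], chains[:, half:2 * half]], axis=0)
    n = split.shape[1]
    within = split.var(axis=1, ddof=1).mean()          # W: mean within-chain variance
    between = n * split.mean(axis=1).var(ddof=1)      # B: between-chain variance
    var_hat = (n - 1) / n * within + between / n
    return float(np.sqrt(var_hat / within))
```

Chains that have not mixed (for example, one chain stuck in a different mode) push the between-chain term up and R-hat well above 1.01.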

Issue: Model Fails to Compile in Stan/PyMC

Symptoms: Compilation errors citing undefined variables, type mismatches, or syntax errors.

| Step | Action | Details | Expected Outcome |
|---|---|---|---|
| 1 | Isolate the Error | Comment out sections of the model code until it compiles. | Identifies the exact line causing the failure. |
| 2 | Check Variable Declarations | Ensure all variables are declared in the appropriate block (data, parameters, transformed parameters, model). | Compilation proceeds past declaration errors. |
| 3 | Verify Indexing | Confirm all array/matrix indices are within declared bounds. | Eliminates "index out of range" errors. |
| 4 | Validate Function Signatures | Check that built-in function arguments are of the correct type (e.g., `normal_lpdf(y \| mu, sigma)`). | Correct function usage resolves errors. |

Issue: Prior/Posterior Predictive Checks Reveal Poor Model Calibration

Symptoms: Simulated data from the prior or posterior predictive distribution looks unrealistic compared to the actual observed data.

| Step | Action | Details | Expected Outcome |
|---|---|---|---|
| 1 | Visualize Prior Predictive Data | Generate and plot simulated data before fitting to the observed data. | Reveals whether priors are too vague or implausible. |
| 2 | Tighten Weakly Informative Priors | Reduce the variance of hyperpriors (e.g., sigma_tau ~ Exponential(2) instead of Exponential(0.1), with the Exponential parameterized by its rate). | Prior predictive data look more biologically/physically plausible. |
| 3 | Inspect Residuals | Calculate and plot standardized residuals for key observations. | Identifies systematic misfit (e.g., non-linearity, outliers). |
| 4 | Consider Alternative Likelihood | Evaluate whether a Student-t likelihood or a zero-inflated model better captures the data dispersion. | Posterior predictive distribution closely matches the observed data. |

Key Experimental Protocol: Quantifying Participant Reliability in a Citizen Science Drug Screen

Objective: To quantify the reliability of individual citizen scientist participants in a cell image classification task for a phenotypic drug screen and integrate this measure into a Bayesian hierarchical model for hit calling.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Gold-Standard Dataset Creation: Embed 20 well-characterized control images (10 "healthy" phenotype, 10 "diseased" phenotype) randomly within a larger set of 200 images of unknown drug-treated conditions. These are presented to each participant i.

- Data Collection: Record the binary classification (healthy/diseased) for all 220 images from each of N participants.

- Model Specification:
  - Likelihood: Participant i's response on trial t, y_i,t, is Bernoulli distributed with probability p_i,t.
  - Reliability Integration: The log-odds of p_i,t is a function of the true latent state of image t (z_t, where 1 = diseased) and the participant's reliability parameter theta_i: logit(p_i,t) = theta_i * (2*z_t - 1). A value theta_i > 0 indicates better-than-chance reliability.
  - Hierarchical Prior: Participant reliabilities are modeled as drawn from a population distribution: theta_i ~ Normal(mu_tau, sigma_tau).
  - Hyperpriors: Assign weakly informative priors: mu_tau ~ Normal(0.5, 1), sigma_tau ~ Exponential(2).

- Model Fitting: Implement the model in Stan/PyMC. Because Stan does not support discrete parameters, the binary z_t must be marginalized out of the likelihood when using Stan. Run 4 MCMC chains of 4000 iterations each (2000 warm-up) and check convergence (Rhat < 1.01).

- Hit Calling: The posterior distribution of each drug condition's latent state z_t provides a probabilistic measure of its effect, adjusted for the inferred reliability of all participants who rated it.
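Before fitting the hierarchical model, it can help to simulate data from the protocol's own generative model and check that reliability is recoverable from the gold-standard images. Under logit(p_i,t) = theta_i * (2*z_t - 1), the probability of a correct answer is inverse_logit(theta_i) for both phenotypes, so a crude per-participant estimate is the logit of smoothed gold-standard accuracy. The function below is a hypothetical helper for this check, not part of the protocol.

```python
import numpy as np


def simulate_and_estimate_reliability(true_theta, n_gold=20, seed=0):
    """Simulate gold-standard responses and return a plug-in estimate of theta_i.

    Half of the gold images are "healthy" (z = 0), half "diseased" (z = 1).
    This is a sanity check, not a replacement for the hierarchical fit.
    """
    rng = np.random.default_rng(seed)
    true_theta = np.asarray(true_theta, dtype=float)
    z_gold = np.repeat([0, 1], n_gold // 2)
    # Probability of answering "diseased" under the protocol's likelihood
    logits = true_theta[:, None] * (2 * z_gold[None, :] - 1)
    p_diseased = 1.0 / (1.0 + np.exp(-logits))
    y = rng.random(p_diseased.shape) < p_diseased      # simulated responses
    n_correct = (y == z_gold[None, :]).sum(axis=1)
    # Add-one smoothing keeps the logit finite at 0% or 100% accuracy
    acc = (n_correct + 1) / (n_gold + 2)
    return np.log(acc / (1 - acc))
```

With only 20 gold-standard images the estimates are noisy, which is exactly why the hierarchical prior's partial pooling is useful.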

Visualizations

Title: Bayesian Reliability Model for Participant Data

Title: Hierarchical Model Workflow for Uncertainty Quantification

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Fluorescent Cell Dye (e.g., Hoechst 33342) | Stains nuclear DNA to enable visualization and classification of cell count and nuclear morphology by participants. |

| Phenotypic Reference Compound Set | A library of drugs with known, robust effects on cell phenotype (positive/negative controls). Used to create gold-standard training and test images. |

| High-Content Imaging System | Automated microscope for capturing consistent, high-resolution images of cells across multi-well plates for distribution to participants. |

| Stan / PyMC Software | Probabilistic programming languages used to specify, fit, and diagnose the Bayesian hierarchical model. |

| LOO / WAIC Calculation Package | Software tools (e.g., loo in R, arviz in Python) for model comparison and evaluating predictive performance. |

| Data Anonymization Pipeline | Secure software to remove participant metadata and assign unique IDs, ensuring privacy in citizen science data collection. |

Technical Support Center: Troubleshooting & FAQs

Q1: During Gaussian Process (GP) regression on citizen science weather data, my model's predictive variance becomes unrealistically small (overconfident) in certain regions. What could be the cause and how do I fix it?

A1: This is typically caused by an inappropriate kernel choice or hyperparameters that don't account for noise correctly.

- Cause: The most common issue is underestimating the inherent noise (the `alpha` or `noise_level` parameter) in citizen science data, which can be highly heterogeneous. A stationary kernel (such as RBF) may also fail to capture local variations.

- Solution:
  - Re-evaluate your kernel. Consider a non-stationary kernel or a composite kernel (e.g., RBF + WhiteKernel). Use `gp.kernel_` to inspect the learned parameters.
  - Explicitly model noise. Include `WhiteKernel` in your kernel to capture independent noise. In scikit-learn, the `alpha` argument of GaussianProcessRegressor is a fixed value added to the diagonal, whereas WhiteKernel's `noise_level` is optimized.
  - Optimize hyperparameters via log-marginal-likelihood maximization, ensuring the bounds for the noise parameter are sensible.
  - Consider a heteroscedastic GP model if noise levels vary systematically with input location.
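A minimal scikit-learn sketch of the fix: a composite RBF + WhiteKernel with explicit bounds on the noise level, followed by inspection of the fitted kernel. The bounds, restart count, and function name are illustrative assumptions.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, WhiteKernel


def fit_gp_with_noise(X, y, seed=0):
    """Fit a GP whose kernel includes an explicit, learnable noise term."""
    kernel = (
        ConstantKernel(1.0, (1e-2, 1e2)) * RBF(length_scale=1.0, length_scale_bounds=(1e-2, 1e2))
        # WhiteKernel learns the i.i.d. noise variance; the lower bound stops
        # the optimizer from shrinking it toward zero (overconfident predictions)
        + WhiteKernel(noise_level=0.1, noise_level_bounds=(1e-3, 1e1))
    )
    gp = GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=3, random_state=seed)
    gp.fit(X, y)
    return gp
```

After fitting, `print(gp.kernel_)` shows the optimized hyperparameters; with this kernel structure the learned noise variance is `gp.kernel_.k2.noise_level`. A value sitting at its lower bound is a warning sign of overconfidence.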

Q2: When applying conformal prediction to generate intervals for a neural network predicting water quality from sensor data, my coverage is consistently below the desired confidence level (e.g., 90%). Why?

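A2: Split conformal prediction guarantees marginal coverage only when calibration and test data are exchangeable (e.g., IID). Consistent undercoverage therefore usually means this assumption is violated, most often by a distribution shift between your calibration and test sets, or that calibration data leaked into model training.

- Cause: Citizen science data often exhibit temporal or spatial drift. If the calibration set is not representative of the test data, coverage will fail.

- Solution:
  - Check the IID/exchangeability assumption: Stratify your calibration split to match the presumed test distribution (e.g., by time, location, contributor).
  - Use Adaptive Conformal Inference (ACI) or rolling/windowed calibration for time-series data.
  - Check your nonconformity measure. For regression, absolute-residual scores produce intervals of constant width, whereas CQR (Conformalized Quantile Regression) adapts the width to local noise levels, which typically gives more even coverage across heterogeneous subgroups. Ensure your underlying model is reasonably accurate.
  - Increase the calibration set size and use the finite-sample quantile. For a target coverage of 1-α with n calibration scores, take the ⌈(n+1)(1-α)⌉-th smallest score; small calibration sets give coverage that varies widely from split to split.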
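The finite-sample quantile is easy to get wrong. This NumPy sketch of absolute-residual split conformal prediction (function names are illustrative) uses the ⌈(n+1)(1-α)⌉-th smallest calibration score and returns an unbounded interval when the calibration set is too small for the requested α.

```python
import math

import numpy as np


def conformal_quantile(scores, alpha):
    """Finite-sample-corrected conformal quantile of calibration scores.

    The ceil((n + 1) * (1 - alpha))-th smallest score gives marginal coverage
    >= 1 - alpha when calibration and test points are exchangeable.
    """
    scores = np.sort(np.asarray(scores, dtype=float))
    n = scores.size
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:  # too few calibration points for this alpha
        return np.inf
    return scores[k - 1]


def split_conformal_interval(y_cal, pred_cal, pred_test, alpha=0.1):
    """Absolute-residual split conformal intervals around any point predictor."""
    q = conformal_quantile(np.abs(np.asarray(y_cal) - np.asarray(pred_cal)), alpha)
    pred_test = np.asarray(pred_test, dtype=float)
    return pred_test - q, pred_test + q
```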

Q3: I am combining a GP mean with conformal prediction intervals. The final intervals seem too wide and conservative. Is this expected?

A3: Yes, this can happen. You are layering two uncertainty quantification methods.

- Cause: The GP already provides a probabilistic (Bayesian) uncertainty estimate. Applying conformal prediction on top adds a frequentist, distribution-free guarantee, often leading to overly conservative intervals that combine both sources.

- Solution:

- Decide on the UQ paradigm. Do you need the Bayesian interpretation of GPs or the rigorous marginal coverage guarantee of conformal prediction? Using both is often redundant.

- Alternative Hybrid Approach: Use the GP to model the data, then apply split conformal prediction with a normalized nonconformity score, |y - mu(x)| / sigma(x), where mu(x) and sigma(x) are the GP predictive mean and standard deviation. The resulting intervals mu(x) ± q̂·sigma(x) keep the coverage guarantee while adapting their width to the GP's local uncertainty, which can yield sharper intervals.
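A sketch of the normalized-score hybrid, assuming you already have GP predictive means and standard deviations for a held-out calibration set and for the test points (for example from `GaussianProcessRegressor.predict(X, return_std=True)`). The function name is hypothetical.

```python
import math

import numpy as np


def normalized_conformal_interval(y_cal, mu_cal, sd_cal, mu_test, sd_test, alpha=0.1):
    """Split conformal intervals with a GP-normalized nonconformity score.

    Score: |y - mu(x)| / sd(x). The interval mu(x) +/- q * sd(x) is wider
    where the GP is less certain.
    """
    scores = np.sort(np.abs(np.asarray(y_cal) - np.asarray(mu_cal)) / np.asarray(sd_cal))
    n = scores.size
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    q = scores[k - 1] if k <= n else np.inf
    mu_test = np.asarray(mu_test, dtype=float)
    sd_test = np.asarray(sd_test, dtype=float)
    return mu_test - q * sd_test, mu_test + q * sd_test
```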

Q4: My computation time for GP scaling on large citizen science datasets (>50k points) is prohibitive. What are my options?

A4: Standard GP inference has O(n³) complexity. You must use approximate methods.

- Solutions:
  - Sparse / Variational GPs (SVGP): Use inducing points to create a low-rank approximation. Implement via GPyTorch or GPflow.
  - Kernel Approximations: Use random Fourier features (e.g., scikit-learn's `RBFSampler`) or the Nyström method (`Nystroem`) to approximate the kernel matrix before regression.
  - Local Approximation: For spatial data, partition the domain (e.g., into spatial tiles) and fit independent exact GPs to each subset, for example with sklearn.gaussian_process and n_restarts_optimizer=0 to limit optimization cost.
  - Switch to a Scalable Method: For pure prediction intervals, consider split conformal prediction around a cheaper model, or ensembles of neural networks.
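As one example of the kernel-approximation route, the sketch below pairs scikit-learn's `RBFSampler` (random Fourier features) with `BayesianRidge`, whose `predict(..., return_std=True)` supplies a predictive standard deviation. This approximates GP regression rather than reproducing an exact GP; the length scale, number of components, and function name are illustrative and should be tuned.

```python
import numpy as np
from sklearn.kernel_approximation import RBFSampler
from sklearn.linear_model import BayesianRidge


def fit_rff_regressor(X, y, length_scale=1.0, n_components=300, seed=0):
    """Approximate RBF-kernel GP regression with random Fourier features.

    RBFSampler approximates exp(-gamma * ||x - x'||^2); gamma = 1 / (2 * l^2)
    matches an RBF kernel with length scale l. Bayesian linear regression on
    the features costs O(n * D^2) instead of the exact GP's O(n^3).
    """
    rff = RBFSampler(gamma=1.0 / (2.0 * length_scale**2), n_components=n_components, random_state=seed)
    features = rff.fit_transform(X)
    model = BayesianRidge()
    model.fit(features, y)
    return rff, model
```

Predictions for new inputs are then `model.predict(rff.transform(X_new), return_std=True)`, which returns the mean and standard deviation.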

Key Experimental Protocols & Data

Protocol 1: Calibrating Conformal Prediction Intervals for Image-Based Species Identification

Objective: Generate prediction sets with at least 95% marginal coverage for a CNN classifier identifying bird species from citizen-uploaded images.

- Train/Calibration/Test Split: Randomly split the data 70%/15%/15%, ensuring all classes are represented in each split.

- Model Training: Train a ResNet-50 model on the training set using cross-entropy loss.

- Nonconformity Score: Use S_c(x, y) = 1 - f̂_y(x), where f̂_y(x) is the softmax score for the true class y.

- Calibration: Compute scores for all n instances in the calibration set. Take the ⌈(n+1)(1-α)⌉-th smallest score (α = 0.05), denoted q̂. This finite-sample correction to the plain (1-α)-quantile is what guarantees coverage of at least 1-α.

- Prediction: For a new test image x_test, the prediction set is C(x_test) = { y : f̂_y(x_test) ≥ 1 - q̂ }.

- Evaluation: Report coverage and average set size on the held-out test set.
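The calibration and prediction steps of this protocol can be sketched in NumPy, assuming the softmax outputs of the trained CNN are already available as arrays. The function name is illustrative, and the score is the simple 1 - f̂_y(x) used above, not the APS score listed in Table 1.

```python
import math

import numpy as np


def conformal_prediction_sets(probs_cal, labels_cal, probs_test, alpha=0.05):
    """Split conformal prediction sets with the score s(x, y) = 1 - f_y(x).

    probs_cal:  (n_cal, n_classes) softmax outputs on the calibration set
    labels_cal: (n_cal,) integer true labels
    probs_test: (n_test, n_classes) softmax outputs on new images
    Returns a boolean mask (n_test, n_classes); True = class is in the set.
    """
    probs_cal = np.asarray(probs_cal, dtype=float)
    n = probs_cal.shape[0]
    # Nonconformity score of the true class for each calibration image
    scores = 1.0 - probs_cal[np.arange(n), np.asarray(labels_cal)]
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    q_hat = np.sort(scores)[k - 1] if k <= n else np.inf
    # Keep every class whose softmax score is at least 1 - q_hat
    return np.asarray(probs_test, dtype=float) >= 1.0 - q_hat
```

Coverage is then the fraction of test images whose true class is in its set, and the average set size is `mask.sum(axis=1).mean()`.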

Protocol 2: Building a Heteroscedastic GP for Air Quality (PM2.5) Estimation

Objective: Model PM2.5 levels with spatially-varying noise using data from low-cost sensors.

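- Kernel Specification: Define a composite kernel: K = ConstantKernel() * RBF(length_scale=lat_lon_range) + WhiteKernel(noise_level_bounds=(1e-5, 1e-1)) + Matern(length_scale=time_range). Note that scikit-learn kernels act on all input columns, so restricting RBF to the spatial and Matern to the temporal dimensions requires per-dimension (anisotropic) length scales or a library with active dimensions (e.g., GPy's `active_dims`). Also note that WhiteKernel adds a single, constant noise variance; to make the noise vary by location, pass per-observation noise variances through the `alpha` argument of GaussianProcessRegressor or use a dedicated heteroscedastic GP.

- Model Fitting: Use GaussianProcessRegressor from sklearn. Optimize hyperparameters by maximizing the log-marginal-likelihood (L-BFGS-B).

- Prediction & UQ: Predict the mean and standard deviation (y_pred, y_std) for a grid of locations. The approximate 95% predictive interval is y_pred ± 1.96 * y_std.

- Validation: Perform spatial cross-validation and compute the Negative Log Predictive Density (NLPD) to assess probabilistic calibration.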
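For the validation step, the Gaussian NLPD can be computed directly from the predicted means and standard deviations. A minimal sketch (function name illustrative):

```python
import numpy as np


def gaussian_nlpd(y_true, y_pred, y_std):
    """Mean negative log predictive density under Gaussian predictions.

    Lower is better; it penalizes both inaccurate means and over- or
    under-confident standard deviations.
    """
    y_true, y_pred, y_std = (np.asarray(a, dtype=float) for a in (y_true, y_pred, y_std))
    var = y_std**2
    return float(np.mean(0.5 * np.log(2 * np.pi * var) + (y_true - y_pred) ** 2 / (2 * var)))
```

An overconfident model (small y_std with large errors) is penalized heavily by the second term, which is what makes NLPD a useful calibration check.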

Summarized Quantitative Data

Table 1: Comparison of UQ Methods on Citizen Science Benchmark Datasets

| Method | Dataset (Task) | Coverage Achieved | Interval Width (Mean) | Computational Cost (s) | Calibration Score (NLPD / Avg. Set Size) |

|---|---|---|---|---|---|

| GP (RBF Kernel) | Urban Temperature (Reg.) | 94.7% | ±2.34°C | 124.5 | 1.42 |

| GP (Matern 3/2) | Urban Temperature (Reg.) | 95.1% | ±2.41°C | 131.7 | 1.38 |

| Conformal (CQR) | River pH (Reg.) | 94.9% | ±0.52 pH | 0.8 | 1.15 pH |

| Conformal (APS) | Bird Species (Class.) | 95.2% | N/A | 1.2 | 2.3 species/set |

| Deep Ensemble | Water Turbidity (Reg.) | 93.8% | ±12.1 NTU | 305.0 | 2.01 |

Table 2: Impact of Calibration Set Size on Conformal Prediction Coverage (Target=90%)

| Calibration Set Size | Achieved Coverage (%) | Std. Dev. of Coverage (over 100 trials) |

|---|---|---|

| 100 | 88.4 | 3.2 |

| 500 | 89.6 | 1.4 |

| 1000 | 89.9 | 0.9 |

| 2000 | 90.1 | 0.6 |

Visualizations

Title: Conformal Prediction Workflow for Citizen Science Data

Title: Gaussian Process Inference for Uncertainty Quantification

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for ML-Based UQ in Citizen Science

| Item / Solution | Function in UQ Pipeline |

|---|---|

| GPyTorch / GPflow | Libraries for flexible, scalable Gaussian Process modeling, supporting variational inference and deep kernel learning. |

| MAPIE (Model Agnostic Prediction Interval Estimation) | Python package for conformal prediction methods on any scikit-learn-compatible estimator (regression & classification). |

| Low-Cost Sensor Calibration Reference Kit | Physical gold-standard measurements for calibrating citizen science sensors, crucial for defining ground-truth uncertainty. |

| Spatio-Temporal Data Augmentation Tools (e.g., AugLy) | Synthesizes realistic variations in citizen-sourced images/audio to test model robustness and improve uncertainty calibration. |

| IID / Covariate Shift Detection Kit (e.g., alibi-detect) | Statistical tests and models to verify the IID assumption for conformal prediction or detect dataset drift. |

| Cloud-based Labeling Platform (e.g., Label Studio) with Multi-Annotator Support | To capture inter-annotator disagreement, a key source of uncertainty in citizen science labels for classification tasks. |

Troubleshooting Guides & FAQs

Q1: My Latent Class Analysis (LCA) model will not converge. What could be the cause and how can I resolve this? A: Non-convergence often stems from model over-specification or poor starting values.

- Check: Ensure your participant skill data (e.g., binary correctness on tasks) does not have near-zero variance items or perfect collinearity.

- Action: Increase the number of random starts (`nrep` in R's poLCA) to avoid local maxima, and set `maxiter` to 5000. Simplify the model by reducing the number of latent classes and re-evaluate the fit indices.

Q2: How do I choose the correct number of participant skill classes? A: Use a combination of statistical fit indices and interpretability.

- Protocol: Run LCA models specifying 1 through k+1 classes (where k is a plausible maximum). Create a comparison table (see below). The optimal number of classes is often at the "elbow" of the BIC/CAIC curve and is the largest model whose LMR-A test against the model with one fewer class is still significant (p < 0.05); the first non-significant p-value (>0.05) indicates that adding a further class does not improve fit.
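The fit indices in the comparison table follow standard formulas: BIC = -2LL + p·ln N, CAIC = -2LL + p·(ln N + 1), and relative entropy 1 - Σ(-p_ic ln p_ic) / (N ln C). The sketch below computes them for one fitted model; the parameter count in the docstring assumes binary items, and the function name is illustrative.

```python
import numpy as np


def lca_fit_indices(log_lik, n_params, posterior):
    """BIC, CAIC and relative entropy for a fitted latent class model.

    log_lik:   maximized log-likelihood
    n_params:  free parameters; for C classes and K binary items this is
               (C - 1) class proportions + C * K response probabilities
    posterior: (N, C) posterior class-membership probabilities
    """
    posterior = np.asarray(posterior, dtype=float)
    n_obs, n_classes = posterior.shape
    bic = -2.0 * log_lik + n_params * np.log(n_obs)
    caic = -2.0 * log_lik + n_params * (np.log(n_obs) + 1.0)
    if n_classes == 1:
        entropy = 1.0  # single class: no classification uncertainty
    else:
        p = np.clip(posterior, 1e-12, 1.0)  # avoid log(0)
        entropy = 1.0 - np.sum(-posterior * np.log(p)) / (n_obs * np.log(n_classes))
    return {"BIC": float(bic), "CAIC": float(caic), "entropy": float(entropy)}
```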

Q3: How can I quantify and incorporate classification uncertainty from LCA into my overall citizen science data uncertainty framework? A: LCA outputs posterior probabilities of class membership for each participant.

- Method: Calculate the modal class assignment. Then, for each participant, use 1 - max(posterior probabilities) as a direct measure of classification uncertainty. This individual uncertainty metric can be used as a weighting factor in subsequent analyses of the citizen science data.

Q4: My item-response probabilities for different skill classes look very similar. What does this mean? A: This suggests the latent classes are not sufficiently distinct, potentially indicating the model is extracting "noise" rather than true skill groupings.

- Resolution: Consider whether your measured tasks are appropriate for discriminating skill levels; you may need to revise the task design. Also, apply a minimum class-size criterion (e.g., >5% of the sample) when selecting the number of classes, to avoid retaining spurious classes.

Table 1: Fit Indices for LCA Models with 1 to 5 Classes (Example from a Citizen Science Ecology App, N=1250 Participants)

| Number of Classes | Log-Likelihood | BIC | CAIC | Entropy | LMR-A p-value | Smallest Class % |

|---|---|---|---|---|---|---|

| 1 | -10234.5 | 20585.2 | 20612.3 | 1.000 | N/A | 100.0 |

| 2 | -8912.1 | 18009.8 | 18058.1 | 0.864 | <0.001 | 32.4 |

| 3 | -8765.4 | 17776.5 | 17846.0 | 0.891 | 0.012 | 18.7 |

| 4 | -8740.8 | 17788.4 | 17879.1 | 0.812 | 0.214 | 5.2 |

| 5 | -8735.2 | 17818.3 | 17930.2 | 0.809 | 0.427 | 4.8 |

Table 2: Item-Response Probabilities for the 3-Class Model (Selected Diagnostic Tasks)

| Task Description | Correct Response Prob. (Class 1: Novice) | Correct Response Prob. (Class 2: Competent) | Correct Response Prob. (Class 3: Expert) |

|---|---|---|---|

| Species Identification A | 0.23 | 0.78 | 0.99 |

| Data Quality Flagging | 0.15 | 0.82 | 0.97 |

| Measurement Calibration | 0.08 | 0.61 | 0.95 |

| Protocol Adherence Check | 0.31 | 0.92 | 0.98 |

Experimental Protocols

Protocol 1: Conducting Latent Class Analysis on Participant Skill Data

- Data Preparation: Compile binary or categorical data from N participants across K skill-assessment tasks. Code correct/optimal responses as 1 and others as 0. Handle missing data (e.g., multiple imputation or full-information ML).

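- Model Estimation: Use dedicated software (e.g., poLCA in R, PROC LCA in SAS, Mplus). Specify the formula: `f <- cbind(Task1, Task2, Task3, Task4) ~ 1`. Note that poLCA expects manifest variables coded as consecutive positive integers (1, 2, ...), so recode 0/1 outcomes as 1/2 before fitting.

- Iterate Models: Run models for a range of classes (C = 1 to C = 5 or 6). Use at least 100 random starts (e.g., nrep = 100 in poLCA) and 1000 iterations to help reach the global maximum likelihood.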

- Model Selection: Populate Table 1. Prioritize lower BIC/CAIC, higher entropy, significant LMR-A p-value for C vs. C-1, and substantive interpretability.

- Output Interpretation: Extract and plot item-response probability matrices (Table 2) and assign participants to their most likely class. Calculate posterior classification uncertainty.
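For readers without access to poLCA or Mplus, the estimation step can be illustrated with a small expectation-maximization (EM) implementation for binary items, with random restarts playing the role of poLCA's `nrep`. This is a teaching sketch under simplifying assumptions (no missing data, no standard errors), not a replacement for the packages listed above; names are illustrative.

```python
import numpy as np


def _row_logsumexp(a):
    """Numerically stable log(sum(exp(a))) along axis 1."""
    m = a.max(axis=1, keepdims=True)
    return (m + np.log(np.exp(a - m).sum(axis=1, keepdims=True))).ravel()


def fit_lca(Y, n_classes, n_starts=20, max_iter=1000, tol=1e-8, seed=0):
    """Latent class analysis for 0/1 items via EM; keeps the best of n_starts.

    Returns class proportions "pi", item-response probabilities "rho" (C, K),
    posterior membership probabilities (N, C) and the log-likelihood.
    """
    Y = np.asarray(Y, dtype=float)
    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_starts):
        # Random start: equal class sizes, random item-response probabilities
        pi = np.full(n_classes, 1.0 / n_classes)
        rho = rng.uniform(0.2, 0.8, size=(n_classes, Y.shape[1]))
        prev_ll = -np.inf
        for _ in range(max_iter):
            # E-step: log P(class c) + log P(response pattern | class c)
            log_joint = np.log(pi) + Y @ np.log(rho).T + (1 - Y) @ np.log(1 - rho).T
            row_ll = _row_logsumexp(log_joint)
            post = np.exp(log_joint - row_ll[:, None])
            ll = row_ll.sum()
            # M-step: update class proportions and item-response probabilities
            pi = post.mean(axis=0) + 1e-12
            pi /= pi.sum()
            rho = np.clip(post.T @ Y / (post.sum(axis=0)[:, None] + 1e-12), 1e-6, 1 - 1e-6)
            if ll - prev_ll < tol:
                break
            prev_ll = ll
        if best is None or ll > best["log_lik"]:
            best = {"pi": pi, "rho": rho, "posterior": post, "log_lik": float(ll)}
    return best
```

Rows of `rho` correspond to the item-response probability profiles in Table 2, and `1 - posterior.max(axis=1)` gives the per-participant classification uncertainty.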

Protocol 2: Integrating LCA Uncertainty into Citizen Science Data Aggregation

- Calculate Weights: For each participant i, compute the classification uncertainty w_i = 1 - max(pp_i), where pp_i is the vector of posterior class probabilities.

- Invert to Confidence: Create an analysis weight confidence_weight_i = 1 - w_i (or a scaled version).

- Apply to Project Data: In subsequent analyses of the citizen science observations (e.g., estimating species counts), use confidence_weight_i as a case weight, or in a weighted regression or Bayesian hierarchical model, to down-weight observations from participants with ambiguous skill-class membership.
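The weighting steps can be sketched as follows; the helper names are hypothetical and the weighted mean stands in for whatever downstream estimator the project uses.

```python
import numpy as np


def lca_confidence_weights(posterior):
    """Per-participant analysis weights from LCA posterior probabilities.

    w_i = 1 - max(pp_i) is the classification uncertainty, and
    confidence_weight_i = 1 - w_i = max(pp_i).
    """
    posterior = np.asarray(posterior, dtype=float)
    uncertainty = 1.0 - posterior.max(axis=1)
    return 1.0 - uncertainty


def weighted_mean(values, weights):
    """Case-weighted estimate, e.g. of the mean species count per survey."""
    values = np.asarray(values, dtype=float)
    weights = np.asarray(weights, dtype=float)
    return float(np.sum(weights * values) / np.sum(weights))
```

Because max(pp_i) can never fall below 1/C for C classes, one option for the "scaled version" is (max(pp_i) - 1/C) / (1 - 1/C), which maps a completely ambiguous participant to a weight of zero.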

Visualizations

LCA Workflow for Participant Skill Analysis

Integrating LCA into Broader Uncertainty Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for LCA in Citizen Science Research

| Item Name/Software | Function/Benefit |

|---|---|

| R Statistical Software (poLCA, tidyLPA, MplusAutomation) | Open-source platform with specialized packages for conducting LCA and managing output. |

| Mplus Software | Commercial software offering robust LCA, LTA, and complex mixture modeling capabilities. |

| Python (scikit-learn, PyMC3) | For machine learning approaches (Gaussian Mixture Models) and Bayesian LCA implementations. |

| Qualtrics/Google Forms | To design and deploy standardized skill assessment tasks to participants prior to or during projects. |

| Data Validation Scripts (Python/R) | Custom scripts to recode, clean, and format heterogeneous citizen science input for LCA. |

| Bayesian Posterior Sampling Tools (Stan) | For advanced uncertainty quantification from the LCA model itself, propagating it through analyses. |

Troubleshooting Guide: Metadata Integration for Data Quality

Q1: Our analysis shows unexpected spatial clusters of high measurement error. How can we determine if this is a real environmental phenomenon or an artifact caused by specific device models? A: This is a classic case for metadata-driven error modeling. Follow this protocol to isolate device effects from environmental signals.

- Query & Segment: Isolate measurements within the high-error clusters. Query your database for all associated device metadata (manufacturer, model, firmware version).

- Cross-Tabulate Analysis: Create a contingency table of error magnitude vs. device model. Calculate the error rate (e.g., measurements exceeding defined uncertainty thresholds) per model.

- Statistical Test: Perform a Chi-square test of independence to determine if error distribution is independent of device model. A significant p-value (<0.05) suggests a device-linked artifact.

- Control Analysis: Compare environmental variables (e.g., temperature, humidity) across device models within the cluster to rule out confounding factors.

Table 1: Hypothetical Error Rate by Device Model in Cluster "Alpha"

| Device Model | Total Measurements | Measurements > Error Threshold | Error Rate (%) |

|---|---|---|---|

| BioSensor Pro v1.2 | 1,540 | 400 | 26.0 |

| EnviroMonitor Lite | 892 | 89 | 10.0 |

| CellScope Home Kit | 1,203 | 121 | 10.1 |
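Applying the chi-square test from step 3 to the hypothetical counts in Table 1 takes a few lines with SciPy's `chi2_contingency`; the table is reshaped into above-threshold versus within-threshold counts per device model.

```python
import numpy as np
from scipy.stats import chi2_contingency

# Counts from Table 1 (hypothetical cluster "Alpha")
models = ["BioSensor Pro v1.2", "EnviroMonitor Lite", "CellScope Home Kit"]
totals = np.array([1540, 892, 1203])
errors = np.array([400, 89, 121])
# Contingency table: rows = device models, columns = [above, within] threshold
table = np.column_stack([errors, totals - errors])

chi2, p_value, dof, expected = chi2_contingency(table)
error_rates = errors / totals
for name, rate in zip(models, error_rates):
    print(f"{name}: {rate:.1%}")
print(f"chi2 = {chi2:.1f}, dof = {dof}, p = {p_value:.2e}")
```

With these counts the test rejects independence by a wide margin, driven by the BioSensor Pro v1.2 rate; the control analysis in step 4 is still needed before attributing the excess to the device.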

Q2: We observe a significant drift in measured values over the duration of our long-term study. How can we use timestamps to model and correct for this temporal drift? A: Temporal metadata is key to diagnosing instrumental drift vs. seasonal variation.

- Data Preparation: Aggregate data into weekly medians from all devices to minimize daily noise. Align all time-series data by the experiment start date (Day 0).

- Reference Signal Comparison: Plot the aggregated time-series against a known reference signal (e.g., control sample measurements, satellite data for environmental studies).

- Model Fitting: Fit a regression model (e.g., linear, polynomial, or LOWESS) to the difference between aggregated participant data and the reference signal over time. This model represents the systematic drift.

- Apply Correction: Subtract the fitted drift, evaluated at each individual measurement's timestamp, from that measurement (for multiplicative drift, divide by the fitted drift factor instead).
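A minimal sketch of the model-fitting and correction steps with a polynomial drift model, assuming additive drift and aggregated values already aligned with a reference signal. Function and argument names are illustrative; a LOWESS smoother would replace `np.polyfit` if a non-parametric trend is preferred.

```python
import numpy as np


def fit_and_remove_drift(days, values, reference, degree=1):
    """Estimate additive temporal drift against a reference and remove it.

    days:      time since Day 0 for each aggregated value (e.g., weekly medians)
    values:    aggregated participant measurements
    reference: reference signal at the same times
    degree:    polynomial degree of the drift model (1 = linear drift)
    Returns the corrected values and the fitted drift function.
    """
    days = np.asarray(days, dtype=float)
    values = np.asarray(values, dtype=float)
    diff = values - np.asarray(reference, dtype=float)
    drift = np.poly1d(np.polyfit(days, diff, deg=degree))  # systematic drift model
    return values - drift(days), drift
```

The returned `drift` function can be evaluated at each individual measurement's timestamp (days since Day 0) to correct raw, non-aggregated data.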

Q3: How can we leverage GPS metadata to improve the uncertainty quantification of species identification in a biodiversity app? A: Location data allows for Bayesian priors based on known species distributions.

- Prior Probability Matrix: Integrate with a trusted species distribution database (e.g., GBIF). For each 1km grid cell, calculate the historical frequency of species sightings.

- Model Integration: Use this spatial prior probability (P(Species\|Location)) in a Bayesian framework. Combine it with the device's model confidence score (the likelihood, P(Observation\|Species)) to calculate a posterior probability.

- Uncertainty Metric: The entropy or variance of the posterior distribution becomes a spatially-informed uncertainty metric, flagging identifications that are unlikely for the given location.
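The Bayesian combination in this workflow reduces to multiplying and renormalizing two vectors over candidate species. In this sketch, the identification model's scores stand in for P(Observation | Species), which is an approximation because classifier softmax outputs already reflect the class balance of the training data. Names are illustrative.

```python
import numpy as np


def location_informed_posterior(prior_by_location, likelihood):
    """Combine P(species | location) with P(observation | species).

    prior_by_location: (n_species,) historical sighting frequencies for the cell
    likelihood:        (n_species,) identification scores used as the likelihood
    Returns the normalized posterior and its Shannon entropy (in nats).
    """
    prior = np.asarray(prior_by_location, dtype=float)
    like = np.asarray(likelihood, dtype=float)
    unnorm = prior * like                  # Bayes' rule up to a constant
    posterior = unnorm / unnorm.sum()
    nz = posterior[posterior > 0]          # 0 * log(0) is taken as 0
    entropy = float(-(nz * np.log(nz)).sum())
    return posterior, entropy
```

Species never recorded in a grid cell receive zero prior probability and can never become plausible, so in practice a small pseudo-count is usually added to the historical frequencies.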

Spatial Bayesian Uncertainty Workflow

Frequently Asked Questions (FAQs)

Q: What are the most critical metadata fields to collect for error modeling in citizen science? A: The triad is Timestamp (UTC), Device ID/Model/Firmware, and Geographic Coordinates (lat/long with accuracy estimate). Ambient environmental sensors (if available) are highly valuable.

Q: How do we handle privacy concerns when collecting device and location metadata? A: Implement a clear data governance policy. Use data anonymization (hashing device IDs), aggregation (reporting location at city or regional level only), and obtain explicit, informed consent. Allow users to opt out of precise location sharing.

Q: Can we use metadata to identify and filter out malicious or spam submissions? A: Yes. Metadata patterns are strong indicators. Flags include: implausible timestamps (e.g., sequential submissions milliseconds apart from distant locations), unrealistic device IDs, or locations not pertinent to the study (e.g., ocean for a forest survey). These can feed into a spam-score model.
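One such metadata flag, implausible travel between consecutive submissions, can be computed from timestamps and coordinates. The sketch below assumes per-contributor submissions sorted by time and a hypothetical speed threshold of 300 km/h; both should be adapted to the project.

```python
import numpy as np


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between points in decimal degrees."""
    lat1, lon1, lat2, lon2 = map(np.radians, (lat1, lon1, lat2, lon2))
    a = np.sin((lat2 - lat1) / 2) ** 2 + np.cos(lat1) * np.cos(lat2) * np.sin((lon2 - lon1) / 2) ** 2
    return 6371.0 * 2 * np.arcsin(np.sqrt(a))


def flag_impossible_travel(timestamps_s, lats, lons, max_speed_kmh=300.0):
    """Flag submissions implying an implausible speed since the previous one.

    Inputs are one contributor's submissions sorted by time (Unix seconds).
    The first submission is never flagged.
    """
    t = np.asarray(timestamps_s, dtype=float)
    lats = np.asarray(lats, dtype=float)
    lons = np.asarray(lons, dtype=float)
    dist = haversine_km(lats[:-1], lons[:-1], lats[1:], lons[1:])
    hours = np.maximum(np.diff(t), 1e-3) / 3600.0  # guard against zero gaps
    return np.concatenate([[False], dist / hours > max_speed_kmh])
```

The boolean output can be used directly as one input feature of the spam-score model.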

Q: What is a simple first step to start incorporating metadata into our error analysis? A: Begin with visual exploratory data analysis (EDA). Plot measurement distributions (boxplots) grouped by device model and time of day. This often reveals immediate, actionable patterns of systematic bias.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Metadata-Enabled Error Modeling

| Resource / Tool | Category | Primary Function in Error Modeling |

|---|---|---|

| Pandas (Python library) | Data Wrangling | Efficiently merge, filter, and aggregate large datasets using timestamps and device IDs as keys. |

| SQL Database (e.g., PostgreSQL/PostGIS) | Data Management | Store and query spatial-temporal metadata, enabling complex queries (e.g., "find all devices within 10km of point X on date Y"). |

| Scikit-learn / Statsmodels | Statistical Modeling | Build and evaluate regression models for temporal drift and classifiers for device-specific error patterns. |

| Bayesian Inference Library (e.g., PyMC3, Stan) | Probabilistic Modeling | Quantify uncertainty by integrating spatial priors with observational data in a formal statistical framework. |

| GBIF API | External Data | Access species distribution priors for biodiversity studies to create location-based probability models. |

| Geographic Hash (e.g., H3, S2) | Spatial Indexing | Convert lat/long coordinates into discrete, hierarchical grid cells for efficient spatial aggregation and anonymization. |

Troubleshooting Guides and FAQs

General UQ Implementation Issues

Q: My MCMC sampling is extremely slow or gets stuck. What are the first steps to diagnose this? A: This is common with complex models or poor parameterization.

- Check Priors: Non-informative or overly broad priors can cause sampling issues. Use weakly informative priors to regularize.

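- Reparameterize: For hierarchical models, use non-centered parameterizations. brms already applies one automatically to group-level terms such as (1 | participant_id); in hand-written Stan or PyMC models you must code it yourself.

- Scale Data: Standardize or center your numeric predictors (mean = 0, sd = 1) to improve sampler efficiency.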

- Diagnostics: Examine trace plots and R-hat values. High R-hat (>1.01) indicates chains have not converged.

Q: I am getting divergent transitions in Stan/PyMC3. What do they mean? A: Divergent transitions indicate the sampler cannot accurately explore the posterior geometry, often due to high curvature in the model. Solutions include:

- Increase the adapt_delta parameter (e.g., to 0.95 or 0.99) so the sampler takes smaller, more accurate steps; the PyMC equivalent is the target_accept argument of pm.sample.

- Simplify the model structure.

- Re-examine and potentially reparameterize the model as above.

Q: How do I choose between a Gaussian Process (GPy) and a Bayesian hierarchical model (brms/PyMC3) for quantifying uncertainty in citizen science observations? A: The choice depends on the uncertainty source you wish to capture.

- Use a Gaussian Process (GPy): To model spatial or temporal autocorrelation in the data-generating process itself (e.g., modeling pollution levels across a city where nearby readings are correlated).

- Use a Bayesian Hierarchical Model (brms/PyMC3/Stan): To model structured uncertainty from the observation process, such as varying observer skill (participant_id as a random effect), device calibration differences, or systematic biases per observation protocol.

Software-Specific Issues

R (brms)

Q: The brms formula syntax for complex hierarchical models is confusing. How do I structure a model for citizen scientist random effects?

A: Use the (1 | ID) syntax for varying intercepts and (x | ID) for varying slopes.

- Example: y ~ x + (1 + x | participant_id) + (1 | location_id) models a global effect of x, allows the intercept and the slope for x to vary by participant, and includes a varying intercept for each location.

Q: How do I extract and visualize posterior predictive checks in brms?

A: Use the pp_check() function.

Python (PyMC3/PyMC)

Q: I get "TypeError: No loop matching the specified signature and casting was found" in PyMC3. What's wrong?

A: This often arises from dtype mismatches between Theano/Aesara tensors and NumPy arrays. Ensure your input data (pm.Data) and model parameters are float arrays (np.float32 or np.float64).

Q: How do I implement a Gaussian Process for spatial UQ in the latest PyMC?

A: Use pm.gp.Marginal or pm.gp.Latent with an appropriate kernel (e.g., pm.gp.cov.ExpQuad).

Python (GPy)

Q: My GPy model optimization fails or returns "NaN" results. A:

- Scale your output (Y) data. GPy models use a zero-mean prior and default kernel hyperparameters (e.g., variance = 1.0) suited to roughly unit-scale outputs, so standardize: Y = (Y - Y.mean()) / Y.std().

- Initialize parameters sensibly. Set the lengthscale to a reasonable guess (e.g., the mean distance between points) and the variance to the variance of your scaled output.

- Constrain parameters. GPy keeps variance and lengthscale positive by default; if the optimizer drifts to extreme values, add bounds (e.g., constrain_bounded) and optimize from multiple starting points (e.g., optimize_restarts).
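A NumPy sketch of the first two steps, producing the standardized outputs and initial hyperparameter guesses. The mean-pairwise-distance heuristic follows the advice above and uses O(n²) memory, so subsample large datasets first. The function name is illustrative.

```python
import numpy as np


def gp_preprocess(X, Y):
    """Standardize outputs and suggest initial GP hyperparameters.

    Heuristics (assumptions, not GPy defaults): initial lengthscale = mean
    pairwise distance between inputs; initial variance = variance of the
    standardized outputs (1.0 by construction).
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    y_mean, y_std = Y.mean(), Y.std()
    Y_scaled = (Y - y_mean) / y_std
    diffs = X[:, None, :] - X[None, :, :]
    dists = np.sqrt((diffs**2).sum(axis=-1))
    iu = np.triu_indices(len(X), k=1)  # each pair counted once
    init_lengthscale = float(dists[iu].mean())
    init_variance = float(Y_scaled.var())
    return Y_scaled, init_lengthscale, init_variance, (y_mean, y_std)
```

These values can then be passed to GPy, for example `GPy.kern.RBF(input_dim=X.shape[1], variance=init_variance, lengthscale=init_lengthscale)` and `GPy.models.GPRegression(X, Y_scaled, kern)` (GPy expects Y as a 2-D column array), followed by `m.optimize_restarts(num_restarts=10)`. Keep `(y_mean, y_std)` to transform predictions back to the original units.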

Q: How do I quantify epistemic uncertainty from a GPy model?

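A: The posterior variance of the latent function is the measure of epistemic (model) uncertainty. In GPy, gp.predict(Xnew) returns the predictive variance including the Gaussian noise term (aleatoric uncertainty); use gp.predict_noiseless(Xnew) to obtain the latent-function variance alone.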

Stan

Q: My Stan model compiles but throws a runtime error about "positive definite" matrix. A: This typically occurs in multivariate normal distributions or Cholesky factorizations. Ensure any covariance matrix is properly constructed. Use Cholesky factorized parameterizations for efficiency and stability.

- Instead of: multi_normal(mu, Sigma)
- Use: multi_normal_cholesky(mu, L_Sigma), where L_Sigma is the Cholesky factor of Sigma.
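When even the Cholesky parameterization fails at runtime, the covariance is usually numerically singular (for example, a GP kernel evaluated at duplicate locations). A common remedy is adding a small jitter to the diagonal; in Stan this is typically done inside the model before calling `cholesky_decompose`. The NumPy sketch below (illustrative name and jitter schedule) shows the idea outside Stan.

```python
import numpy as np


def safe_cholesky(cov, jitter=1e-9, max_tries=6):
    """Cholesky factor of a covariance matrix, adding diagonal jitter if needed.

    Tries a plain factorization first, then adds jitter that grows tenfold
    per retry. Returns (L, jitter_used).
    """
    cov = np.asarray(cov, dtype=float)
    cov = 0.5 * (cov + cov.T)  # enforce exact symmetry
    for attempt in range(max_tries):
        extra = 0.0 if attempt == 0 else jitter * 10 ** (attempt - 1)
        try:
            return np.linalg.cholesky(cov + extra * np.eye(cov.shape[0])), extra
        except np.linalg.LinAlgError:
            continue
    raise np.linalg.LinAlgError("matrix is not positive definite even with jitter")
```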

Q: How do I pass citizen science hierarchical data (grouped by observer) efficiently to Stan? A: Use "ragged array" structures or pre-compute indices for efficiency.
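A minimal sketch of the pre-computed index approach in NumPy: map observer IDs to 1-based integers (Stan arrays are 1-indexed) and, for data sorted by observer, compute each observer's start position and size for use with Stan's `segment()`. The dictionary keys are hypothetical names for the Stan data block.

```python
import numpy as np


def stan_group_indices(participant_ids):
    """Grouping data for long-format observations passed to Stan.

    "participant": 1-based observer index for each observation (original order).
    "order": permutation that sorts observations by observer; "group_start" and
    "group_size" (1-based) describe each observer's block after that sort.
    """
    ids = np.asarray(participant_ids)
    labels, idx0 = np.unique(ids, return_inverse=True)  # idx0: 0-based codes
    idx0 = idx0.ravel()
    sizes = np.bincount(idx0, minlength=len(labels))
    starts = np.concatenate([[1], 1 + np.cumsum(sizes)[:-1]])
    return {
        "J": int(len(labels)),
        "participant": (idx0 + 1).tolist(),
        "order": np.argsort(idx0, kind="stable"),
        "group_start": starts.tolist(),
        "group_size": sizes.tolist(),
        "labels": labels.tolist(),
    }
```

For the simple index approach, pass `J` and `participant` and write `theta[participant[n]]` in the model; for the ragged approach, reorder the data with `order` first and use `group_start` and `group_size` with `segment()`.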

Experimental Protocols for Citizen Science UQ

Protocol 1: Quantifying Observer Bias with a Bayesian Hierarchical Model

Objective: To isolate and quantify the uncertainty introduced by variable observer skill in species identification.

Materials & Software: R with brms, or Python with PyMC. Dataset with columns: observation_id, true_species (verified by expert), reported_species (from citizen scientist), participant_id, observation_conditions.

Methodology:

- Data Preparation: Encode species as integers. Create a binary variable

correct(1 iftrue_species == reported_species, else 0). - Model Specification: Fit a logistic hierarchical (multilevel) regression.

- Global Model:

correct ~ 1 + observation_conditions - Varying Effects:

(1 | participant_id)+(observation_conditions | participant_id). This estimates a varying intercept (baseline accuracy) and varying slopes (effect of conditions) for each participant.

- Global Model:

- Inference: Run MCMC sampling (4 chains, 2000 iterations each).

- UQ Extraction: Extract the posterior distribution of the participant_id random effects. The posterior of their group-level standard deviation quantifies the population-level variation in observer skill, and the individual participant-level estimates (with their credible intervals) quantify each observer's bias.
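The data-preparation and UQ-extraction steps can be sketched as follows. Fitting is left to brms or PyMC; this code assumes the posterior draws of the participant intercepts have already been exported as a (draws x participants) array, and the function names are hypothetical. brms also reports the group-level standard deviation directly as a model parameter; the per-draw spread computed here is a finite-sample summary of the same idea, shown only for illustration.

```python
import numpy as np

def add_correct_flag(true_species, reported_species):
    """Binary outcome for the logistic model: 1 if the citizen report matches
    the expert-verified species, else 0."""
    return np.array([int(t == r) for t, r in zip(true_species, reported_species)])

def summarize_participant_effects(draws, participant_ids, level=0.95):
    """Summarize exported posterior draws of participant intercepts.

    draws: array of shape (n_draws, n_participants).
    Returns {participant_id: (mean, lower, upper)} and, for each draw, the
    standard deviation of effects across participants.
    """
    draws = np.asarray(draws, dtype=float)
    tail = (1.0 - level) / 2.0
    means = draws.mean(axis=0)
    lower = np.quantile(draws, tail, axis=0)
    upper = np.quantile(draws, 1.0 - tail, axis=0)
    per_participant = {
        pid: (means[j], lower[j], upper[j]) for j, pid in enumerate(participant_ids)
    }
    # Spread of participant effects within each draw: a finite-sample summary
    # of between-observer variation in skill (ddof=1 needs >= 2 participants).
    spread_per_draw = draws.std(axis=1, ddof=1)
    return per_participant, spread_per_draw

# Illustrative usage with simulated draws (not real model output).
rng = np.random.default_rng(0)
fake_draws = rng.normal(loc=[0.5, -0.3, 1.1], scale=0.2, size=(4000, 3))
summary, spread = summarize_participant_effects(fake_draws, ["p1", "p2", "p3"])
flags = add_correct_flag(["robin", "wren"], ["robin", "sparrow"])
```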

Protocol 2: Modeling Spatial Autocorrelation with Gaussian Processes

Objective: To model and predict a spatially continuous phenomenon (e.g., air quality) while formally accounting for spatial correlation in uncertainty.

Materials & Software: Python with GPy or PyMC (pm.gp module). Dataset with columns: latitude, longitude, measured_value (e.g., PM2.5), sensor_id.

Methodology:

- Data Preprocessing: Standardize measured_value (mean = 0, std = 1). Combine latitude and longitude into a 2D input matrix X.

- Kernel Selection: Choose a Radial Basis Function (RBF, also called Exponentiated Quadratic) kernel to model smooth spatial variation. Optionally add a White Noise kernel to capture independent sensor noise.

- Model Optimization: Initialize the GP and optimize hyperparameters (lengthscale, variance) by maximizing the marginal likelihood.

- Prediction & UQ: Predict on a dense grid of locations covering the study area. The predictive mean gives the interpolated field. The predictive variance at each point provides a spatially explicit map of total uncertainty. This variance is higher in regions far from any observation point.
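To make the prediction step concrete, here is a minimal exact-GP sketch in NumPy with an RBF plus white-noise kernel. Unlike the protocol, it assumes the hyperparameters are already fixed (for example, taken from a GPy fit) rather than optimizing the marginal likelihood, and it separates the latent (epistemic) variance from the total variance, which also includes the white-noise term. Function names and example values are hypothetical.

```python
import numpy as np

def rbf_kernel(A, B, lengthscale, variance):
    """Exponentiated quadratic (RBF) covariance between the rows of A and B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return variance * np.exp(-0.5 * sq_dist / lengthscale ** 2)

def gp_predict(X, y, Xnew, lengthscale=1.0, variance=1.0, noise=0.1):
    """Exact GP regression with an RBF + white-noise kernel and fixed
    hyperparameters. Returns the predictive mean, the latent (epistemic)
    variance, and the total variance (latent + white noise) per row of Xnew."""
    X, y, Xnew = (np.asarray(a, dtype=float) for a in (X, y, Xnew))
    K = rbf_kernel(X, X, lengthscale, variance) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))   # K^{-1} y
    K_star = rbf_kernel(X, Xnew, lengthscale, variance)    # shape (n_train, n_new)
    mean = K_star.T @ alpha
    v = np.linalg.solve(L, K_star)                         # L^{-1} K_star
    latent_var = variance - (v ** 2).sum(axis=0)           # diagonal of posterior covariance
    return mean, latent_var, latent_var + noise

# Standardized sensor coordinates and readings (illustrative only).
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
y = np.array([0.5, -0.2, 0.1])
xs = np.linspace(-1.0, 3.0, 5)
grid = np.array([[a, b] for a in xs for b in xs])          # dense prediction grid
grid_mean, grid_latent_var, grid_total_var = gp_predict(X, y, grid)
```

Far from every sensor the kernel terms vanish, so the latent variance returns to the prior variance; this is the "higher uncertainty away from observations" pattern described above.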

Table 1: Comparison of UQ Software Features

| Feature | R (brms) | Python (PyMC/PyMC3) | Python (GPy) | Stan |
|---|---|---|---|---|
| Primary Strength | Accessible formula interface, integrates with tidyverse | Flexible, Python-native API, active development | Specialized for Gaussian Processes | Speed, efficiency, control (C++ backend) |
| Best For | Rapid prototyping of hierarchical models | General Bayesian modeling, custom GP implementations | Pure GP regression/classification tasks | Complex custom models where performance is critical |
| MCMC Sampler | NUTS (via Stan) | NUTS | N/A (MLE/MAP for GPs) | NUTS and static HMC (L-BFGS is available for optimization, not sampling) |
| GP Implementation | Via gp() terms in the model formula | pm.gp module | Core functionality | Manual implementation via functions |
| Learning Curve | Low (for R users) | Moderate | Moderate (for GPs) | High |

Table 2: Typical Runtime for a Hierarchical Logistic Model (5000 obs, 100 groups)

| Software | Model Specification | Mean Sampling Time (4 chains, 2000 iter) | Notes |
|---|---|---|---|
| R (brms) | y ~ x + (1 \| group) | 45-60 seconds | Includes compilation time. |
| PyMC3 | Explicit pm.Model() with pm.HalfNormal for sd | 90-120 seconds | Python overhead; can vary. |
| Stan (cmdstanr) | Equivalent .stan code | 30-40 seconds | Fastest after compilation. |

Visualizations

Title: Bayesian UQ Workflow for Citizen Science Data

Title: Hierarchical Model Structure for Observer Bias

The Scientist's Toolkit: Research Reagent Solutions

| Item/Software | Function in UQ for Citizen Science |
|---|---|
| R + brms | High-level modeling reagent. Provides a user-friendly, formula-based interface to Stan for quickly building and testing hierarchical models to quantify observer- and group-level uncertainty. |
| Python + PyMC | Flexible modeling environment. Enables the construction of highly customized probabilistic models (including GPs) for capturing complex, project-specific uncertainty structures. |
| Python + GPy | Spatiotemporal correlation reagent. Specialized library for constructing Gaussian Process models that explicitly quantify uncertainty arising from spatial or temporal autocorrelation in measurements. |
| Stan (via cmdstanr/pystan) | High-performance inference engine. The underlying compiler for defining and efficiently sampling from complex Bayesian models when custom likelihoods or high performance is required. |
| Weakly Informative Priors | Regularization reagent. Prior distributions (e.g., normal(0, 1)) that gently constrain parameters to plausible ranges, stabilizing inference and improving MCMC sampling in hierarchical models. |
| Posterior Predictive Checks | Model validation reagent. A diagnostic procedure to compare model-generated data with observed data, ensuring the quantified uncertainty is consistent with reality. |

Mitigating Risk: Strategies to Identify, Correct, and Optimize Noisy Data Streams

Welcome to the Technical Support Center. This resource provides troubleshooting guides and FAQs for researchers implementing red flag detection algorithms within citizen science data pipelines, a critical component for quantifying uncertainty.

Frequently Asked Questions & Troubleshooting

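Q1: Our anomaly detection model (Isolation Forest) is flagging an excessive number of valid data points from experienced participants as outliers. What could be the cause?

A: This is often a preprocessing or configuration issue. Citizen science data often mixes continuous (e.g., temperature readings) and categorical (e.g., habitat type) features. Encoding categories as arbitrary integers imposes a false ordering on them, and standard scaling (mean and standard deviation) is itself distorted by the outliers you are trying to detect. Also check the contamination setting, which, when set to a fraction, fixes the share of training points the model labels as outliers. Solution: Apply Robust Scaling (which uses the median and IQR) to continuous features and One-Hot Encoding to categorical features before model training. Isolation Forest itself is largely insensitive to per-feature linear rescaling, because it splits each feature at random between its observed minimum and maximum, so scaling matters most when the same features also feed distance-based checks.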
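The recommended preprocessing can be sketched without scikit-learn; in practice, sklearn's RobustScaler and OneHotEncoder (combined in a ColumnTransformer) do the same job. The helper names and the example readings below are hypothetical.

```python
import numpy as np

def robust_scale(column):
    """Center on the median and divide by the interquartile range (IQR),
    mirroring sklearn's RobustScaler defaults (25th-75th percentile range)."""
    x = np.asarray(column, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    iqr = q3 - q1
    return (x - median) / (iqr if iqr > 0 else 1.0)  # avoid dividing by zero

def one_hot(column):
    """Encode categories as 0/1 indicator columns in sorted category order."""
    categories = sorted(set(column))
    index = {c: i for i, c in enumerate(categories)}
    out = np.zeros((len(column), len(categories)))
    for row, value in enumerate(column):
        out[row, index[value]] = 1.0
    return out, categories

temperature = [18.0, 19.0, 20.0, 21.0, 95.0]       # one implausible reading
habitat = ["forest", "urban", "forest", "wetland", "urban"]
scaled_temperature = robust_scale(temperature)
encoded_habitat, habitat_categories = one_hot(habitat)
features = np.hstack([scaled_temperature[:, None], encoded_habitat])
```

Because the median and IQR ignore the extreme 95.0 reading, the typical readings keep a usable spread instead of being squeezed together as they would be under mean/standard-deviation scaling.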

Q2: How do we distinguish between a systematic sensor error and a true environmental anomaly in distributed sensor network data?

A: Implement a spatial consistency check. For each sensor node i, compare its reading R_i with the median reading M_adj of all other nodes within a defined geographical radius, and calculate the deviation D_i = |R_i - M_adj|. Flag a systematic error if D_i exceeds a threshold in more than 70% of readings over a defined period while the adjacent nodes remain consistent with one another. A true environmental anomaly, by contrast, appears in several neighboring nodes at once (spatial clustering), so it does not set one node apart from its neighbors.
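A minimal sketch of this check in Python, assuming time-aligned readings for every node and a project-chosen radius and threshold (the function names, data layout, and example values are illustrative). It excludes each node from its own neighbor median and does not implement the separate test that the neighbors agree with one another.

```python
import math
import statistics

def haversine_km(lat1, lon1, lat2, lon2, radius_km=6371.0):
    """Great-circle distance (km) between two points in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))

def flag_systematic_error(sensors, readings, radius_km, threshold, min_fraction=0.7):
    """sensors: {node: (lat, lon)}; readings: {node: [reading per time step]}.
    Flags nodes whose deviation D_i = |R_i - M_adj| exceeds `threshold` in
    more than `min_fraction` of time steps."""
    flagged = []
    for node, (lat, lon) in sensors.items():
        neighbors = [
            other for other, (olat, olon) in sensors.items()
            if other != node and haversine_km(lat, lon, olat, olon) <= radius_km
        ]
        if not neighbors:
            continue  # nothing to compare against
        series = readings[node]
        exceed = sum(
            abs(r - statistics.median(readings[o][t] for o in neighbors)) > threshold
            for t, r in enumerate(series)
        )
        if exceed / len(series) > min_fraction:
            flagged.append(node)
    return flagged

sensors = {"A": (52.0, 13.0), "B": (52.001, 13.0), "C": (52.0, 13.001), "D": (52.001, 13.001)}
readings = {"A": [10, 11, 12], "B": [10, 11, 12], "C": [10.5, 11, 12], "D": [20, 21, 22]}
suspect_nodes = flag_systematic_error(sensors, readings, radius_km=1.0, threshold=3.0)
```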

Q3: Our inter-rater reliability (IRR) score (Fleiss' Kappa) is dropping after the first phase of an image classification project. What steps should we take?

A: A dropping Kappa often indicates task fatigue or ambiguous guidelines. First, segment your analysis by participant tenure and calculate Kappa per user group (new vs. experienced). If the drop is isolated to experienced users, it may be "volunteer drift." Protocol: 1) Re-calibrate with a gold-standard test set issued to all users. 2) Enhance feedback: immediately show users their score vs. consensus on recent tasks. 3) Re-clarify the classification guidelines with updated examples of ambiguous cases.
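Fleiss' Kappa for each user group can be computed from an items x categories count table. The sketch below follows the standard formula (statsmodels.stats.inter_rater.fleiss_kappa is a library equivalent). It assumes every item received the same number of ratings within a group, which may require subsampling raters; the count tables are illustrative.

```python
import numpy as np

def fleiss_kappa(counts):
    """counts[i, j] = number of raters who assigned item i to category j.
    Every item must have the same number of ratings n (n >= 2)."""
    counts = np.asarray(counts, dtype=float)
    n_items = counts.shape[0]
    n = counts[0].sum()
    if not np.allclose(counts.sum(axis=1), n):
        raise ValueError("each item needs the same number of ratings")
    p_j = counts.sum(axis=0) / (n_items * n)                # category proportions
    P_i = ((counts ** 2).sum(axis=1) - n) / (n * (n - 1))   # per-item agreement
    P_bar, P_e = P_i.mean(), (p_j ** 2).sum()               # observed vs. chance
    if P_e == 1.0:
        raise ValueError("kappa undefined: all ratings fall in one category")
    return (P_bar - P_e) / (1.0 - P_e)

# Compare groups by building one count table per tenure group.
kappa_full_agreement = fleiss_kappa([[3, 0], [0, 3]])
kappa_split_votes = fleiss_kappa([[1, 1], [1, 1]])
```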

Q4: What is the minimum sample size for reliably training a supervised error detection classifier?

A: The number of labeled samples required depends on feature complexity. Use the following heuristic table based on empirical studies:

| Model Type | Recommended Minimum Samples per Class | Key Considerations for Citizen Science |
|---|---|---|
| Logistic Regression | 50-100 | Use for baseline; requires manual labeling of "error" vs. "clean" data points. |
| Random Forest | 100-200 | Robust to non-linear relationships; provides feature importance for audit. |
| Simple Neural Net | 500+ | Only viable for large, mature projects with dedicated validation teams. |

Q5: How can we detect and mitigate "bot" or malicious participant behavior efficiently?

A: Implement a multi-layered detection workflow. Key metrics include: 1) Temporal Analysis: submission frequency beyond human capability (e.g., <100 ms per task). 2) Pattern Detection: repetitive, non-random error patterns. 3) Metadata Verification: impossible geolocation jumps. A rule-based filter can be implemented as per the protocol below.

Experimental Protocol: Rule-Based Filter for Malicious Participant Detection

Objective: To algorithmically identify and flag potentially non-human or malicious participants in a citizen science data stream.

Materials: Timestamped submission logs, user ID, task ID, geolocation (optionally IP-derived), and response data.

Methodology:

- Calculate Submission Velocity: For each user, compute the time delta between consecutive submissions over a 24-hour window. Flag user if >15% of deltas are <150 ms.

- Analyze Response Entropy: For categorical tasks, calculate the Shannon entropy (H) of the user's response distribution. Uniformly random responses push H toward its maximum (log2 k for k categories), while fixed responses push it toward 0. Flag if H falls outside the 5th-95th percentile range of the validated user cohort.

- Spatio-Temporal Consistency Check (if geolocation available): Calculate the physical distance between subsequent submissions using the Haversine formula. Flag if distance implies impossible travel speed (>900 km/h) for sequential tasks.

- Aggregate Flagging: Assign each user a composite risk score (e.g., 1 point per triggered rule). Quarantine data from users with a score >=2 for manual review.

Output: A list of flagged User IDs, their risk scores, and the specific rules triggered, for audit.
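The protocol's rules can be sketched for a single user as below. Thresholds follow the protocol (150 ms, 15%, 900 km/h, score >= 2); the data layout, the function names, and the cohort entropy range passed in are assumptions for illustration.

```python
import math
from collections import Counter

def haversine_km(lat1, lon1, lat2, lon2, radius_km=6371.0):
    """Great-circle distance (km) between two points in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))

def shannon_entropy(responses):
    """Shannon entropy H (bits) of a user's categorical responses."""
    counts = Counter(responses)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def risk_score(timestamps_ms, responses, entropy_range, coords=None,
               min_delta_ms=150, max_fast_fraction=0.15, max_speed_kmh=900):
    """One point per triggered rule. timestamps_ms: sorted submission times in
    a 24-hour window; entropy_range: cohort (5th, 95th) percentiles of H;
    coords: list of (lat, lon) per submission, or None if unavailable."""
    triggered = []
    deltas = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if deltas and sum(d < min_delta_ms for d in deltas) / len(deltas) > max_fast_fraction:
        triggered.append("velocity")
    h = shannon_entropy(responses)
    if not entropy_range[0] <= h <= entropy_range[1]:
        triggered.append("entropy")
    if coords:
        for k in range(1, len(coords)):
            hours = (timestamps_ms[k] - timestamps_ms[k - 1]) / 3.6e6
            km = haversine_km(*coords[k - 1], *coords[k])
            if km > 0 and (hours <= 0 or km / hours > max_speed_kmh):
                triggered.append("travel")
                break
    return len(triggered), triggered

def needs_review(score, cutoff=2):
    """Quarantine rule from the protocol: a score of 2 or more goes to manual review."""
    return score >= cutoff

# Hypothetical bot-like user: 50 ms between submissions, always the same answer.
bot_score, bot_rules = risk_score([0, 50, 100, 150, 200], ["A"] * 5, entropy_range=(1.0, 2.2))
```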

Research Reagent & Digital Toolkit

| Item / Solution | Function in Red Flag Detection |
|---|---|
| RobustScaler (sklearn.preprocessing) | Scales features using statistics robust to outliers (median & IQR), crucial for pre-processing skewed citizen science data. |
| Fleiss' Kappa (irr package in R/statsmodels in Python) | Statistical measure for assessing the reliability of agreement between multiple raters (citizen scientists). |
| Isolation Forest (sklearn.ensemble) | Unsupervised anomaly detection algorithm that isolates anomalies based on random partitioning, effective for high-dimensional datasets. |
| SHAP (SHapley Additive exPlanations) Library | Explains output of machine learning models, identifying which features contributed most to a "red flag" prediction. |
| Synthetic Minority Over-sampling (SMOTE) | Generates synthetic samples for the "error" class when creating supervised models, addressing imbalanced datasets. |
| Haversine Formula (geopy.distance) | Calculates great-circle distance between geographic points, enabling spatial consistency checks. |

Visualizations

Anomaly Detection Workflow for Citizen Science Data

Systematic Error vs. True Anomaly Decision Pathway

Technical Support Center: Calibration & Uncertainty Troubleshooting

This support center provides resources for researchers integrating citizen science data into projects where quantifying uncertainty is critical. The following guides address common calibration challenges.

Frequently Asked Questions (FAQs)

Q1: Despite initial training, our participants consistently misclassify a specific phenological stage in plant images (e.g., "first bloom" vs. "full bloom"). How can we correct this systemic error?

A: This indicates calibration drift. Implement a structured feedback loop.

- Identify: Use a gold-standard subset of images (expert-verified) to flag participant classifications with low confidence scores.

- Analyze: Create a confusion matrix to pinpoint the exact misclassification.

- Intervene: Push targeted "refresher" training modules—specifically highlighting the confused categories with side-by-side examples—to the affected participants.

- Validate: Re-calibrate using a new test set. Track participant accuracy scores over time in a dashboard to monitor improvement.
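The Analyze step can be sketched by tallying (expert, participant) label pairs on the gold-standard images and ranking the off-diagonal cells of the resulting confusion matrix. The stage labels and function names below are hypothetical.

```python
from collections import Counter

def confusion_counts(expert_labels, participant_labels):
    """Count (expert label, participant label) pairs on gold-standard images."""
    return Counter(zip(expert_labels, participant_labels))

def top_confusions(expert_labels, participant_labels, k=3):
    """Most frequent off-diagonal cells: which true stage is reported as which."""
    counts = confusion_counts(expert_labels, participant_labels)
    off_diagonal = {pair: n for pair, n in counts.items() if pair[0] != pair[1]}
    return sorted(off_diagonal.items(), key=lambda item: item[1], reverse=True)[:k]

expert = ["full bloom", "full bloom", "full bloom", "first bloom", "first bloom"]
reported = ["first bloom", "first bloom", "full bloom", "first bloom", "full bloom"]
worst = top_confusions(expert, reported, k=1)
```

The top-ranked pairs identify which side-by-side comparisons the refresher modules should emphasize.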

Q2: Our sensor data (e.g., from citizen-provided air quality monitors) shows high variance compared to reference stations. How do we determine whether it's a calibration or hardware issue?

A: Follow this diagnostic protocol.

- Co-location Test: Deploy a subset of 5-10 citizen sensors at a single, controlled location with a reference instrument for 72 hours.

- Data Analysis: For each sensor, calculate the mean bias (sensor reading minus reference reading) and the standard deviation of these differences.

- If all sensors show a similar, consistent bias → Issue is likely in the initial calibration protocol or data processing algorithm.

- If variance is high and biases are random → Issue is likely hardware-specific (e.g., sensor degradation, manufacturing variability).

- Action: For calibration issues, develop a universal offset correction. For hardware issues, establish a screening protocol to flag outliers.
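The co-location analysis can be sketched as follows, assuming sensor and reference readings are already time-aligned. How similar the biases must be to count as "consistent" is a project decision; here it is a hypothetical tolerance on the standard deviation of per-sensor mean biases, and the readings are illustrative.

```python
import statistics

def colocation_diagnostics(sensor_series, reference):
    """sensor_series: {sensor_id: [readings]} aligned with `reference`.
    Returns {sensor_id: (mean_bias, sd_of_error)}, error = sensor - reference."""
    diagnostics = {}
    for sensor_id, values in sensor_series.items():
        errors = [v - r for v, r in zip(values, reference)]
        diagnostics[sensor_id] = (statistics.mean(errors), statistics.stdev(errors))
    return diagnostics

def classify_issue(diagnostics, bias_spread_tol):
    """Similar biases across sensors suggest a calibration issue (return the
    shared offset); widely differing biases suggest a hardware issue."""
    biases = [bias for bias, _ in diagnostics.values()]
    label = "calibration" if statistics.stdev(biases) <= bias_spread_tol else "hardware"
    return label, statistics.mean(biases)

reference = [10.0, 12.0, 14.0, 16.0]
shared_offset = {"s1": [12, 14, 16, 18], "s2": [12.5, 14.5, 16.5, 18.5], "s3": [11.5, 13.5, 15.5, 17.5]}
scattered = {"s1": [12, 14, 16, 18], "s2": [7, 9, 11, 13], "s3": [16, 18, 20, 22]}
issue, offset = classify_issue(colocation_diagnostics(shared_offset, reference), bias_spread_tol=1.0)
```

For a calibration issue, the returned mean bias is a candidate universal offset to subtract from future readings.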

Q3: How can we quantitatively measure the reduction in uncertainty achieved by our participant training program?

A: Conduct a pre- and post-training assessment using a controlled image set.

- Protocol: Administer a test of 50 pre-validated images to participants before (T0) and after (T1) training. Include a follow-up test (T2) 4 weeks later to assess retention.

- Metrics: Calculate per-participant classification performance (F1-score) and compare group-level uncertainty, expressed as the standard deviation of error rates across participants.

- Quantify Improvement: The reduction in group-level standard deviation from T0 to T1 quantifies the decrease in measurement uncertainty. Attributing it to training assumes that retaking the test does not by itself improve scores, which an untrained control group can check.
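The group-level uncertainty metric can be sketched with error rates against the answer key of the pre-validated images (F1 is omitted for brevity). Participant IDs, labels, and the function names are hypothetical.

```python
import statistics

def error_rate(answers, key):
    """Fraction of test images a participant labeled differently from the key."""
    return sum(a != k for a, k in zip(answers, key)) / len(key)

def group_uncertainty(responses_by_participant, key):
    """Between-participant standard deviation of error rates for one session."""
    rates = [error_rate(answers, key) for answers in responses_by_participant.values()]
    return statistics.stdev(rates)

key = ["a", "b", "c", "d"]
t0 = {"p1": ["a", "b", "c", "d"], "p2": ["x", "x", "c", "d"], "p3": ["x", "x", "x", "x"]}
t1 = {"p1": ["a", "b", "c", "d"], "p2": ["x", "b", "c", "d"], "p3": ["x", "b", "c", "d"]}
reduction = group_uncertainty(t0, key) - group_uncertainty(t1, key)  # positive = less spread
```

The same function applied to the T2 session shows whether the reduction is retained after four weeks.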

Table 1: Impact of Structured Feedback on Data Quality in a Citizen Science Bird Count Project

| Metric | Before Feedback Loop (Baseline) | After 1 Feedback Cycle | After 2 Feedback Cycles |
|---|---|---|---|
| Average Participant Accuracy (F1-Score) | 0.65 ± 0.18 | 0.78 ± 0.12 | 0.82 ± 0.09 |